Ten years ago, persuasive design was a relatively new frontier in the field of UX. In a 2015 Smashing article, I was among those who showed practitioners a way to move from focusing primarily on improving usability and removing friction to also guiding users toward a desired outcome. The premise was simple: by leveraging psychology, we could influence user behavior and drive outcomes like higher sign-ups, faster and richer onboarding, and stronger retention and engagement.

A decade later, that promise has proven true — but not in the same way many of us expected. Most product teams still face familiar problems: high bounce rates, weak activation, and users dropping off before experiencing core value. Usability improvements help, but they don’t always address the behavioral gap that sits underneath these patterns.

Persuasive design didn’t disappear — it matured.

Today, the more useful version of this work is often called behavioral design: a way to align product experiences with the real drivers of human behavior, with an ethical mindset. Done well, it can improve conversion, onboarding completion, engagement, and long-term use without slipping into manipulation.

Here’s what I’ll cover:

- What has held up from the last decade of persuasive design;

- What didn’t hold up, especially the limits of pattern-first gamification;

- What changed in how we model behavior, from triggers to context and systems;

- How to use modern behavioral frameworks to improve both discovery and ideation;

- A practical way to run this work as a team, using a five-exercise workshop sequence you can adapt to your product.

The goal is not to add more tactics to your toolkit. It’s to help you build a repeatable, shared approach to diagnosing behavioral barriers and designing solutions that support both users’ goals and business outcomes.

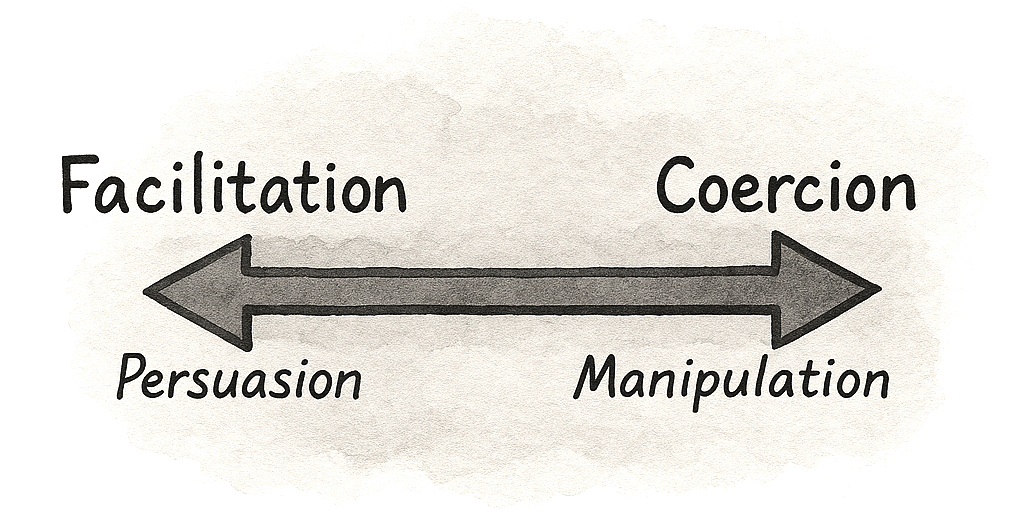

Is Persuasion The Same As Deception?

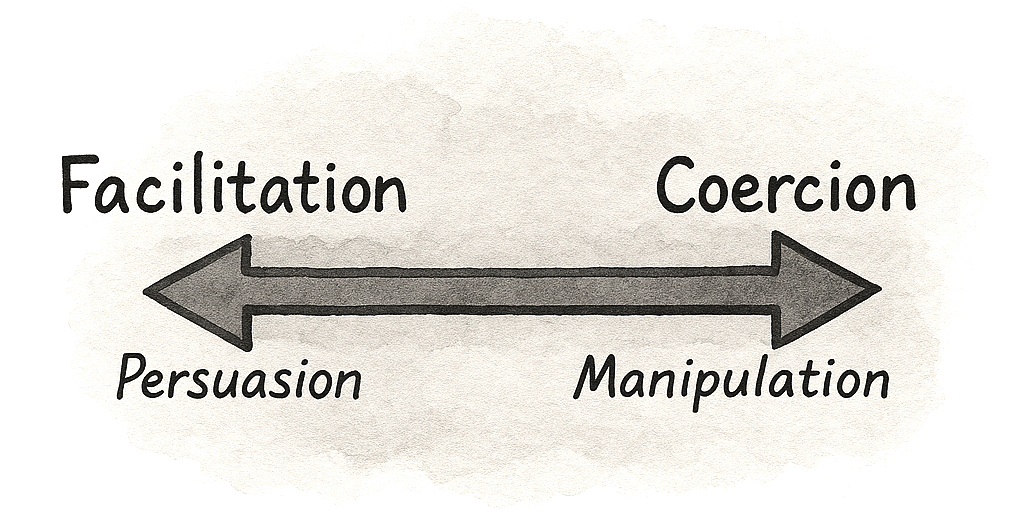

Behavioral Design is not about slapping deceptive patterns or superficial “growth hacks” onto your UI. It’s about understanding what truly enables or hinders your users on their way to achieving their goal and then designing experiences that guide them to success.

Behavioral design is more about bridging the gap between what users want (achieving their goals, feeling value) and what businesses need (activation, retention, revenue), creating win-win outcomes where good UX and good business results align.

But as with all powerful tools, these can be used for good or for bad. The difference lies in the intention of the designer. Some designers argue against promoting behavioral or persuasive design, while others argue that we need to understand the tools to learn how to use them well, and to see how easily, and often mindlessly, we can fall into the trap of adopting an unethical lens.

If we are not enlightened, then how can we judge what represents good and bad practice? If we do not understand how psychology works, then we lack the awareness needed to spot our biases. If we don’t understand these tools, we can’t spot when they’re misused.

The difference between persuasion and deception is intention, plus accountability.

A Decade Later, What Have We Learned?

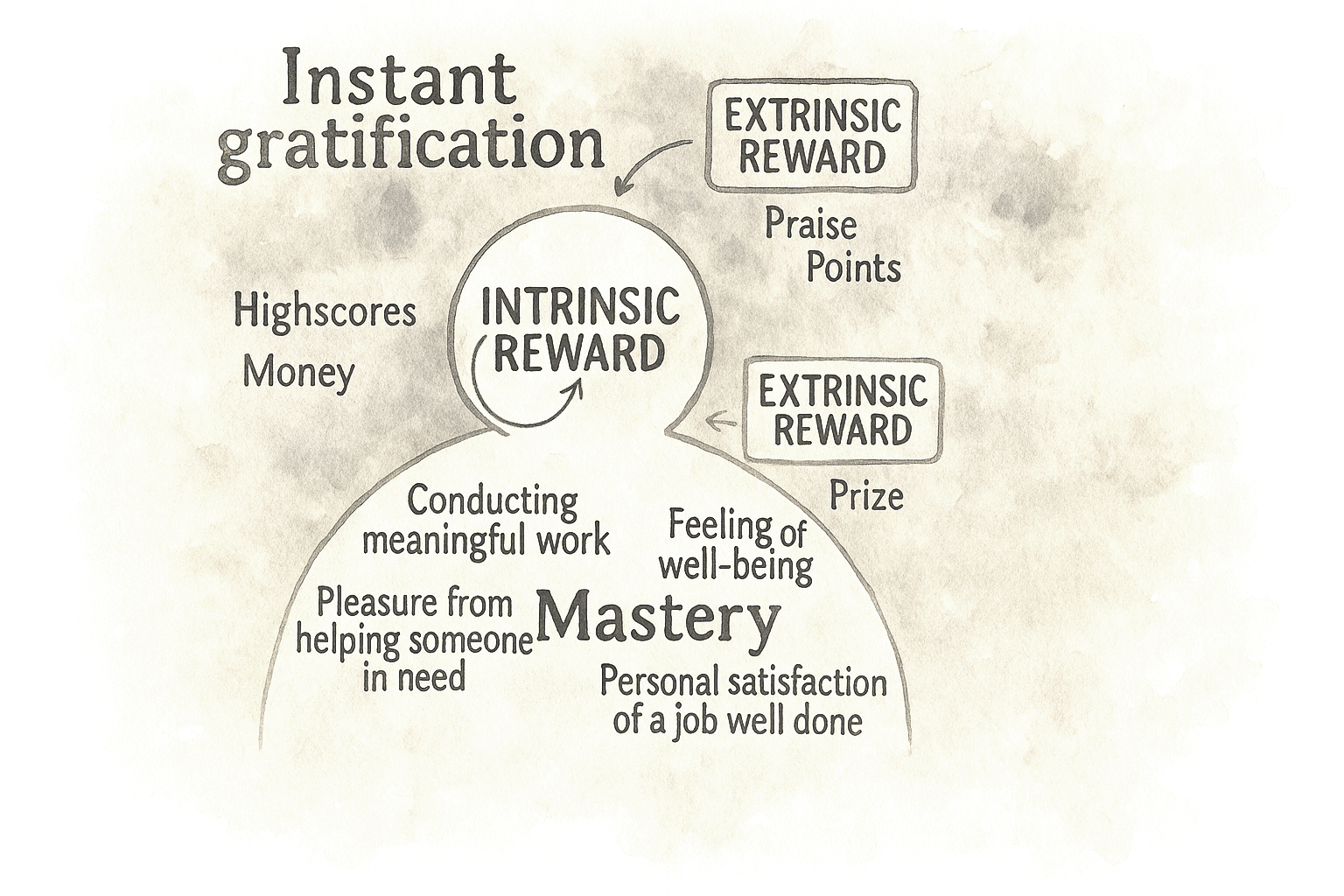

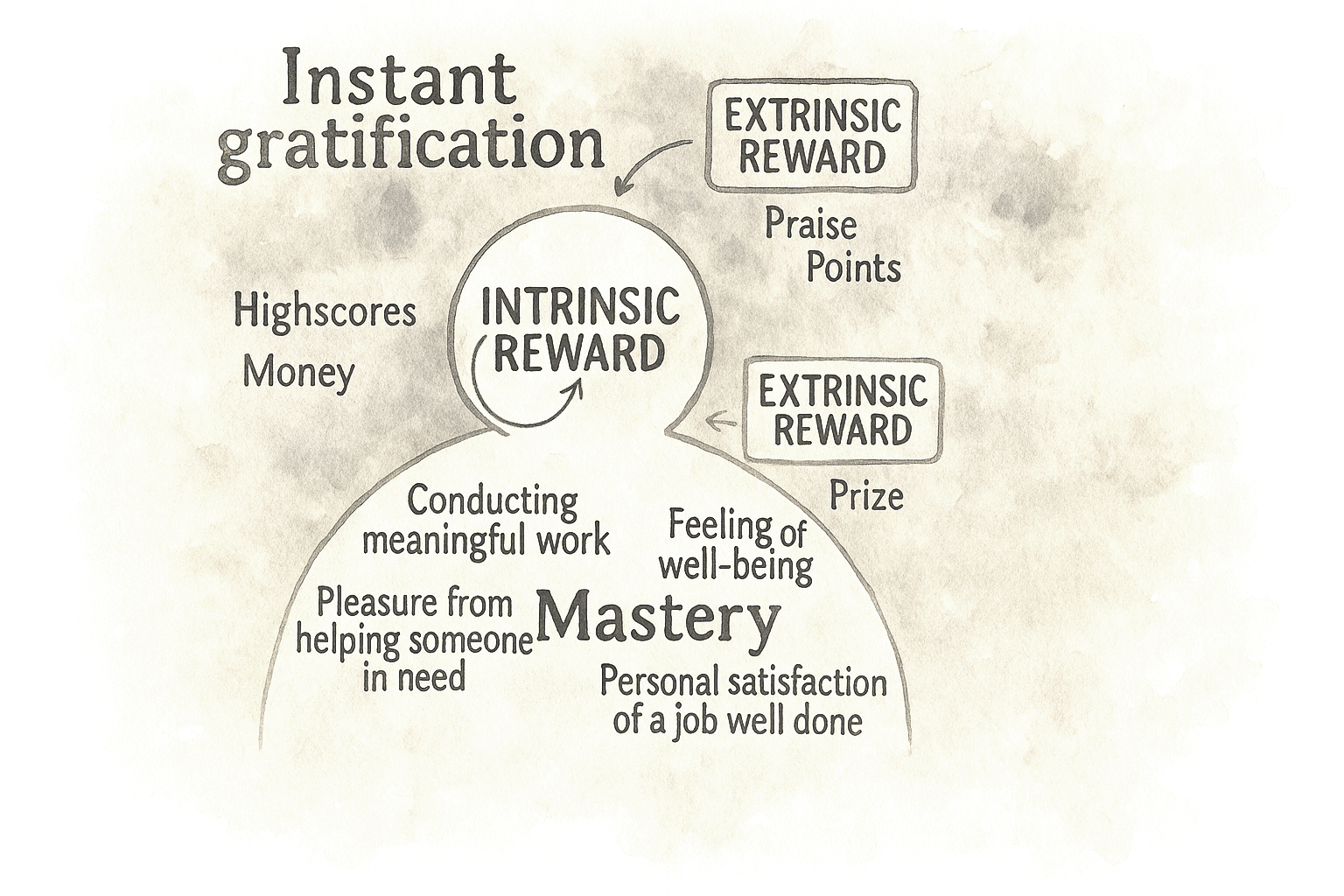

In the early 2010s, many teams treated persuasive design as almost synonymous with gamification. If you added points, badges, and leaderboards, you were doing psychology. And to be fair, those surface mechanics did work in some cases, at least in the short term. They could nudge people through onboarding flows or encourage a few extra logins. But over the decade, their limits became clear. Once the novelty wore off, many of these systems felt shallow. Users learned to ignore streaks that did not connect to anything meaningful or dropped out when they realized the game layer was not helping them reach a real goal.

This is where self-determination theory has quietly reshaped how serious teams think about motivation. It distinguishes between extrinsic motivators, such as rewards, points, and status, and intrinsic drivers like autonomy, competence, and relatedness. Put simply, if your “gamification” fights against what people actually care about, it will eventually fail. The interventions that have survived are the ones that support intrinsic needs. A language learning streak that makes you feel more capable and shows progress can work because it makes the core activity feel more meaningful and manageable. A badge that only exists to move a dashboard number, on the other hand, quickly becomes noise.

Lesson 1: From Quick Fixes To Behavioral Strategy

One key lesson from the past decade is that behavioral design creates the most value when it moves beyond isolated fixes and becomes a deliberate strategy. Many product teams start with a narrow goal: improve a sign-up rate, reduce drop-off, or boost early retention. When standard UX optimizations plateau, they turn to psychology for a quick lift, often with success.

The biggest opportunity is not one more uplift on a stubborn metric, but having a systematic way to understand and shape behavior across the product.

Behavioral design isn’t about hacks.

It’s about helping people succeed.

Common signals are easy to recognize: people sign up but never finish onboarding; they click around once and never return; key features sit unused. A behavioral strategy doesn’t just ask “What can we change on this screen?” It asks what is happening in the user’s mind and context at those moments.

That might lead you to design an onboarding experience that uses curiosity and the goal-gradient effect to guide people to a clear first win, instead of hoping they read a help doc. Or it might lead you to design for exploration and commitment over time: social proof where it actually matters, appropriate challenges that stretch but don’t overwhelm, progressive disclosure so advanced features show up when people are ready, and the right triggers at the most opportune moment instead of random nags.

Great products aren’t just easy to use.

They’re easier to commit to.

Product psychology has shifted from scattered hypotheses to a growing library of repeatable patterns. Those patterns only shine when they sit inside a coherent behavioral model: what users are trying to achieve, what blocks them, and which levers the team will pull at each stage.

Simple nudges, inspired by Thaler and Sunstein, have helped popularize behavioral thinking in design. But we’ve also learned that nudges alone rarely solve deeper behavioral challenges. A behavioral strategy goes further: it blends tactics, grounds them in real motivations, and ties experiments to a clear theory of change. The goal is not a one-off win on today’s dashboard, but a way of working that compounds over time.

Lesson 2: Game Mechanics Alone Are Not Enough

Game mechanics alone are no longer a credible behavioral strategy. Ten years ago, adding points, badges, and leaderboards was almost shorthand for “we’re doing psychology.” Today, most teams have learned the hard way that this is decoration unless it serves a real need.

A behavioral approach starts with a blunt question: What is the game layer in service of, and for whom? Does it help people make progress that matters to them, or does it just keep a dashboard happy? If it ignores intrinsic motivation, it will look clever in a slide deck and brittle in production.

In practice, that means points and streaks are not treated as automatic upgrades anymore. Teams ask whether a mechanic helps users feel more competent, more in control, or more connected to others. A streak only makes sense if it reflects real progress in a skill the user cares about. A leaderboard only adds value if people actually want to compare themselves and if the ranking helps them decide what to do next. If it does not pass those tests, it is clutter, not a motivational engine.

Streaks and badges only work when they support something users truly value.

The most effective products now start with the intrinsic side. They are clear about what the product helps users become or achieve, and only then ask whether a game mechanic can amplify that journey. When game elements are added, they live in the core loop rather than on top of it. They show mastery, mark meaningful milestones, and reinforce self-driven goals. That is the difference between treating gamification as a paint job and using it to support users on a path they already care about.

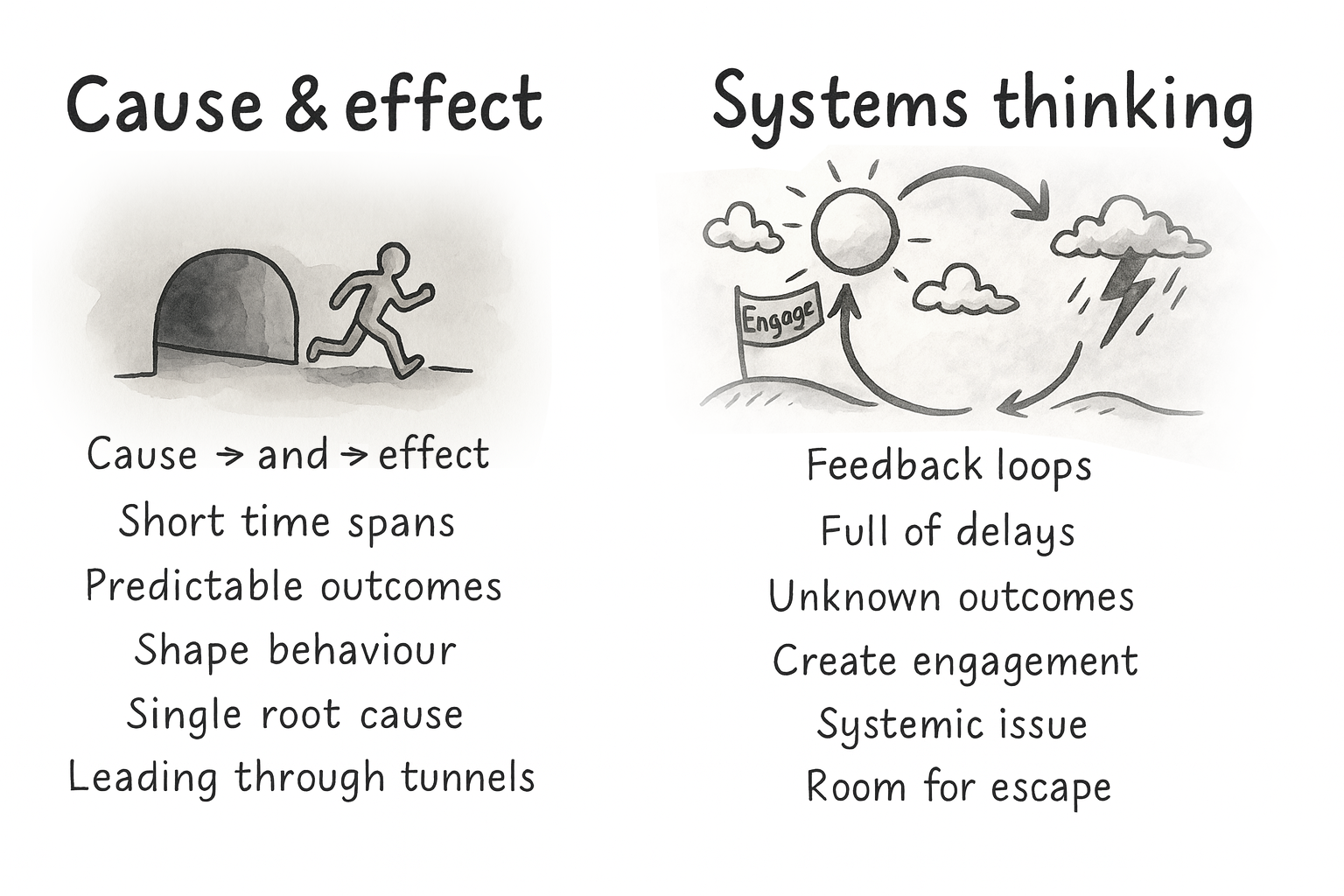

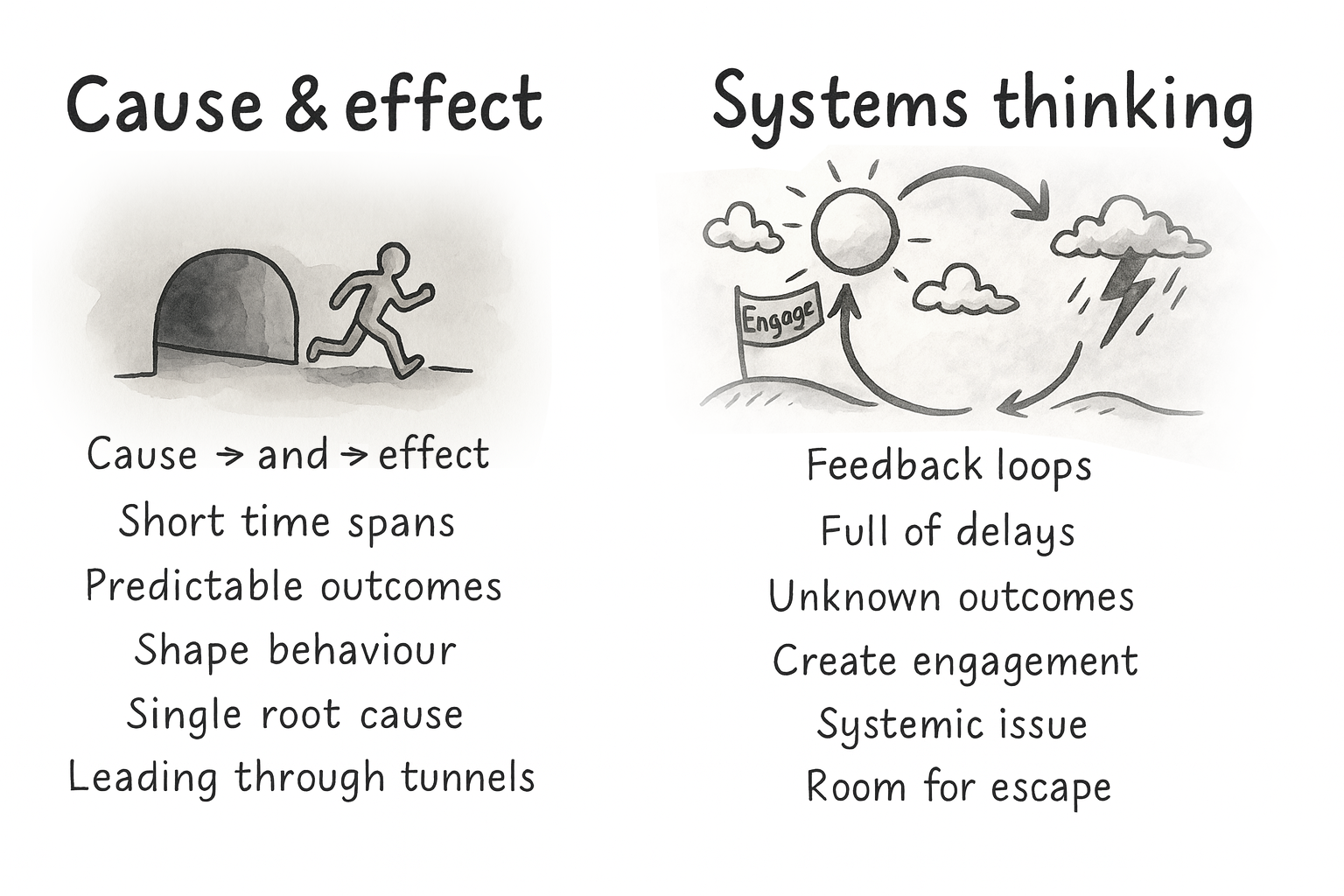

Lesson 3: From Cause And Effect To Holistic Systems Thinking

Early persuasive design often assumed a simple logic: find the broken step, add the right lever, and users move forward. Nice on a slide, rarely true in reality.

People don’t act for a single reason. They have context, history, competing goals, mood, time pressure, trust issues, and different definitions of success. Two users can take the same step for completely different reasons. The same user can behave differently on a different day.

That’s why systems thinking matters. Behavior is shaped by feedback loops and delays, not just one trigger. Outcomes we care about, such as trust, competence, and habit, are built over time. A change that boosts this week’s conversion can still weaken next month’s retention.

If you have ever shipped a “conversion win” and then watched support tickets, refunds, or churn go up, you have felt this. The local metric improved. The system got worse.

Your design structures either enable people or box them in. Defaults, navigation, feedback, pacing, rewards — each of these decisions reshapes the system and therefore the journeys people take through it.

So the job is not to perfect a single funnel. It is to build an environment where multiple valid paths can succeed, and where the system supports long-term goals, not just short-term clicks.

The job isn’t to perfect one funnel, but to support multiple valid paths.

A mature behavioral strategy is explicit about that. It designs for several paths instead of one “happy flow,” supports autonomy instead of forcing compliance, and looks at downstream effects instead of only first-step conversion.

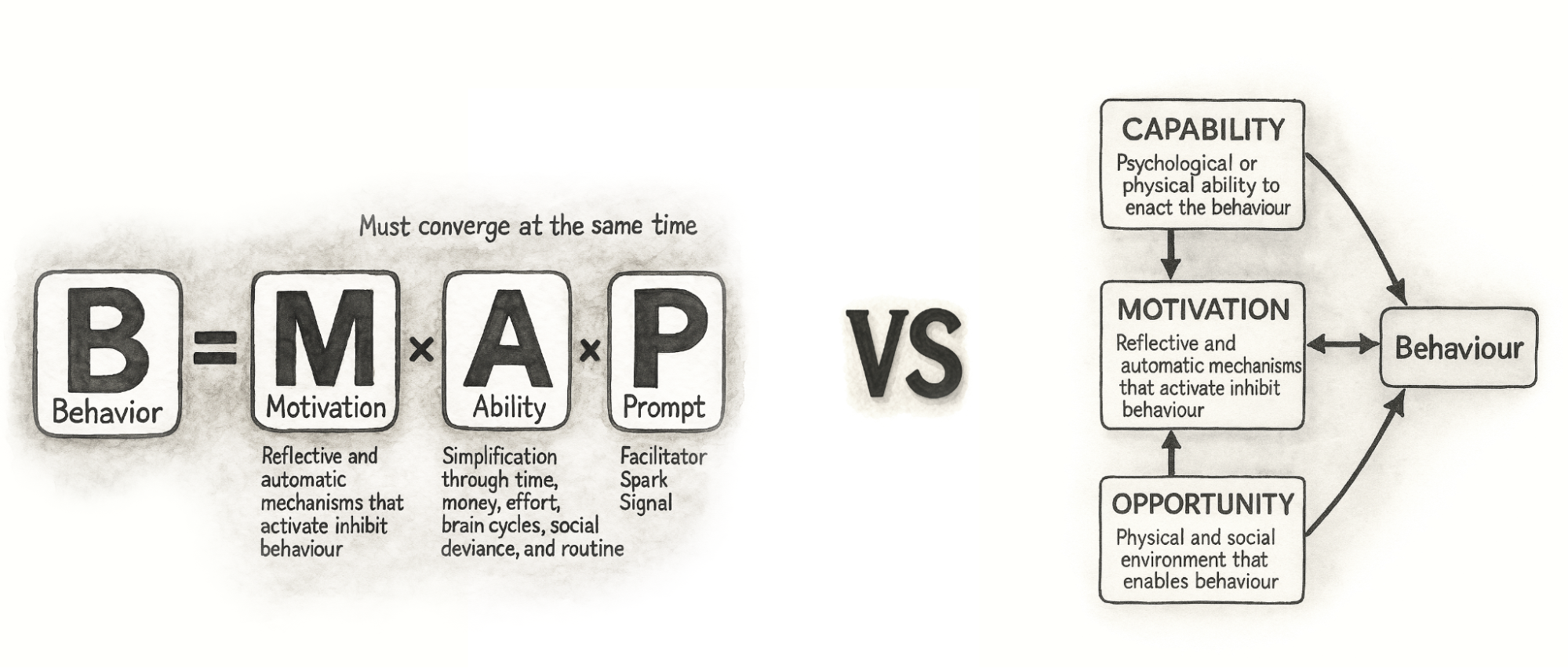

Lesson 4: From Triggers To Context

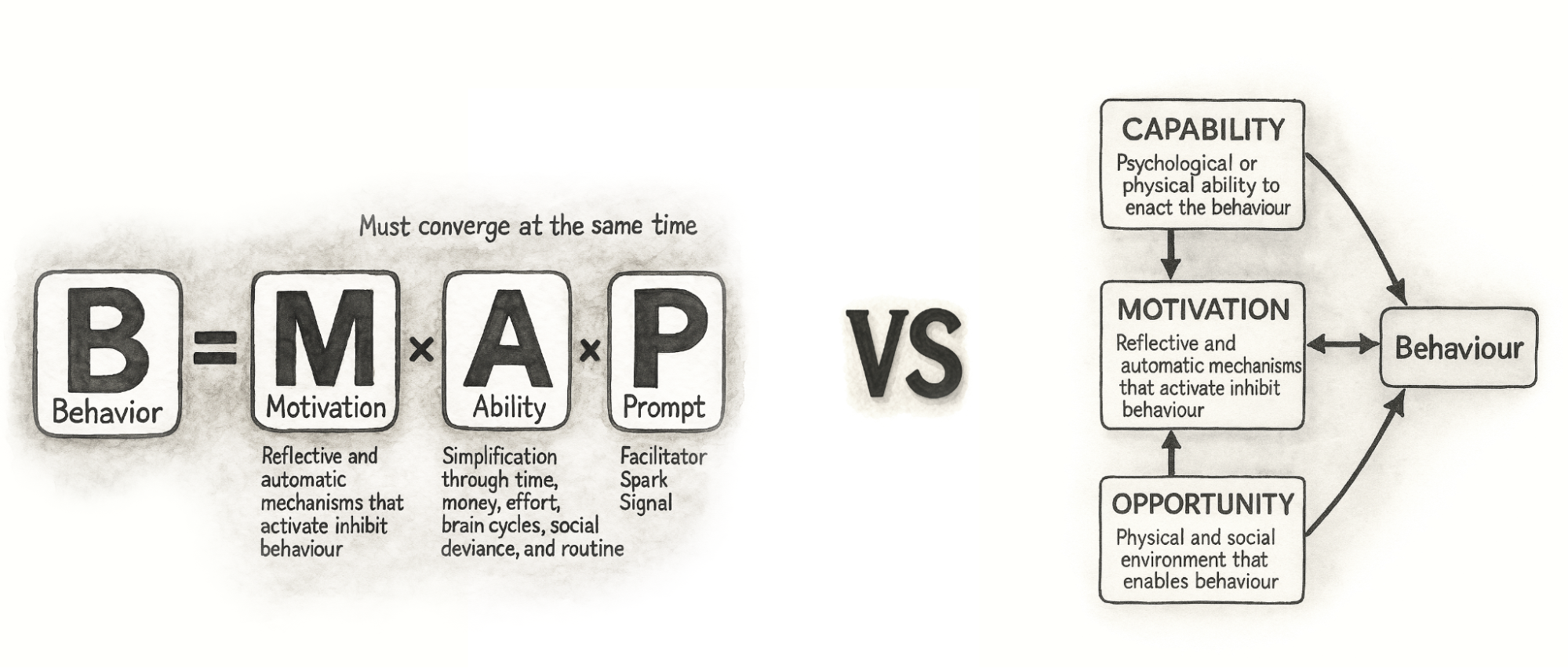

The same shift has happened in the frameworks we use. A decade ago, the Fogg Behavior Model (FBM) was everywhere. It gave teams a simple trio: motivation, ability, trigger — and a clear message: shouting louder with prompts does not fix low motivation or poor ability. That alone was a useful upgrade.

Fogg’s own work has moved on, too. With Tiny Habits, the focus leans more on identity, emotion, and making behaviors feel easy and personally meaningful. That mirrors a broader shift in the field: away from “fire more prompts” and toward designing environments where the right behavior feels natural.

Teams eventually ran into the same wall: prompts do not fix low capability or missing opportunity. You cannot nag people into skills they do not have or into contexts that do not exist. That is why many teams that work deeply with behavior change have gravitated toward COM-B as a more complete foundation.

COM-B breaks behavior into capability, opportunity, and motivation. It starts with a blunt check: can people actually do this, and does their environment let them? That maps well to modern products, where behavior happens across devices, channels, and moments, not on a single screen. It also plugs into broader behavior change work in health and public policy, so we do not have to reinvent everything inside UX.

Thinking this way nudges teams away from simple cause-and-effect stories. A drop in completion rate is no longer “the button is bad” or “we need more reminders,” but a question about how skills, context, and motivation interact. A capability issue might need a better interface and better education. An opportunity issue might be about device access, timing, or social surroundings, not layout. Motivation might be shaped as much by pricing and brand trust as by any in-product message.

Modern behavioral design is less about activating clicks and more about shaping conditions where action feels easy and meaningful.

This broader lens also makes cross-functional work simpler. Product, design, marketing, and data can share one behavior model and still see their own responsibilities in it. Designers shape perceived capability and opportunity in the interface, marketing shapes motivational framing and triggers, and operations shape the structural opportunity in the service. Instead of everyone pushing their own levers in isolation, COM-B helps teams see that they are working on different parts of the same system.

Lesson 5: Psychology Can Also Be Used To Design And Decode Discovery

COM-B is often used as a bridge between discovery and ideation. On the discovery side, it gives structure to research. You can use it to design interview guides, read analytics, and make sense of observational studies. It was built to diagnose what needs to change for a behavior to shift, which maps neatly onto early product discovery.

Good discovery doesn’t just ask what users say, but examines what their behavior reveals.

Instead of asking “Why did you stop using the product?” and writing down the first answer, you deliberately walk through capability, opportunity, and motivation. You ask things like:

- Can users actually do this, given their skills and knowledge?

- Does their context help or hinder them in practice?

- How strong is their motivation compared with other demands on their time and money?

You walk through recent experiences in detail: which device they used, what time of day it was, who else was around, and what else they were juggling. You talk about how important this behavior is compared with everything else in their life and what trade-offs they make. To participants, these questions feel natural. Under the hood, you are systematically covering all three parts of COM-B, in line with how behavior change practitioners use the model in qualitative work.

You can look at behavioral data in the same way. Funnel drop-offs, time on task, and click patterns are clues: are people stuck because they cannot progress, because the environment gets in the way, or because they do not care enough to continue? Modern analytics tools make it easier to watch what people actually do rather than only what they report, and combining quantitative and qualitative data gives you a fuller picture than either alone.

When there is a gap between what people say and what they do, you treat it as a signal rather than an irritation. Someone might say that saving for retirement is very important, but never set up a recurring transfer. A user might claim that onboarding was simple, while their session shows repeated back and forth between steps. Those mismatches are often where biases, habits, and emotional barriers live. By labelling them in terms of capability, opportunity, and motivation, and linking them to specific barriers like risk aversion, analysis paralysis, status quo bias or present bias, you move from vague “insights” to a structured map of what is actually in the way.

The gap between what people say and what they do is not noise — it’s the map.

The output of this kind of discovery is not just personas and journeys. You also get a clear statement of the current behavior, the target behavior, and the behavioral barriers and enablers that sit between them.

Lesson 6: Use Behavioral Discovery In Your Ideation

The bridge from discovery to ideation can be a single sentence template:

From current behavior to target behavior, by doing X, because of barrier Y.

This “from–to–by–why” framing forces teams to say what they actually believe. You are not just saying “add a checklist.” You are saying: “We believe a checklist will help new users feel more capable, which will increase the chance they complete setup in their first session.” Now it is a behavioral hypothesis you can test with experiments, not just a design idea you hope for.
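One way to keep such hypotheses consistent across a team is to capture the template as a small data structure. The sketch below is only an illustration: the class and field names are hypothetical, and the "current behavior" wording is an assumed example, since the checklist example above only states the intervention, the barrier, and the target.

```python
from dataclasses import dataclass
from enum import Enum


class ComB(Enum):
    """The three COM-B components a barrier can be labelled with."""
    CAPABILITY = "capability"
    OPPORTUNITY = "opportunity"
    MOTIVATION = "motivation"


@dataclass(frozen=True)
class BehavioralHypothesis:
    """Hypothetical container for one from-to-by-why statement."""
    current_behavior: str   # "from"
    target_behavior: str    # "to"
    intervention: str       # "by doing X"
    barrier: str            # "because of barrier Y"
    component: ComB         # which part of COM-B the barrier belongs to

    def statement(self) -> str:
        # Render the single-sentence template from the article.
        return (
            f"From {self.current_behavior} to {self.target_behavior}, "
            f"by {self.intervention}, because of {self.barrier}."
        )


# Assumed example data, loosely based on the checklist hypothesis above.
hypothesis = BehavioralHypothesis(
    current_behavior="new users abandoning setup partway through",
    target_behavior="new users completing setup in their first session",
    intervention="adding a setup checklist",
    barrier="low perceived capability",
    component=ComB.CAPABILITY,
)

print(hypothesis.statement())
```

Forcing every idea through the same four fields makes it obvious when the barrier is missing, which is usually the sign that an idea is still a guess rather than a hypothesis.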

From there, you can generate several variants that express the same principle in different ways and design experiments around them. You might try a few messages that all lean on loss aversion, or several ways of simplifying a high-friction step, or different forms of social proof that vary in tone and proximity.

The important shift is that you are no longer throwing ideas at the wall. You are deliberately targeting the capability, opportunity, or motivation issues that discovery surfaced, and testing which levers actually work in your context.

Every idea should answer one question: which barrier are we trying to change?

Over time, this loop between behavioral discovery and ideation turns into a local playbook. You learn that in your product, some principles reliably help your users and others fall flat. You also learn that patterns from glowing case studies do not automatically transfer. Even gamification and behavior change research often emphasize context-specific, user-centred implementations rather than generic recipes.

This dual use of psychology in discovery and ideation is one of the bigger shifts of the past decade. A product trio can look at a stubborn drop-off point and ask, together, “Is this a capability, opportunity, or motivation issue?” Then they generate ideas that target that part of the system instead of guessing. That shared language makes behavioral design less of a specialist add-on and more of a normal way for cross-functional teams to reason about their work.

A Decade Later: What Has Proven To Work In Practice

If the first decade of persuasive design taught us anything, it is that behavioral insight is cheap until a team can act on it together.

Methods matter.

Over time, a small set of workshop formats has consistently helped product teams uncover behavioral barriers, align on opportunities, and generate solutions grounded in real psychology instead of surface patterns. As behavioral design has grown from tactical nudges into a strategic discipline, an obvious question keeps coming up: How do teams actually do this work together in practice?

How do product managers, designers, researchers, and engineers move from scattered observations (“people seem confused here”) to a shared behavioral diagnosis, and then to targeted ideas that reflect the real drivers of capability, opportunity, and motivation?

One effective way to make this concrete is through a workshop format. The aim is to help teams:

- Interpret research through a behavioral lens,

- Surface capability, opportunity, and motivation gaps,

- Prioritize high-potential opportunities, and

- Generate ideas that are both psychologically sound and ethically considered.

Real product work is messy and full of feedback loops; nobody follows a perfect step-by-step checklist. But for learning, and especially for introducing behavioral design into a team for the first time, a structured sequence of exercises gives people a mental model. It shows the journey from early discovery to behavioral clarity, from opportunities to ideas, and finally to interventions that have been stress-tested through an ethical lens.

The exercises below are one such recipe. The order is intentional: each step builds on the previous one to move from empathy and insight to prioritized opportunities, concrete concepts, and responsible solutions. No team will follow it letter-perfect every time, but it reflects how behavioral design work tends to unfold when it goes well.

Before diving into the details, here is the full recipe and how each exercise contributes to the bigger behavioral design process:

- Behavioral Empathy Mapping

Builds a shared understanding of the user’s psychological landscape: emotions, habits, misconceptions, and sources of friction.

- Behavioral Journey Mapping

Maps the user’s flow over time, and overlays behavioral enablers and obstacles.

- Behavior Scoring

Prioritizes which behavioral opportunities to tackle first based on impact, feasibility, and evidence.

- Ideas First, Patterns Later

Encourages context-first ideation, then uses persuasive patterns to refine and strengthen promising concepts.

- Dark Reality

Evaluates ethical risks, unintended consequences, and potential misuse.

A note on timing: In practice, this sequence can be run in different formats depending on constraints. For a compact format, teams often run Exercises 1–3 in a half-day workshop, and Exercises 4–5 in a second half-day session. With more time, the work can be spread across a full week: discovery synthesis early in the week, prioritization mid-week, and ideation plus ethical review toward the end. The structure matters more than the schedule; the goal is to preserve the progression from understanding → prioritization → ideation → reflection.

Below is a brief walkthrough of each exercise as I typically facilitate it in workshops, in tandem with a library of persuasive patterns.

Exercise 1: Behavioral Empathy Mapping

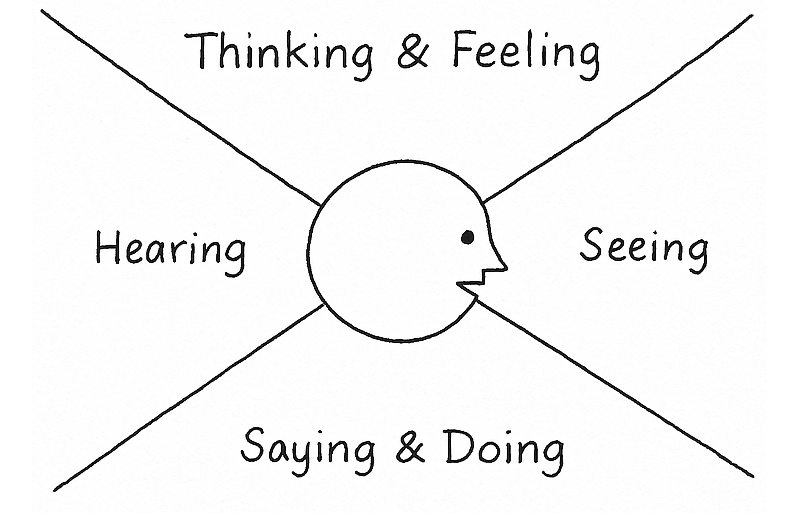

The first step is building a shared, psychologically informed understanding of users. Behavioral Empathy Mapping extends traditional empathy mapping by paying attention to what users attempt, avoid, postpone, misunderstand, or feel uncertain about. These subtle behavioral signals often reveal more than stated needs or pain points.

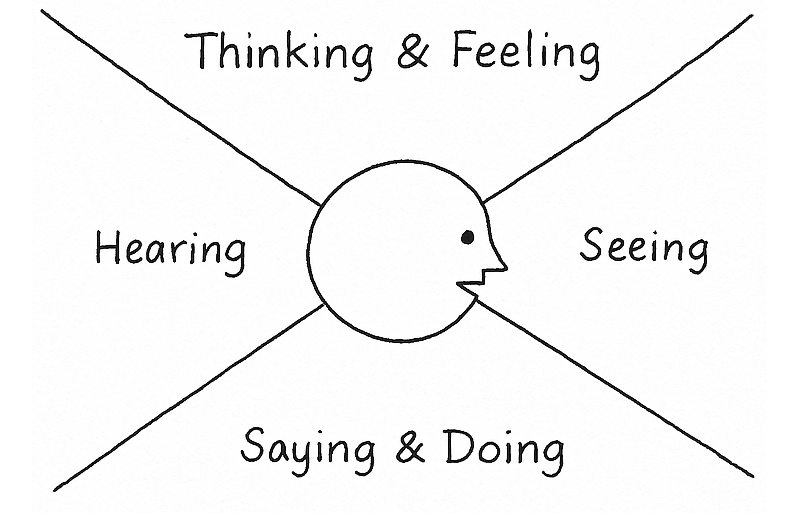

Goal: Understand what drives or blocks the target behavior by capturing what users think, feel, say, and do — and spotting behavioral barriers and enablers.

Steps:

- On a whiteboard or large paper, draw an empathy map: Thinking & Feeling, Seeing, Saying & Doing, and Hearing.

- Add research insights by letting everyone silently add sticky notes from interviews, data, support logs, or observations into the quadrants. One insight per note.

- Identify barriers and enablers.

Cluster notes that make the behavior harder (barriers) or easier (enablers).

Output: A focused map of the psychological and contextual forces shaping the target behavior, ready to feed into Behavioral Journey Mapping.

Exercise 2: Behavioral Journey Mapping

Once you understand the user’s mindset and context, the next step is to map how those forces play out across time. Behavioral Journey Mapping overlays the user’s goals, actions, emotions, and environment onto the product journey, highlighting the specific moments where behavior tends to stall or shift.

Unlike traditional journey maps, the behavioral version focuses on where capability breaks down, where the environment works against the user, and where motivation fades or conflicts arise. These become early signals of where change is both needed and possible.

The output shows the team precisely where the product is asking too much, where users lack support, or where additional motivation or clarity might be required.

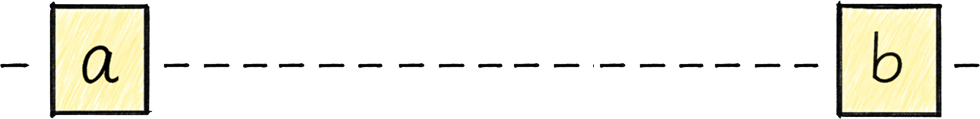

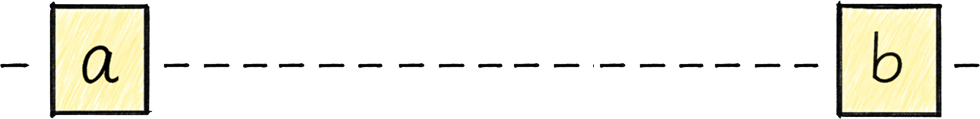

Goal: Map the steps from the user’s starting point to the target behavior, and capture the key enablers and barriers along the way.

Steps:

- Draw a horizontal line from A (starting point) to B (target behavior).

- Have everyone write the steps a user takes from A to B on sticky notes (one per note). Include actions inside and outside the product.

- Place the notes in order along the line. Merge duplicates and align on a shared sequence.

- Extend the vertical axis with two rows:

- Enablers (what could help users move forward),

- Barriers (what could slow or stop users).

- Look for steps with many barriers or few enablers. These are behavioral hot spots.

- Highlight the steps where a good nudge could meaningfully help users complete the journey.

Output: A clear, behavior-focused journey showing where users struggle, why, and which moments offer the most leverage for change.
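If the journey map is later transferred from sticky notes to a spreadsheet or script, the hot-spot step can be sketched roughly as follows. This is a minimal illustration, not part of the workshop itself: the step names, notes, and the two thresholds (`many_barriers`, `few_enablers`) are assumptions, and in a real session the team's discussion decides what counts as "many" or "few."

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class JourneyStep:
    """One step on the line from A (starting point) to B (target behavior)."""
    name: str
    enablers: list[str] = field(default_factory=list)
    barriers: list[str] = field(default_factory=list)


def find_hot_spots(
    steps: list[JourneyStep],
    many_barriers: int = 2,   # assumed threshold for "many barriers"
    few_enablers: int = 0,    # assumed threshold for "few enablers"
) -> list[JourneyStep]:
    """Return steps with many barriers or few enablers, in journey order."""
    return [
        step
        for step in steps
        if len(step.barriers) >= many_barriers
        or len(step.enablers) <= few_enablers
    ]


# Hypothetical journey for illustration only.
journey = [
    JourneyStep("Sign up", enablers=["Single sign-on"]),
    JourneyStep(
        "Connect data source",
        barriers=["Needs admin rights", "Unclear which source to pick"],
    ),
    JourneyStep(
        "Invite teammate",
        enablers=["Pre-filled invite link"],
        barriers=["Unsure who to invite"],
    ),
    JourneyStep("Complete checklist", enablers=["Visible progress"]),
]

hot_spots = find_hot_spots(journey)
for step in hot_spots:
    print(f"{step.name}: {len(step.barriers)} barriers, {len(step.enablers)} enablers")
```

Counting notes is a crude proxy. A single severe barrier can matter more than three minor ones, so treat the result as a prompt for discussion rather than a verdict.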

Exercise 3: Behavior Scoring

With a clearer picture of the user journey and of which moments could benefit from a behaviorally helpful hand, you are now ready to identify the behavior that makes the most sense to try to influence.

Goal: Decide which potential target behaviors are worth focusing on first, based on impact, ease of change, and ease of measurement.

Steps:

- List potential target behaviors. Based on the output of the Behavioral Journey Mapping, list behaviors that could potentially be targeted. One behavior per sticky note. Be as concrete as possible (what users do, where, and when).

- Create a table with the following columns:

- Impact of behavior change (how much it could move the goal),

- Ease of change (how realistic it is to influence),

- Ease of measurement (how straightforward it is to track).

| Potential target behaviors | Impact of behavior change | Ease of change | Ease of measurement | Total |
| --- | --- | --- | --- | --- |
| … | | | | |
| … | | | | |
| … | | | | |

- Enter each listed behavior into the table and score them from 0 to 10 in each column.

- Sort behaviors by total score and discuss the highest-scoring ones:

- Do they make sense given what you know about users and constraints?

- Select the primary target behaviors you want to carry into the next exercises.

Optionally, note “bonus behaviors” that might follow as a side effect.

Output: A small set of prioritized target behaviors with a clear rationale for why they matter now, and a list of lower-priority behaviors you may revisit later.

A filled-out Behavior Scoring table could look like this:

| Potential target behaviors | Impact of behavior change | Ease of change | Ease of measurement | Total |
| --- | --- | --- | --- | --- |
| User completes onboarding checklist in first session. | 8 | 6 | 9 | 23 |
| User invites at least one teammate within 7 days. | 9 | 4 | 8 | 21 |
| User watches the full product tour video. | 4 | 7 | 6 | 17 |
| User reads help documentation during onboarding. | 3 | 5 | 4 | 12 |

In this case, the checklist completion emerges as the strongest initial focus: it has high impact, is realistically influenceable through design changes, and can be measured reliably. Inviting a teammate may be strategically important, but it may require broader changes beyond interface design, making it a secondary focus.
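The scoring arithmetic is simple enough to do on a whiteboard, but teams that keep the table in a script or notebook could sketch it like this. The class and function names are hypothetical, and the unweighted sum is an assumption drawn from the example table, where each total equals the sum of the three columns; the scores are the ones from that table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BehaviorScore:
    """One row of the Behavior Scoring table, each column scored 0 to 10."""
    behavior: str
    impact: int
    ease_of_change: int
    ease_of_measurement: int

    def __post_init__(self) -> None:
        # Guard against scores outside the 0 to 10 range used in the exercise.
        for name in ("impact", "ease_of_change", "ease_of_measurement"):
            value = getattr(self, name)
            if not 0 <= value <= 10:
                raise ValueError(f"{name} must be between 0 and 10, got {value}")

    @property
    def total(self) -> int:
        # Unweighted sum of the three columns (assumed from the example table).
        return self.impact + self.ease_of_change + self.ease_of_measurement


def rank_behaviors(scores: list[BehaviorScore]) -> list[BehaviorScore]:
    """Sort behaviors by total score, highest first."""
    return sorted(scores, key=lambda s: s.total, reverse=True)


candidates = [
    BehaviorScore("User watches the full product tour video.", 4, 7, 6),
    BehaviorScore("User completes onboarding checklist in first session.", 8, 6, 9),
    BehaviorScore("User reads help documentation during onboarding.", 3, 5, 4),
    BehaviorScore("User invites at least one teammate within 7 days.", 9, 4, 8),
]

ranked = rank_behaviors(candidates)
for row in ranked:
    print(f"{row.total:>2}  {row.behavior}")
```

The sorted list is only the starting point for the discussion in the steps above. A team may still choose a lower-scoring behavior if it fits their constraints better.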

Exercise 4: Ideas First, Patterns Later

Once the team has agreed on which behavior matters most, the next risk is jumping too quickly to familiar psychological tricks. One of the clearest lessons has been that starting with “the pattern” often leads to generic solutions that feel clever but fail in context.

This exercise deliberately separates idea generation from psychological framing.

Goal: Generate solutions grounded in user context first, then use psychological principles to sharpen and strengthen them.

Steps:

- Start by restating the prioritized target behavior and the key barrier identified during journey mapping. Keep this visible throughout the exercise.

- Then give the team a short, focused ideation window (10–15 minutes).

The rule here is simple: no references to behavioral models, cognitive biases, or persuasive patterns yet. Ideas should come directly from the user context, constraints, and moments uncovered earlier.

- Collect ideas on a shared surface and group similar concepts. Look for multiple ways of solving the same underlying problem (cluster them together).

- Only now do you introduce a library of psychological principles and techniques. I developed the persuasive patterns for this exact purpose. The goal of this step is not to replace ideas, but to refine them:

- Which ideas could be strengthened by reducing friction?

- Which might benefit from clearer feedback, social signals, or better timing?

- Are there alternative ways to achieve the same effect more respectfully or more clearly?

Patterns are used as lenses, not prescriptions. If a pattern does not improve clarity, agency, or usefulness in this context, it is simply ignored.

Output: A refined set of solution concepts that are grounded in real user context and supported, where appropriate, by behavioral principles rather than driven by them.

This sequencing helps teams avoid “pattern-first design,” where ideas are reverse-engineered to fit a theory instead of addressing real human situations.

Exercise 5: Dark Reality

Before ideas turn into experiments or shipped features, they need one final test. Not for feasibility or metrics, but for ethics.

Over the years, this step has proven critical. Many persuasive solutions only reveal their downside when you imagine them working too well, or being applied in the wrong hands, or used on the wrong day by the wrong person.

Goal: Surface ethical risks, unintended consequences, and potential misuse before implementation.

Steps:

- Take one or two of the strongest ideas from the previous exercise.

- Imagine worst-case scenarios by asking the team to deliberately shift perspective:

- What if a competitor used this against us?

- What if this nudges users when they’re stressed, tired, or vulnerable?

- What happens if this works repeatedly over months, not once?

- Could this create pressure, guilt, or dependence?

- Capture concerns around autonomy, trust, fairness, inclusivity, or long-term well-being.

- For each risk, explore ways to soften or counterbalance the effect:

- Clearer intent or transparency,

- Lower frequency or gentler timing,

- Explicit opt-outs,

- Alternative paths forward.

- Some ideas are reshaped. Some are paused. Some survive intact, but now with greater confidence.

Output: Solutions that have been stress-tested ethically, with known risks acknowledged and mitigated rather than ignored.

Building A Shared Vocabulary For Product Psychology

The teams that get the most out of behavioral design rarely have a single “psychology expert.” Instead, the whole team shares a vocabulary around product psychology and knows how to discuss customer problems in behavioral terms.

A shared vocabulary turns psychology into cross-functional work.

When patterns and principles are shared:

- Product, design, engineering, and marketing can talk about behavior without talking past each other.

- Discovery insights are easier to interpret because common barriers and drivers have names.

- Ideas can be framed as behavioral hypotheses (“we believe this will increase early competence…”) instead of vague guesses.

The Persuasive Patterns collection grew from this need: giving teams a common language and a concrete set of examples to point at. Whether used as a printed deck in a workshop or as long-form references during everyday work, the goal is the same: make product psychology something the whole team can see and discuss.

Persuasive design was often framed as a bag of tricks. Today, the work looks different:

- Game mechanics are used to support intrinsic motivation, not drive vanity engagement.

- Frameworks like COM-B and systems thinking help teams see behavior in context, not as a single trigger.

- Behavioral insight is used to shape discovery and ideation, not just last-minute copy changes.

- Ethics is part of the design brief, not an afterthought.

The next step is not more sophisticated nudges. It is a more systematic practice: simple methods, shared language, and a habit of asking “What is really going on in our users’ lives here?”

If you start by focusing on one behavioral problem, use a couple of the exercises in this article, and give your team a shared set of patterns to reference, you are already practicing persuasive design in the way it has evolved over the last ten years: grounded in evidence, respectful of users, and aimed at outcomes that matter on both sides of the screen.