867 Discord servers. 1,000+ active users. $10–11 every time someone played a one-hour D&D session.

I was the only engineer. There was no revenue. And that number wasn’t going down on its own.

I want to be upfront before we go any further: Scrollbook is no longer running.

I built it because I was always the Dungeon Master. My wife, my son, and I had a standing D&D night, and I wanted to actually play for once instead of running the whole session. So I built an AI dungeon master to take my seat. It worked well enough that I shared it. I did not expect anyone else to care.

They did. 867 servers and 1,000+ users later, I was looking at $10–11 every time someone played a one-hour session with no revenue, no paywall, and no plan for either. (Scrollbook is one of three production projects I break down in my case studies. The other two are live and generating revenue. The contrast is instructive.) I shut it down because the cost of operating it solo, without a monetization model that kept pace with usage, made it unsustainable. By the time I pulled the plug, prompt caching had dropped that same session to $0.50–1.50. The technical solution worked. The business math didn’t.

Both of those things are worth talking about.

This post covers the technical side in detail: what the problem was, what I changed, and the actual production code behind it. The business lesson is at the end. I’d argue it’s the more important one.

The Cost Problem

Every message to Claude sent the entire conversation context from scratch. In a D&D session, that context grows with every exchange between the player and the AI.

Before caching, each API call looked like this:

    [system prompt: ~1,800 lines of D&D rules + Cipher's personality]
    [campaign context: setting, NPCs, quests, locations, active encounter]
    [character context: stats, equipment, spells, conditions, companions]
    [party context: all active players and their characters]
    [message history: every exchange in the session so far]
    [current question: "can I grapple the goblin?"]

The system prompt and campaign context alone sat at 4,000–5,000 tokens, reprocessed at full price on every single message.

A one-hour D&D session averages 15–25 back-and-forth exchanges. Context grows on each call. At Sonnet pricing ($3.00/M input, $15.00/M output): $10–11 per session. Multiply that across hundreds of active servers running concurrent sessions and it stops being a line item. It becomes a ceiling. Every new user makes the situation structurally worse.

The Architecture

Scrollbook runs on six services:

| Service | Role |
|---|---|
| bot/ | Discord bot that receives player commands |
| api/ | REST API for the companion web app |
| shared/services/cipher_service.py | Owns all Anthropic API calls |
| shared/services/ai_usage_tracker.py | Token counting and budget enforcement |
| shared/services/ai_extraction_service.py | PDF/content extraction via Bedrock |
| infrastructure/ | AWS CDK: ECS Fargate, RDS, ALB |

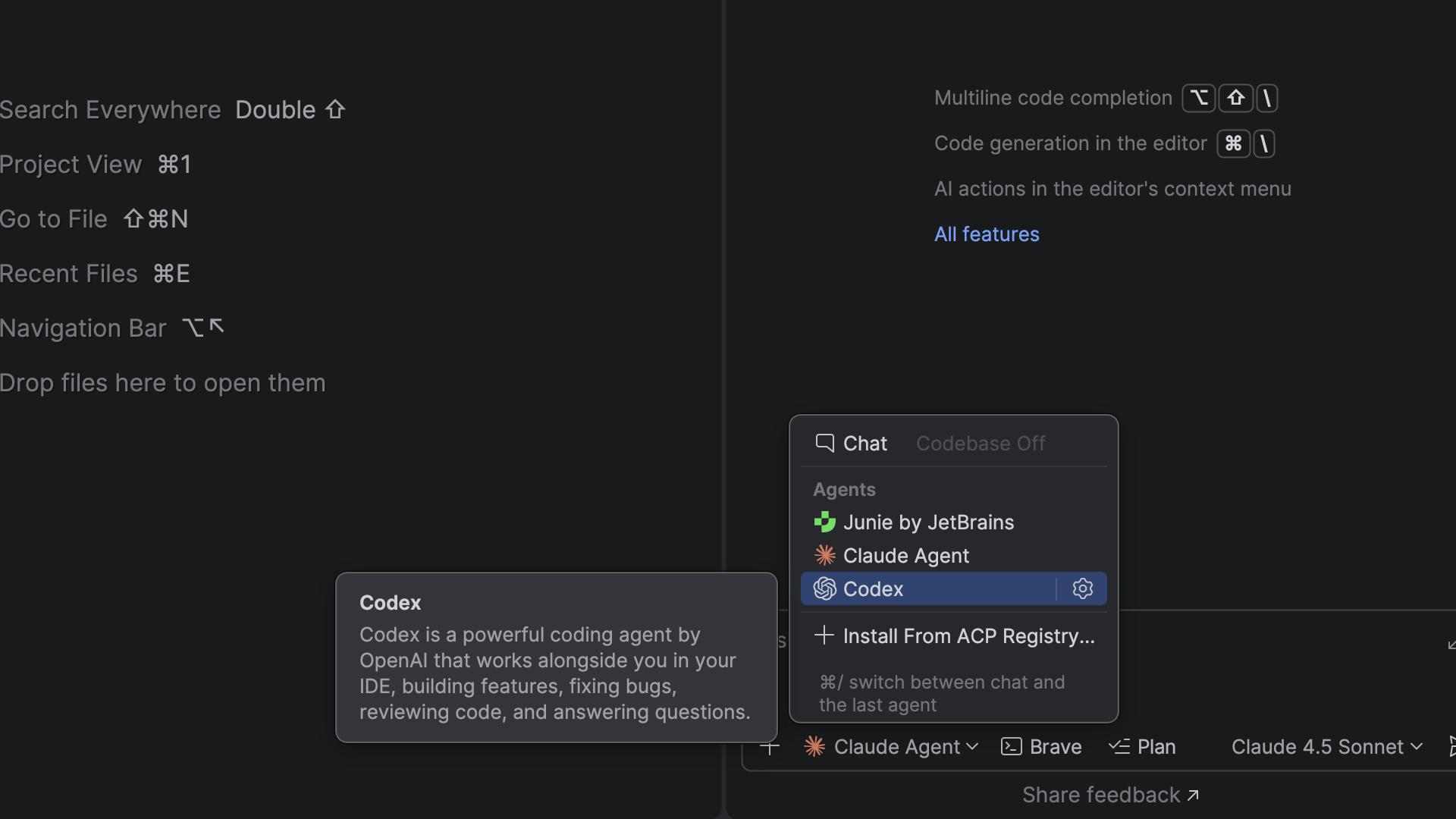

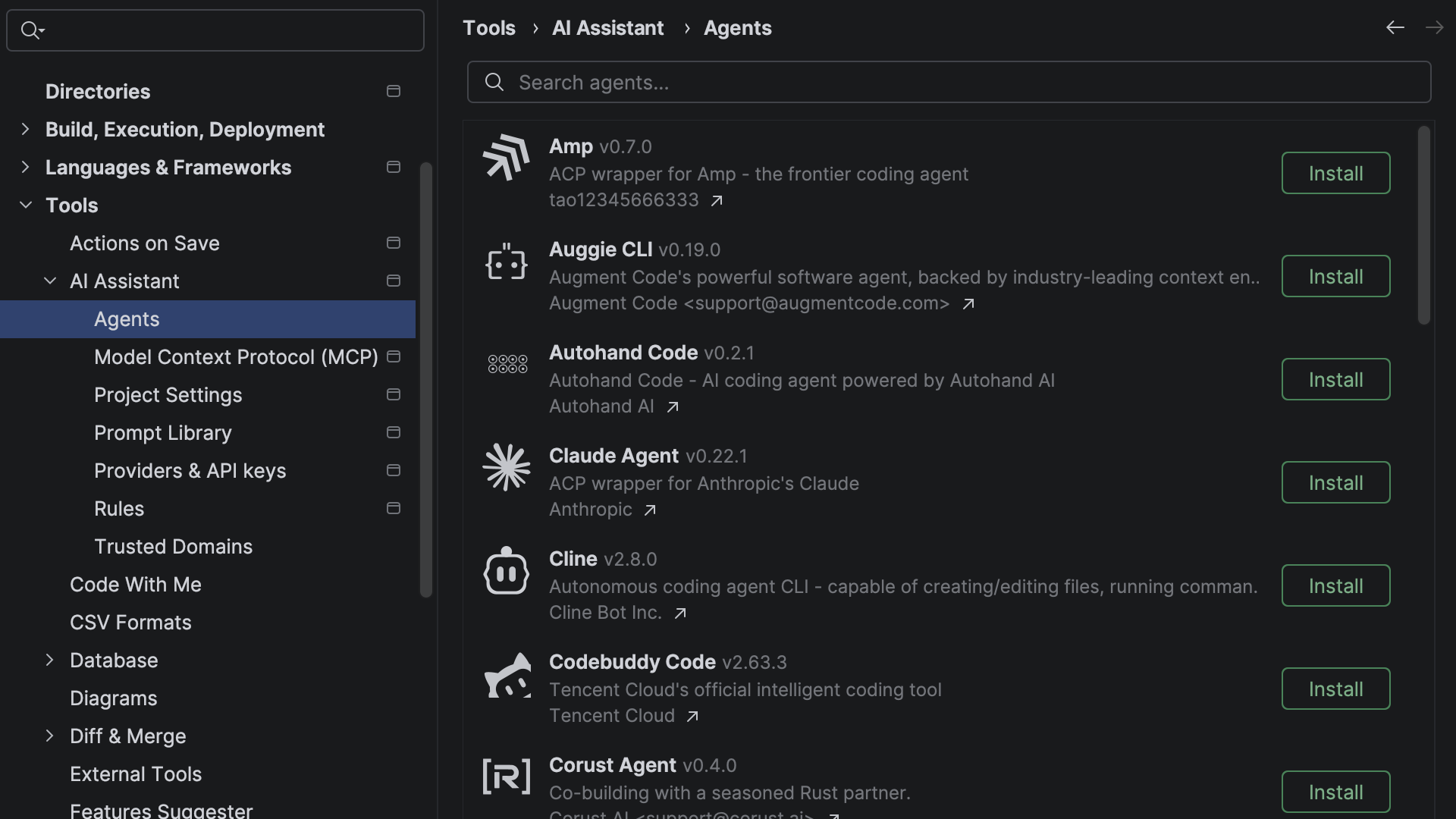

cipher_service.py is the single point of contact with the Anthropic API. Context is assembled per-request by ContextManager.build_context(), pulling campaign data, character stats, active party, quests, encounters, and NPCs from Postgres — all scoped to the Discord guild ID.

Here is the insight that unlocked the fix: the system prompt and campaign context were structurally identical on every request for a given server. The D&D rules, Cipher’s personality, the campaign world — none of it changes message-to-message. It was being sent and fully reprocessed every single time, on every message, for every server.

What Prompt Caching Actually Is

Anthropic caches the prefix of your prompt on their infrastructure for a TTL window. Subsequent requests that match that prefix byte-for-byte skip the reprocessing cost. Instead of paying full input token price, you pay roughly 10% of that on a cache hit.

A few things that matter:

Prefix, not arbitrary sections. The cache applies to the beginning of your prompt. Everything you want cached must come before everything that changes. This means prompt order is the entire game.

Cache hits vs. misses. A hit means the prefix was already in cache; you pay about 10% of the normal input token price. A miss means the prefix gets written to cache at roughly 1.25x the normal input token price — slightly more expensive than a regular call, but a one-time cost within each TTL window. After the first message in a session, you want hits almost exclusively.

The TTL is 5 minutes for the ephemeral cache type on Anthropic’s infrastructure, and it is refreshed each time the cached prefix is read. For active D&D sessions this is fine because messages come fast. For a server where messages arrive more than five minutes apart, every call pays the write premium with zero read benefit. The math only works at session density.
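To make the hit/miss arithmetic concrete, here is a minimal sketch that compares the relative cost of the cached prefix against an uncached one for a number of calls inside a single TTL window. It is not from the Scrollbook codebase. It assumes the approximate multipliers quoted above (1.25x for a write, 0.10x for a read), and the function names are illustrative.

```python
WRITE_MULTIPLIER = 1.25  # approximate cost of writing the prefix to cache
READ_MULTIPLIER = 0.10   # approximate cost of reading the prefix from cache


def cached_prefix_cost(calls_in_window: int) -> float:
    """Relative prefix cost with caching: one write, then reads.

    Costs are in units of "one uncached pass over the prefix".
    """
    if calls_in_window <= 0:
        return 0.0
    return WRITE_MULTIPLIER + READ_MULTIPLIER * (calls_in_window - 1)


def uncached_prefix_cost(calls_in_window: int) -> float:
    """Relative prefix cost without caching: full price on every call."""
    return float(max(calls_in_window, 0))


def break_even_calls() -> int:
    """Smallest number of calls in one window where caching is cheaper."""
    calls = 1
    while cached_prefix_cost(calls) >= uncached_prefix_cost(calls):
        calls += 1
    return calls


if __name__ == "__main__":
    for n in (1, 2, 20):
        print(n, cached_prefix_cost(n), uncached_prefix_cost(n))
    print("break-even at", break_even_calls(), "calls")
```

Under these assumptions a single isolated call costs 25% more with caching, and the second call inside the window already puts you ahead. The sketch covers the prefix only; uncached input tokens and output tokens are billed the same either way.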

This is a first-class API feature, not a workaround. You opt in by passing structured content blocks with a cache_control field instead of a plain string. Two lines of code. Anthropic’s infrastructure handles everything else.

One more thing worth saying clearly: this is not client-side caching. You are not storing API responses locally. You are telling Anthropic’s infrastructure which portion of your prompt is stable so it does not need to recompute it.

The Implementation

Centralizing Prompt Assembly

With six services in play, the first structural requirement was centralizing all prompt assembly into one place. The cacheable prefix must be byte-for-byte identical across every request. That cannot happen if prompts are assembled in multiple code paths and concatenated at call time. A trailing space, a newline difference, a Unicode normalization inconsistency — any of it produces a full cache miss.

All prompt assembly in Scrollbook runs through one function: cipher_service.py:_build_conversational_prompt().
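One way to enforce the single-code-path rule is to canonicalize the prefix in one function and log a fingerprint of it, so drift shows up as a changed hash instead of a silent cache miss. The sketch below is illustrative and is not Scrollbook’s code: canonical_text, build_cacheable_prefix, prefix_fingerprint, and the normalization rules (NFC, unified newlines, stripped trailing whitespace, sorted keys) are all assumptions about what a stable assembly step could look like.

```python
import hashlib
import json
import unicodedata


def canonical_text(text: str) -> str:
    """Normalize text so equivalent inputs produce identical bytes."""
    text = unicodedata.normalize("NFC", text)
    text = text.replace("\r\n", "\n").replace("\r", "\n")
    # Strip trailing whitespace on each line and around the whole block.
    lines = [line.rstrip() for line in text.split("\n")]
    return "\n".join(lines).strip()


def build_cacheable_prefix(system_prompt: str, campaign: dict) -> str:
    """Hypothetical single assembly point for the cached prefix."""
    # sort_keys makes the output independent of dict insertion order.
    campaign_json = json.dumps(campaign, sort_keys=True, ensure_ascii=False)
    return canonical_text(system_prompt) + "\n\n" + canonical_text(campaign_json)


def prefix_fingerprint(prefix: str) -> str:
    """Short hash to log next to each request.

    It should stay constant for a guild for as long as that guild's
    cached prefix is expected to hit.
    """
    return hashlib.sha256(prefix.encode("utf-8")).hexdigest()[:16]


if __name__ == "__main__":
    # Same content, different trailing whitespace and key order.
    a = build_cacheable_prefix(
        "You are Cipher. \r\n", {"setting": "Example Keep", "npcs": ["Innkeeper"]}
    )
    b = build_cacheable_prefix(
        "You are Cipher.", {"npcs": ["Innkeeper"], "setting": "Example Keep"}
    )
    print(prefix_fingerprint(a) == prefix_fingerprint(b))
```

If the fingerprint for a guild changes between two messages in the same session, something upstream is producing different bytes, and that is where to look before blaming the cache.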

Prompt Order

The ordering decision is the whole thing:

1. System prompt (D&D rules + Cipher personality): cached
2. Campaign and character context (per-guild, stable): included in the same cached block
3. Conversation history [0 ... N-3]: cached at the second breakpoint
4. Conversation history [N-2, N-1]: not cached
5. Current question: not cached

Static content at the top. Dynamic content at the bottom. The most expensive tokens, cached. The tokens that change on every message, not cached.

The Code

Before caching, the system prompt was passed as a plain string:

    # Every call: full system text + context, reprocessed at full price every time
    response = self.anthropic_client.messages.create(
        model=self.model_id,
        max_tokens=self.max_tokens,  # required by the Messages API
        system=full_system_text,  # plain string, no caching
        messages=messages,
    )

After caching, it becomes a structured content block:

    # cipher_service.py:2070-2079
    if self.enable_caching:
        system_blocks = [
            {
                "type": "text",
                "text": full_system_text,
                "cache_control": {"type": "ephemeral"}  # two lines
            }
        ]
    else:
        system_blocks = [{"type": "text", "text": full_system_text}]

The conversation history gets a second cache breakpoint at the third-to-last message, capturing the entire prior session:

    # cipher_service.py:2084-2098
    for i, msg in enumerate(conversation_history):
        content_blocks = [{"type": "text", "text": msg["content"]}]

        if self.enable_caching:
            is_last_two = i >= len(conversation_history) - 2
            # Cache breakpoint at third-to-last message
            if not is_last_two and i == len(conversation_history) - 3:
                content_blocks[0]["cache_control"] = {"type": "ephemeral"}

        messages.append({"role": msg["role"], "content": content_blocks})

    # Current question is never cached
    messages.append({"role": "user", "content": [{"type": "text", "text": question}]})

Two cache breakpoints: one on the system prompt, one on the conversation history. The Anthropic API allows at most four cache_control markers per request, so placement matters. You want those markers positioned to maximize the ratio of cached to uncached tokens on every call, because that ratio is what drives your actual savings.
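Because the marker budget is small, it can be worth checking it before the request goes out. The following is a sketch only, not Scrollbook code. It assumes the limit is four markers per request (check the current Anthropic documentation for your API version) and that markers may sit on system blocks, message content blocks, or tool definitions. The helper names are hypothetical.

```python
from typing import Optional

# Assumed limit; confirm against the Anthropic docs for your API version.
MAX_CACHE_BREAKPOINTS = 4


def count_cache_breakpoints(
    system_blocks: list, messages: list, tools: Optional[list] = None
) -> int:
    """Count blocks and tool definitions that carry a cache_control marker."""
    count = sum(1 for block in system_blocks if "cache_control" in block)
    for message in messages:
        content = message.get("content")
        # Plain-string content cannot carry a cache_control marker.
        if isinstance(content, list):
            count += sum(1 for block in content if "cache_control" in block)
    count += sum(1 for tool in (tools or []) if "cache_control" in tool)
    return count


def assert_within_breakpoint_limit(
    system_blocks: list, messages: list, tools: Optional[list] = None
) -> None:
    """Raise before the API call if the request uses too many markers."""
    used = count_cache_breakpoints(system_blocks, messages, tools)
    if used > MAX_CACHE_BREAKPOINTS:
        raise ValueError(
            f"{used} cache breakpoints exceeds the limit of {MAX_CACHE_BREAKPOINTS}"
        )


if __name__ == "__main__":
    marker = {"type": "ephemeral"}
    system_blocks = [{"type": "text", "text": "rules", "cache_control": marker}]
    messages = [
        {"role": "user", "content": [{"type": "text", "text": "hi", "cache_control": marker}]},
        {"role": "assistant", "content": [{"type": "text", "text": "hello"}]},
        {"role": "user", "content": [{"type": "text", "text": "can I grapple the goblin?"}]},
    ]
    print(count_cache_breakpoints(system_blocks, messages))
```

A guard like this matters most when several code paths can each add a marker; with the two-breakpoint layout described here it should never fire.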

The API call itself barely changes. The system parameter is now a content block array instead of a string:

    # cipher_service.py:2221-2228
    response = self.anthropic_client.messages.create(
        model=self.model_id,
        max_tokens=self.max_tokens,
        temperature=self.temperature,
        system=system_blocks,  # content block array instead of plain string
        messages=msgs,
        tools=tools_to_use,
    )

The Multi-Tenant Problem

867 servers means 867 sets of campaign state: different characters, different HP totals, different active encounters, different party compositions. Keeping one guild’s state from leaking into a prefix that another guild depends on requires a deliberate architectural decision.

In Scrollbook, guild-specific data lives inside the cached block:

    # cipher_service.py:2066-2068
    context_section = context.to_prompt_section()
    full_system_text = f"{system_prompt_text}\n\n{context_section}"
    # This full_system_text then receives the cache_control block

This works because campaign context is stable within a session. Cipher updates game state via tool calls when something changes — it does not receive externally updated context as new input mid-session. For the duration of an active session, the system prompt plus campaign context is genuinely identical across every message for that guild. Each guild gets its own cached prefix. No cross-contamination.

If your situation is different — if state changes externally between messages — that dynamic content needs to live below the cache breakpoint, not inside it.
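For that alternative case, a minimal sketch of the split might look like the following. This is not how Scrollbook was built. The build_system_blocks helper and its parameters are hypothetical, and it assumes the system parameter accepts multiple text blocks with the marker on the static one only.

```python
from typing import Optional


def build_system_blocks(
    static_text: str,
    dynamic_text: Optional[str] = None,
    enable_caching: bool = True,
) -> list:
    """Place the cache breakpoint after the static text only."""
    static_block = {"type": "text", "text": static_text}
    if enable_caching:
        # The cached prefix ends at this block.
        static_block["cache_control"] = {"type": "ephemeral"}
    blocks = [static_block]
    if dynamic_text:
        # No cache_control here: this block sits after the breakpoint,
        # so changing it does not invalidate the cached static prefix.
        blocks.append({"type": "text", "text": dynamic_text})
    return blocks


if __name__ == "__main__":
    blocks = build_system_blocks(
        static_text="D&D rules and Cipher personality",
        dynamic_text="Current HP: 14/20",  # placeholder for externally updated state
    )
    for block in blocks:
        print("cached" if "cache_control" in block else "uncached", "|", block["text"])
```

One trade-off to keep in mind: any later breakpoint, such as the one on conversation history, has the dynamic block inside its prefix, so it will miss whenever the dynamic text changes. Only the static breakpoint survives external updates.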

The Results

A one-hour session that cost $10–11 dropped to $0.50–1.50.

To verify you are actually hitting the cache, read the usage object on the response. Do not assume. Log it explicitly:

    # cipher_service.py:2268-2288
    if self.enable_caching and hasattr(response, "usage"):
        usage = response.usage
        input_tokens = getattr(usage, "input_tokens", 0)
        cache_read_tokens = getattr(usage, "cache_read_input_tokens", 0)
        cache_creation = getattr(usage, "cache_creation_input_tokens", 0)

        if cache_read_tokens > 0:
            savings_pct = (
                cache_read_tokens / (input_tokens + cache_read_tokens)
            ) * 100
            logger.info(
                f"Cache HIT: {cache_read_tokens} tokens read from cache "
                f"({savings_pct:.1f}% savings), {input_tokens} new tokens"
            )
        elif cache_creation > 0:
            logger.info(f"Cache MISS: {cache_creation} tokens written to cache")

Three fields to understand:

- input_tokens: tokens billed at full price this call
- cache_creation_input_tokens: tokens written to cache, billed at approximately 1.25x the base input token price (one-time cost per TTL window)
- cache_read_input_tokens: tokens read from cache, billed at approximately 10% of normal (this is where the 90% savings comes from)
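Those three fields are enough to turn a usage object into dollars. The sketch below is illustrative and not from the Scrollbook codebase. It assumes the Sonnet prices quoted earlier ($3.00/M input, $15.00/M output) and the approximate 1.25x and 0.10x multipliers. UsageSnapshot is a hypothetical stand-in for the SDK’s usage object, and the token counts in the demo are made up.

```python
from dataclasses import dataclass

INPUT_PRICE_PER_MTOK = 3.00    # USD, Sonnet input price quoted in this article
OUTPUT_PRICE_PER_MTOK = 15.00  # USD, Sonnet output price quoted in this article
CACHE_WRITE_MULTIPLIER = 1.25  # approximate
CACHE_READ_MULTIPLIER = 0.10   # approximate


@dataclass
class UsageSnapshot:
    """Hypothetical container mirroring the usage fields discussed above."""
    input_tokens: int = 0
    output_tokens: int = 0
    cache_creation_input_tokens: int = 0
    cache_read_input_tokens: int = 0


def call_cost_usd(u: UsageSnapshot) -> float:
    """Cost of one call with cache writes and reads priced separately."""
    input_equivalent = (
        u.input_tokens
        + u.cache_creation_input_tokens * CACHE_WRITE_MULTIPLIER
        + u.cache_read_input_tokens * CACHE_READ_MULTIPLIER
    )
    return (
        input_equivalent * INPUT_PRICE_PER_MTOK
        + u.output_tokens * OUTPUT_PRICE_PER_MTOK
    ) / 1_000_000


def uncached_cost_usd(u: UsageSnapshot) -> float:
    """Cost of the same call if every input token were billed at full price."""
    total_input = (
        u.input_tokens + u.cache_creation_input_tokens + u.cache_read_input_tokens
    )
    return (
        total_input * INPUT_PRICE_PER_MTOK
        + u.output_tokens * OUTPUT_PRICE_PER_MTOK
    ) / 1_000_000


def savings_fraction(u: UsageSnapshot) -> float:
    """Dollar savings relative to the uncached baseline (negative on a write)."""
    baseline = uncached_cost_usd(u)
    return 0.0 if baseline == 0 else 1 - call_cost_usd(u) / baseline


if __name__ == "__main__":
    hit = UsageSnapshot(input_tokens=500, output_tokens=300, cache_read_input_tokens=9500)
    print(f"${call_cost_usd(hit):.5f} vs ${uncached_cost_usd(hit):.5f}")
    print(f"{savings_fraction(hit):.1%} saved")
```

Note the difference from the percentage in the log line above: that figure is the share of input tokens read from cache, while the dollar saving is lower because output tokens and uncached input are still billed at full price. With the made-up numbers in the demo, 95% of input tokens come from cache but the call is about 74% cheaper.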

The feature flag that controlled it all:

    # shared/config/settings.py:86-98
    anthropic_enable_prompt_caching: bool = Field(
        default=True, description="Enable Anthropic prompt caching (90% cost savings)"
    )

    # Bedrock fallback has no equivalent; hardcoded off
    bedrock_enable_prompt_caching: bool = Field(
        default=False, description="Enable prompt caching (not supported on AWS Bedrock)"
    )

A note on Bedrock: At the time Scrollbook was built, Bedrock did not support prompt caching. That gap made it a non-starter as the primary provider and locked the architecture to the direct Anthropic API. Bedrock has since caught up — prompt caching went GA in April 2025, with 1-hour TTL support added in January 2026. If you are on Bedrock today, the same technique applies.

When optimization becomes load-bearing infrastructure, provider lock-in follows. That was true when I built this. It is less true now.

Gotchas That Will Kill Your Cache Hit Rate

Prompt order is everything. If you accidentally flip the ordering — campaign context before system prompt, for example — every call is a full miss. The cache matches from the beginning of the prompt in sequence. There is no partial matching.

Dynamic content in the cached prefix. This is the hardest mistake to catch. Timestamps, counters, random values, user-specific data — anything that changes per-message, if it bleeds into the section you are trying to cache, every call is a miss. In Scrollbook, character HP and active conditions are inside the cached block intentionally, because Cipher controls those updates via tool calls. If your state changes externally, that content belongs below the breakpoint.

The 5-minute TTL cliff. Servers with long gaps between messages cold-start on every session. Write costs get paid repeatedly with zero read benefit. The math works at session density. For sparse traffic, run the calculation before assuming caching helps.
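Running that calculation can be as simple as replaying message timestamps from your own logs. The sketch below is an illustration, not Scrollbook code. It assumes a 5-minute TTL that restarts every time the prefix is read, so only the gap to the previous call matters, and it treats the first call in any sequence as a miss. The function name and the sample timestamps are hypothetical.

```python
from typing import Sequence

TTL_SECONDS = 300  # 5-minute ephemeral TTL


def expected_hit_rate(timestamps: Sequence[float], ttl: float = TTL_SECONDS) -> float:
    """Fraction of calls that arrive within `ttl` seconds of the previous call.

    Timestamps are seconds (for example, Unix epoch) for one guild's requests.
    The first call always counts as a miss because nothing is cached yet.
    """
    if len(timestamps) < 2:
        return 0.0
    ordered = sorted(timestamps)
    hits = sum(
        1 for prev, cur in zip(ordered, ordered[1:]) if cur - prev < ttl
    )
    return hits / len(ordered)


if __name__ == "__main__":
    dense_session = [0, 60, 120, 1000, 1030]  # one long pause mid-session
    sparse_traffic = [0, 600, 1200]           # every message lands after expiry
    print(expected_hit_rate(dense_session))
    print(expected_hit_rate(sparse_traffic))
```

If the estimated hit rate for a guild is close to zero, caching adds the write premium to nearly every call and is probably worth disabling for that traffic pattern.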

Whitespace and encoding. The prefix match is byte-level. A trailing space, a newline inconsistency, a Unicode normalization difference — any of it is a miss. Prompt assembly must run through a single code path. If you are concatenating in multiple places, you will have inconsistency you cannot see.

Don’t assume, verify. The logging block above takes ten minutes to add. Add it. The usage object will tell you immediately whether your cache hit rate matches your expectations. Ship it before you ship the feature.

Why I Still Had to Shut It Down

The honest math: 90% off still leaves 10% of a cost that grows with usage.

At $0.50–1.50 per session across 867 servers with no subscription revenue, the situation improved dramatically and remained unsustainable. I had bought runway. I had not fixed the underlying problem.

There was no paywall. No subscription tier. No mechanism for Scrollbook to generate revenue as usage scaled. Every new server was a new cost center with nothing offsetting it. Prompt caching made the slope of that curve shallower. It did not change the direction.

Beyond the API costs: solo maintenance at that user count meant incident response, server reliability, and the full weight of being the only person accountable to 867 active communities. That is not something you can optimize your way out of.

What I would do differently: charge earlier. I know that is a strange thing to say about something I built so my family could play D&D together. But the moment it left that context and became someone else’s tool, it became a product. I just did not treat it like one. Even a small subscription changes the entire math and the entire psychology of the product.

I built the technical foundation first, optimized costs second, and never got to monetization. The right order is the reverse: figure out how this sustains itself, then build, then optimize. I applied that lesson to the next two products I shipped. ReptiDex launched with a three-tier subscription model on day one and hit 50 paid subscribers in 9 days. Geckistry collects payment at checkout. Both are still running.

What to Take From This

Prompt caching is a real, production-grade optimization. The cache_control field is two lines of code. A 90% reduction in inference cost is achievable if your prompt has a large, stable prefix and your traffic density is high enough for cache reads to consistently outpace cache writes.

If you are building on Claude at any meaningful scale, look at your prompt structure. If you are sending the same system prompt on every request and that prompt is long, you are paying for reprocessing you do not need.

But the bigger lesson is not technical. If you are building an AI product solo, get to monetization before you get to optimization. The optimization I built here was real and it worked. The product did not survive anyway — not because the code was wrong, but because I treated cost reduction as a substitute for a business model.

It is not.

I run Built By Dusty, a software studio that builds custom apps and sales platforms for animal breeders and small businesses. The AI cost optimization techniques from Scrollbook now power features in the breeding software I deliver to clients. If you’re building on Claude at scale, or you’re a founder with a product that has real infrastructure costs to manage, I’d like to hear from you.

All code references in this article are from the actual Scrollbook production codebase. The codebase is private, but every snippet shown here ran in production.