The summer of 2025 brought an unlikely alliance to Washington. Senators from opposite sides of the aisle stood together to introduce legislation forcing American companies to disclose when they’re replacing human customer service agents with artificial intelligence or shipping those jobs overseas. The Keep Call Centers in America Act represents more than political theatre. It signals a fundamental shift in how governments perceive the relationship between automation, labour markets, and national economic security.

For Canada, the implications are sobering. The same AI technologies promising productivity gains are simultaneously enabling economic reshoring that threatens to pull high-value service work back to the United States whilst leaving Canadian workers scrambling for positions that may no longer exist. This isn’t a distant possibility. It’s happening now, measurable in job postings, employment data, and the lived experiences of early-career workers already facing what Stanford researchers call a “significant and disproportionate impact” from generative AI.

The question facing Canadian policymakers is no longer whether AI will reshape service economies, but how quickly, how severely, and what Canada can do to prevent becoming collateral damage in America’s automation-driven industrial strategy.

Manufacturing’s Dress Rehearsal

To understand where service jobs are heading, look first at manufacturing. The Reshoring Initiative’s 2024 annual report documented 244,000 U.S. manufacturing jobs announced through reshoring and foreign direct investment, continuing a trend that has brought over 2 million jobs back to American soil since 2010. Notably, 88% of these 2024 positions were in high or medium-high tech sectors, rising to 90% in early 2025.

The drivers are familiar: geopolitical tensions, supply chain disruptions, proximity to customers. But there’s a new element. According to research cited by Deloitte, AI and machine learning are projected to contribute to a 37% increase in labour productivity by 2025. Boston Consulting Group estimated that reshoring would add 10% to 30% in costs compared with offshoring, but found that automating tasks with digital workers could offset these expenses by lowering overall labour costs.

Here’s the pattern: AI doesn’t just enable reshoring by cutting the cost of expensive domestic labour. It makes reshoring economically viable because automated domestic operations can replace cheap foreign labour too. The same technology threatening Canadian service workers is simultaneously making it affordable for American companies to bring work home from India, the Philippines, and Canada.

The specifics are instructive. A mid-sized electronics manufacturer that reshored from Vietnam to Ohio in 2024 cut production costs by 15% within a year. Semiconductor investments created over 17,600 new jobs through mega-deals involving TSMC, Samsung, and ASML. Nvidia opened AI supercomputer facilities in Arizona and Texas in 2025, tapping local engineering talent to accelerate next-generation chip design.

Yet these successes mask deeper contradictions. More than 600,000 U.S. manufacturing jobs remain unfilled as of early 2025, even as retirements accelerate. According to the Manufacturing Institute, five out of ten open positions for skilled workers remain unoccupied due to the skills gap crisis. The solution isn’t hiring more workers. It’s deploying AI to do more with fewer people, a dynamic that manufacturing pioneered and service sectors are now replicating at scale.

Texas, South Carolina, and Mississippi emerged as top 2025 states for reshoring and foreign direct investment. Access to reliable energy and workforce availability now drives site selection, elevating regions like Phoenix, Dallas-Fort Worth, and Salt Lake City. Meanwhile, tariffs have become a key motivator, cited in 454% more reshoring cases in 2025 than in 2024, whilst government incentives were cited 49% less often as previous subsidies phase out.

The manufacturing reshoring story reveals that proximity matters, but automation matters more. When companies can manufacture closer to American customers using fewer workers than foreign operations required, the economic logic of Canadian manufacturing operations deteriorates rapidly.

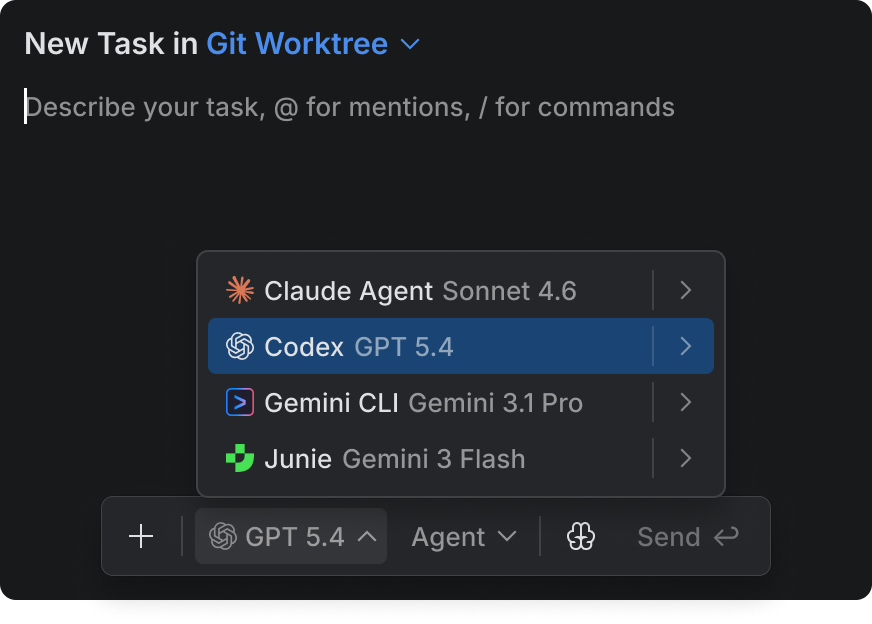

The Contact Centre Transformation

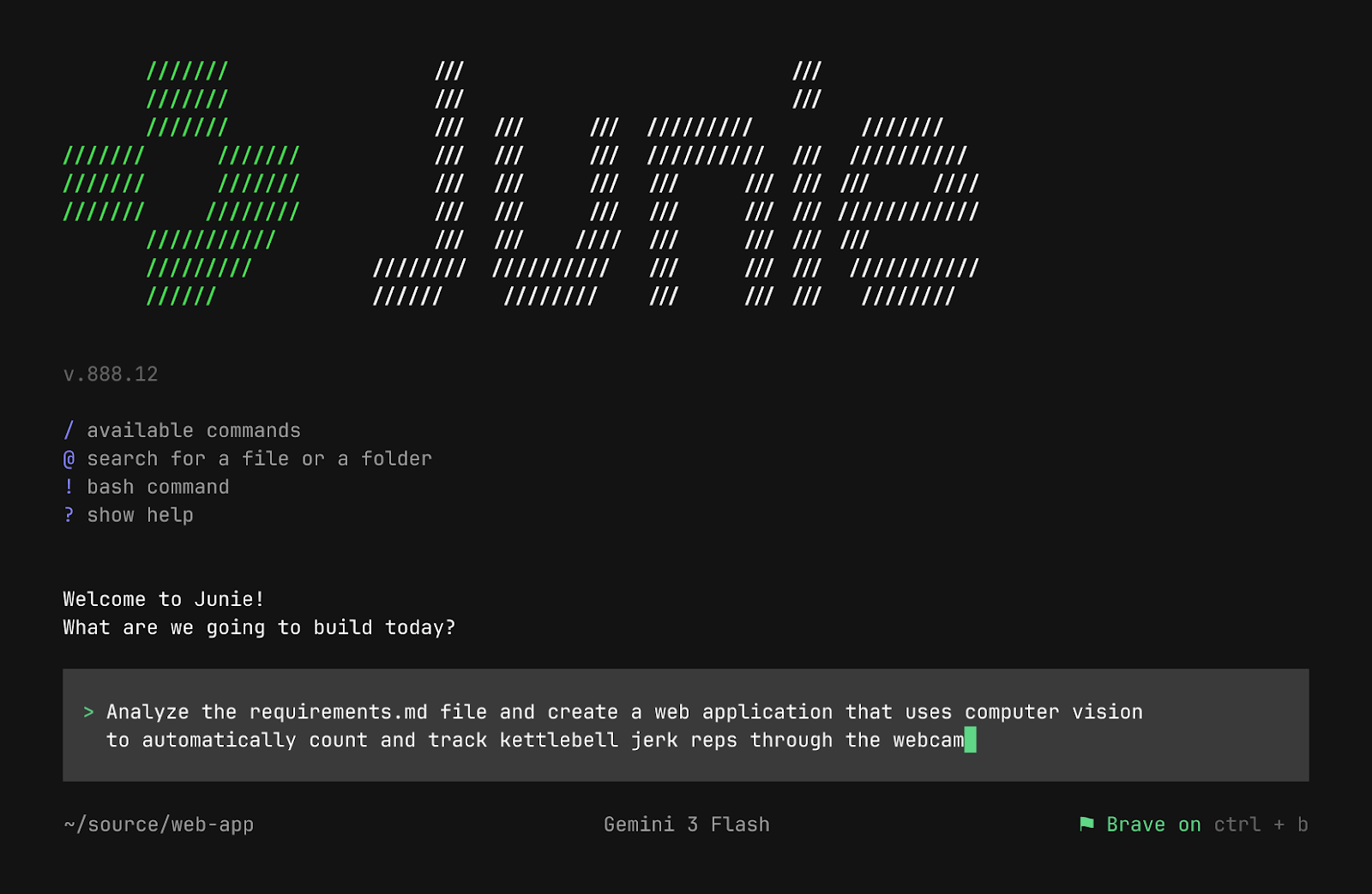

The contact centre industry offers the clearest view of this shift. In August 2022, Gartner predicted that conversational AI would reduce contact centre agent labour costs by $80 billion by 2026. Today, that looks conservative. The average cost per live service interaction ranges from $8 to $15. AI-powered resolutions cost $1 or less per interaction, a cost reduction of roughly 8x to 15x or more at scale.
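
Dividing the quoted per-interaction costs shows the size of the gap directly. A minimal Python sketch, assuming the $8 to $15 human range and a flat $1 per AI resolution (the text says "$1 or less", so the ratios it produces are lower bounds):

```python
# Sketch: per-interaction cost ratio implied by the quoted figures.
# Assumes $8 to $15 per live (human) interaction and $1 per AI resolution;
# because AI may cost less than $1, these ratios are lower bounds.

HUMAN_COST_RANGE = (8.0, 15.0)  # USD per live interaction
AI_COST = 1.0                   # USD per AI resolution (upper bound)


def cost_ratio(human_cost: float, ai_cost: float) -> float:
    """How many AI resolutions cost the same as one human interaction."""
    if ai_cost <= 0:
        raise ValueError("ai_cost must be positive")
    return human_cost / ai_cost


low, high = (cost_ratio(c, AI_COST) for c in HUMAN_COST_RANGE)
print(f"{low:.0f}x to {high:.0f}x")  # prints "8x to 15x"
```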

The voice AI market has grown faster than anticipated and is projected to expand from $3.14 billion in 2024 to $47.5 billion by 2034. Companies report containing up to 70% of calls without human interaction, saving an estimated $5.50 per contained call.
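
The containment figures translate into an annual saving with a single multiplication. The sketch below is illustrative only: the one-million-call annual volume is a hypothetical assumption, while the 70% containment rate (the upper end of what companies report) and the $5.50 saving per contained call are the figures quoted above.

```python
# Sketch: annual savings from calls contained by voice AI.
# containment_rate and saving_per_call use the quoted figures; the call
# volume in the example is a hypothetical placeholder, not a sourced number.

def containment_savings(annual_calls: int, containment_rate: float,
                        saving_per_call: float) -> float:
    """Estimated annual savings (USD) from calls resolved without a human."""
    if not 0.0 <= containment_rate <= 1.0:
        raise ValueError("containment_rate must be between 0 and 1")
    contained_calls = annual_calls * containment_rate
    return contained_calls * saving_per_call


# Hypothetical contact centre handling one million calls a year.
savings = containment_savings(1_000_000, 0.70, 5.50)
print(f"${savings:,.0f}")  # prints "$3,850,000"
```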

Modern voice AI agents merge speech recognition, natural language processing, and machine learning to automate complex interactions. They interpret intent and context, handle complex multi-turn conversations, and continuously improve responses by analysing past interactions.

Gartner predicts that by 2027, 70% of customer interactions will involve voice AI. The technology can run fully automated calls with natural-sounding conversation. Some platforms operate in more than 30 languages and scale to thousands of simultaneous conversations. Advanced systems provide real-time sentiment analysis and adjust responses to emotional tone. Intent recognition allows these agents to understand a speaker’s goal even when it is poorly articulated.

AI assistants that summarise and transcribe calls save at least 20% of agents’ time. Intelligent routing systems match customers with the best-suited available agent. Rather than waiting on hold, customers receive instant answers from AI agents that resolve 80% of inquiries independently.

For Canada’s contact centre workforce, these numbers translate into an existential threat. The Bureau of Labor Statistics projects a loss of 150,000 U.S. call centre jobs by 2033. Canadian operations face even steeper pressure. When American companies can deploy AI to handle customer interactions at a fraction of the cost of nearshore Canadian labour, the economic logic of maintaining operations across the border evaporates.

The Keep Call Centers in America Act attempts to slow this shift by requiring companies to disclose call centre locations and AI usage, and to transfer customers to U.S.-based human agents on request. Companies relocating centres overseas face a 120-day advance notification requirement, public listing for up to five years, and ineligibility for federal contracts. Civil penalties can reach $10,000 per day for noncompliance.

Whether this legislation passes is almost beside the point. The fact that it exists, with bipartisan support, reveals how seriously American policymakers take the combination of offshoring and AI as threats to domestic employment. Canada has no equivalent framework, no similar protections, and no comparable political momentum to create them.

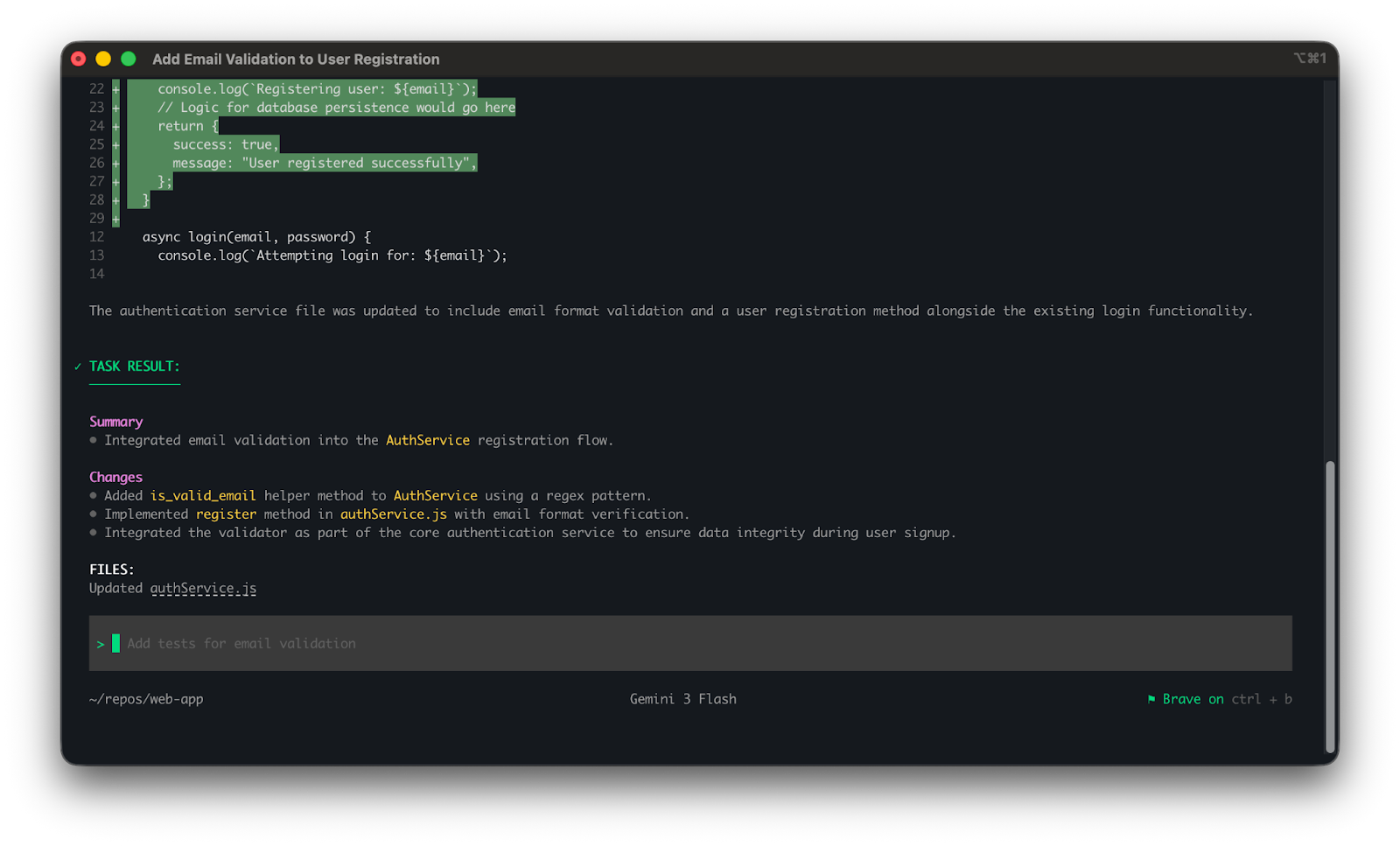

The emerging model isn’t complete automation but human-AI collaboration. AI handles routine tasks and initial triage whilst human agents focus on complex cases requiring empathy, judgement, or escalated authority. This sounds promising until you examine the mathematics. If AI handles 80% of interactions, organisations need perhaps 20% of their previous workforce. Even assuming some growth in total interaction volume, the net employment impact remains sharply negative.
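
A minimal sketch of that mathematics, assuming headcount scales linearly with the volume of interactions humans still handle. This is a simplification: it ignores AI-assisted productivity gains for the remaining agents, which would push the figures lower still. The function name and growth scenarios are illustrative.

```python
# Sketch: share of the previous human workload left after automation.
# Assumes agent headcount scales linearly with human-handled volume and
# ignores productivity gains from AI assistance (a simplification).

def remaining_workforce_share(ai_share: float, volume_growth: float) -> float:
    """Human workload after automation, relative to today's workload."""
    if not 0.0 <= ai_share <= 1.0:
        raise ValueError("ai_share must be between 0 and 1")
    return (1.0 - ai_share) * (1.0 + volume_growth)


# Illustrative growth scenarios: flat, +25% and +50% total volume.
for growth in (0.0, 0.25, 0.50):
    share = remaining_workforce_share(0.80, growth)
    print(f"volume +{growth:.0%}: {share:.0%} of previous workforce")
```

With 80% of interactions automated, total volume would have to grow fivefold (1 / 0.2) before the human workload returned to its previous level; even 50% growth leaves it at about 30%.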

The Entry-Level Employment Collapse

Whilst contact centres represent the most visible transformation, the deeper structural damage is occurring amongst early-career workers across multiple sectors. Research from Stanford economists Erik Brynjolfsson, Bharat Chandar, and Ruyu Chen, drawing on ADP payroll data covering 25 million workers, found that early-career employees in the occupations most exposed to AI have experienced a 13% relative decline in employment since 2022 compared with more experienced workers in the same fields.

Employment for 22- to 25-year-olds in jobs with high AI exposure fell 6% between late 2022 and July 2025, whilst employment amongst workers 30 and older grew between 6% and 13%. The pattern holds across software engineering, marketing, customer service, and knowledge work occupations where generative AI overlaps heavily with skills gained through formal education.

Brynjolfsson explained to CBS MoneyWatch: “That’s the kind of book learning that a lot of people get at universities before they enter the job market, so there is a lot of overlap between these LLMs and the knowledge young people have.” Older professionals remain insulated by tacit knowledge and soft skills acquired through experience.

Venture capital firm SignalFire quantified this in its 2025 State of Talent Report, analysing data from 80 million companies and 600 million professionals on LinkedIn. It found a 50% decline in new role starts by people with less than one year of post-graduate work experience between 2019 and 2024. The decline was consistent across sales, marketing, engineering, recruiting, operations, design, finance, and legal functions.

At Big Tech companies, new graduates now account for just 7% of hires, down 25% from 2023 and over 50% from pre-pandemic 2019 levels. The share of new graduates landing roles at the Magnificent Seven (Alphabet, Amazon, Apple, Meta, Microsoft, NVIDIA, and Tesla) has dropped by more than half since 2022. Meanwhile, these companies increased hiring by 27% for professionals with two to five years of experience.

The sector-specific data reveals where displacement cuts deepest. In technology, 92% of IT jobs face transformation from AI, with mid-level (40%) and entry-level (37%) positions hit hardest. Unemployment amongst 20- to 30-year-olds in tech-exposed occupations has risen by 3 percentage points since early 2025. Customer service is projected to reach 80% automation by 2025, displacing 2.24 million of 2.8 million U.S. jobs. Retail faces 65% automation risk, concentrated amongst cashiers and floor staff. In data entry and administrative roles, AI could eliminate 7.5 million positions by 2027, with manual data entry clerks facing 95% automation risk.

Financial services research from Bloomberg reveals that AI could replace 53% of market research analyst tasks and 67% of sales representative tasks, whilst managerial roles face only 9% to 21% automation risk. The pattern repeats across sectors: entry-level analytical, research, and customer-facing work faces the highest displacement risk, whilst senior positions requiring judgement, relationship management, and strategic thinking remain more insulated.

For Canada, the implications are acute. Canadian universities produce substantial numbers of graduates in precisely the fields seeing the steepest early-career employment declines. These graduates traditionally competed for positions at U.S. tech companies or joined Canadian offices of multinationals. As those entry points close, they either compete for increasingly scarce Canadian opportunities or leave the field entirely, which represents a massive waste of educational investment.

Research firm Revelio Labs documented that postings for entry-level jobs in the U.S. overall have declined about 35% since January 2023, with AI playing a significant role. Entry-level job postings, particularly in corporate roles, have dropped 15% year over year, whilst the number of employers referencing “AI” in job descriptions has surged by 400% over the past two years. This isn’t simply companies being selective. It’s a fundamental restructuring of career pathways, with AI eliminating the bottom rungs of the ladder workers traditionally used to gain experience and progress to senior roles.

The response amongst some young workers suggests recognition of this reality. In 2025, 40% of young university graduates are choosing careers in plumbing, construction, and electrical work, trades that are far harder to automate, representing a dramatic shift from pre-pandemic career preferences.

The Canadian Response

Against this backdrop, Canadian policy responses appear inadequate. Budget 2024 allocated $2.4 billion to support AI in Canada, a figure that sounds impressive until you examine the details. Of that total, just $50 million over four years went to skills training for workers in sectors disrupted by AI through the Sectoral Workforce Solutions Program. That’s 2% of the envelope, divided across millions of workers facing potential displacement.
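
The arithmetic behind that 2% is easy to check. The sketch below also converts the allocation into annual and per-worker figures; the one million affected workers is a hypothetical round number chosen for illustration, not an estimate from any source.

```python
# Sketch: share of Budget 2024's AI envelope going to worker retraining.
# Dollar figures are those quoted in the text; the affected-worker count
# is a hypothetical placeholder for illustration only.

AI_ENVELOPE = 2_400_000_000   # CAD, total Budget 2024 AI allocation
RETRAINING = 50_000_000       # CAD, Sectoral Workforce Solutions Program
YEARS = 4

share = RETRAINING / AI_ENVELOPE   # about 0.0208, i.e. roughly 2%
per_year = RETRAINING / YEARS      # CAD per year

# Hypothetical: one million affected workers (illustration only).
affected_workers = 1_000_000
per_worker_per_year = per_year / affected_workers

print(f"{share:.1%} of envelope; ${per_year:,.0f}/year; "
      f"${per_worker_per_year:.2f} per worker per year")
```

It prints `2.1% of envelope; $12,500,000/year; $12.50 per worker per year`. Because the text describes millions of potentially displaced workers, the real per-worker figure would be lower still.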

The federal government’s Canadian Sovereign AI Compute Strategy, announced in December 2024, directs up to $2 billion toward building domestic AI infrastructure. These investments address Canada’s competitive position in developing AI technology. As of November 2023, Canada’s AI compute capacity represented just 0.7% of global capacity, half that of the United Kingdom, the next lowest G7 nation.

But developing AI and managing AI’s labour market impacts are different challenges. The $50 million for workforce retraining is spread thin across affected sectors and communities. There’s no coordinated strategy for measuring AI’s employment effects, no systematic tracking of which occupations face the highest displacement risk, and no enforcement mechanisms ensuring companies benefiting from AI subsidies maintain employment levels.

Valerio De Stefano, Canada Research Chair in Innovation Law and Society at York University, argued that “jobs may be reduced to an extent that reskilling may be insufficient,” suggesting the government should consider “forms of unconditional income support such as basic income.” The federal response has been silence.

Provincial efforts show more variation but similar limitations. Ontario invested an additional $100 million in 2024-25 through the Skills Development Fund Training Stream. Ontario’s Bill 194, passed in 2024, focuses on strengthening cybersecurity and establishing accountability, disclosure, and oversight obligations for AI use across the public sector. Bill 149, the Working for Workers Four Act, received Royal Assent on 21 March 2024, requiring employers to disclose in job postings whether they’re using AI in the hiring process, effective 1 January 2026.

Quebec’s approach emphasises both innovation commercialisation through tax incentives and privacy protection through Law 25, a major privacy reform that includes requirements for transparency and safeguards around automated decision-making, making it one of the first provincial frameworks to directly address AI implications. British Columbia has released its own framework and principles to guide AI use.

None of these initiatives addresses the core problem: when AI makes it economically rational for companies to consolidate operations in the United States or eliminate positions entirely, retraining workers for jobs that no longer exist becomes futile. Because Canada’s federal system divides legislative powers between the federal and provincial governments, AI policy remains decentralised and fragmented across jurisdictions. The failure of the Artificial Intelligence and Data Act (AIDA) to pass into law before the 2025 election has left Canada with a significant regulatory gap precisely when comprehensive frameworks are most needed.

Measurement as Policy Failure

The most striking aspect of Canada’s response is the absence of robust measurement frameworks. Statistics Canada provides experimental estimates of AI occupational exposure, finding that in May 2021, 31% of employees aged 18 to 64 were in jobs highly exposed to AI and relatively less complementary with it, whilst 29% were in jobs highly exposed and highly complementary. The remaining 40% were in jobs not highly exposed.

These estimates measure potential exposure, not actual impact. A job may be technically automatable without being automated. As Statistics Canada acknowledges, “Exposure to AI does not necessarily imply a risk of job loss. At the very least, it could imply some degree of job transformation.” This framing is methodologically appropriate but strategically useless. Policymakers need to know which jobs are being affected, at what rate, in which sectors, and with what consequences.

What’s missing is real-time tracking of AI adoption rates by industry, firm size, and region, correlated with indicators of productivity and employment. In 2024, only approximately 6% of Canadian businesses were using AI to produce goods or services, according to Statistics Canada. This low adoption rate might seem reassuring, but it actually makes the measurement problem more urgent. Early adopters are establishing patterns that laggards will copy. By the time AI adoption reaches critical mass, the window for proactive policy intervention will have closed.

Job posting trends offer another measurement approach. In Canada, postings for AI-competing jobs dropped by 18.6% in 2023, followed by an 11.4% drop in 2024. AI-augmenting roles saw smaller declines of 9.9% in 2023 and 7.2% in 2024. These figures suggest displacement is already underway, concentrated in roles most vulnerable to full automation.
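
Because the two annual declines apply one after the other, the cumulative fall is found by compounding rather than adding. A short sketch using the quoted rates:

```python
# Sketch: successive annual declines compound multiplicatively, so the
# cumulative drop is smaller than the simple sum of the annual rates.

def cumulative_decline(*annual_declines: float) -> float:
    """Overall fractional decline after successive annual declines."""
    remaining = 1.0
    for d in annual_declines:
        remaining *= 1.0 - d
    return 1.0 - remaining


ai_competing = cumulative_decline(0.186, 0.114)   # 2023, then 2024
ai_augmenting = cumulative_decline(0.099, 0.072)  # 2023, then 2024
print(f"AI-competing: {ai_competing:.1%}, AI-augmenting: {ai_augmenting:.1%}")
```

This gives a cumulative drop of about 27.9% for AI-competing postings over 2023 and 2024, against about 16.4% for AI-augmenting ones.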

Statistics Canada’s findings reveal that 83% to 90% of workers with a bachelor’s degree or higher held jobs highly exposed to AI-related job transformation in May 2021, compared with 38% of workers with a high school diploma or less. This inverts conventional wisdom about technological displacement. Unlike previous automation waves that primarily affected lower-educated workers, AI poses greatest risks to knowledge workers with formal educational credentials, precisely the population Canadian universities are designed to serve.

Policy Levers and Their Limitations

Within current political and fiscal constraints, what policy levers could Canadian governments deploy to retain and create added-value service roles?

Tax incentives represent the most politically palatable option, though their effectiveness is questionable. Budget 2024 proposed a new Canadian Entrepreneurs’ Incentive, reducing the capital gains inclusion rate to 33.3% on a lifetime maximum of $2 million CAD in eligible capital gains. The same budget proposed raising the capital gains inclusion rate from one-half to two-thirds for corporations and trusts, and for individuals’ annual gains above $250,000, effective 25 June 2024, creating significant debate within the technology industry. The government later cancelled the increase in March 2025.

The Scientific Research and Experimental Development (SR&ED) tax incentive programme, which provided $3.9 billion in tax credits against $13.7 billion of claimed expenditures in 2021, underwent consultation in early 2024. But tax incentives face an inherent limitation: they reward activity that would often occur anyway, providing windfall benefits whilst generating uncertain employment effects.

Procurement rules offer more direct leverage. The federal government’s creation of an Office of Digital Transformation aims to scale technology solutions whilst eliminating redundant procurement rules. The Canadian Chamber of Commerce called for participation targets for small and medium-sized businesses. However, federal IT procurement has long struggled with misaligned incentives and internal processes.

The more aggressive option would be domestic content requirements for government contracts. The Keep Call Centers in America Act essentially does this for U.S. federal contracts. Canada could adopt similar provisions, requiring that customer service, IT support, data analysis, and other service functions for government contracts employ Canadian workers.

Such requirements face immediate challenges. They risk retaliation under trade agreements, particularly the Canada-United States-Mexico Agreement. They may increase costs without commensurate benefits. Yet the alternative, allowing AI-driven reshoring to hollow out Canada’s service economy whilst maintaining rhetorical commitment to free trade principles, is not obviously superior.

Retraining programmes represent the policy option with broadest political support and weakest evidentiary basis. The premise is that workers displaced from AI-exposed occupations can acquire skills for AI-complementary or AI-insulated roles. This premise faces several problems. First, it assumes sufficient demand exists for the occupations workers are being trained toward. If AI eliminates more positions than it creates or complements, retraining simply reshuffles workers into a shrinking pool. Second, it assumes workers can successfully transition between occupational categories, despite research showing that mid-career transitions often result in significant wage losses.

Research from the Institute for Research on Public Policy found that generative AI is more likely to transform work composition within occupations rather than eliminate entire job categories. Most occupations will evolve rather than disappear, with workers needing to adapt to changing task compositions. This suggests workers must continuously adapt as AI assumes more routine tasks, requiring ongoing learning rather than one-time retraining.

Recent Canadian government AI consultations highlight the skills gap in AI knowledge and the lack of readiness amongst workers to engage with AI tools effectively. With 57.4% of workers in roles highly susceptible to AI-driven disruption as of 2024, the transformation is already underway, yet most workers lack the frameworks to understand how their roles will evolve or what capabilities they need to develop.

Creating Added-Value Roles

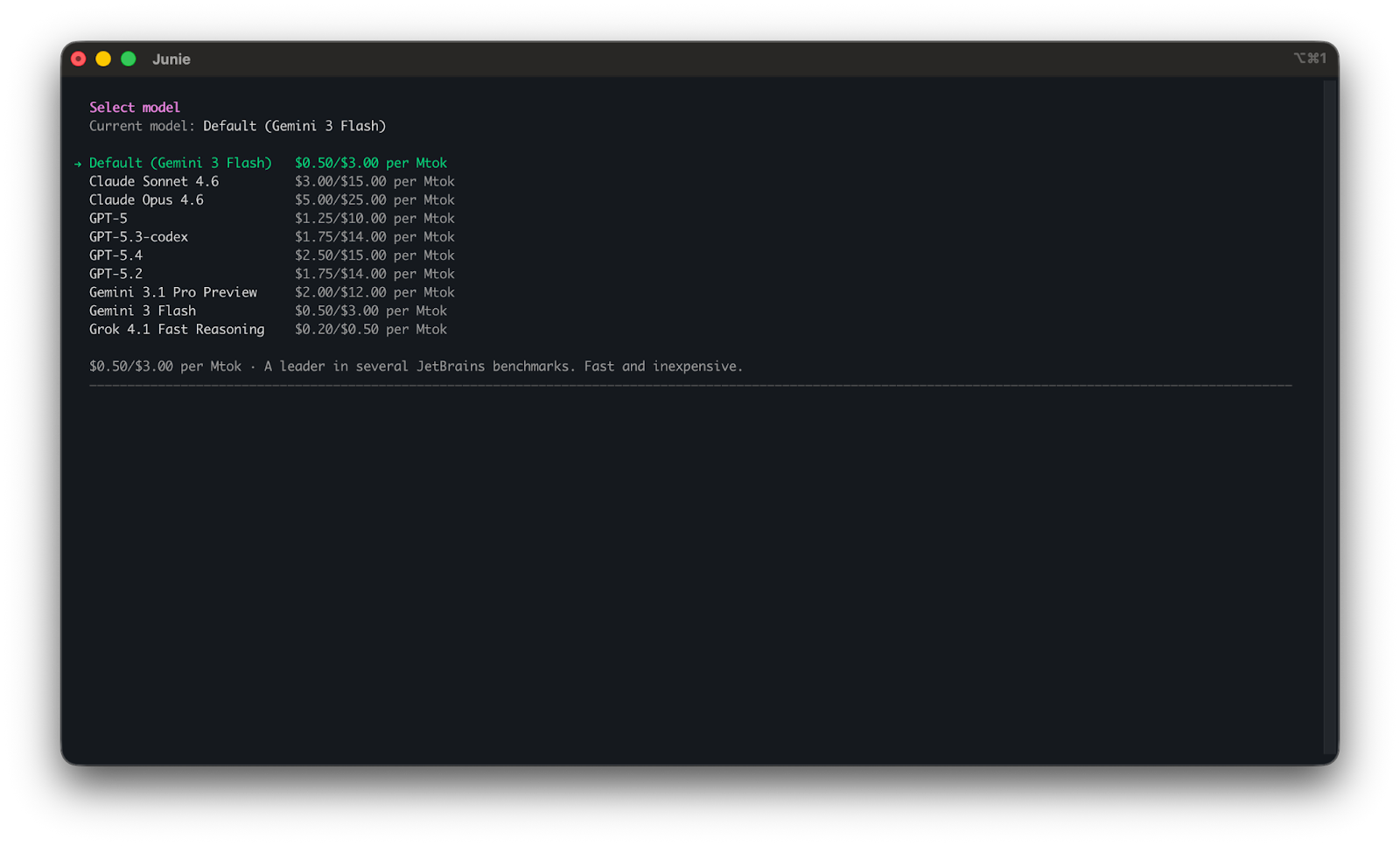

Beyond retention, Canadian governments face the challenge of creating added-value roles that justify higher wages than comparable U.S. positions and resist automation pressures. The 2024 federal budget’s AI investments totalling $2.4 billion reflect a bet that Canada can compete in developing AI technology even as it struggles to manage AI’s labour market effects.

Canada was the first country to introduce a national AI strategy and has invested over $2 billion since 2017 to support AI and digital research and innovation. The country was recently ranked number 1 amongst 80 countries (tied with South Korea and Japan) in the Center for AI and Digital Policy’s 2024 global report on Artificial Intelligence and Democratic Values.

These achievements have not translated to commercial success or job creation at scale. Canadian AI companies frequently relocate to the United States once they reach growth stage, attracted by larger markets, deeper venture capital pools, and more favourable regulatory environments.

Creating added-value roles requires not just research excellence but commercial ecosystems capable of capturing value from that research. Here Canada faces structural disadvantages on several fronts. Venture capital investment per capita lags the United States significantly. Toronto Stock Exchange listings struggle to achieve valuations comparable to NASDAQ equivalents. Procurement systems remain biased toward incumbent suppliers, often foreign multinationals.

The Artificial Intelligence and Data Act (AIDA), introduced as part of Bill C-27 in June 2022, was designed to promote responsible AI development in Canada’s private sector. The bill died on the Order Paper when Parliament was prorogued in January 2025, leaving Canada without comprehensive AI-specific regulation as adoption accelerates.

Added-value roles in the AI era are likely to cluster around several categories: roles requiring deep contextual knowledge and relationship-building that AI struggles to replicate; roles involving creative problem-solving and judgement under uncertainty; roles focused on AI governance, ethics, and compliance; and roles in sectors where human interaction is legally required or culturally preferred.

Canadian competitive advantages in healthcare, natural resources, financial services, and creative industries could theoretically anchor added-value roles in these categories. Healthcare offers particular promise. AI will transform clinical documentation, diagnostic imaging interpretation, and treatment protocol selection, but the judgement-intensive aspects of patient care in complex cases remain difficult to automate fully.

Natural resource sectors such as mining and forestry combine physical environments where automation faces practical limits with analytical challenges where AI excels at pattern recognition in geological or environmental data. Financial services increasingly deploy AI for routine analysis and risk assessment, but relationship management with high-net-worth clients and structured financing for complex transactions require human judgement and trust-building.

Creative industries present paradoxes. AI generates images, writes copy, and composes music, seemingly threatening creative workers most directly. Yet the cultural and economic value of creative work often derives from human authorship and unique perspective. Canadian film, television, music, and publishing industries could potentially resist commodification by emphasising distinctly Canadian voices and stories that AI-generated content struggles to replicate.

These opportunities exist but won’t materialise automatically. They require active industrial policy, targeted educational investments, and willingness to accept that some sectors will shrink whilst others grow. Canada’s historical reluctance to pursue aggressive industrial policy, combined with provincial jurisdiction over education and workforce development, makes coordinated national strategies politically difficult to implement.

Preparing for Entry-Level Displacement

The question of how labour markets should measure and prepare for entry-level displacement requires confronting uncomfortable truths about career progression and intergenerational equity.

The traditional model assumed entry-level positions served essential functions. They allowed workers to develop professional norms, build tacit knowledge, establish networks, and demonstrate capability before advancing to positions with greater responsibility.

AI is systematically destroying this model. When systems can perform entry-level analysis, customer service, coding, research, and administrative tasks as well as or better than recent graduates, the economic logic for hiring those graduates evaporates. Companies can hire experienced workers who already possess tacit knowledge and professional networks, augmenting their productivity with AI tools.

McKinsey research estimated that without generative AI, automation could take over tasks accounting for 21.5% of hours worked in the U.S. economy by 2030. With generative AI, that share jumped to 29.5%. Current generative AI and other technologies have the potential to automate work activities that absorb 60% to 70% of employees’ time today. The economic value unlocked could reach $2.9 trillion in the United States by 2030, according to McKinsey’s midpoint adoption scenario.

Up to 12 million occupational transitions may be needed in both Europe and the U.S. by 2030, driven primarily by technological advancement. Demand for STEM and healthcare professionals could grow significantly whilst office support, customer service, and production work roles may decline. McKinsey estimates demand for clerks could decrease by 1.6 million jobs, plus losses of 830,000 for retail salespersons, 710,000 for administrative assistants, and 630,000 for cashiers.

For Canadian labour markets, these projections suggest several measurement priorities. First, tracking entry-level hiring rates by sector, occupation, firm size, and geography to identify where displacement is occurring most rapidly. Second, monitoring the age distribution of new hires to detect whether companies are shifting toward experienced workers. Third, analysing job posting requirements to see whether entry-level positions are being redefined to require more experience. Fourth, surveying recent graduates to understand their employment outcomes and career prospects.
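
The first two priorities amount to a straightforward aggregation over hiring records. The sketch below shows one way to compute the entry-level share of new hires by sector and year and flag sectors where it is falling. The `Hire` record layout, the one-year cutoff for "entry-level", the five-point threshold, and the sample data are all hypothetical; a real implementation would draw on payroll or survey microdata.

```python
# Sketch: entry-level share of new hires by sector and year, flagging
# sectors where it is falling. Record layout, cutoff, threshold and sample
# data are hypothetical illustrations, not a real statistical method.

from collections import defaultdict
from typing import NamedTuple


class Hire(NamedTuple):
    year: int
    sector: str
    years_experience: float


ENTRY_LEVEL_MAX_YEARS = 1.0  # hypothetical definition of "entry-level"


def entry_level_share(hires: list[Hire]) -> dict[tuple[str, int], float]:
    """Fraction of new hires in each (sector, year) who are entry-level."""
    totals: dict[tuple[str, int], int] = defaultdict(int)
    entry: dict[tuple[str, int], int] = defaultdict(int)
    for h in hires:
        key = (h.sector, h.year)
        totals[key] += 1
        if h.years_experience < ENTRY_LEVEL_MAX_YEARS:
            entry[key] += 1
    return {key: entry[key] / n for key, n in totals.items()}


def falling_sectors(shares: dict[tuple[str, int], float], start: int,
                    end: int, threshold: float = 0.05) -> list[str]:
    """Sectors whose entry-level share fell by more than `threshold`."""
    sectors = {sector for sector, _ in shares}
    return sorted(
        s for s in sectors
        if (s, start) in shares and (s, end) in shares
        and shares[(s, start)] - shares[(s, end)] > threshold
    )


sample = [
    Hire(2022, "customer service", 0.0), Hire(2022, "customer service", 0.5),
    Hire(2022, "customer service", 4.0), Hire(2024, "customer service", 3.0),
    Hire(2024, "customer service", 6.0), Hire(2024, "customer service", 0.5),
    Hire(2022, "trades", 0.0), Hire(2024, "trades", 0.0),
]
shares = entry_level_share(sample)
print(falling_sectors(shares, 2022, 2024))  # prints ['customer service']
```

Tracking the same share by firm size or region only requires adding those fields to the record and to the grouping key.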

This creates profound questions for educational policy. If university degrees increasingly prepare students for jobs that won’t exist or will be filled by experienced workers, the value proposition of higher education deteriorates. Current student debt loads made sense when degrees provided reliable paths to professional employment. If those paths close, debt becomes less investment than burden.

Preparing for entry-level displacement means reconsidering how workers acquire initial professional experience. Apprenticeship models, co-op programmes, and structured internships may need expansion beyond traditional trades into professional services. Educational institutions may need to provide more initial professional socialisation and skill development before graduation.

Alternative pathways into professions may need development. Possibilities include mid-career programmes that combine intensive training with guaranteed placement, government-subsidised positions that allow workers to build experience, and reformed credentialing systems that recognise diverse paths to expertise.

The model exists in healthcare, where teaching hospitals employ residents and interns despite their limited productivity, understanding that medical expertise requires supervised practice. Similar logic could apply to other professions heavily affected by AI: teaching firms, demonstration projects, and publicly funded positions that allow workers to develop professional capabilities under supervision.

Educational institutions must prepare students with capabilities AI struggles to match: complex problem-solving under ambiguity, cross-disciplinary synthesis, ethical reasoning in novel situations, and relationship-building across cultural contexts. This requires fundamental curriculum reform, moving away from content delivery toward capability development, a transformation that tends to be implemented slowly.

The Uncomfortable Arithmetic

Underlying all these discussions is an arithmetic that policymakers rarely state plainly: if AI can perform tasks at $1 per interaction that previously cost $8 to $15 via human labour, the economic pressure to automate is effectively irresistible in competitive markets. A firm that refuses to automate whilst competitors embrace it will find itself unable to match their pricing, productivity, or margins.
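
Partial automation is enough to open that pricing gap. A minimal sketch comparing the average cost per interaction for a firm that does not automate with a hypothetical competitor that routes 70% of interactions to AI, using the $8 low end of the human range and $1 per AI resolution:

```python
# Sketch: blended cost per interaction with partial automation.
# Uses the low end of the quoted human cost ($8) and $1 per AI resolution;
# the competitor's 70% automation share is a hypothetical assumption.

HUMAN_COST = 8.0  # USD, low end of the quoted $8 to $15 range
AI_COST = 1.0     # USD per AI-resolved interaction


def blended_cost(ai_share: float, human_cost: float = HUMAN_COST,
                 ai_cost: float = AI_COST) -> float:
    """Average cost per interaction when `ai_share` is handled by AI."""
    return ai_share * ai_cost + (1.0 - ai_share) * human_cost


holdout = blended_cost(0.0)     # firm that refuses to automate
competitor = blended_cost(0.7)  # hypothetical competitor automating 70%
print(f"holdout ${holdout:.2f} vs competitor ${competitor:.2f} "
      f"({holdout / competitor:.1f}x)")
```

Even at the cheapest quoted human cost, and with 30% of interactions still handled by people, the competitor’s average cost ($3.10) comes out roughly 2.6 times lower than the holdout’s ($8.00).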

Government policy can delay this dynamic but not indefinitely prevent it. Subsidies can offset cost disadvantages temporarily. Regulations can slow deployment. But unless policy fundamentally alters the economic logic, the outcome is determined by the cost differential.

This is why focusing solely on retraining, whilst politically attractive, is strategically insufficient. Even perfectly trained workers can’t compete with systems that perform equivalent work at a fraction of the cost. The question isn’t whether workers have appropriate skills but whether the market values human labour at all for particular tasks.

The honest policy conversation would acknowledge this and address it directly. If large categories of human labour become economically uncompetitive with AI systems, societies face choices about how to distribute the gains from automation and support workers whose labour is no longer valued. This might involve shorter work weeks, stronger social insurance, public employment guarantees, or reforms to how income and wealth are taxed and distributed.

Canada’s policy discourse has not reached this level of candour. Official statements emphasise opportunity and transformation rather than displacement and insecurity. Budget allocations prioritise AI development over worker protection. Measurement systems track potential exposure rather than actual harm. The political system remains committed to the fiction that market economies with modest social insurance can manage technological disruption of this scale without fundamental reforms.

This creates a gap between policy and reality. Workers experiencing displacement understand what’s happening to them. They see entry-level positions disappearing, advancement opportunities closing, and promises of retraining ringing hollow when programmes prepare them for jobs that also face automation. The disconnect between official optimism and lived experience breeds cynicism about government competence and receptivity to political movements promising more radical change.

An Honest Assessment

Canada faces AI-driven reshoring pressure that will intensify over the next decade. American policy, combining domestic content requirements with aggressive AI deployment, will pull high-value service work back to the United States whilst using automation to limit the number of workers required. Canadian service workers, particularly in customer-facing roles, back-office functions, and knowledge work occupations, will experience significant displacement.

Current Canadian policy responses are inadequate in scope, poorly targeted, and insufficiently funded. Tax incentives provide uncertain benefits. Procurement reforms face implementation challenges. Retraining programmes assume labour demand that may not materialise. Measurement systems track potential rather than actual impacts. Creating added-value roles requires industrial policy capabilities that Canadian governments have largely abandoned.

The policy levers available can marginally improve outcomes but won’t prevent significant disruption. More aggressive interventions face political and administrative obstacles that make implementation unlikely in the near term.

Entry-level displacement is already underway and will accelerate. Traditional career progression pathways are breaking down. Educational institutions have not adapted to prepare students for labour markets where entry-level positions are scarce. Alternative mechanisms for acquiring professional experience remain underdeveloped.

The fundamental challenge is that AI changes the economic logic of labour markets in ways that conventional policy tools can’t adequately address. When technology can perform work at a fraction of human cost, neither training workers nor subsidising their employment provides sustainable solutions. The gains from automation accrue primarily to technology owners and firms whilst costs concentrate amongst displaced workers and communities.

Addressing this requires interventions beyond traditional labour market policy: reforms to how technology gains are distributed, strengthened social insurance, new models of work and income, and willingness to regulate markets to achieve social objectives even when this reduces economic efficiency by narrow measures.

Canadian policymakers have not demonstrated appetite for such reforms. The political coalition required has not formed. The public discourse remains focused on opportunity rather than displacement, innovation rather than disruption, adaptation rather than protection.

This may change as displacement becomes more visible and generates political pressure that can’t be ignored. But policy developed in crisis typically proves more expensive, less effective, and more contentious than policy developed with foresight. The window for proactive intervention is closing. Once reshoring is complete, jobs are eliminated, and workers are displaced, the costs of reversal become prohibitive.

The great service job reversal is not a future possibility. It’s a present reality, measurable in employment data, visible in job postings, experienced by early-career workers, and driving legislative responses in the United States. Canada can choose to respond with commensurate urgency and resources, or it can maintain current approaches and accept the consequences. But it cannot pretend the choice doesn’t exist.

References & Sources

- 59 AI Job Statistics: Future of U.S. Jobs | National University

- Reshoring Initiative 2024 Annual Report: 244,000 U.S. Manufacturing Jobs Were Announced in 2024 via Reshoring, FDI

- Reshoring manufacturing to the US: The role of AI, automation and digital labor | IBM

- 2026 Manufacturing Industry Outlook | Deloitte Insights

- Canada | The Essex AI Policy Observatory for the World of Work | University of Essex

- Harnessing Generative AI: Navigating the Transformative Impact on Canada’s Labour Market | IRPP

- Budget 2024 aims to upskill Canadian workers affected by AI | Canadian HR Reporter

- The Keep Call Centers in America Act: A Turning Point?

- US Reshoring Bill Sparks Debate on Future of Offshore Call Centers

- Gartner Predicts Conversational AI Will Reduce Contact Center Agent Labor Costs by $80 Billion in 2026

- AI in Customer Service | IBM

- AI is not just ending entry-level jobs. It’s the end of the career ladder as we know it | CNBC

- First-of-its-kind Stanford study says AI is starting to have a ‘significant and disproportionate impact’ on entry-level workers in the U.S. | Fortune

- Who’s Losing Jobs to AI? New Stanford Analysis Breaks It Down | TIME

- Yes, AI is affecting employment. Here’s the data. | ADP Research

- New study sheds light on what kinds of workers are losing jobs to AI | CBS News

- The SignalFire State of Tech Talent Report – 2025

- Sorry, grads: Entry-level tech jobs are getting wiped out | SF Standard

- Exposure to artificial intelligence in Canadian jobs: Experimental estimates | Statistics Canada

- Experimental Estimates of Potential Artificial Intelligence Occupational Exposure in Canada | Statistics Canada

- What’s in #Budget2024 for Canadian tech? | BetaKit

- Deputy Prime Minister announces action to protect and create good-paying jobs for Canadian workers | Canada.ca

- Federal Budget 2024: AI Update | McCarthy Tétrault

- Canadian Sovereign AI Compute Strategy | Innovation, Science and Economic Development Canada

- Federal government outlines $2 billion in AI compute spending commitment | BetaKit

- The state of AI in 2025: Agents, innovation, and transformation | McKinsey

- Generative AI and the future of work in America | McKinsey

- The economic potential of generative AI: The next productivity frontier | McKinsey

- Keep Call Centers in America Act of 2025 | Senator Gallego

- Gallego, Justice Introduce Bipartisan Bill to Protect Americans’ Access to Quality Customer Service | Senator Ruben Gallego

- Can retraining programs ease the fear of AI job loss? | Northeastern University

- Jobs Lost to Automation Statistics in 2024 | TeamStage

- Jobs lost, jobs gained: What the future of work will mean for jobs, skills, and wages | McKinsey

- AI Voice Agents: 2025 Update | Andreessen Horowitz

- Voice AI Technology: Cutting Call Center Costs by 60% in 2025 | SideTool

- Reshoring Initiative 2024 Annual Report, Plus 1Q2025 | Reshoring Initiative

- Reshoring Reality: What’s Fueling the Manufacturing Revival? | Camoin Associates

- AI Job Displacement 2025: Which Jobs Are At Risk? | Final Round AI

- 73 AI Job Replacement Statistics (2025 Reports & Data) | DemandSage

- The Future of Jobs Report 2025 | World Economic Forum

- Artificial Intelligence 2025 – Canada | Chambers and Partners

Tim Green

UK-based Systems Theorist & Independent Technology Writer

Tim explores the intersections of artificial intelligence, decentralised cognition, and posthuman ethics. His work, published at smarterarticles.co.uk, challenges dominant narratives of technological progress while proposing interdisciplinary frameworks for collective intelligence and digital stewardship.

His writing has been featured on Ground News and shared by independent researchers across both academic and technological communities.

ORCID: 0009-0002-0156-9795

Email: tim@smarterarticles.co.uk