This blog post was brought to you by Aykut Bulgu, draft.dev.

When a Jenkins installation starts to feel slow, the first symptom is usually the queue. Builds sit longer than they should, feedback takes too long to reach developers, and the CI system starts demanding more attention from the platform team than anyone wants to give it.

That pattern is familiar to teams that adopted Jenkins early and then kept expanding it. Jenkins can scale, but at larger sizes it often requires careful controller sizing, plugin management, and, in many organizations, multiple controllers to spread the load. That works, but it also adds operational overhead.

For DevOps engineers and architects, that overhead matters. CI/CD is part of the delivery path, and when the platform becomes harder to maintain, engineering teams feel it quickly.

In this article, we’ll look at the scaling challenges teams commonly run into with Jenkins and how TeamCity’s server–agent architecture helps reduce that operational burden while supporting growth from a few pipelines to hundreds.

The scaling challenges of Jenkins

At a high level, Jenkins uses a controller–agent model. A central controller manages configuration, scheduling, and coordination, while agents run the actual builds. TeamCity also uses a central server with build agents, so the high-level pattern is similar. The difference shows up in how the two systems are typically operated and extended at scale.

Running Jenkins on Kubernetes can improve agent provisioning and make burst capacity easier to manage, but it does not remove the need to manage controller load, plugin compatibility, and governance across the system.

Controllers can become bottlenecks

As more teams, repositories, and pipelines are added, the Jenkins controller takes on more work:

- Managing job and pipeline configuration

- Scheduling builds and coordinating agents

- Serving the UI and handling API requests

- Maintaining plugin state and runtime behavior

Under heavier load, the controller can become a bottleneck. Jenkins documentation and ecosystem guidance often point larger organizations toward multi-controller strategies to distribute load. That can be effective, but it introduces additional work around governance, version alignment, and visibility across teams.

Horizontal scaling is not just a matter of adding agents

Adding more Jenkins agents improves execution capacity, but it does not solve controller-side coordination and configuration challenges. As teams grow, they often end up dealing with:

- Different plugin versions across controllers

- Inconsistent job definitions and conventions

- Repeated work to manage credentials, shared libraries, and policy enforcement

At that point, scaling Jenkins often means operating a group of controllers, maintaining shared libraries, and building internal processes to keep everything consistent.
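To make the first item concrete, here is a minimal Python sketch that flags plugins whose installed version differs between controllers. It assumes you have already collected each controller's plugins into a simple name-to-version mapping. The controller names, plugin names, and versions are hypothetical placeholders, and `find_version_drift` is an illustrative helper, not a Jenkins API.

```python
from collections import defaultdict

# Hypothetical inventories: controller name -> {plugin name -> installed version}.
# All names and versions are placeholders for illustration only.
EXAMPLE_INVENTORIES: dict[str, dict[str, str]] = {
    "controller-a": {"scm-core": "5.2.0", "pipeline-ui": "2.1", "ldap-auth": "1.0"},
    "controller-b": {"scm-core": "5.2.0", "pipeline-ui": "2.3"},
    "controller-c": {"scm-core": "5.0.0", "pipeline-ui": "2.3"},
}


def find_version_drift(
    inventories: dict[str, dict[str, str]],
) -> dict[str, dict[str, list[str]]]:
    """Return plugins installed at more than one version across controllers.

    The result maps plugin name -> {version -> controllers running it}.
    A plugin present on only some controllers is not reported unless
    its installed versions also differ.
    """
    seen = defaultdict(lambda: defaultdict(list))
    # Iterate controllers in sorted order so the output lists are stable.
    for controller in sorted(inventories):
        for plugin, version in inventories[controller].items():
            seen[plugin][version].append(controller)
    return {
        plugin: dict(by_version)
        for plugin, by_version in sorted(seen.items())
        if len(by_version) > 1
    }


if __name__ == "__main__":
    for plugin, versions in find_version_drift(EXAMPLE_INVENTORIES).items():
        print(plugin, versions)
```

In this example, `ldap-auth` is not flagged because it is installed on only one controller; whether missing plugins should also count as drift is a policy choice the function deliberately leaves out.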

Plugin dependency adds operational risk

A large part of Jenkins’s flexibility comes from its plugin ecosystem. That is one of its strengths, but it also creates operational tradeoffs at scale. Plugin-heavy environments can:

- Create upgrade chains where one plugin update affects others

- Add performance or memory overhead on the controller

- Make troubleshooting harder because behavior is distributed across plugin-specific logs and extension points

In many Jenkins environments, the platform team ends up spending significant time validating plugin updates, checking compatibility, and troubleshooting interactions between components.
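The upgrade-chain problem is essentially a graph question: which plugins sit downstream of the one you are about to update? The sketch below walks a dependency graph breadth-first to answer it. The graph is a hand-written, hypothetical example (real edges would come from each plugin's declared dependencies), and `affected_by_update` is an illustrative helper, not a Jenkins tool.

```python
from collections import deque

# Hypothetical dependency graph: plugin -> plugins it depends on.
# The names are invented; real plugin relationships will differ.
DEPENDS_ON: dict[str, list[str]] = {
    "scm-core": [],
    "auth-store": [],
    "repo-connector": ["scm-core", "auth-store"],
    "pr-integration": ["repo-connector", "scm-core"],
    "pipeline-ui": ["scm-core"],
    "report-dashboard": ["pipeline-ui"],
}


def affected_by_update(plugin: str, depends_on: dict[str, list[str]]) -> set[str]:
    """Return every plugin that depends on `plugin`, directly or transitively."""
    # Invert the edges so we can walk from a plugin to its dependents.
    dependents: dict[str, list[str]] = {}
    for name, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, []).append(name)

    affected: set[str] = set()
    queue = deque([plugin])
    while queue:  # breadth-first walk over the inverted graph
        current = queue.popleft()
        for dependent in dependents.get(current, []):
            if dependent not in affected:
                affected.add(dependent)
                queue.append(dependent)
    return affected
```

Even in this six-plugin toy graph, updating `scm-core` puts four other plugins in scope for validation, which is the kind of fan-out that makes upgrades in plugin-heavy environments slow to verify.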

TeamCity’s server–agent architecture

TeamCity follows the same broad server–agent pattern, but the platform is designed to keep configuration centralized on the server while letting execution scale outward through agents.

The TeamCity server handles orchestration. It stores configuration, build history, and artifact metadata, manages queues and dependencies, and provides the UI and REST API. For production use, TeamCity supports external databases, which is an important part of scaling larger installations.

Build agents handle execution. They check out source code, run build steps and tests, publish artifacts and reports, and send results back to the server.

Agents are separate pieces of software installed on physical or virtual machines. Each agent opens and maintains its own connection to the server and receives build assignments over it, which simplifies deployment in environments where inbound connections to build machines are restricted.

That separation matters in practice. Agents can be added horizontally, including in cloud environments, while the platform retains centralized configuration and visibility.
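The sketch below is a toy model of the agent-initiated direction described above, assuming one build per agent and a simple first-in, first-out queue. `ToyServer`, `request_work`, and `run_polling_round` are hypothetical names; TeamCity's real agent protocol, scheduling, and compatibility checks are considerably more involved.

```python
from collections import deque
from typing import Optional


class ToyServer:
    """Holds the build queue and only responds to requests made by agents."""

    def __init__(self) -> None:
        self.queue: deque = deque()
        self.assignments: dict[str, str] = {}  # build -> agent that took it

    def enqueue(self, build: str) -> None:
        self.queue.append(build)

    def request_work(self, agent: str) -> Optional[str]:
        # Invoked by an agent over a connection the agent opened,
        # so the server never needs a network route to the agent machine.
        if not self.queue:
            return None
        build = self.queue.popleft()
        self.assignments[build] = agent
        return build


def run_polling_round(server: ToyServer, agents: list[str]) -> list[tuple[str, str]]:
    """Let each agent poll once; return the (agent, build) pairs handed out."""
    handed_out = []
    for agent in agents:
        build = server.request_work(agent)
        if build is not None:
            handed_out.append((agent, build))
    return handed_out


def demo() -> list[list[tuple[str, str]]]:
    server = ToyServer()
    for build in ["b1", "b2", "b3", "b4", "b5"]:
        server.enqueue(build)
    return [
        run_polling_round(server, ["agent-1", "agent-2"]),
        # A third agent joins; nothing on the server side changes in this model.
        run_polling_round(server, ["agent-1", "agent-2", "agent-3"]),
    ]
```

The point of the model is the direction of the calls: adding capacity means starting another agent that calls the server, not reconfiguring the server to reach a new machine.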

Built-in scalability features in TeamCity

Beyond the core server–agent model, TeamCity includes features that help teams scale without continually redesigning the CI system.

Elastic agents and cloud integrations

TeamCity supports agents on both physical and cloud-hosted machines and can start cloud agents on demand through built-in cloud integrations and officially supported plugins. That makes it easier to handle temporary spikes in demand without permanently increasing capacity.

Consider a team that usually runs on ten on-premises agents and keeps build times predictable during a normal week. After a large batch of pull requests is merged, the queue grows sharply. With cloud profiles configured, TeamCity can start temporary cloud agents, drain the queue during the spike, and then shut that temporary capacity down when demand drops.

From the developer’s perspective, the important result is consistency: feedback remains reasonably fast even when build volume changes.
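A simplified way to reason about that scenario is to ask how many extra agents the current queue justifies, capped by a limit on cloud instances. The function below is a hypothetical sizing rule assuming one build per agent; it is not TeamCity's scheduler, whose actual behavior is governed by cloud profile settings such as instance limits.

```python
def cloud_agents_to_start(queued: int, idle_agents: int,
                          running_cloud: int, max_cloud: int) -> int:
    """Hypothetical sizing rule: one queued build per agent, capped by a limit.

    queued:        builds waiting for an agent
    idle_agents:   agents (on-premises or cloud) that are free right now
    running_cloud: cloud agents already started, busy or idle
    max_cloud:     upper bound on simultaneously running cloud agents
    """
    if min(queued, idle_agents, running_cloud, max_cloud) < 0:
        raise ValueError("counts must be non-negative")
    uncovered = max(0, queued - idle_agents)      # builds no free agent can take
    headroom = max(0, max_cloud - running_cloud)  # how many more may be started
    return min(uncovered, headroom)


# Illustrative numbers only: all ten on-premises agents are busy, 35 builds
# are queued, no cloud agents are running yet, and the cap is 20.
print(cloud_agents_to_start(queued=35, idle_agents=0, running_cloud=0, max_cloud=20))  # prints 20
```

Once the queue drains, the same inputs return 0 and idle cloud agents become candidates for shutdown; in TeamCity that step is typically handled by an idle-time setting on the cloud profile rather than by custom code.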

Visual build chains instead of hand-assembled pipeline logic

TeamCity’s build chains let you define sequences and graphs of builds connected through snapshot and artifact dependencies. This makes it easier to model pipelines where related parts of the workflow share a consistent VCS snapshot.

Build chains can model workflows such as build → test → package → deploy, run dependent builds in parallel when possible, and reuse artifacts to avoid redundant work. Because build chains are a core concept in TeamCity, teams can model complex flows without stitching together multiple extensions to get dependency visibility.

Jenkins pipelines do support multi-stage workflows natively through Jenkinsfile, but in larger installations teams often combine pipelines with shared libraries, controller-specific conventions, and additional plugins for orchestration, visibility, or environment handling. TeamCity’s approach is more opinionated and more centralized.

Take a product made up of a shared library, a backend API, and a frontend SPA. In TeamCity, you can define a build chain where the shared library build runs first, then fans out into backend and frontend builds, and finally feeds a packaging or deployment build that depends on both.

That dependency graph is visible in the UI and managed as part of the platform rather than assembled from several separate pieces.
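Under the hood, a chain like this is a directed acyclic graph, and the order in which builds can start falls out of a topological sort. The sketch below uses Python's standard `graphlib` module (Python 3.9+) to group the example chain into stages whose builds could run in parallel. The build IDs are hypothetical, and the code only illustrates the dependency logic; in TeamCity the graph is declared through snapshot dependencies and the server does the scheduling.

```python
from graphlib import TopologicalSorter

# Hypothetical chain: each build maps to the builds it depends on.
CHAIN: dict[str, set[str]] = {
    "shared-lib": set(),
    "backend-api": {"shared-lib"},
    "frontend-spa": {"shared-lib"},
    "package": {"backend-api", "frontend-spa"},
}


def execution_stages(chain: dict[str, set[str]]) -> list[list[str]]:
    """Group builds into stages; builds within a stage have no mutual dependencies."""
    sorter = TopologicalSorter(chain)
    sorter.prepare()  # raises graphlib.CycleError if the chain has a cycle
    stages = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # every dependency already finished
        stages.append(ready)
        sorter.done(*ready)
    return stages
```

For the example chain this yields three stages: the shared library alone, then the backend and frontend side by side, then packaging.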

Intelligent agent selection

TeamCity matches builds to agents based on requirements and capabilities. That helps with resource use and reduces manual scheduling overhead as environments become more specialized.

For example, an organization might have:

- Linux agents with Docker and Java 21 for backend services

- Windows agents with .NET SDKs for legacy applications

- macOS agents with Xcode for mobile builds

Each build configuration can declare what it needs: operating system, installed toolchains, specific agent parameter values such as docker.server.osType = linux, or minimum version requirements.

When a build is queued, TeamCity routes it to an agent that satisfies those requirements. That keeps scheduling rules in configuration instead of leaving them in tribal knowledge or local conventions.
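The matching rule can be modeled as filtering for agents whose reported parameters satisfy every requirement a build declares. In the sketch below, docker.server.osType is the parameter mentioned above; the other parameter names, the agent names, and the `equals`/`exists` helpers are hypothetical stand-ins loosely modeled on TeamCity's requirement conditions, not its implementation.

```python
from typing import Callable

# A requirement is a predicate over an agent's reported parameters.
Requirement = Callable[[dict[str, str]], bool]


def equals(name: str, value: str) -> Requirement:
    """Parameter must be present and exactly equal to `value`."""
    return lambda params: params.get(name) == value


def exists(name: str) -> Requirement:
    """Parameter must be present, whatever its value."""
    return lambda params: name in params


# Hypothetical agent pool mirroring the example list above.
AGENTS: dict[str, dict[str, str]] = {
    "linux-01": {"os.family": "linux", "docker.server.osType": "linux", "java.major": "21"},
    "linux-02": {"os.family": "linux", "java.major": "17"},
    "win-01": {"os.family": "windows", "dotnet.sdk": "8.0"},
    "mac-01": {"os.family": "macos", "xcode.version": "15.4"},
}


def compatible_agents(requirements: list[Requirement],
                      agents: dict[str, dict[str, str]]) -> list[str]:
    """Names of agents that satisfy every requirement, sorted for stable output."""
    return sorted(
        name for name, params in agents.items()
        if all(req(params) for req in requirements)
    )


# Hypothetical requirements for a backend build and a mobile build.
BACKEND = [equals("docker.server.osType", "linux"), equals("java.major", "21")]
MOBILE = [exists("xcode.version")]
```

Here the backend build can only go to linux-01, because linux-02 lacks Docker and Java 21. A build that no agent satisfies simply has no candidates in this model, a mismatch that explicit requirements surface instead of leaving to chance.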

Reliability and maintainability advantages

Scaling is not only about throughput. It is also about how much effort it takes to keep the platform stable as the number of projects grows.

Fewer moving parts

TeamCity includes first-class support for many common workflows, so teams often rely less on third-party extensions for core CI/CD behavior. Features such as test reporting, parallel test execution support, flaky test detection, and visual dependency management are part of the product. That generally leads to more predictable upgrades and fewer surprises caused by extension interactions.

Centralized configuration

In Jenkins environments with multiple controllers, teams often duplicate configuration patterns, credentials management, and job conventions across instances. In TeamCity, projects, templates, and build configurations live under a single server or a smaller number of servers, which makes it easier to standardize quality gates, permissions, and reusable settings across teams.

That centralization makes governance easier to implement consistently.
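One way to picture this is as layered settings: defaults defined once at a high level are inherited, and only a more specific level overrides them. The sketch below uses Python's `collections.ChainMap` to show that lookup order. The parameter names and values are hypothetical, and the model loosely mirrors, rather than reproduces, how TeamCity resolves parameters through projects, templates, and build configurations.

```python
from collections import ChainMap

# Hypothetical settings at three levels, from most general to most specific.
ROOT_PROJECT = {"quality.coverage.min": "80", "vcs.branch.default": "main"}
PAYMENTS_PROJECT = {"quality.coverage.min": "85"}
PAYMENTS_API_BUILD = {"build.tool": "gradle"}


def effective_settings(*levels: dict[str, str]) -> dict[str, str]:
    """Merge levels given from general to specific; later levels win."""
    # ChainMap searches its maps left to right, so the most specific goes first.
    return dict(ChainMap(*reversed(levels)))
```

Changing a root-level value in this model updates the effective setting for every project that does not override it, which is the governance property described above.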

Simplified upgrades and lower downtime risk

A plugin-heavy Jenkins environment can turn upgrades into a lengthy validation exercise. With TeamCity, teams are usually dealing with fewer critical third-party dependencies, a clearer upgrade path for the server and agents, and centralized control over versioning. Upgrades still require planning, but the operational surface area is typically smaller.

Real-world benefits for DevOps engineers and architects

In practice, this leads to several benefits:

- Lower operational overhead: Scaling is more often about adding or tuning agents, reviewing queue behavior, and standardizing configuration rather than adding more controllers and validating large plugin combinations.

- Better developer feedback loops: Visual build chains, parallel execution, and detailed reporting help teams understand failures faster and keep queue times more predictable.

- More manageable growth: As organizations add services, languages, and delivery targets, TeamCity gives platform teams a centralized way to grow CI/CD capacity without rebuilding governance from scratch.

Jenkins vs. TeamCity

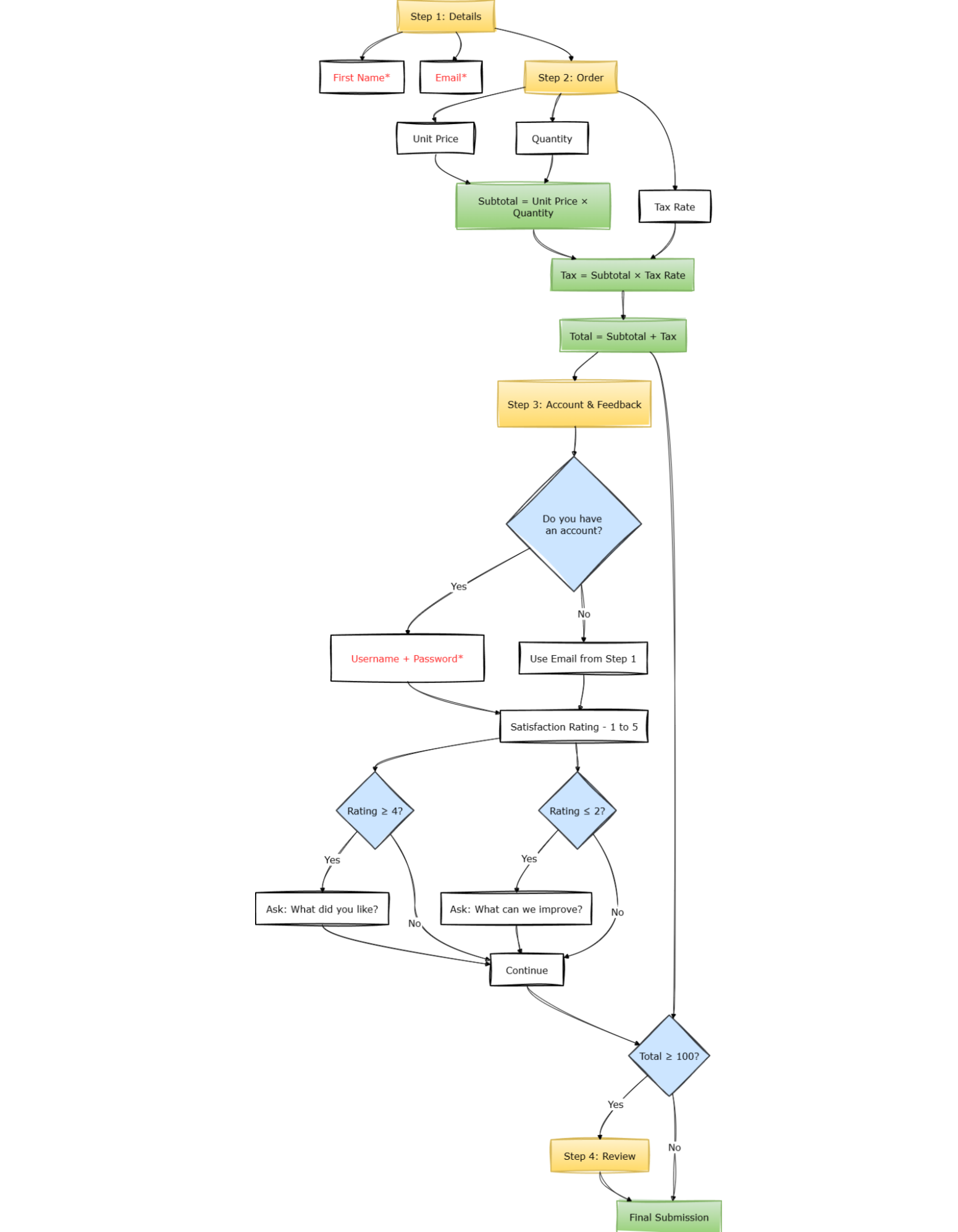

The following diagram provides a high-level comparison of how Jenkins and TeamCity are commonly operated at scale.

Here’s a summary of how the two architectures compare on the dimensions discussed in the article:

| Aspect | Jenkins | TeamCity | Why it matters |
|---|---|---|---|
| Core architecture | Controller–agent model; controller handles UI, scheduling, and extensions | Server–agent model; server handles orchestration and state while agents execute builds | Both use a central coordinator, but operational complexity differs at scale |
| Scaling strategy | Can scale, but larger installations often use multiple controllers and careful governance | Typically scales by adding agents and organizing projects centrally | Lower operational overhead makes growth easier to manage |
| Plugin dependence | Strong ecosystem; many installations rely on plugins and shared libraries for integrations and platform behavior | Many core capabilities are built in, reducing dependence on third-party extensions for central workflows | Fewer critical dependencies generally reduce upgrade and troubleshooting risk |
| Pipelines / orchestration | Jenkinsfile-based pipelines are native; larger setups often add shared libraries and plugins around them | Build chains, snapshot dependencies, and artifact dependencies are first-class concepts with visual support | Easier dependency visibility can simplify large delivery flows |
| Agent management | Dynamic agents are often implemented through plugins or external platform work | Supports physical and cloud agents, with built-in cloud integrations and supported plugins | Both can scale execution, but TeamCity centralizes more of the experience |
| Workload placement | Labels, node selection, and pipeline logic | Agent requirements and capabilities matched by the server | Better placement reduces environment mismatch issues |
| Maintainability at scale | Multi-controller environments and plugin coordination increase admin effort | Centralized server model and fewer critical external dependencies simplify administration | Lower maintenance burden improves platform stability over time |

Note: TeamCity’s on-premises edition is free for up to three build agents; scaling beyond that requires additional agent licenses, as described on the TeamCity on-premises pricing page. TeamCity Cloud uses a different, usage-based pricing model and does not have the same “three-agent” limit.

Conclusion

Jenkins remains a capable and widely used CI/CD platform, but at enterprise scale it often requires more architectural planning and more day-to-day coordination from the platform team. Controller load, plugin management, and multi-controller governance are all manageable, but they come with real operational cost.

TeamCity approaches the same problem with centralized orchestration, horizontally scalable agents, and more built-in support for dependency modeling, test visibility, and environment management. For teams that want to scale CI/CD without assembling as much of the platform themselves, that can be a meaningful advantage.

If your current Jenkins setup is already demanding controller workarounds, plugin validation cycles, and custom governance processes, it may be worth evaluating whether a more centralized platform would reduce that burden. TeamCity is designed to support that shift while keeping the developer experience consistent as the organization grows.