When I first started building websites, rounded corners required five background images (one for each corner, one for the body) and a prayer that the client wouldn’t ask for a different radius. Then the border-radius property landed, and the entire web collectively sighed with relief. That was over fifteen years ago, and honestly, we’ve been riding that same wave ever since. As we did back then, I hope we can treat the feature this article covers as a progressive enhancement that slowly makes its way to other browsers.

I like a good border-radius as much as the next guy, but the fact is that it only gives us one shape. Round. That’s it. Want beveled corners? Clip-path. Scooped ticket edges? SVG mask. Squircle app icons? A carefully tuned SVG that you hope nobody asks you to animate. We’ve been hacking around the limitations of border-radius for years, and those hacks come with real trade-offs: borders don’t follow clip-paths, shadows get cut off, and you end up with brittle code that breaks the moment someone changes a padding value.

Well, the new corner-shape property changes all of that.

What Is corner-shape?

The corner-shape property is a companion to border-radius. It doesn’t replace it; it modifies the shape of the curve that border-radius creates. Without border-radius, corner-shape does nothing. But together, they’re a powerful pair.

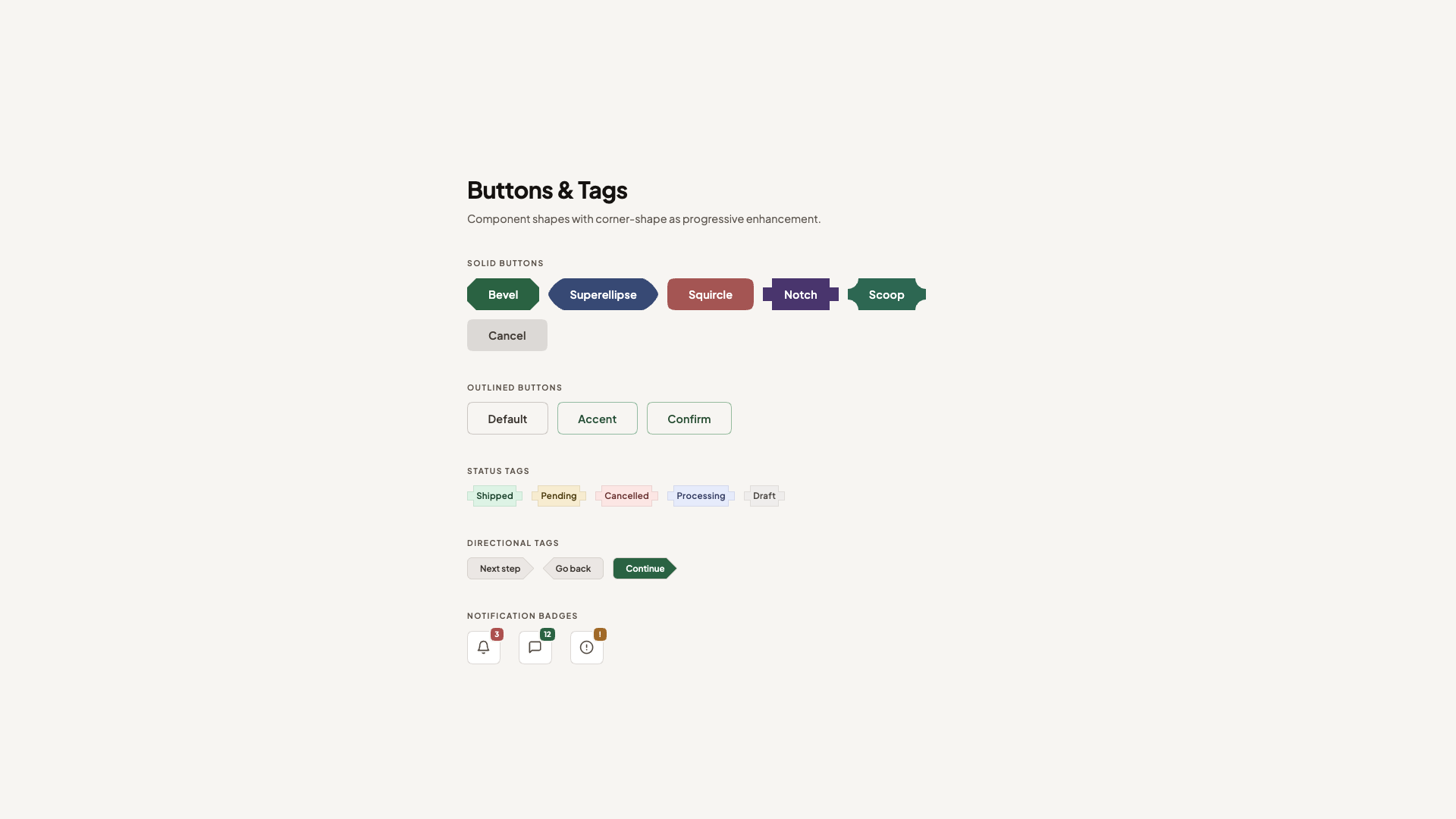

The property accepts these values:

- round: the default, same as regular border-radius
- squircle: a superellipse, the smooth Apple-style rounded square
- bevel: a straight line between the two radius endpoints (snipped corners)
- scoop: an inverted curve, creating concave corners
- notch: sharp inward cuts
- square: effectively removes the rounding, overriding border-radius

And you can set different values per corner, just like border-radius:

    corner-shape: bevel round scoop squircle;
    /* top-left, top-right, bottom-right, bottom-left */
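If the shorthand order is hard to remember, the same four corners can also be set one at a time. This is a minimal sketch, assuming the per-corner longhands (corner-top-left-shape and its siblings) that the spec defines alongside corner-shape. The .element selector and the 24px radius are placeholders, not values taken from the demos:

```css
/* Hypothetical selector and radius, for illustration only. */
.element {
  border-radius: 24px;

  /* Intended to match: corner-shape: bevel round scoop squircle; */
  corner-top-left-shape: bevel;
  corner-top-right-shape: round;
  corner-bottom-right-shape: scoop;
  corner-bottom-left-shape: squircle;
}
```

The longhands are mostly useful when a modifier class needs to change a single corner without restating the other three.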

You can also use the superellipse() function with a numeric parameter for fine-grained control.

    .element {
      border-radius: 25px;
      corner-shape: superellipse(0); /* equal to 'bevel' */
    }
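For reference, each keyword corresponds to a superellipse() value, which helps when you want something in between two keywords. The sketch below lists the equivalents as I understand them from the current draft of the spec. The class names are made up for illustration:

```css
/* Hypothetical utility classes; each declaration should match the keyword in its comment. */
.shape-demo { border-radius: 25px; }

.shape-square   { corner-shape: superellipse(infinity); }  /* square */
.shape-squircle { corner-shape: superellipse(2); }         /* squircle */
.shape-round    { corner-shape: superellipse(1); }         /* round (default) */
.shape-bevel    { corner-shape: superellipse(0); }         /* bevel */
.shape-scoop    { corner-shape: superellipse(-1); }        /* scoop */
.shape-notch    { corner-shape: superellipse(-infinity); } /* notch */

/* In-between values are allowed too, for example halfway between bevel and round. */
.shape-soft-bevel { corner-shape: superellipse(0.5); }
```

Positive values bulge outward, negative values curve inward, and zero is the straight line in the middle.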

So the question here might be: why not call this property “border-shape” instead? Well, first of all, that is something completely different that we’ll get to play around with soon. Second, it does apply to a bit more than borders, such as outlines, box shadows, and backgrounds. That’s the thing that the clip-path property could never do.

Why Progressive Enhancement Matters Here

At the time of writing (March 2026), corner-shape is only supported in Chrome 139+ and other Chromium-based browsers. That’s a significant chunk of users, but certainly not everyone. The temptation is to either ignore the property until it’s everywhere or to build demos that fall apart without it.

I don’t think either approach is right. The way I see it, corner-shape is the perfect candidate for progressive enhancement, just as border-radius was in the age of Internet Explorer 6. The baseline should use the techniques we already know, such as border-radius, clip-path, and radial-gradient masks, and look intentionally good. Then, for browsers that support corner-shape, we upgrade the experience. Sometimes this can be as simple as providing a more basic default; sometimes it might need a bit more.

Every demo in this article is built with that progressive enhancement idea in mind. The structure for the demos looks like this:

    @layer base, presentation, demo;

The presentation layer contains the full polished UI using proven techniques. The demo layer wraps everything in @supports:

    @layer demo {
      @supports (corner-shape: bevel) {
        /* upgrade styles here */
      }
    }

No fallback banners, no “your browser doesn’t support this” messages. Just two tiers of design: good and better. I thought it could be nice just to show some examples. There are a few out there already, but I hope I can add a bit of extra inspiration on top of those.

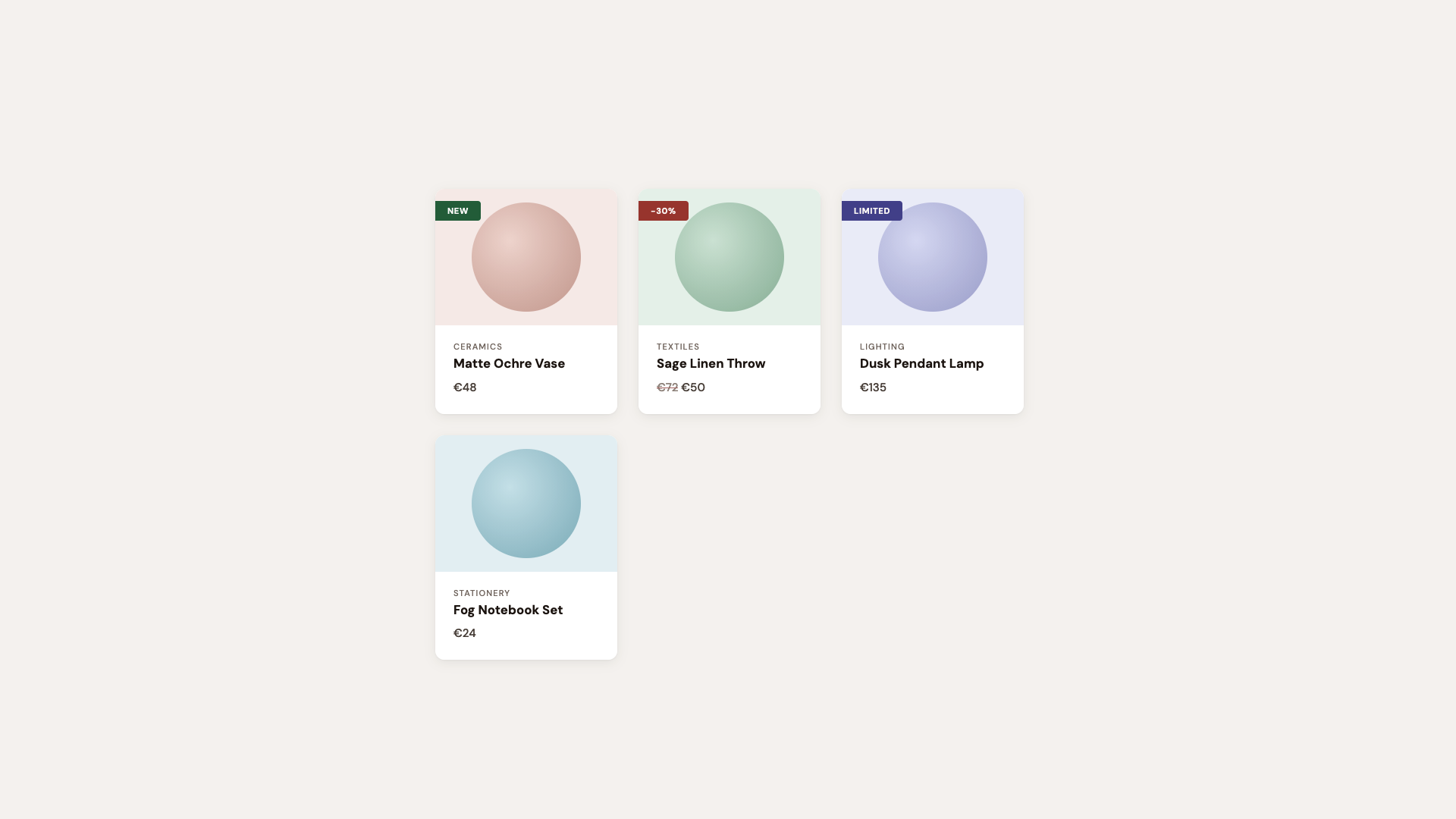

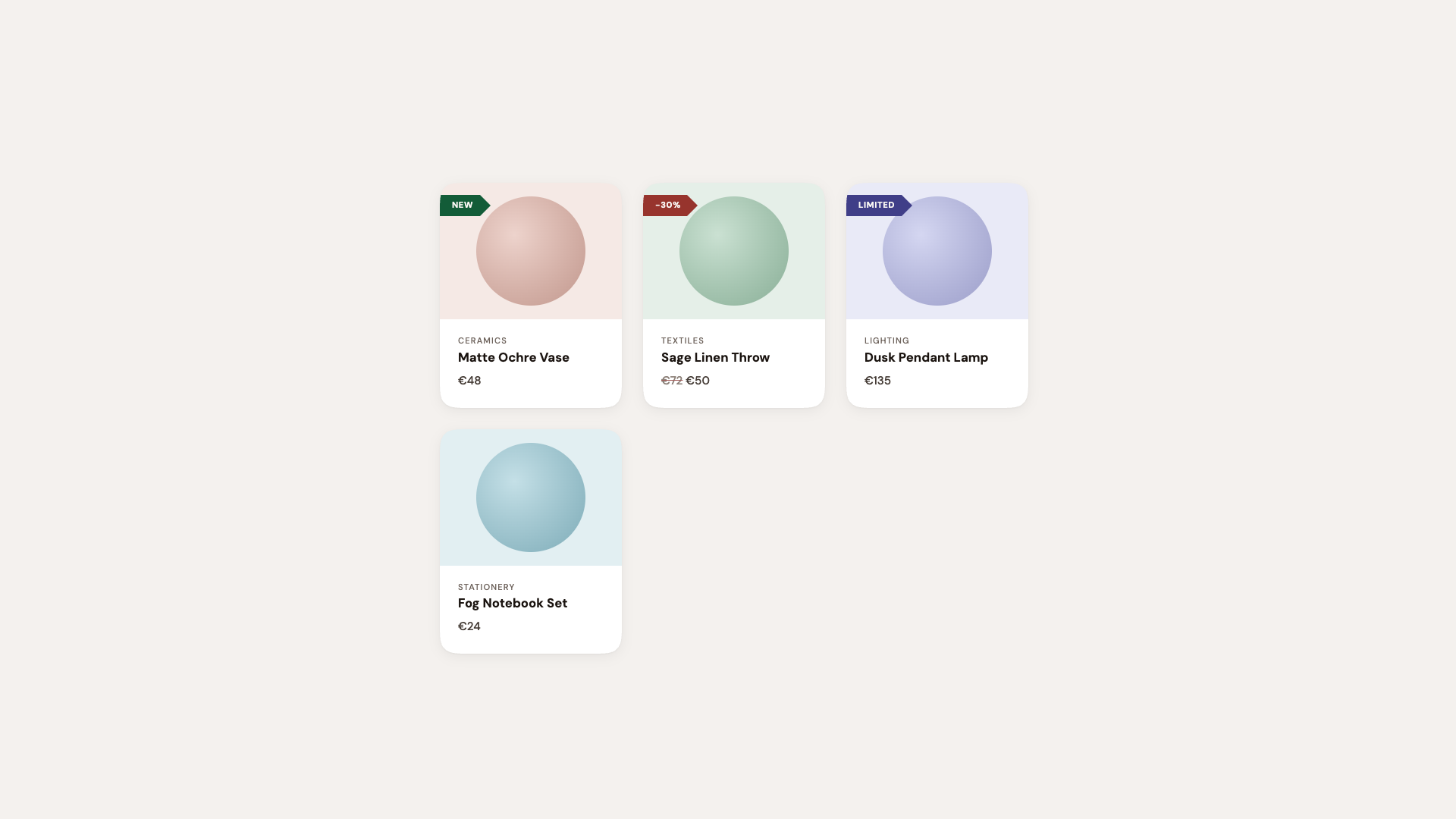

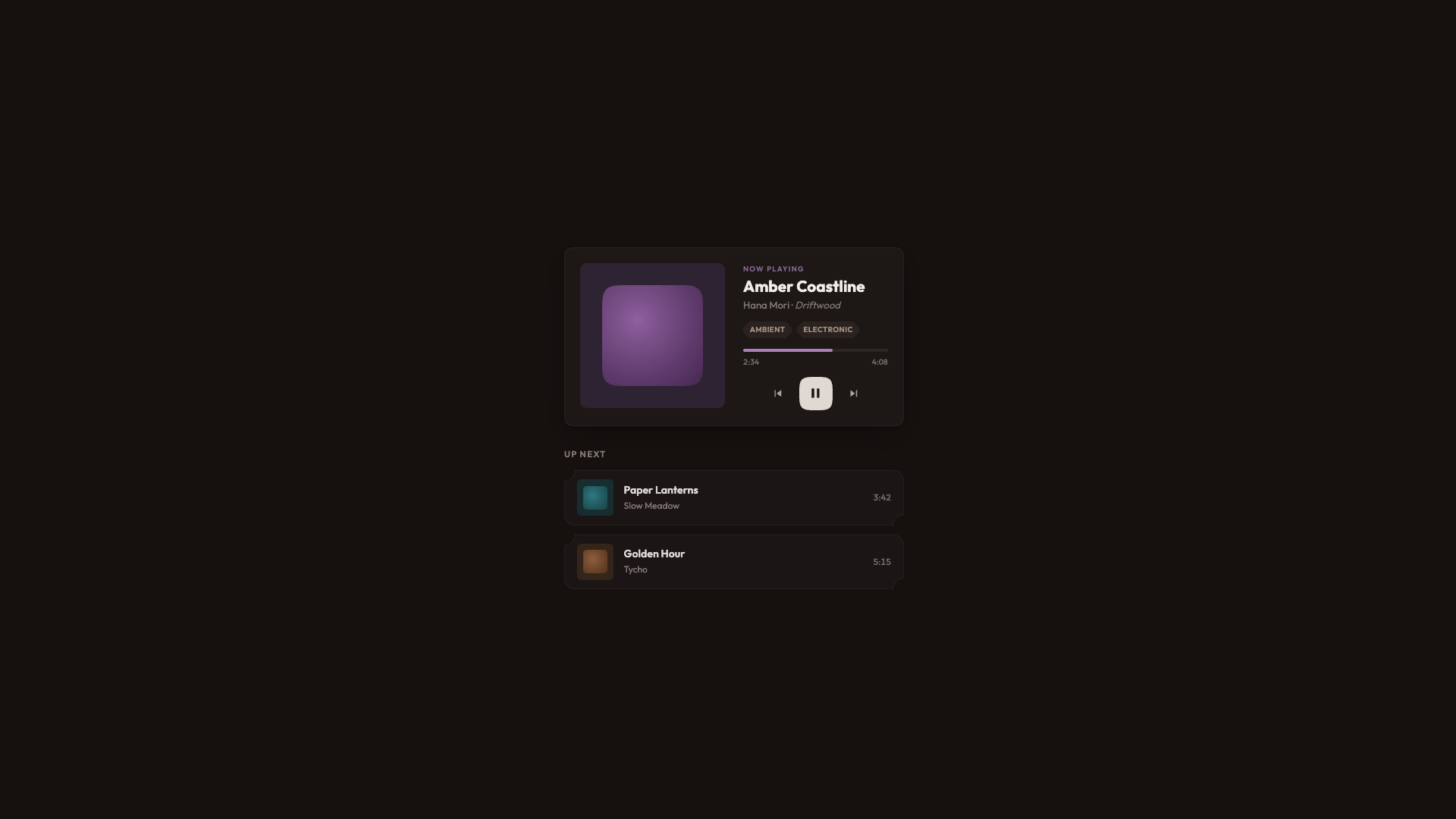

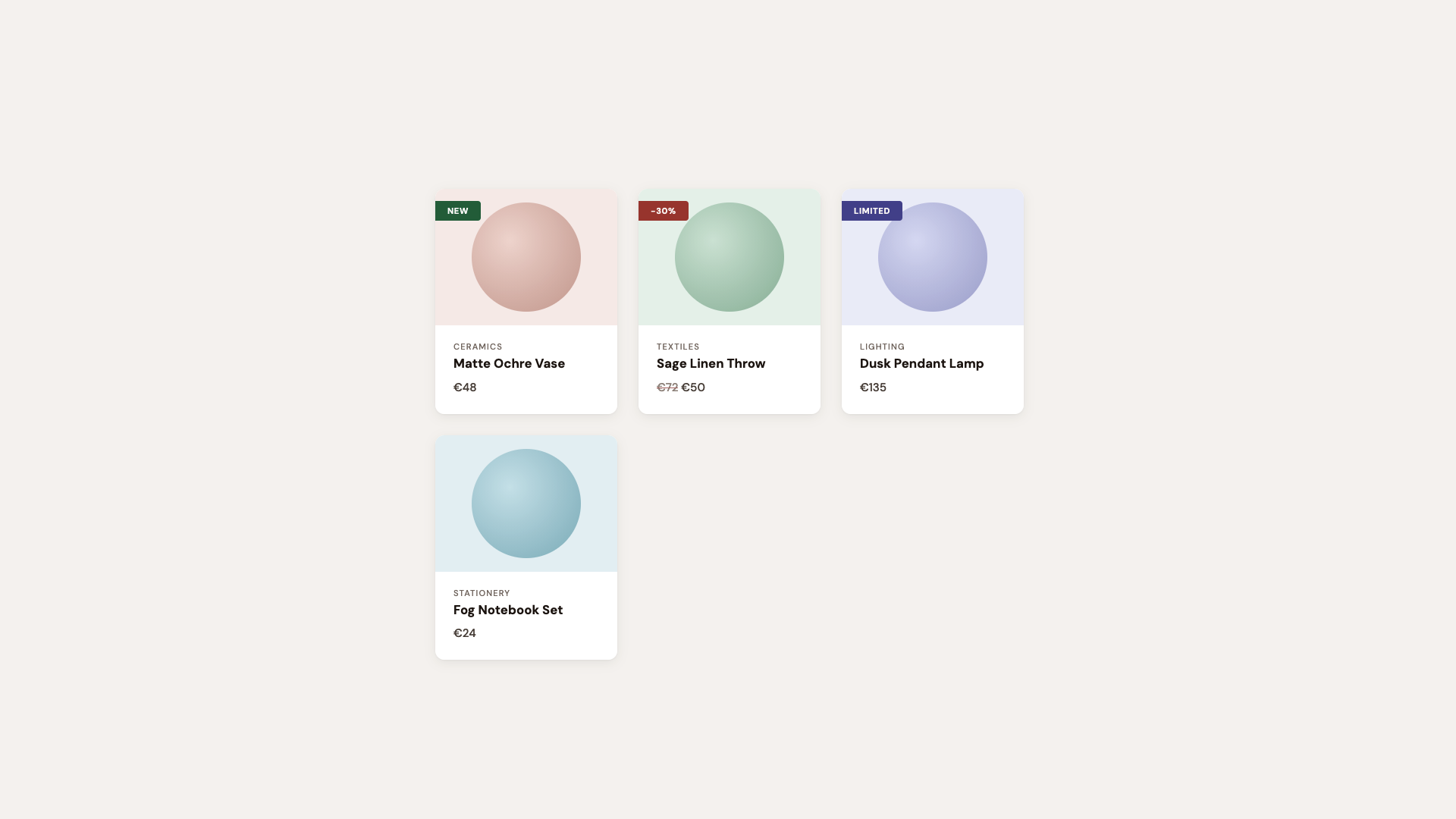

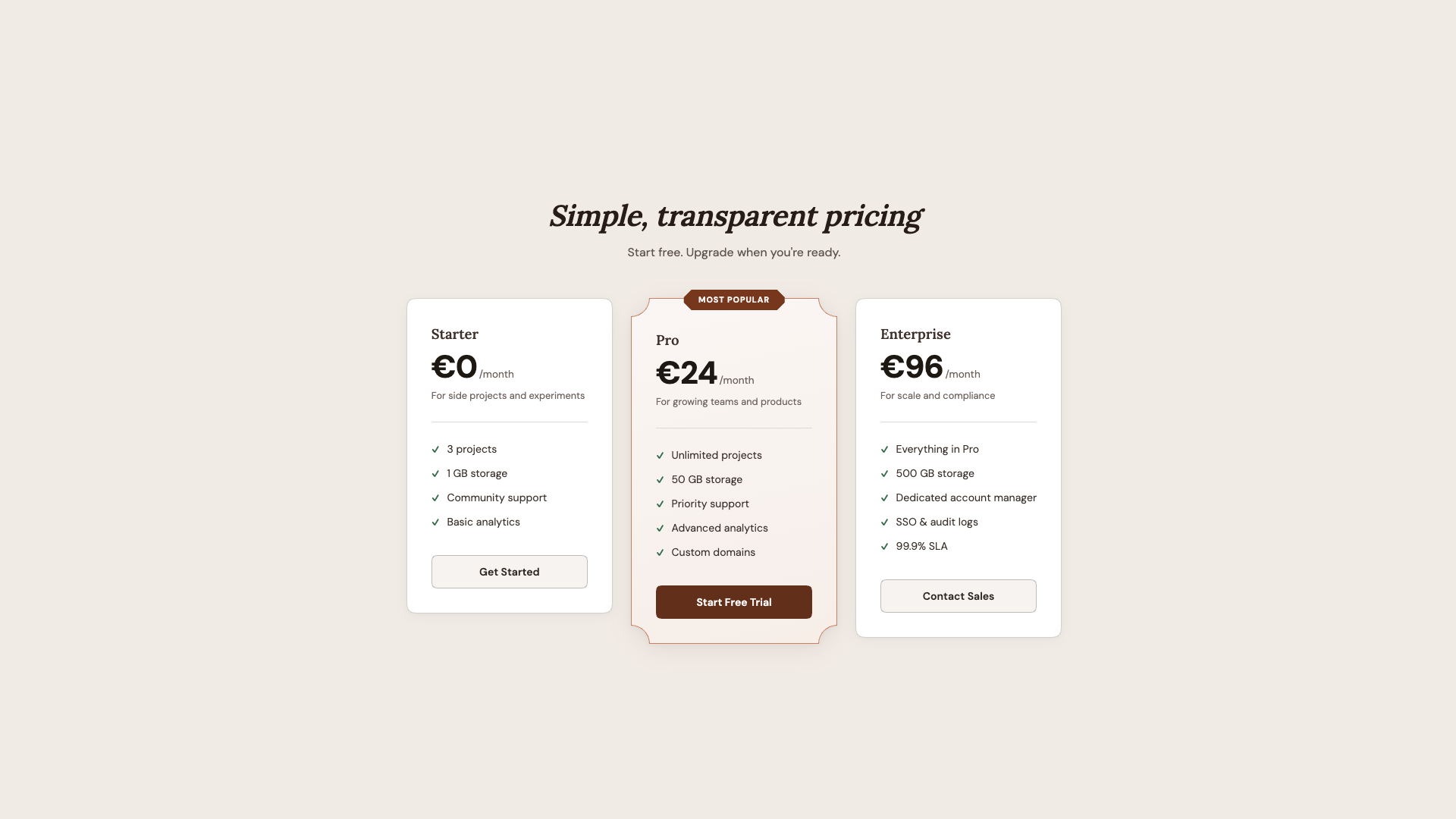

Demo 1: Product Cards With Ribbon Badges

Every e-commerce site has them: those little “New” or “Sale” badges pinned to the corner of a product card. Traditionally, getting that ribbon shape means reaching for clip-path: polygon() or a rotated pseudo-element. Let’s call it “fiddly code” that can fall apart the moment someone changes a padding value.

But here’s the thing: we don’t need the ribbon shape in the baseline. A simple badge with slightly rounded corners tells the same story and looks perfectly fine:

    .product__badge {
      border-radius: 0 4px 4px 0;
      background-color: var(--badge-bg);
    }

That’s it. A small, clean label sitting flush against the left edge of the card. Nothing fancy, nothing broken. It works in every browser.

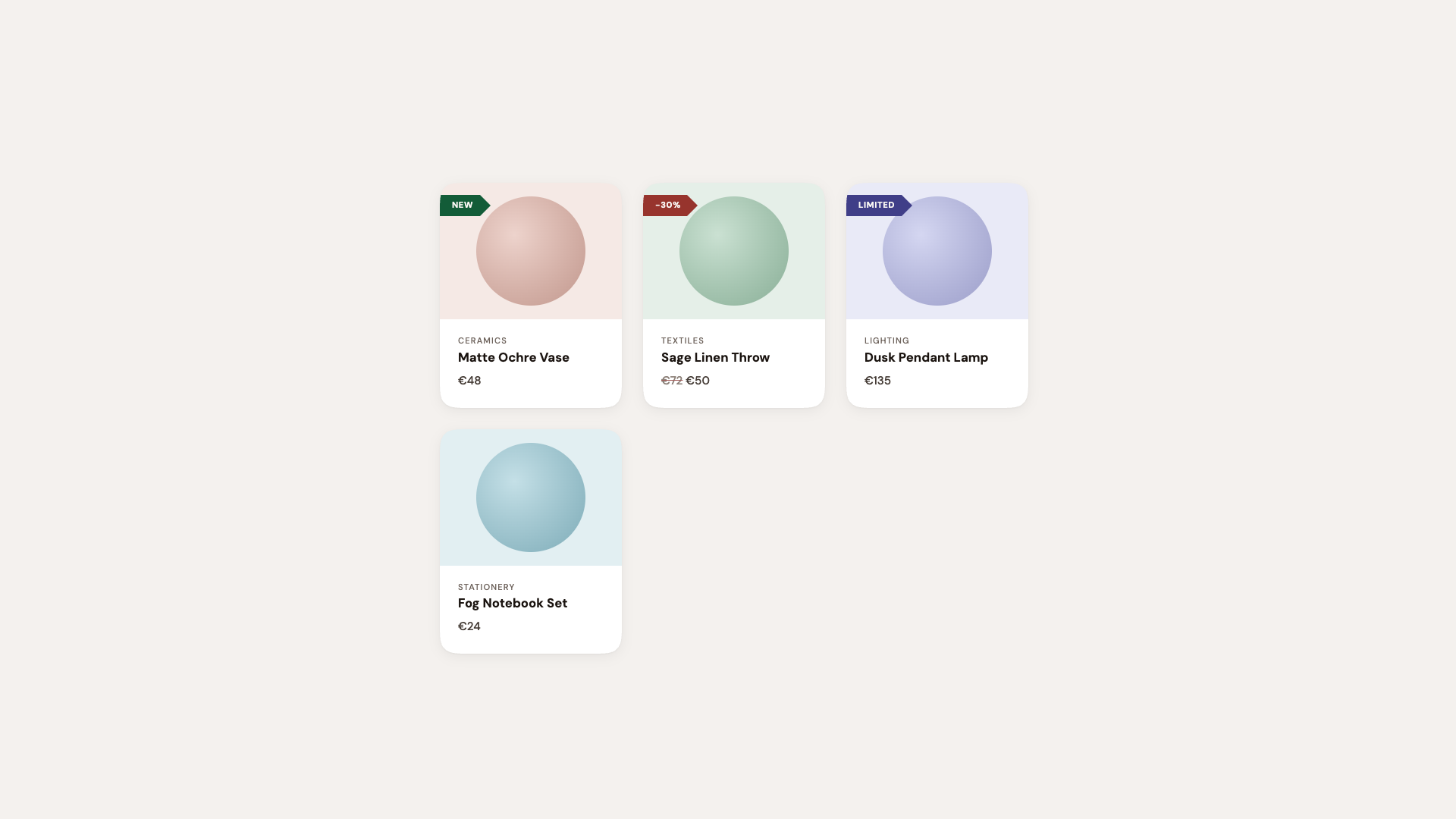

For browsers that support corner-shape, we enhance:

    @layer demo {
      /* If the browser supports `corner-shape` */
      @supports (corner-shape: bevel) {
        .product {
          border-radius: 40px;
          corner-shape: squircle;
        }

        .product__badge {
          padding: 0.35rem 1.4rem 0.35rem 1rem;
          border-radius: 0 16px 16px 0;
          corner-shape: round bevel bevel round;
        }
      }
    }

The round bevel bevel round combination creates a directional ribbon: round where it meets the card edge, beveled to a point on the other side. No clip-path, no pseudo-element tricks. Borders, shadows, and backgrounds all follow the declared shape because it is the shape.

The cards themselves upgrade from border-radius: 12px to a larger radius combined with corner-shape: squircle, the smooth superellipse curve that makes standard rounding look slightly off by comparison. Designers will notice immediately. Everyone else will just say it “feels more premium.”

Hot tip: Using the squircle value on card components is one of those upgrades where the before-and-after difference can be subtle in isolation, but transformative across an entire page. It’s the iOS effect: once everything uses superellipse curves, plain circular arcs start looking out of place. In this demo, I did exaggerate a bit.

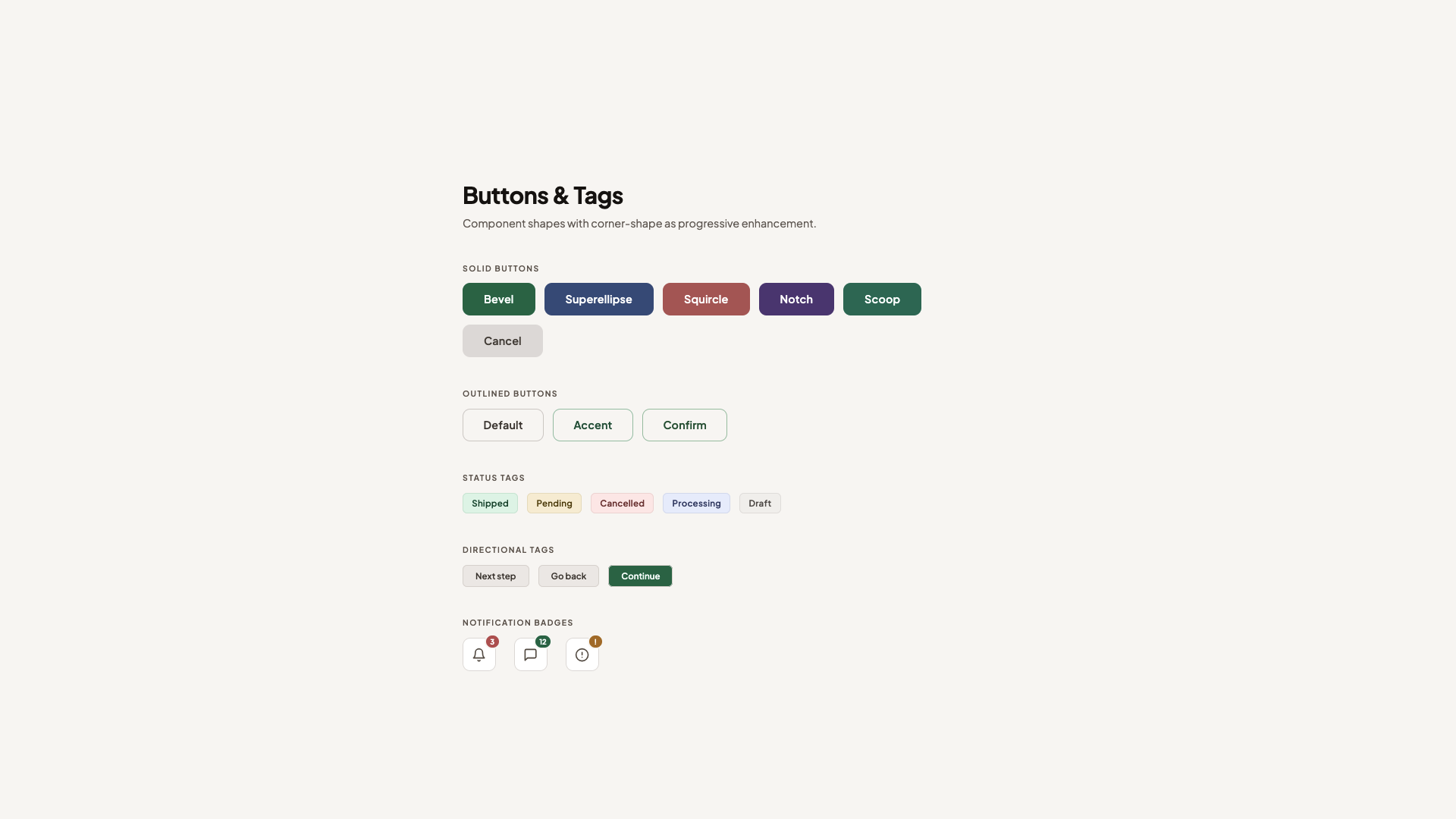

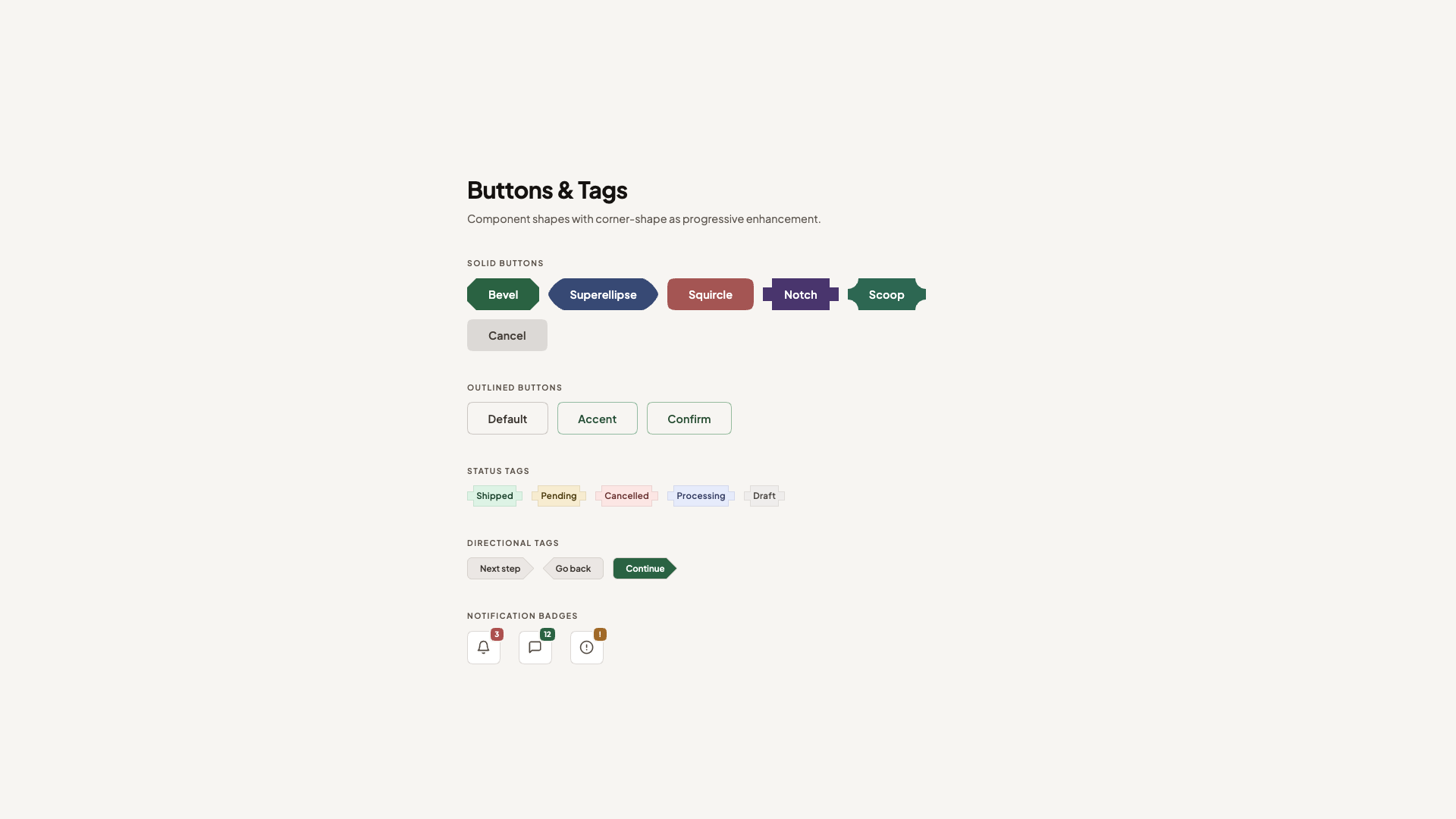

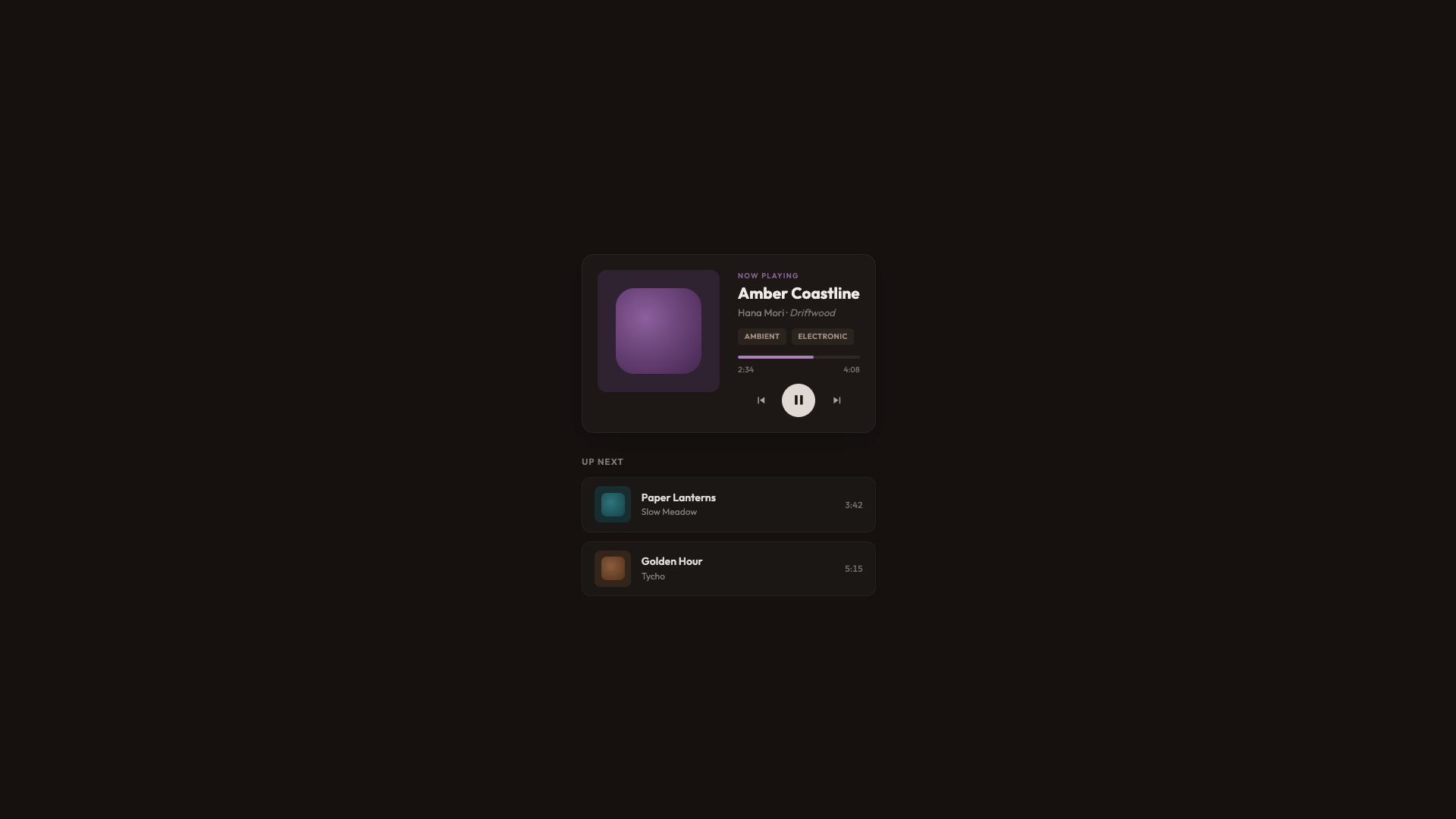

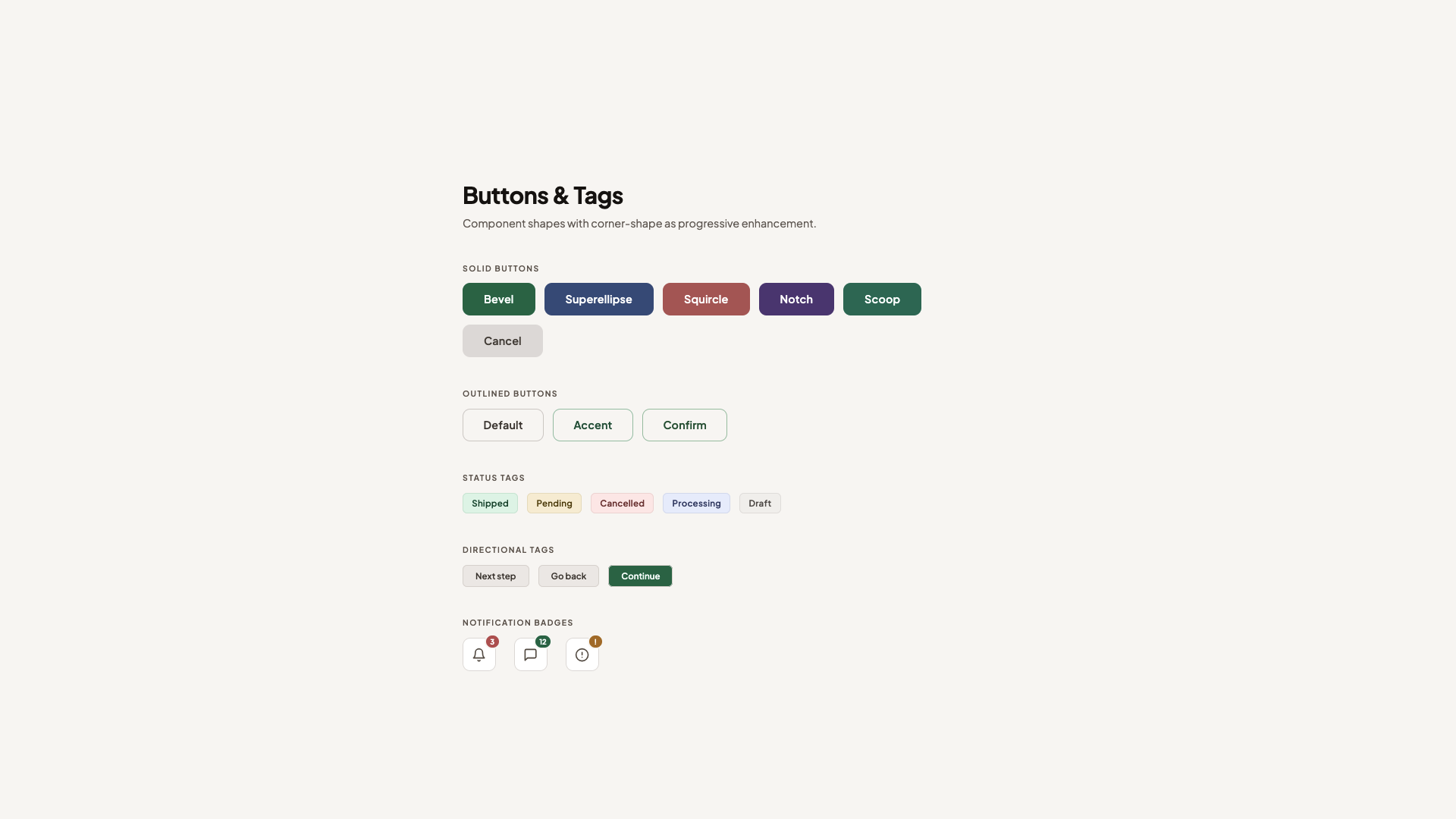

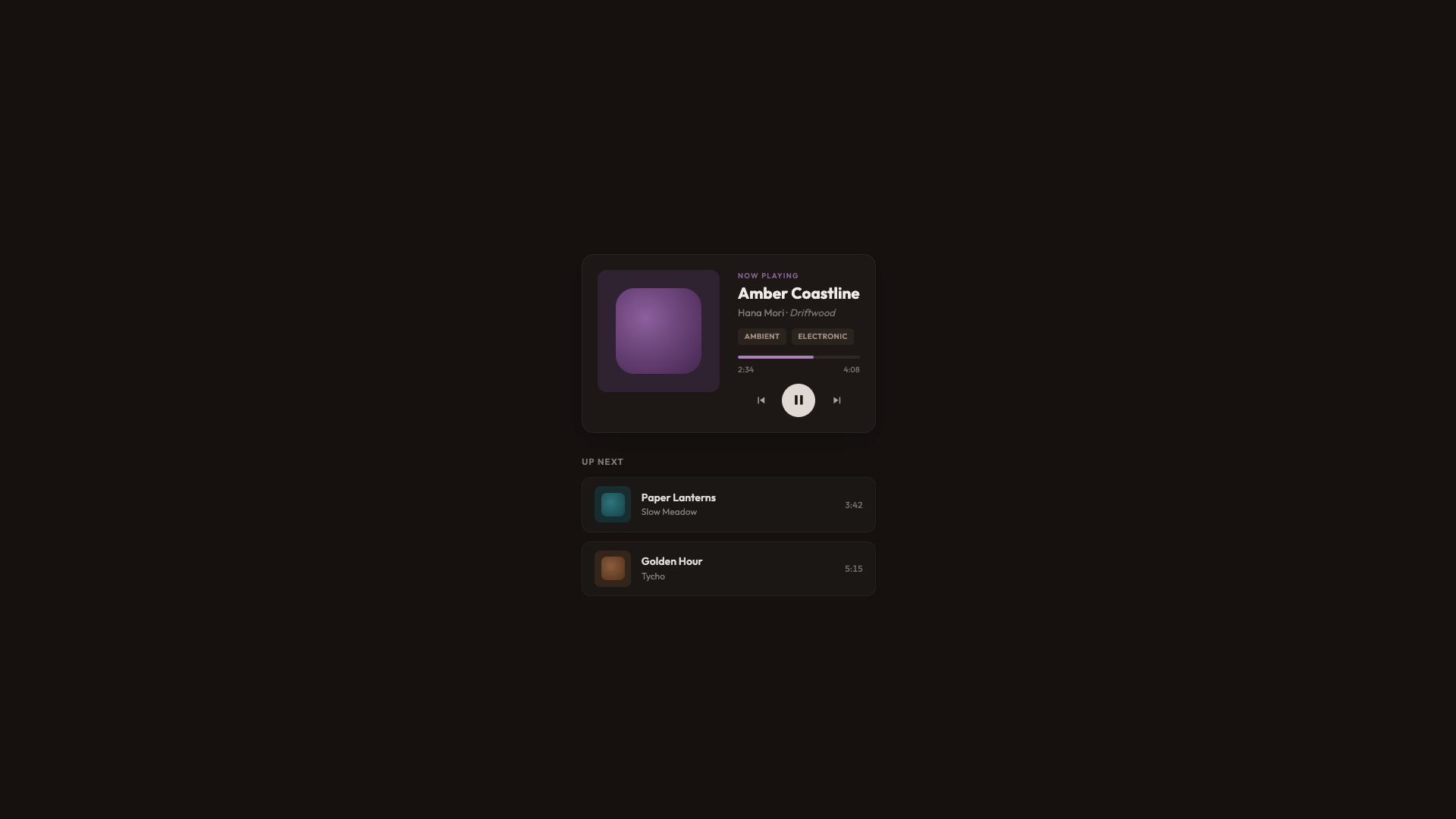

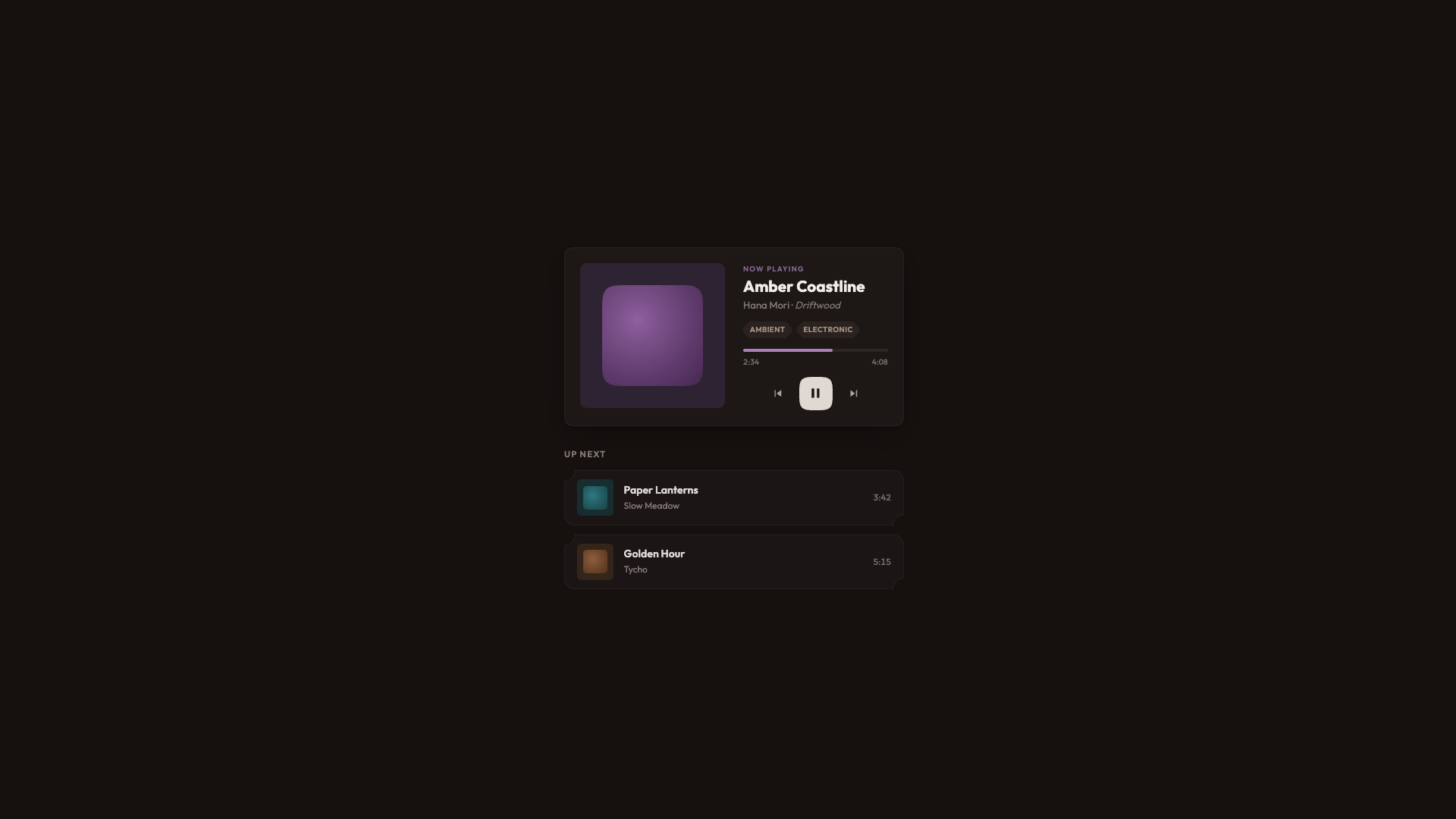

The primary button starts beveled, faceted, and gem-like, and softens to squircle on hover. Because corner-shape values animate via their superellipse() equivalents, the transition is smooth. It’s a fun interaction that used to be hard to achieve but is now a single property (used alongside border-radius, of course).
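A rough sketch of that hover interaction could look like the following. The .button--primary class, the radius, and the timing are my own placeholders rather than the demo’s actual values:

```css
/* Hypothetical class name; radius and duration are illustrative assumptions. */
@supports (corner-shape: bevel) {
  .button--primary {
    border-radius: 12px;
    corner-shape: bevel;
    /* Assumes the browser interpolates between the two shapes. */
    transition: corner-shape 0.3s ease;
  }

  .button--primary:hover {
    corner-shape: squircle;
  }
}
```

Browsers without corner-shape support skip the whole block, so the button keeps whatever border-radius the baseline gives it.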

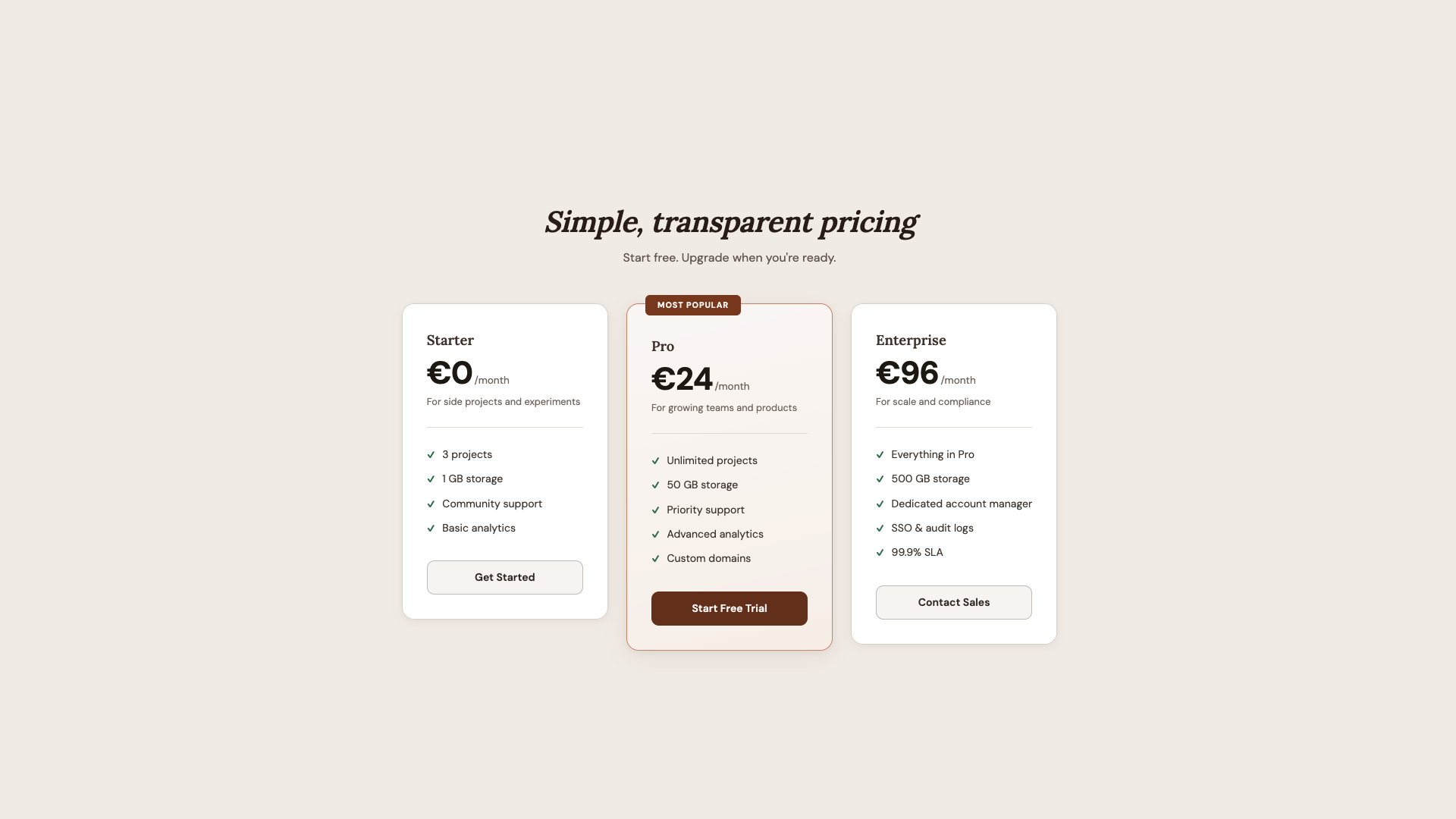

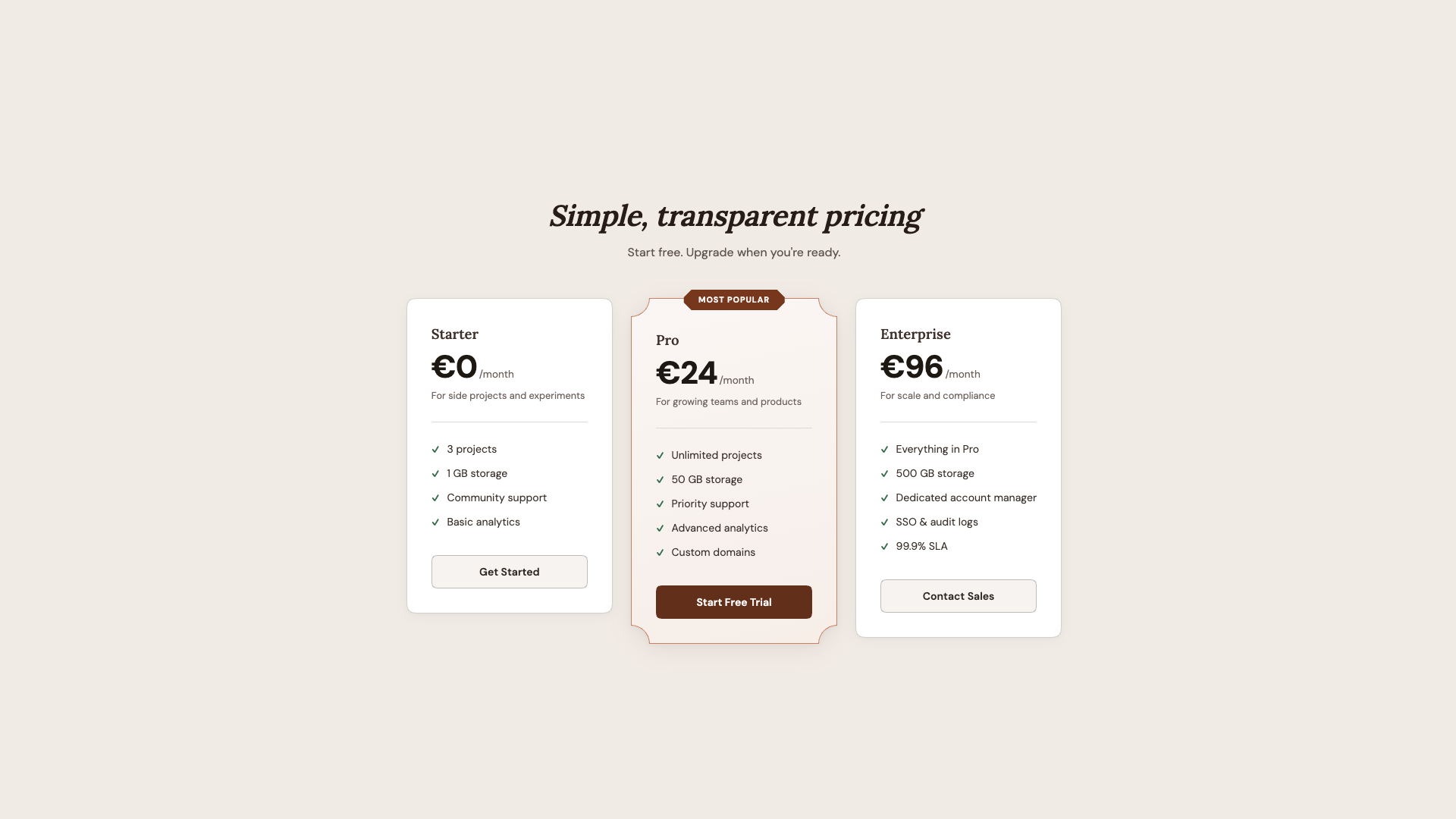

The secondary button uses superellipse(0.5), a value that sits between bevel (0) and the standard round curve (1), combined with a larger border-radius for a distinctive pill-like shape. The danger button gets a more prominent squircle with a generous radius. And notch and scoop each bring their own sharp or concave personality.

Beyond buttons, the status tags get corner-shape: notch, those sharp inward cuts that give them a machine-stamped look. The directional arrow tags use round bevel bevel round (and its reverse for the back arrow), replacing what used to require clip-path: polygon(). Now borders and shadows work correctly across all states.
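As a sketch of how those tags might be written, assuming made-up class names and radii (the demo’s real selectors and values may differ):

```css
/* Hypothetical class names and sizes, for illustration only. */
@supports (corner-shape: bevel) {
  /* Status tag: sharp inward cuts on all four corners. */
  .tag--status {
    border-radius: 6px;
    corner-shape: notch;
  }

  /* Forward arrow: bevel the two right-hand corners. */
  .tag--next {
    border-radius: 0 16px 16px 0;
    corner-shape: round bevel bevel round;
  }

  /* Back arrow: the mirrored version, beveling the two left-hand corners. */
  .tag--back {
    border-radius: 16px 0 0 16px;
    corner-shape: bevel round round bevel;
  }
}
```

The arrow tip should come to a point when the two beveled radii together cover the full height of the tag, so the radius may need adjusting to the tag’s size.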

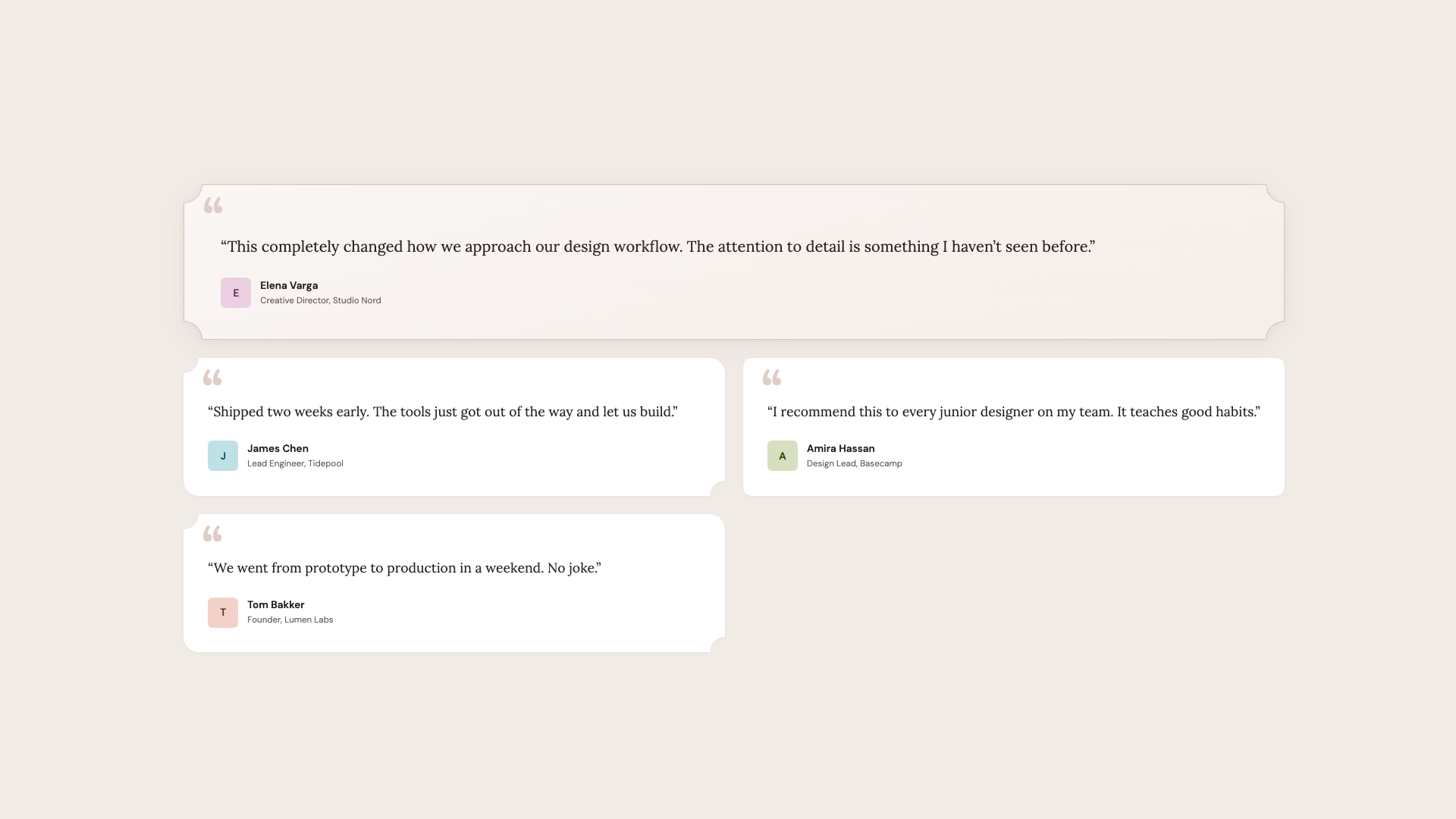

Hot tip: corner-shape: scoop pairs beautifully with serif fonts and warm color palettes. The concave curves echo the organic shapes found in editorial design, calligraphy, and print layouts. For geometric sans-serif designs, stick with squircle or bevel.

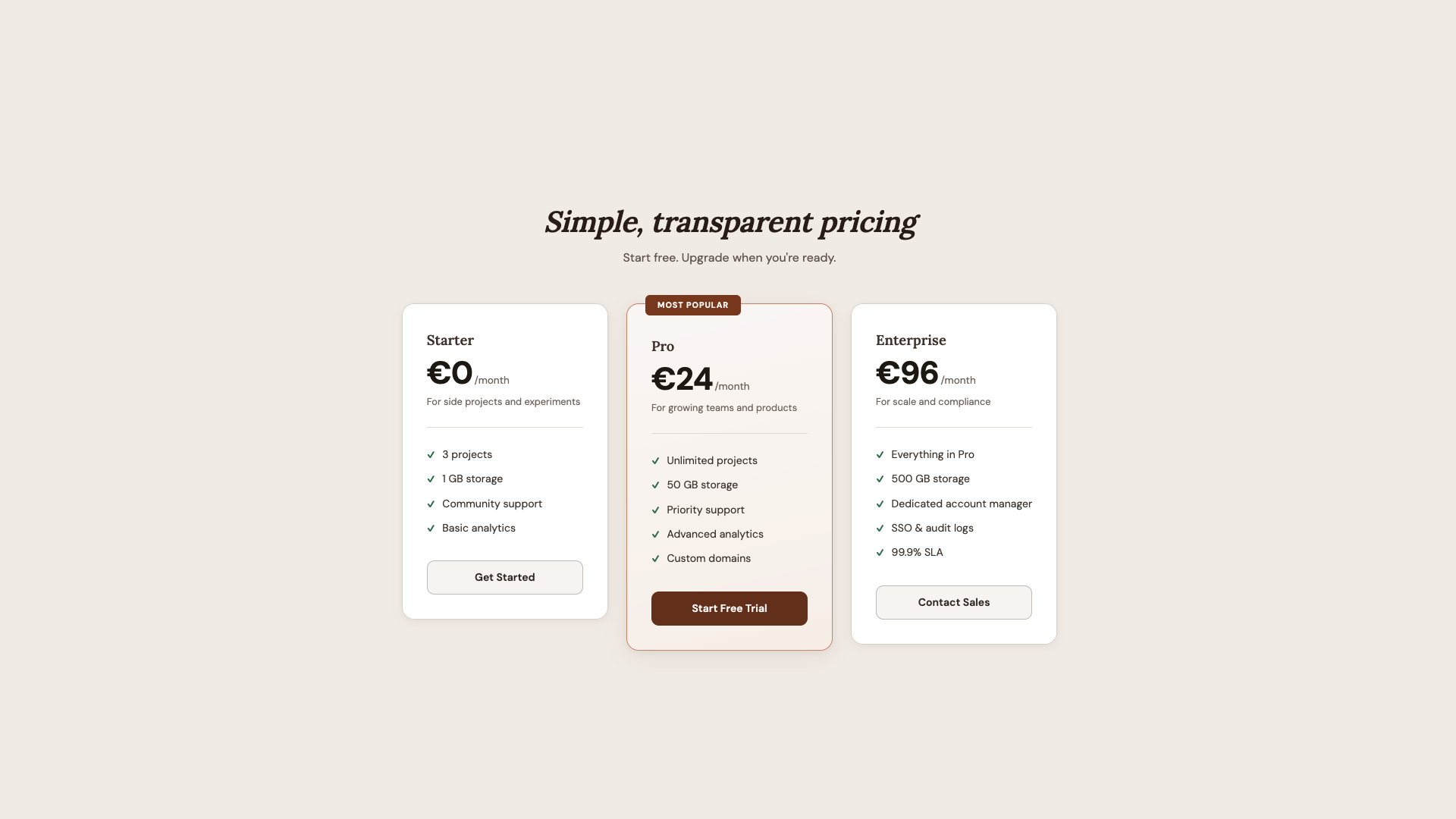

What I like about this demo is how the shape hierarchy mirrors the content hierarchy. The most important element (featured plan) gets the most distinctive shape (scoop). The badge gets the sharpest shape (bevel). Everything else gets a simpler upgrade (squircle). Shape becomes a tool for visual emphasis, not just decoration.
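That hierarchy could be expressed along these lines. The selectors and radii are hypothetical and only meant to show how the three shapes are assigned:

```css
/* Hypothetical selectors and radii; only the shape assignments follow the text above. */
@supports (corner-shape: bevel) {
  /* Every plan gets the simple upgrade. */
  .plan {
    border-radius: 24px;
    corner-shape: squircle;
  }

  /* The featured plan gets the most distinctive shape. */
  .plan--featured {
    border-radius: 32px;
    corner-shape: scoop;
  }

  /* The badge gets the sharpest shape. */
  .plan__badge {
    border-radius: 8px;
    corner-shape: bevel;
  }
}
```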

Browser Support

At the time of writing, corner-shape is available in Chrome 139+ and other Chromium-based browsers. Firefox and Safari don’t support it yet. The spec lives in CSS Borders and Box Decorations Module Level 4, which is currently a W3C Working Draft.

For practical use, that’s fine. That’s the whole point of how these demos are built. The presentation layer delivers a polished, complete UI to every browser. The demo layer is a bonus for supporting browsers, wrapped in @supports (corner-shape: ...). I lived through the time when border-radius was only available in Firefox. Somewhere along the line, it seems like we have forgotten that not every website needs to look exactly the same in every browser. What we really want is no “broken” layouts and no “your browser doesn’t support this” messages, but a beautiful experience that just works and that we can progressively enhance with a bit of extra joy. In other words, we’re working with two tiers of design: good and better.

Wrapping Up

The approach I keep coming back to is: don’t design for corner-shape, and don’t design around the lack of it. Design a solid baseline with border-radius and then enhance it. The presentation layer in every demo looks intentionally good. It’s not a degraded version waiting for a better browser. It’s a complete design. The demo layer adds a dimension that border-radius alone can’t express.

What surprises me most about corner-shape is the range that this single property offers: squircle for that premium, superellipse feel on cards and avatars; bevel for directional elements and gem-like badges; scoop for editorial warmth and visual hierarchy; notch for mechanical precision on tags; and superellipse() for fine control between the named shapes. And the ability to mix values per corner (round bevel bevel round, scoop round) opens up shapes that would have required SVG masks or clip-path hacks.

We went from five background images to border-radius, to corner-shape. Each step removed a category of workarounds. I’m excited to see what designers do with this one.

Further Reading

- corner-shape (MDN)
- “What Can We Actually Do With corner-shape?”, Daniel Schwarz
- CSS Borders and Box Decorations Module Level 4 (W3C specification)
- A fun demo for “eco-labels”, Sebastian on CodePen