Building a true culture of digital accessibility in a company is a mission of resilience and perseverance. It’s not difficult for the discourse on accessibility to fall into the usual clichés. Accessibility is very important for people. The accessibility of digital products and services promotes inclusion. Or even, all professionals on the teams should be involved in accessibility work. Of course. No one in their right mind will dispute any of these statements (I hope).

However, the second part of this conversation, which very few companies reach, is “how?” How do we make this happen in the midst of the day-to-day work of digital transformation teams, which, as we all know, are immersed in demanding scripts, often with a very limited number of people available? Most of the time, the choice ends up being between “we do this” and “that.” And it shouldn’t, because, in these cases, I never saw accessibility winning in this equation.

It shouldn’t be this way. You don’t need to be this way. First of all, because choosing between accessibility and anything else isn’t the right choice. Accessibility is no longer just another feature to be added to the others. It’s an added value for the business and, currently, a legal obligation that can have serious consequences for companies. On the other hand, there are intelligent, optimized, and impactful ways to incorporate accessibility principles into the natural dynamics of teams. It’s possible to work on accessibility without turning team operations upside down. In essence, that’s what AccessibilityOps does. Empowering people and providing teams with simple processes so they can integrate accessibility work into their daily routines without disproportionate effort.

Accessibility And Design

Working on digital accessibility in design can involve several actions. It’s clear that we need to pay particular attention to color and how it’s used to convey meaning. Of course, the interaction sizes of elements must be comfortable. But, most importantly, we must think about design from a versatile perspective. An interface isn’t a poster. We can control many aspects of that design, but how users interact with the interface is subject to an endless number of variables. The type of device, context, purpose, network quality, etc. All of this greatly affects each person’s experience and interaction. Along with all this, when digital accessibility concerns are brought into the design process, it adds even more variables.

People often use what are called assistive technologies and strategies. Basically, these are technological tools or, at the very least, “tricks” that people resort to in order to find more comfortable usage models. The famous screen readers, commonly associated with the use of blind people (but which are not only useful to them), for example, are an assistive technology. Changing colors or color contrasts between different elements is also an assistive technology. Increasing the font size (which we discussed in this text) is another example. There are countless assistive technologies and strategies. Almost as many as the different contexts of use for each person.

We Don’t Control Everything

In other words (and this is the “bad news” for us designers), “our design” is subject, from the users’ perspective, to transformations that we don’t control. It will be “transformed” by the user, ensuring that they can interact with the application and everything it offers in the most comfortable way possible. And that’s a good thing. If this happens and everything goes well, we will have surely done our accessibility work very well, and we all deserve congratulations. If the user applies any of these support technologies and strategies and still cannot use the digital application, it’s a sign that something is not working as it should.

Oh, and speaking of which. Don’t even think about blocking the use of these technologies or support strategies. They may be “destroying” your beautiful design, but they are allowing more and more people to actually use the app. In the end, wasn’t that exactly what we promised we wanted to do? Design for (all) people. Without exception?

Increase Font Size

How many times have we heard someone — friends, family, or even colleagues — complaining that this or that text is too small? Text plays a very important role in the digital experience. Much information is conveyed through text: instructions for use, button captions, or interactive elements. All of this uses text as a communication tool. If reading all these elements is difficult, naturally, the experience is severely impaired.

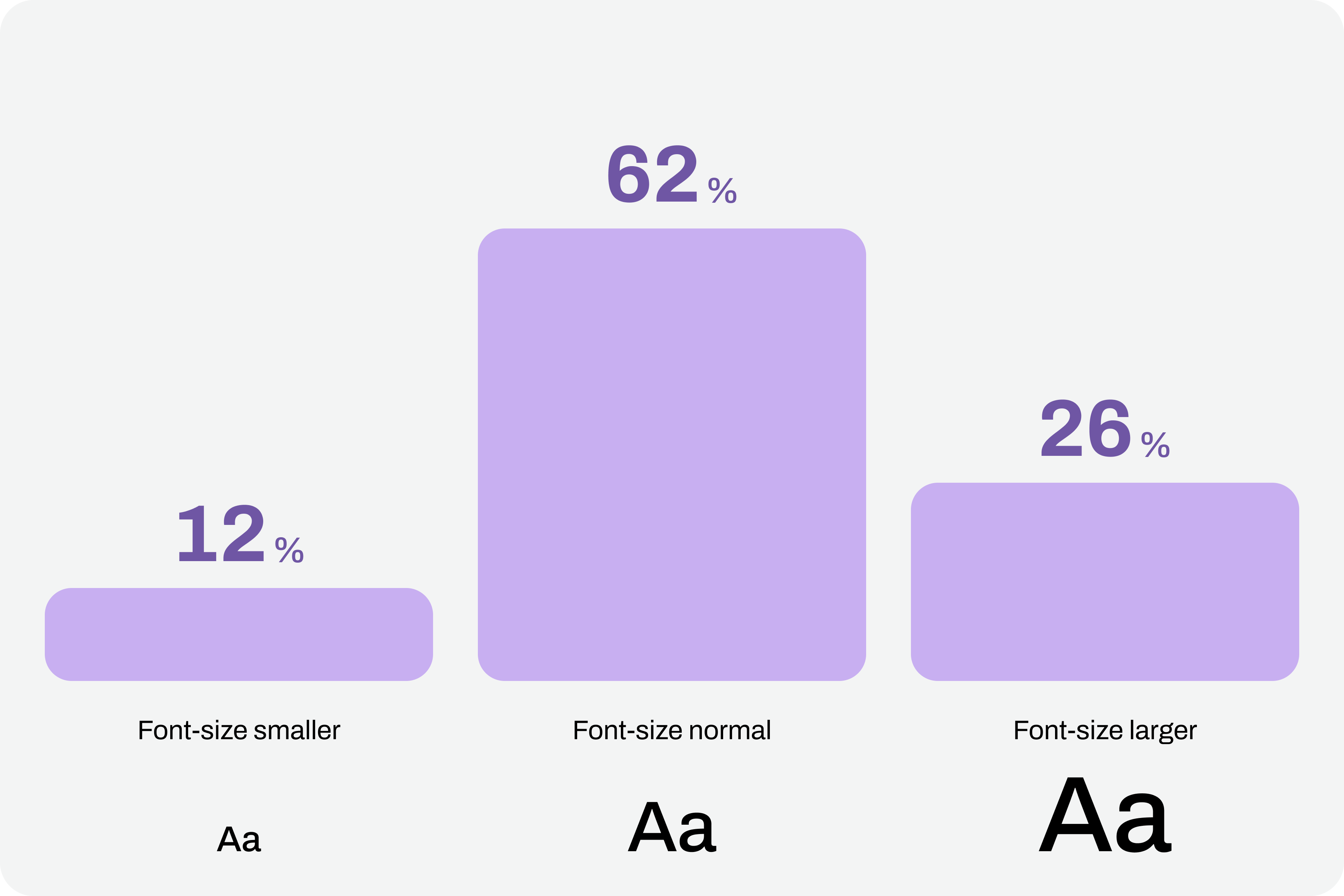

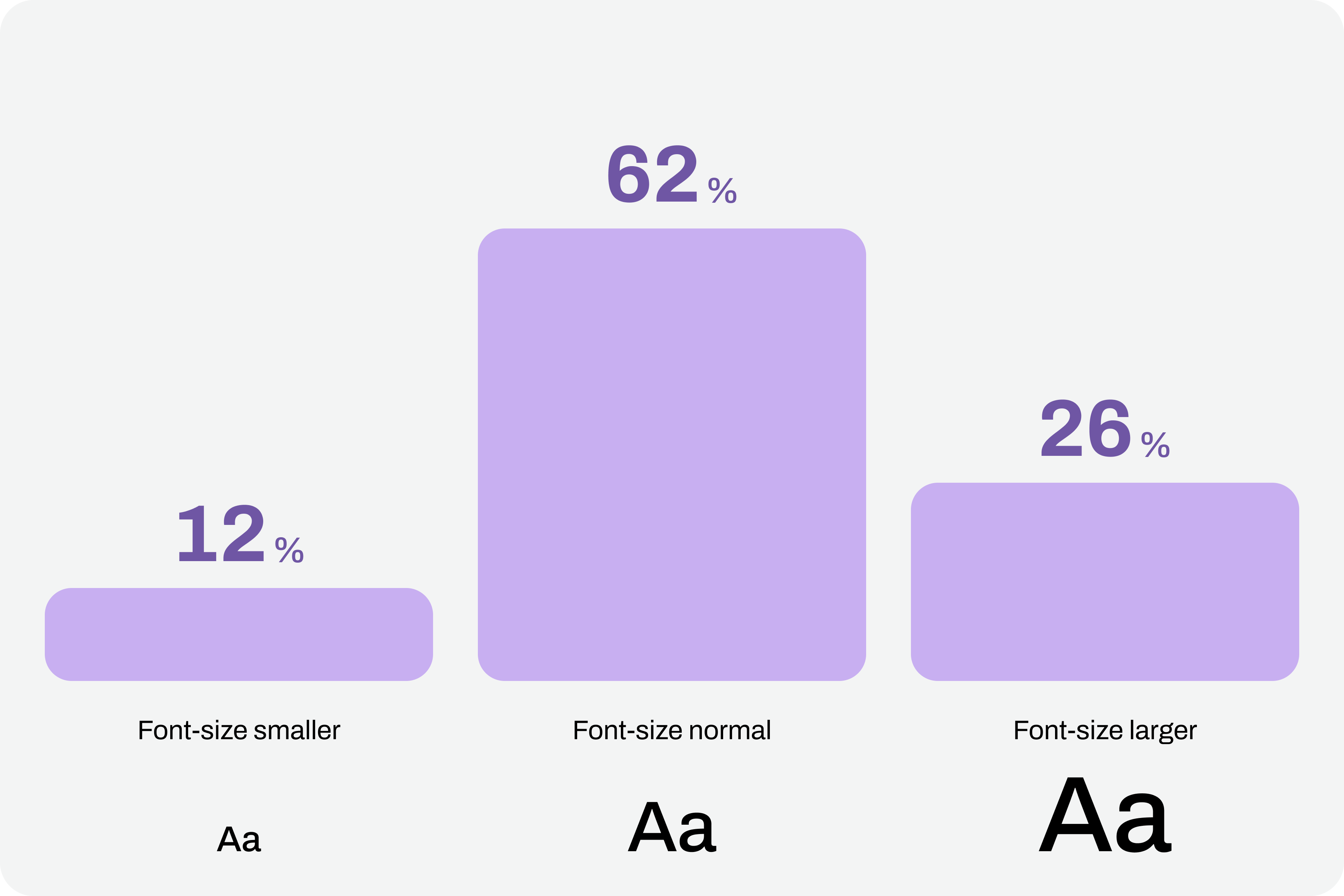

Comfortable text reading, regardless of its function, is a non-negotiable principle. This reading can be facilitated by using comfortable sizes in the design. However, supporting technologies and strategies, through the functionality of increasing font size, can also help improve readability. According to APPT data, 26% of Android and iOS mobile device users increase the default font size (data from February 2026). One in four users increases the font size on their smartphone. This is a very significant sample of people, making this functionality unavoidable in design processes.

Compliance With Guidelines

Increasing font size in interfaces can represent a huge design challenge. It’s important to understand that, suddenly, some text elements, due to user actions, can double in size from their initial size.

“With the exception of captions and text images, text can be resized without assistive technology up to 200% without loss of content or functionality.”

— Success criterion 1.4.4, “Resizing Text” of the Web Content Accessibility Guidelines (WCAG), version 2.2

This success criterion is at the AA compliance level, meaning this is an absolutely mandatory feature according to any legal framework.

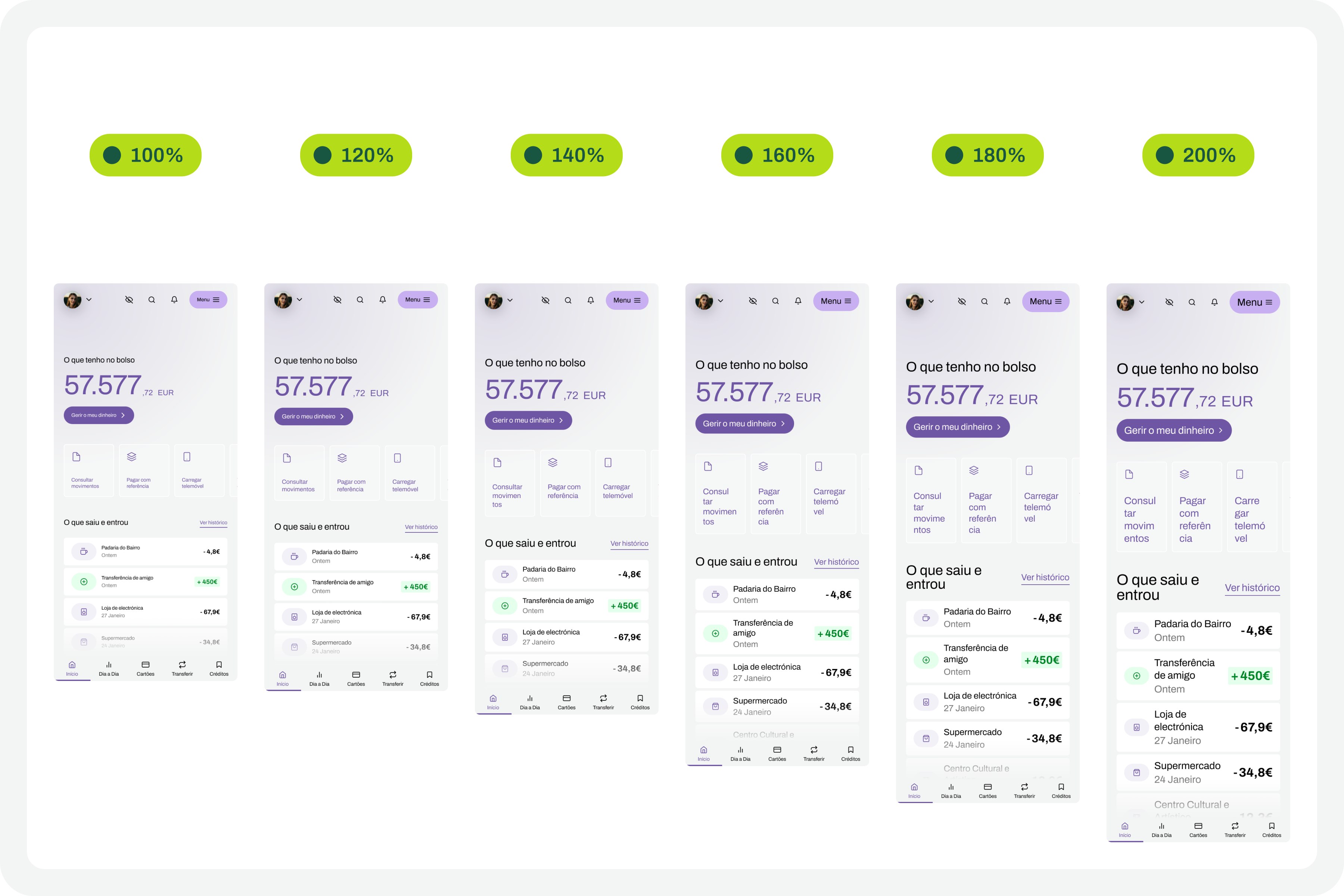

It’s easy to understand the 200% in this success criterion. If we assume we design the interfaces at a 100% scale, meaning the element size is the initial size, then increasing the text by up to 200% will correspond to doubling the initial size. Other enlargement scales can also be used, such as 120%, 140%, and so on. In other words, we have to ensure that users can increase the text to double its initial size through supporting technologies or strategies (and this is not a minor detail).

To comply with this standard, we don’t need to provide text size increase tools in the interfaces. In practice, these features are nothing more than redundancy. Devices already allow this to be done in a standardized way. Users who really need this setting know it (because, without it, their lives would be much more difficult). Well, they already have this setting applied across their device. And that means we can eliminate these additional interface elements, simplifying the experience.

Standardized Access

An important concept to remember about assistive technologies, particularly in this case regarding increasing font size, is that most devices already have many of these tools installed by default. In other words, in many cases, users don’t need to purchase their own software or buy a specific type of device just to have this functionality.

Whether on mobile devices or even in web browsers, in the vast majority of cases, it’s easy to find installed features that allow you to increase the default font size we’re using throughout the interface. This principle of increasing font size can be applied to digital products, such as apps, or even to any type of website running on the standard web browsers used today.

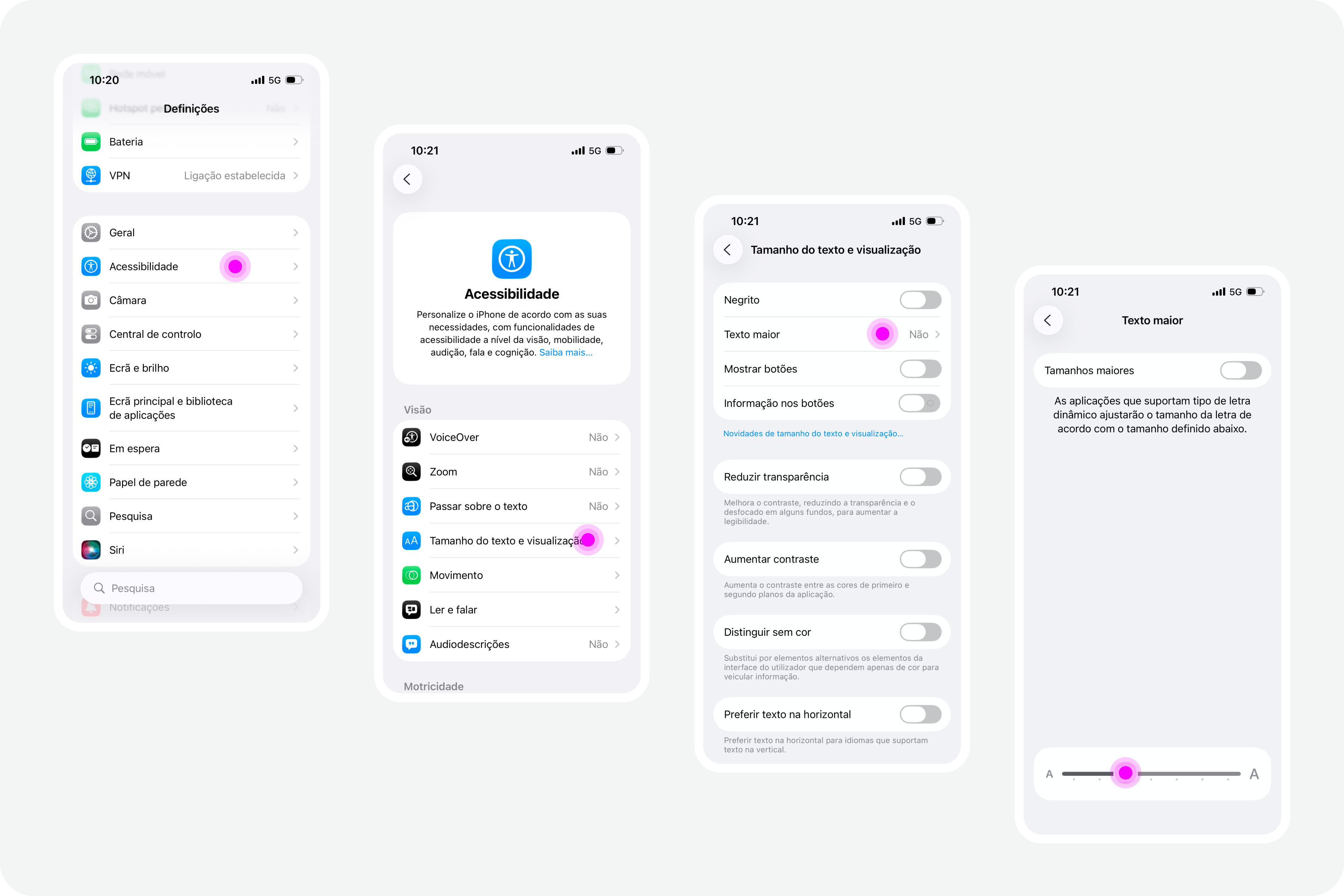

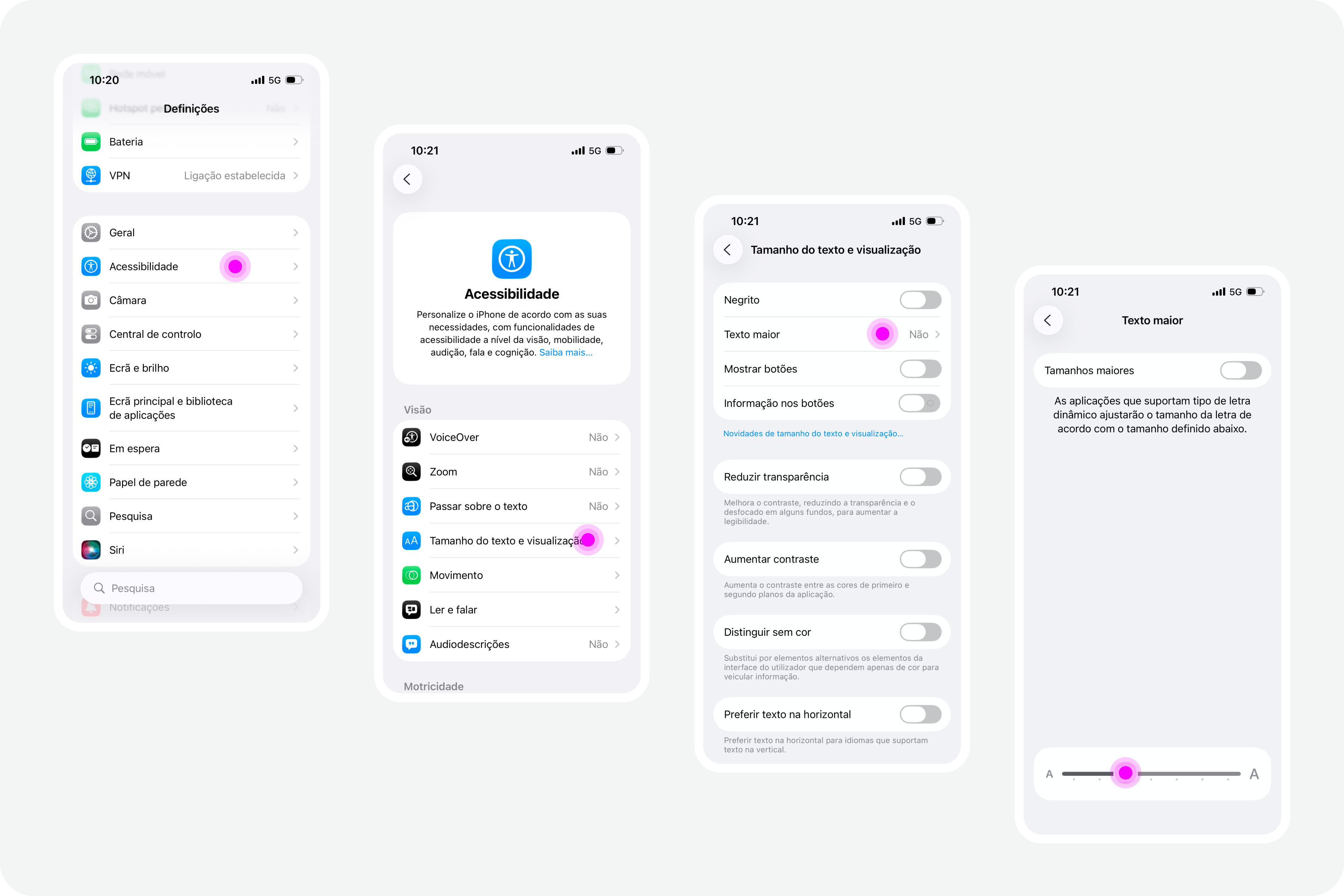

iPhones

On iPhone devices, the font size increase feature is integrated by default. To use this feature, simply access the “Settings” panel, select “Accessibility,” and within the “Vision” options group, access the “Text Size and Display” feature and configure the desired font size increase on that screen.

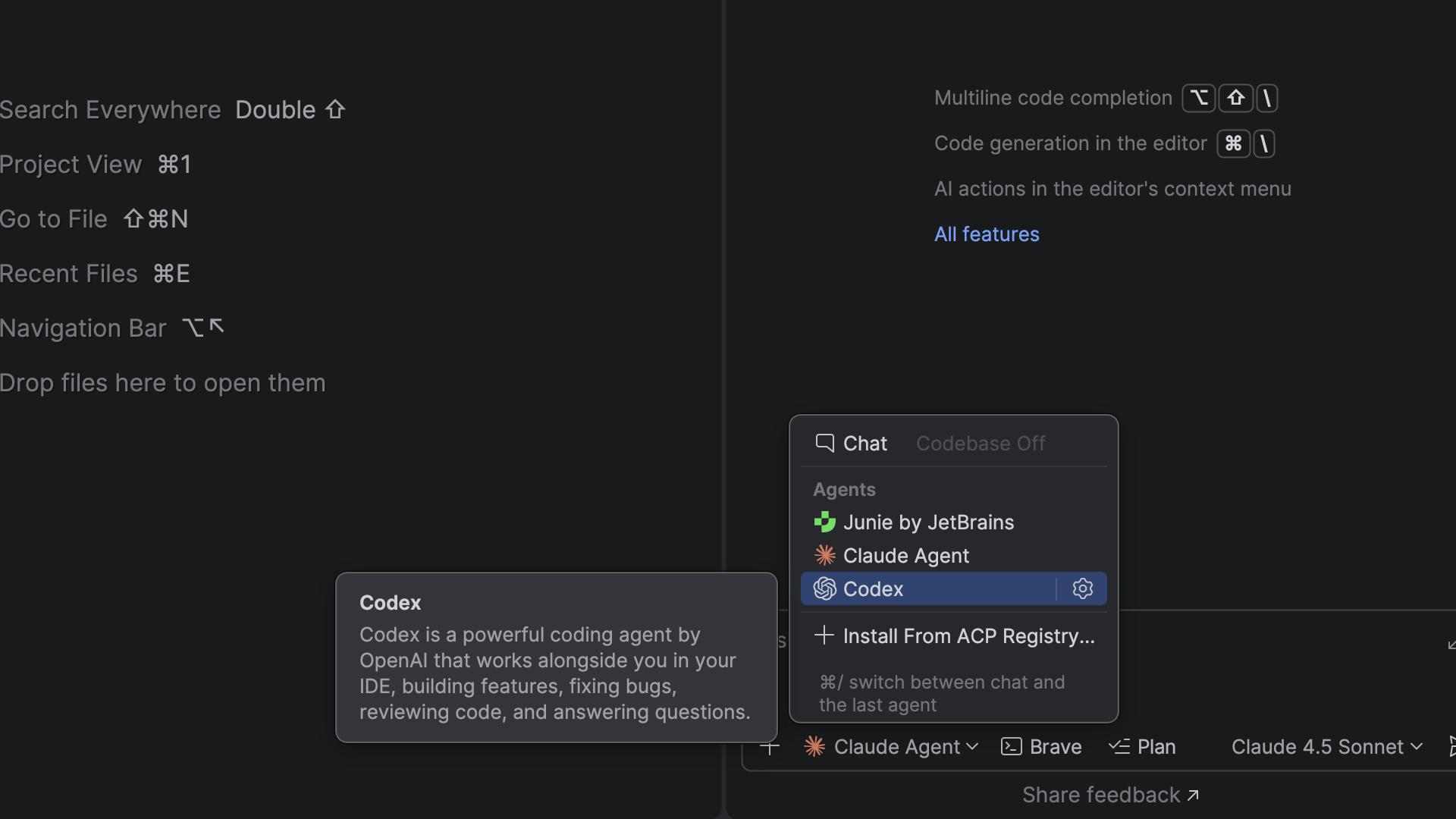

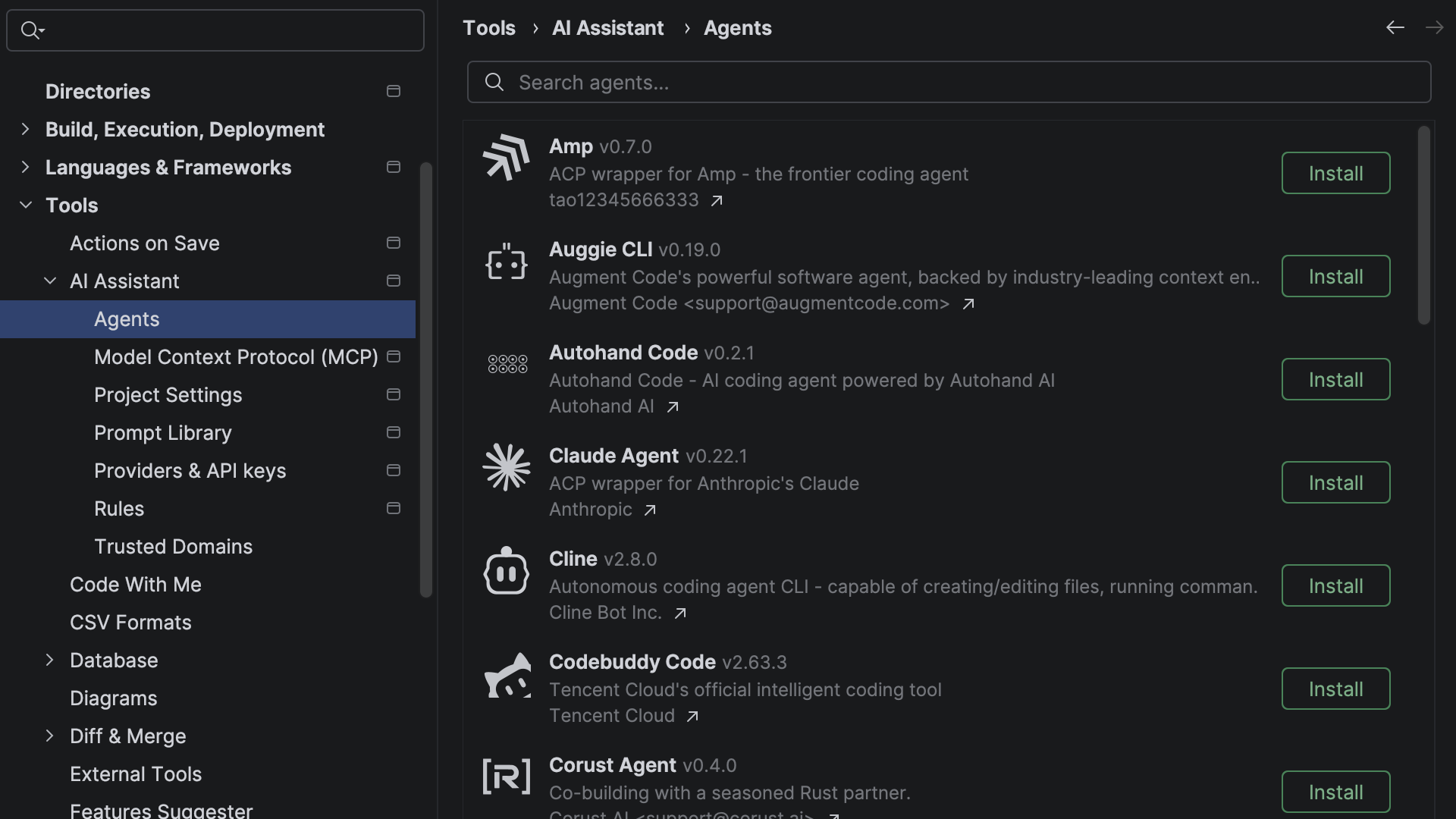

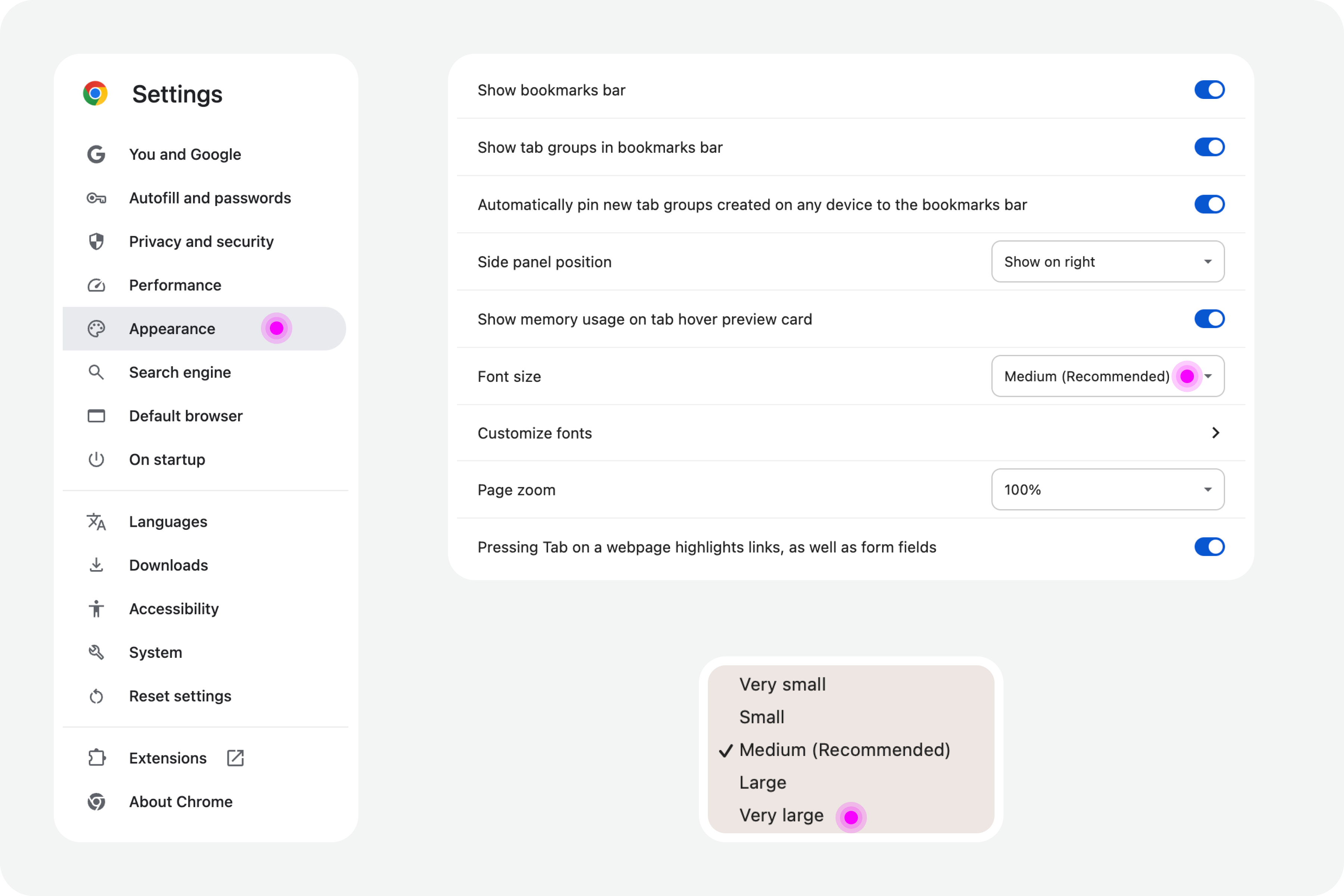

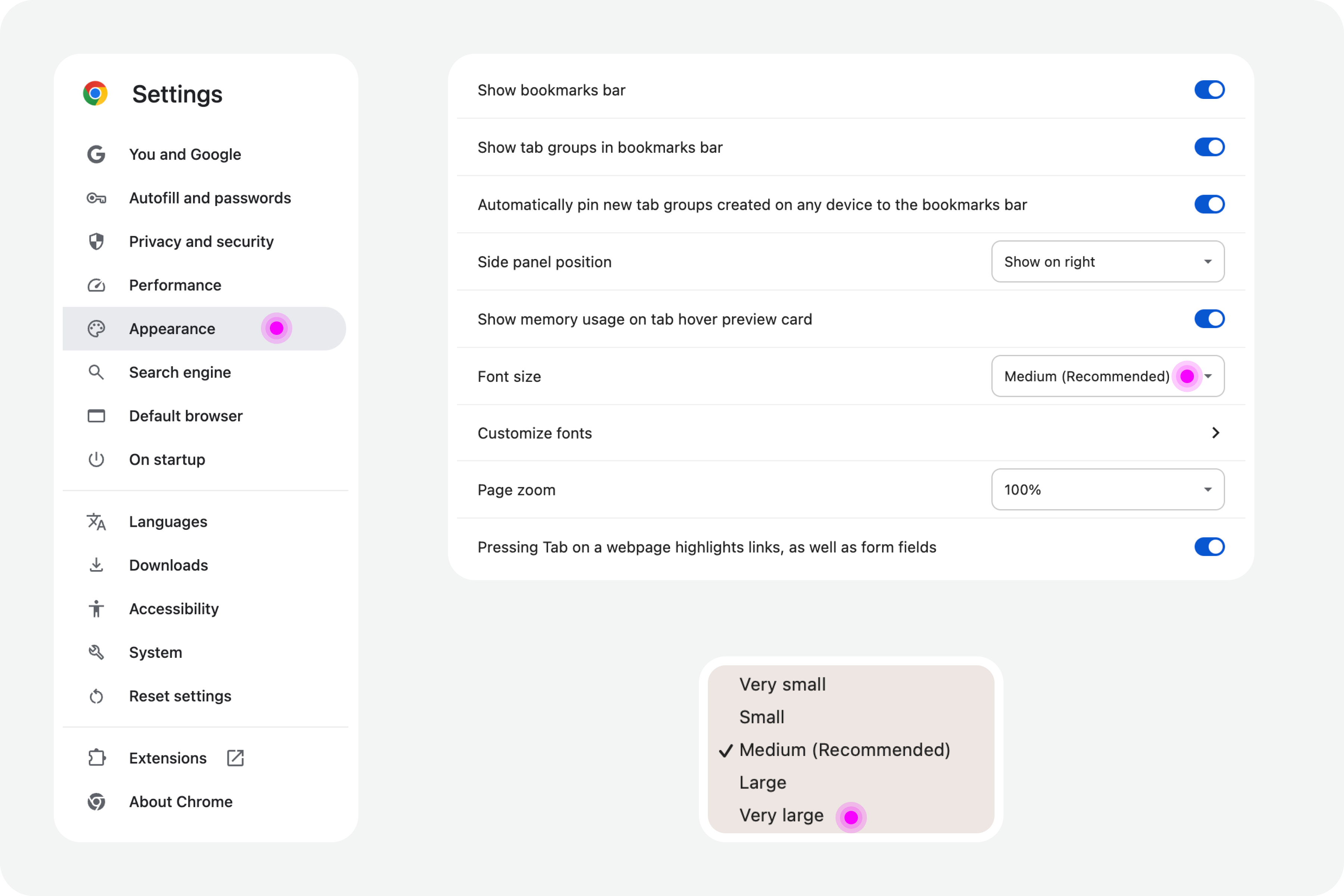

Google Chrome

Web browsers also offer, by default, the functionality to increase font size. For example, in Google Chrome, this feature is available in the “Options” panel, specifically in the “Appearance” area. In the list of options that appear in this group, simply select the “Font size” option. Normally, the “Medium — Recommended” option will be selected. You can change this setting to any other available font size. Try, for example, the “Very large” option.

Test In Figma

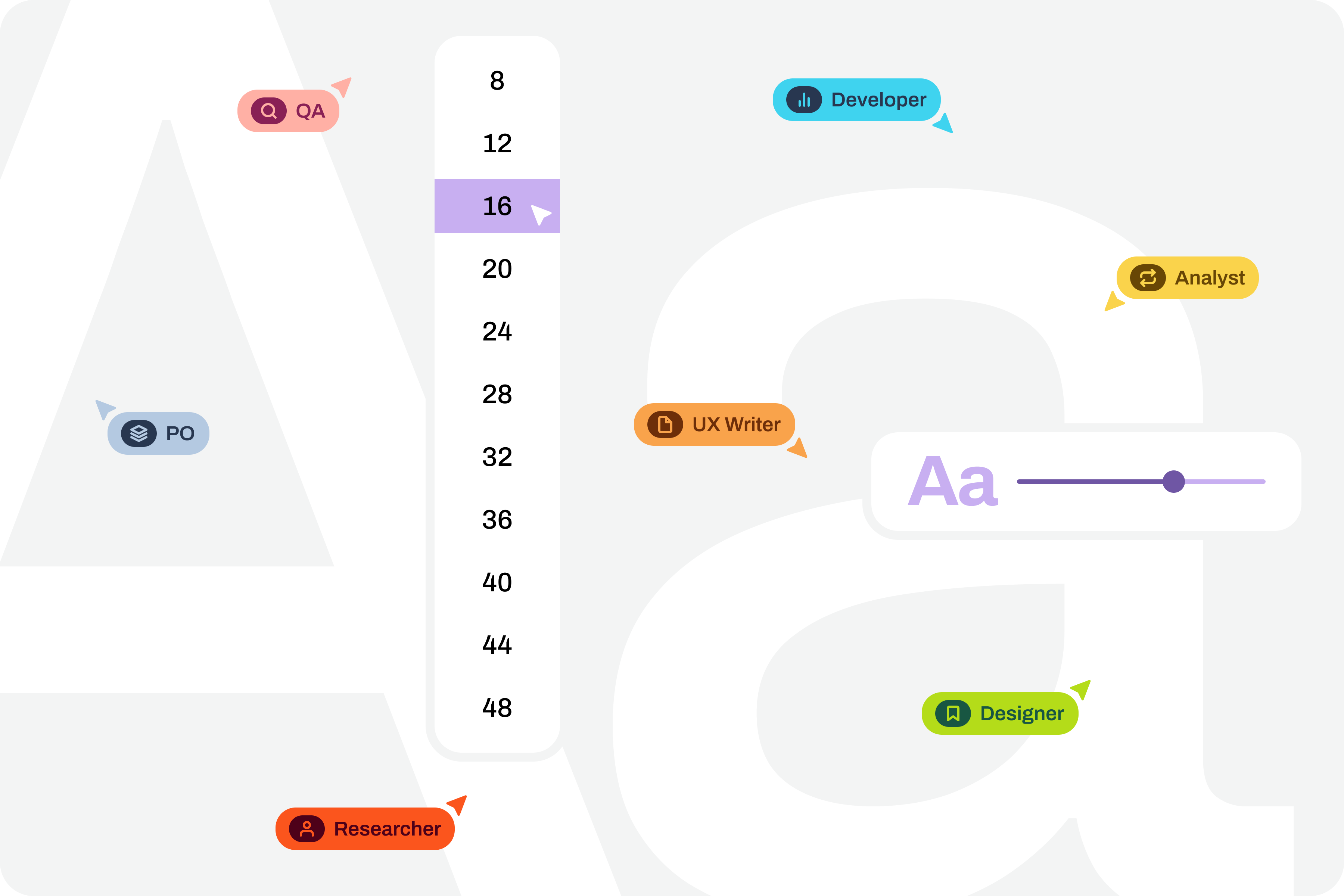

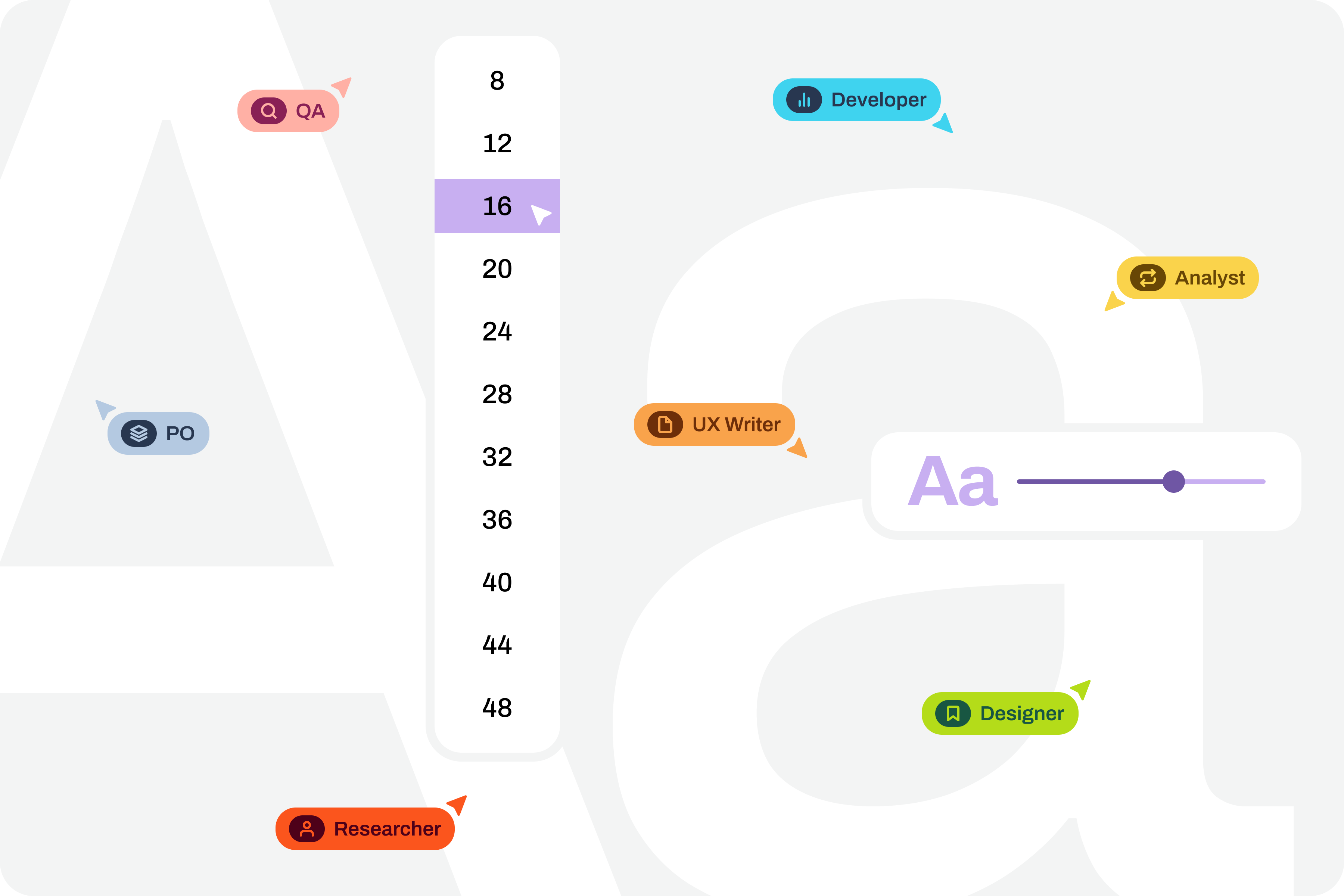

To ensure that digital accessibility work becomes effective in the daily lives of teams, it is essential to find simple work processes. Actions or initiatives that can be integrated into the team’s routine, that address accessibility in an integrated way, and do not require a dramatic transformation of the current reality. If that were necessary, he believes, it wouldn’t happen most of the time. Therefore, designing simple work processes is half the battle for accessibility to truly happen, in this case, also within a design team.

Regarding testing font size increases in design, we have extraordinary tools at our disposal today. Those who remember the days of designing complex interfaces in Adobe Photoshop will recognize the differences in the tools we have today (and thankfully so). It’s now possible, through tools like Figma, to create such dynamism in design that testing font size increases for accessibility becomes almost unavoidable for the team.

Note: To take this test, you need to have a strong grasp of Figma’s text styles, auto layouts, and variables. These three are fundamental tools for success without much extra effort. If you haven’t yet mastered these features, it’s highly recommended that you start there. Don’t skip steps. Learning is a gradual process that must be followed in a structured, step-by-step manner.

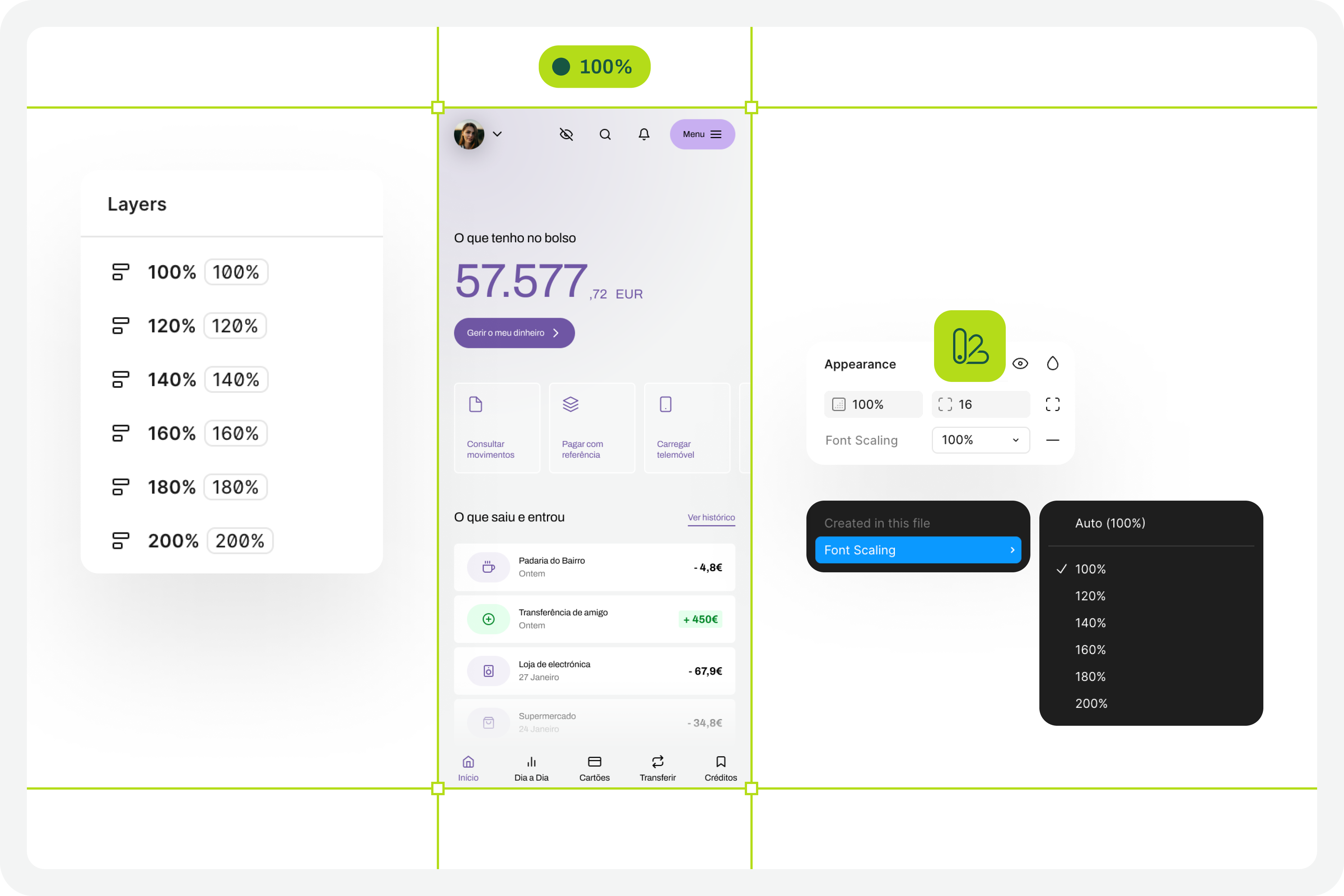

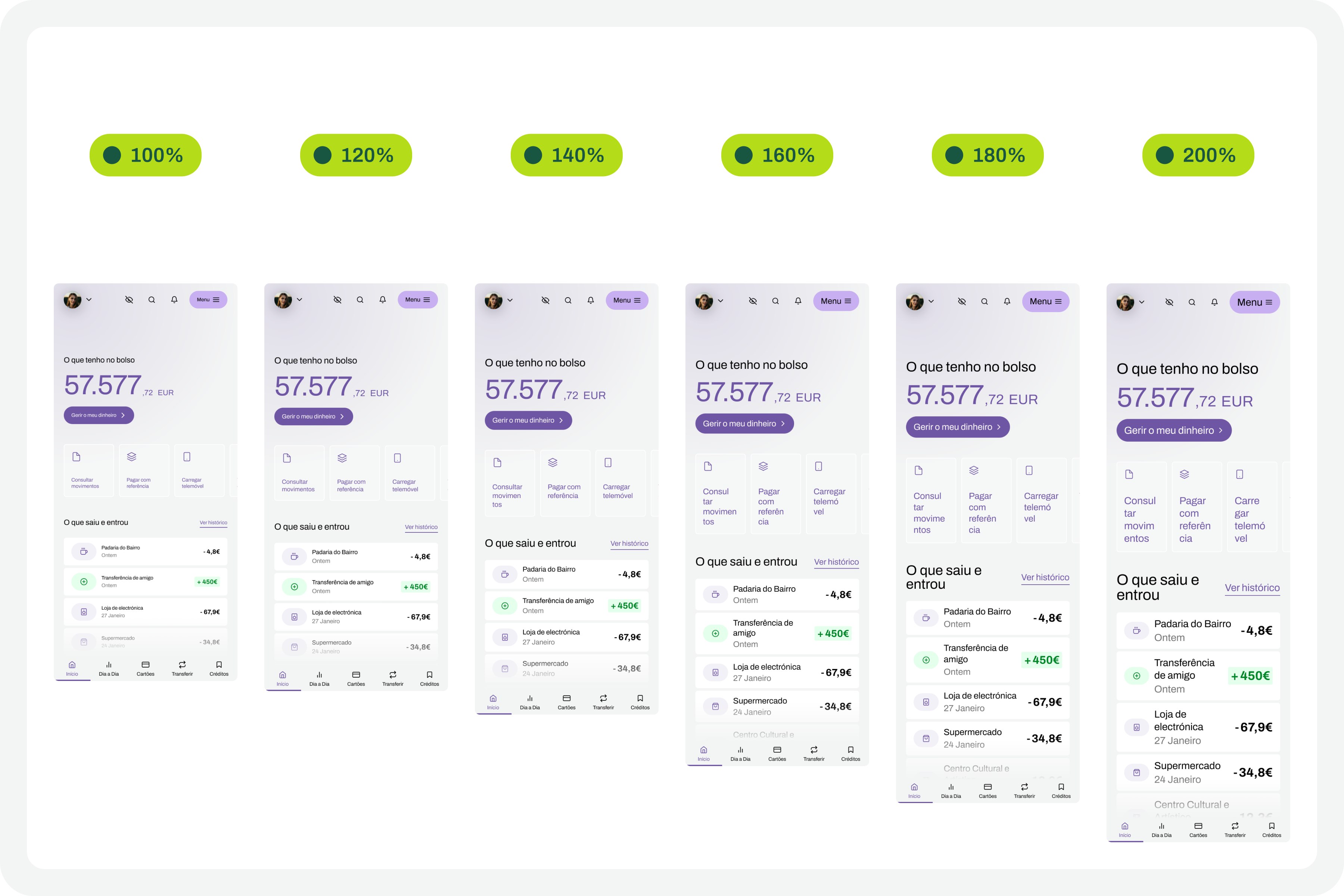

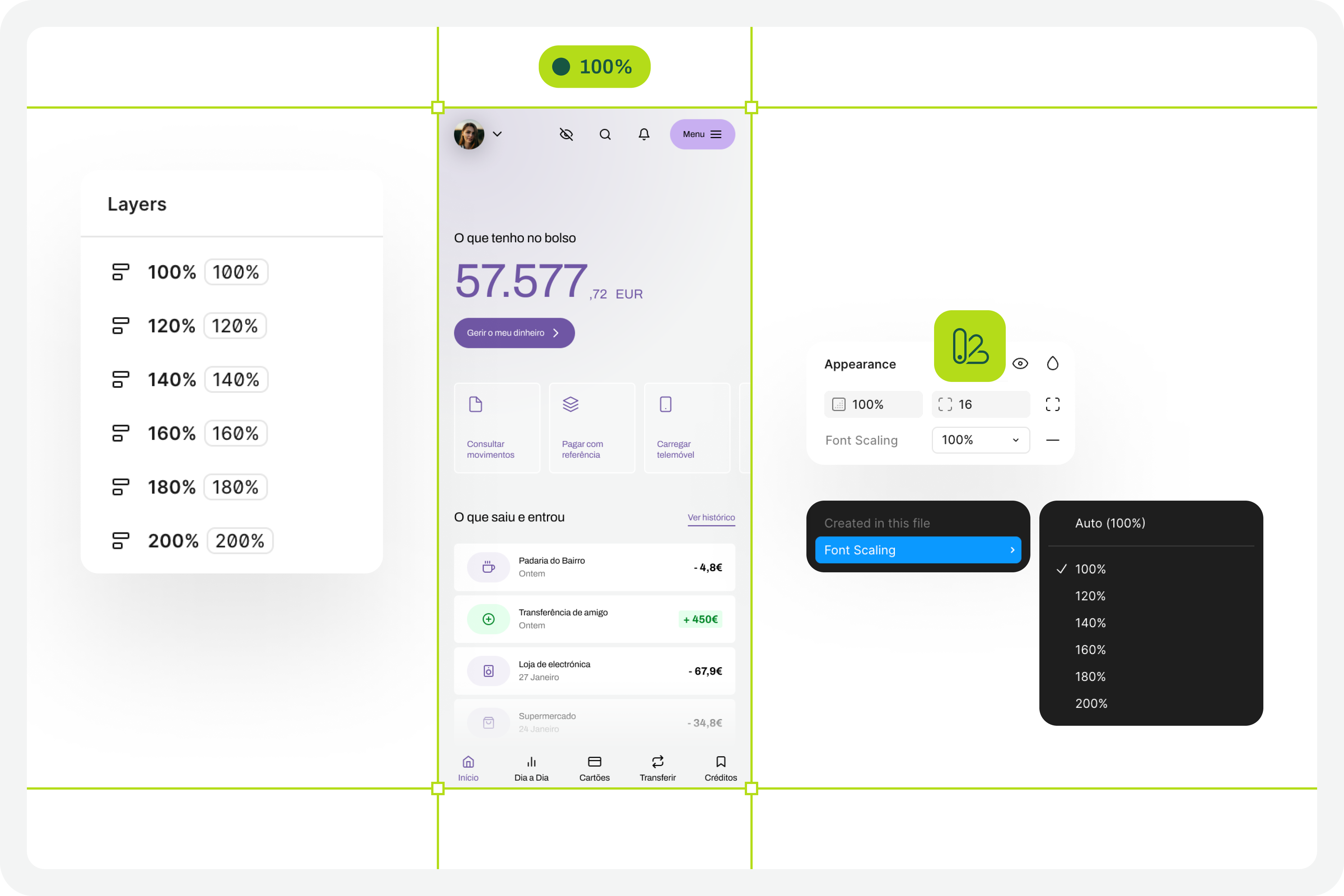

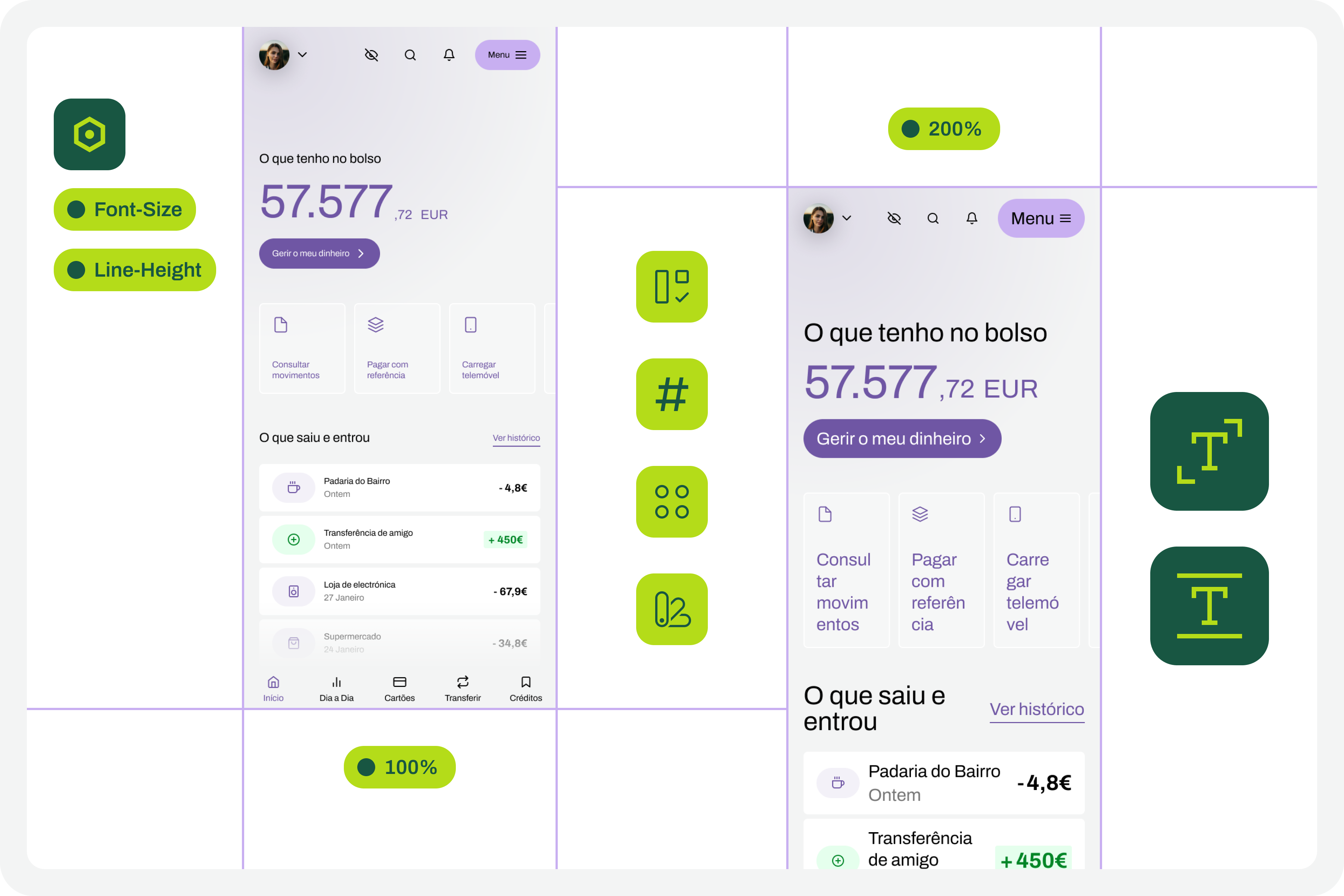

Where Do We Want To Go?

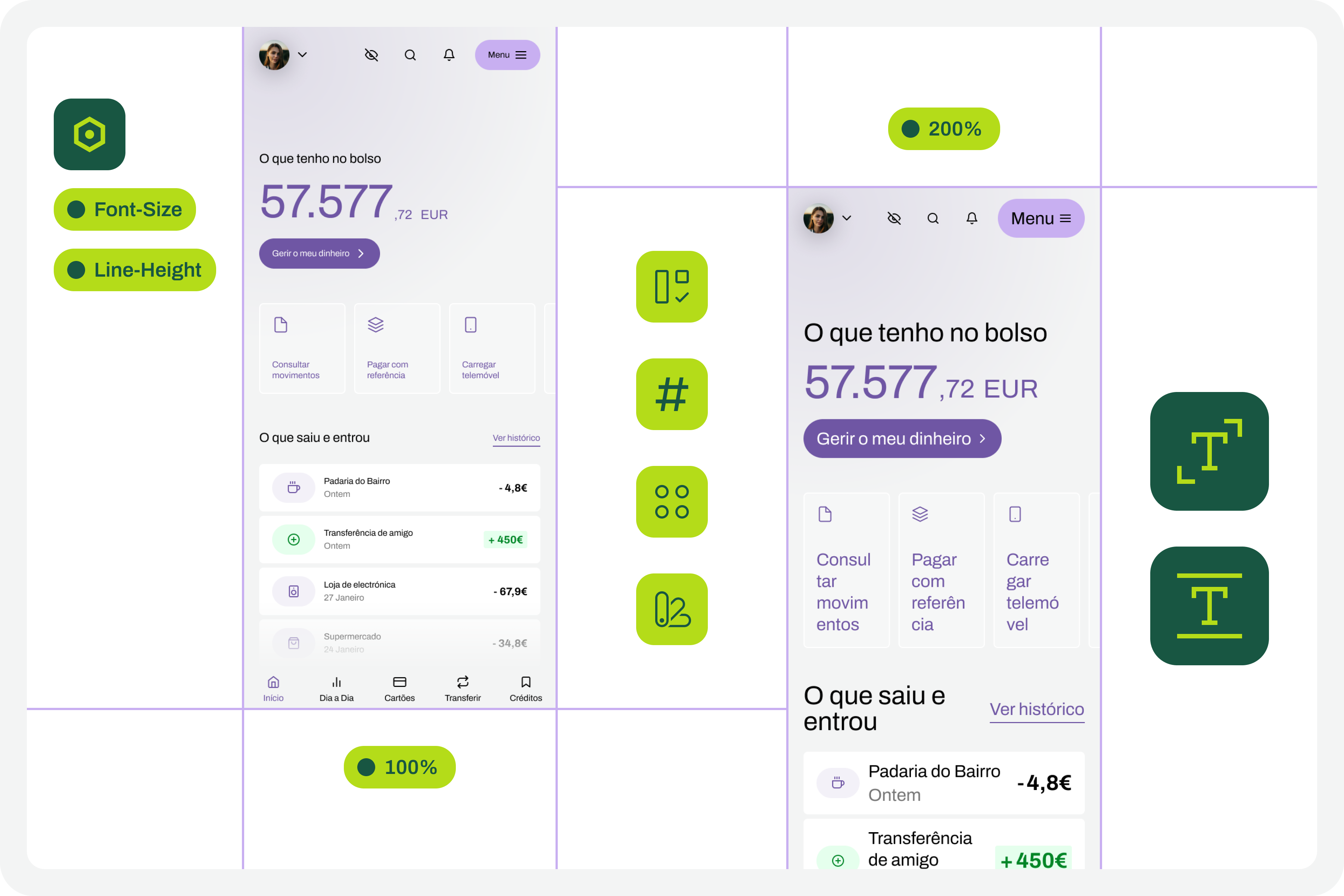

The font size increase test in Figma that we want to perform is simple. We want to have a set of variables available for all the text styles we use in the interface, allowing us to choose whether we want to see the interface with the text at a scale of 100%, 120%, 140%, 160%, 180%, or 200%. As we apply this set of variables (much like applying variables for light and dark mode), we observe the transformations of the text in the interface and understand to what extent adaptations are needed in each version of the interface with different typographic scales.

How Do We Make This Happen?

For this test to go so smoothly, you need to do some groundwork. Design systems can greatly help optimize much of this initial work. But I won’t lie to you. For the test to work well, your design needs to have a very serious level of organization and systematization.

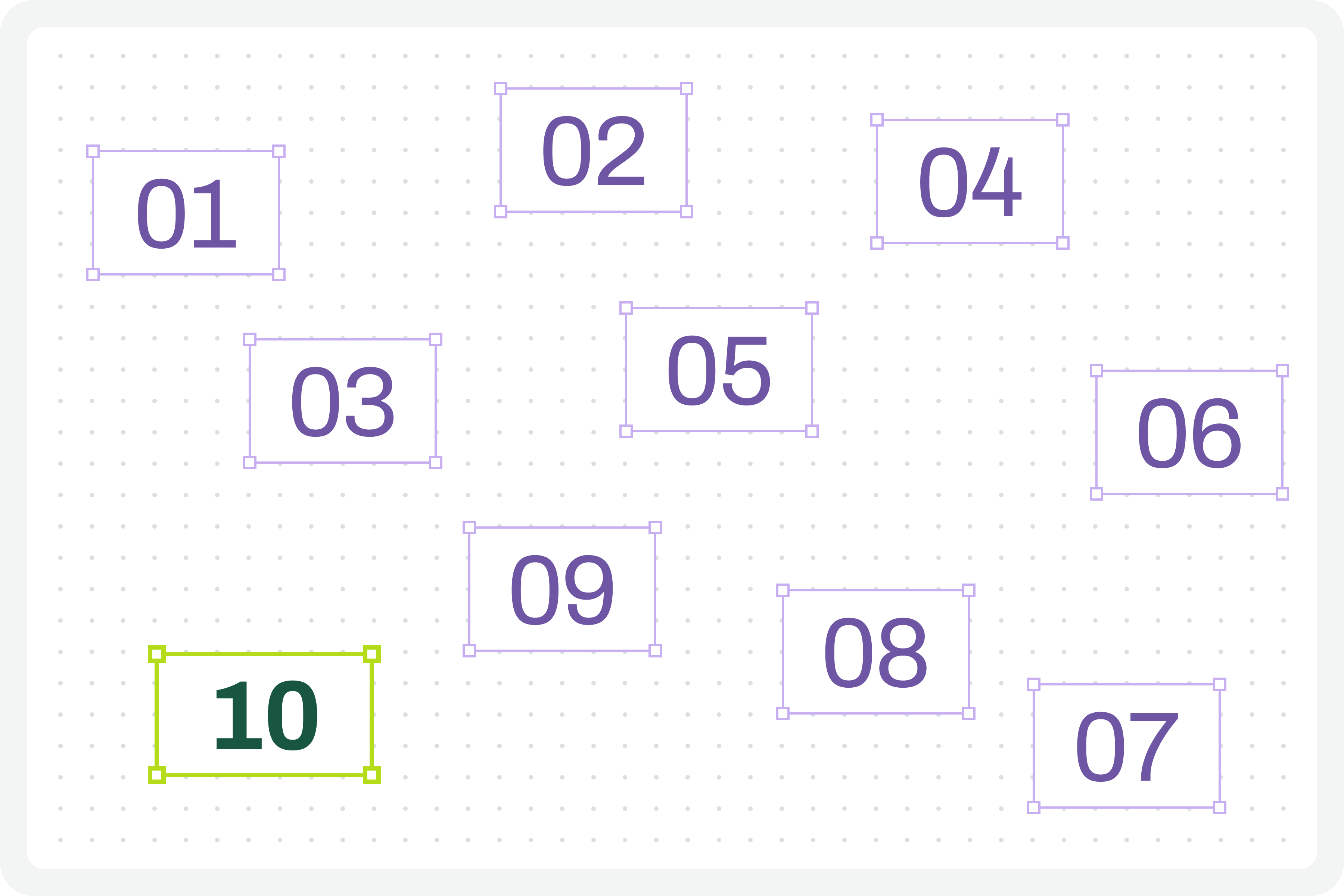

This isn’t really a guide, because each team will have its own work model, and these recommendations can be applied in different ways (and that’s okay). However, for this test to work, it’s important to ensure certain assumptions in the design. To help you phase the implementation of this test model, here are some steps to follow. Step-by-step instructions to guide you in organizing your files and ensuring you can fully execute this test in the simplest and most practical way possible.

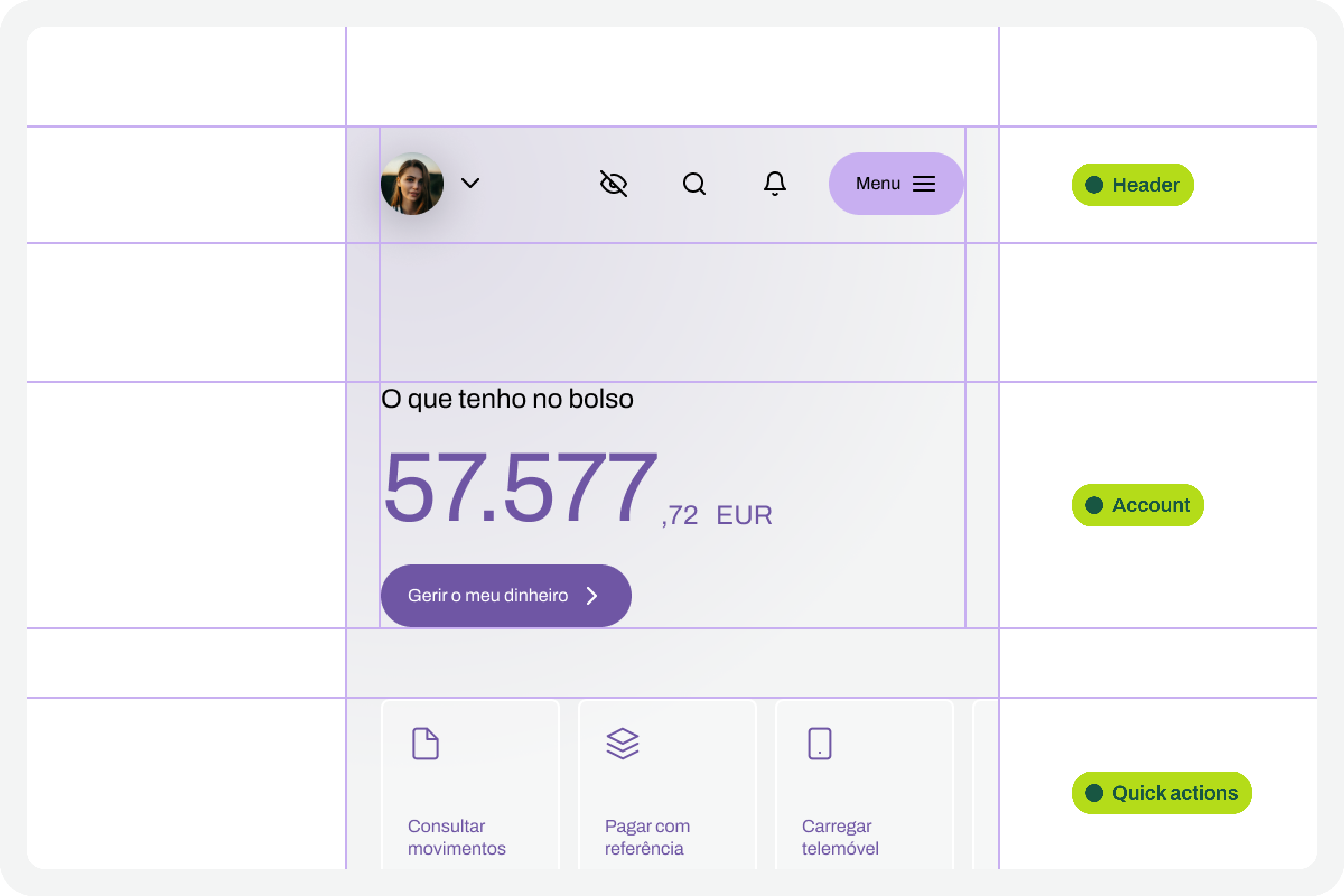

1. Designing The Interfaces

It all starts with the design. Before any testing, the focus should, as it should, be on the design of each interface that we will want to test later. At this stage, there is still no specific concern with the font size increase test that we will perform later. Naturally, all interface design should, from the outset, follow the most basic accessibility recommendations applied to design.

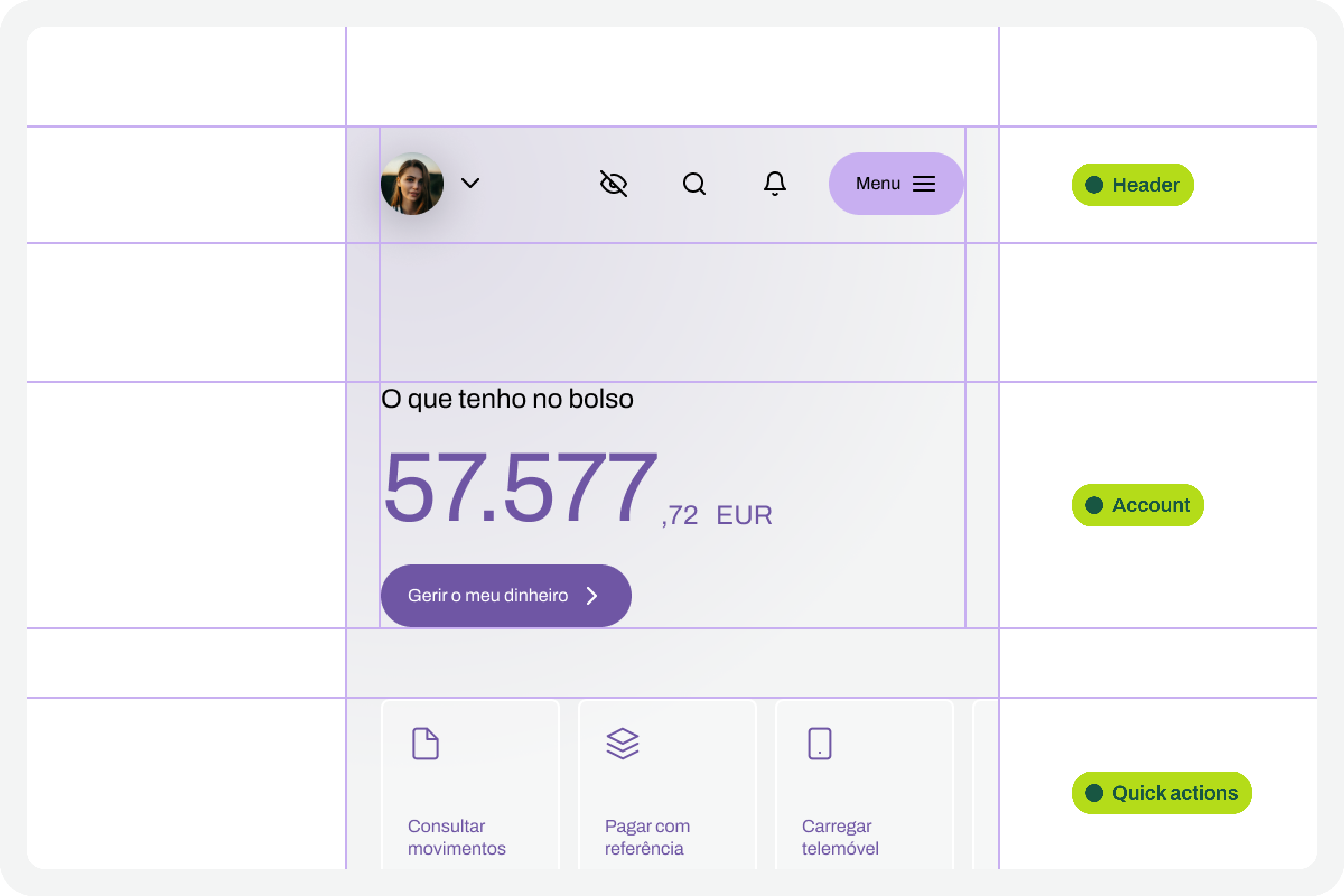

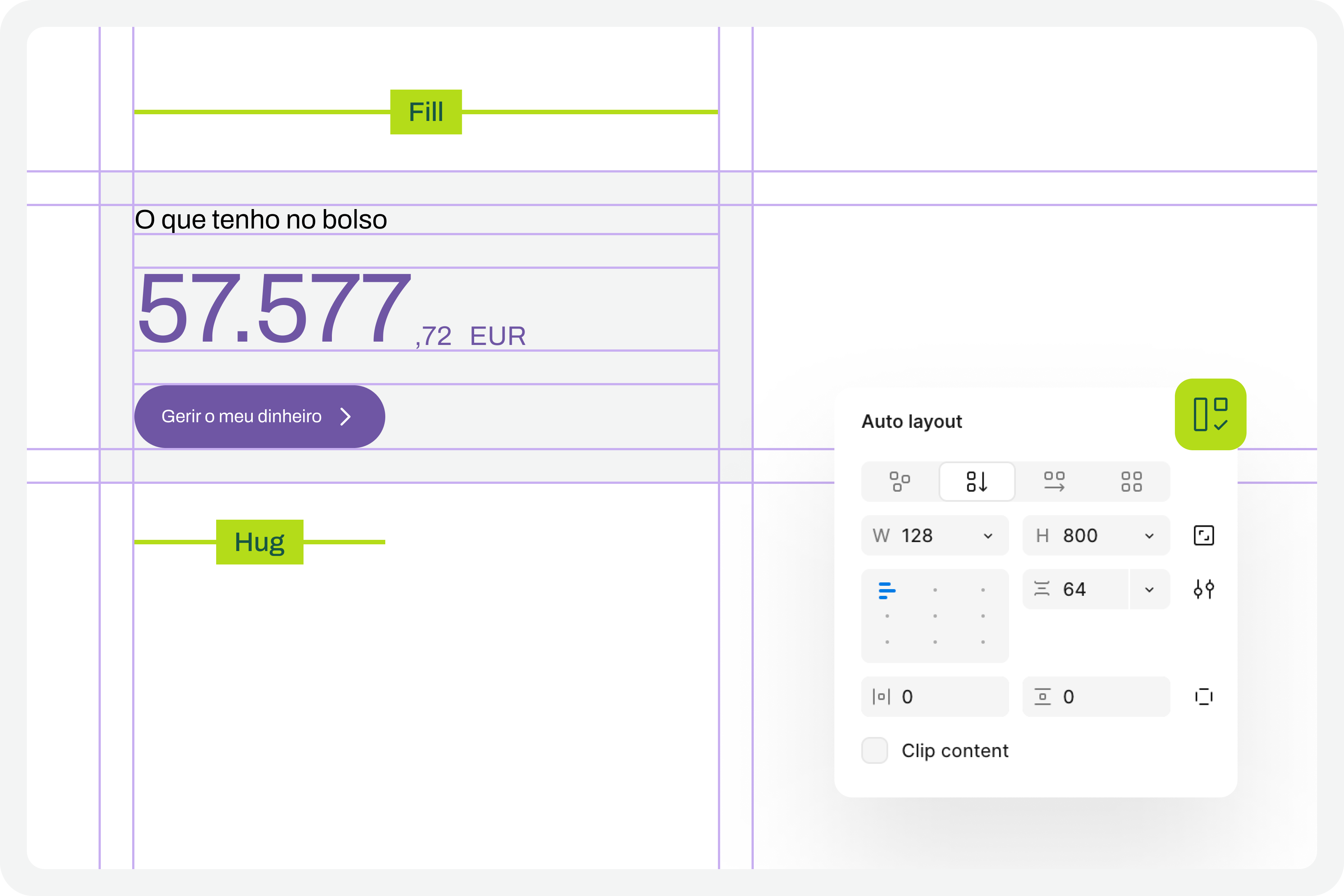

2. Apply Auto Layouts To All Elements

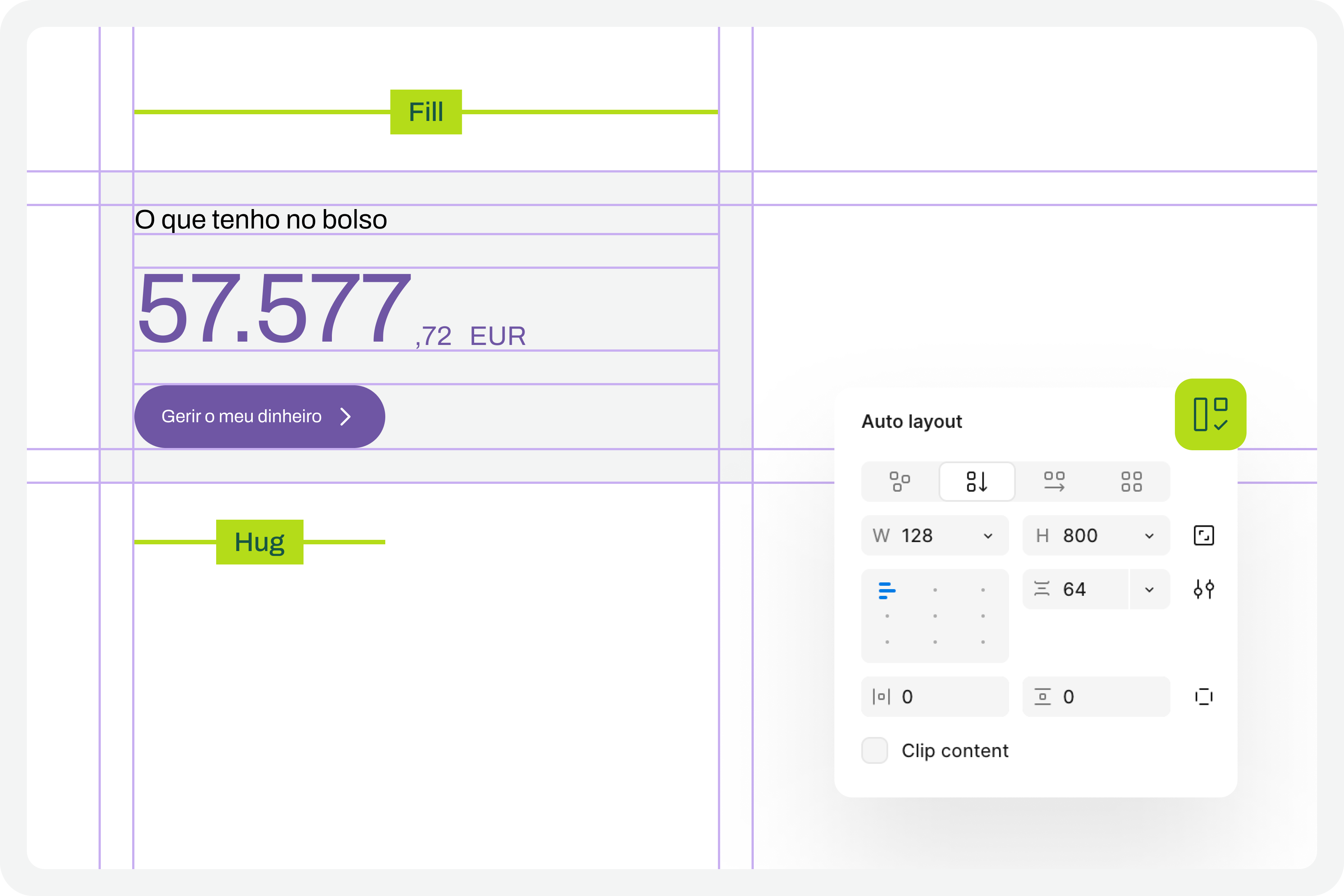

In every screen design you create, you’ll need to ensure you apply auto layouts perfectly. This is a very important step. It’s this consistent application of auto layouts to the entire structure and design elements that will later guarantee the scalability of the interface when we start testing font size increases. You really can’t underestimate this step. If you don’t pay it the attention it deserves, you’ll see when we test typographic scaling in the interfaces, everything breaking down like an elephant in a china shop.

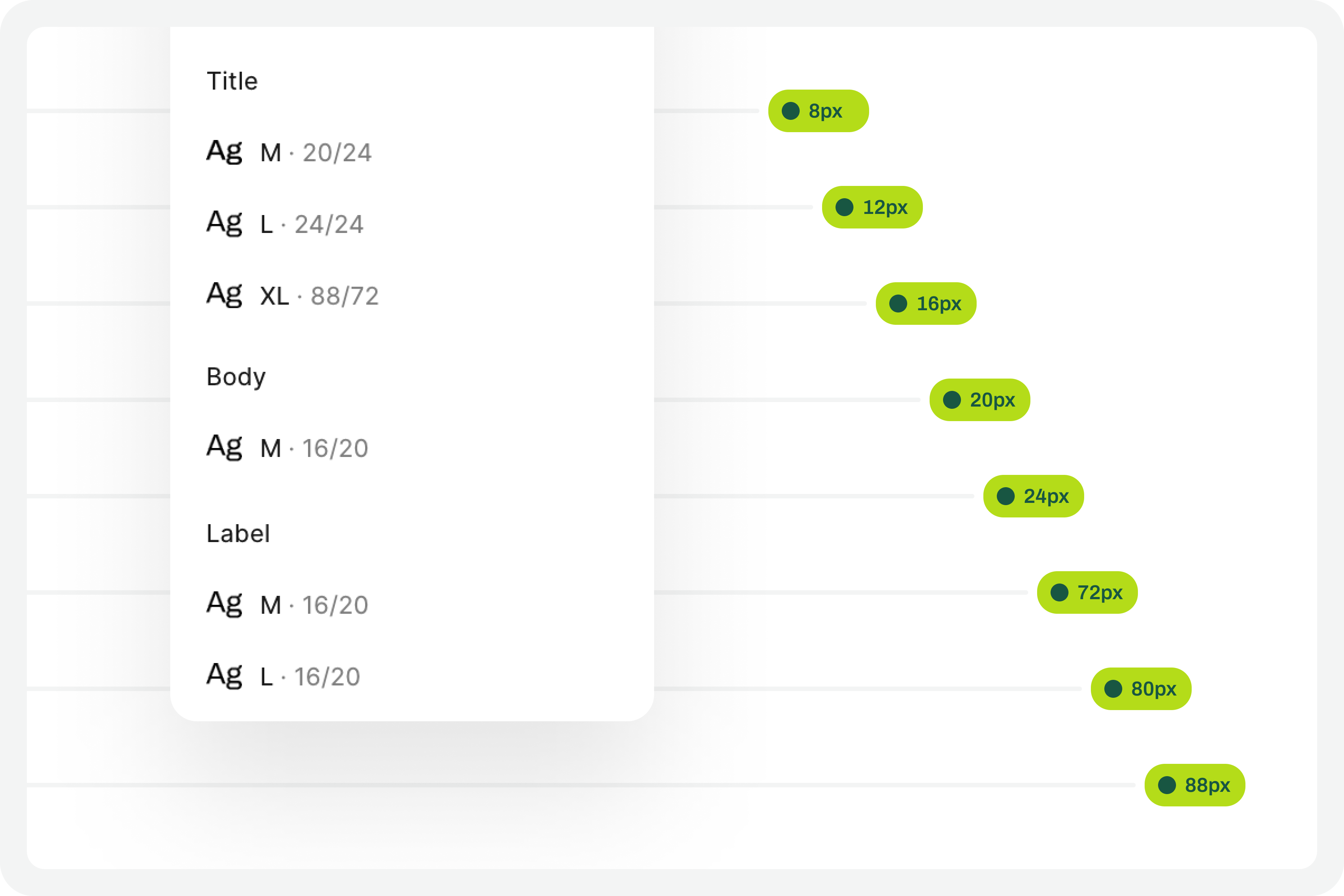

3. Structuring And Applying Text Styles

To perform our font size increase test, we’ll also need you to have applied text styles to each interface design. You probably even started creating them as you were drawing. Great. If you haven’t done so, it’s important that you do it now. For the test to work perfectly, we really need this. Don’t leave any text element in the design without a text style applied.

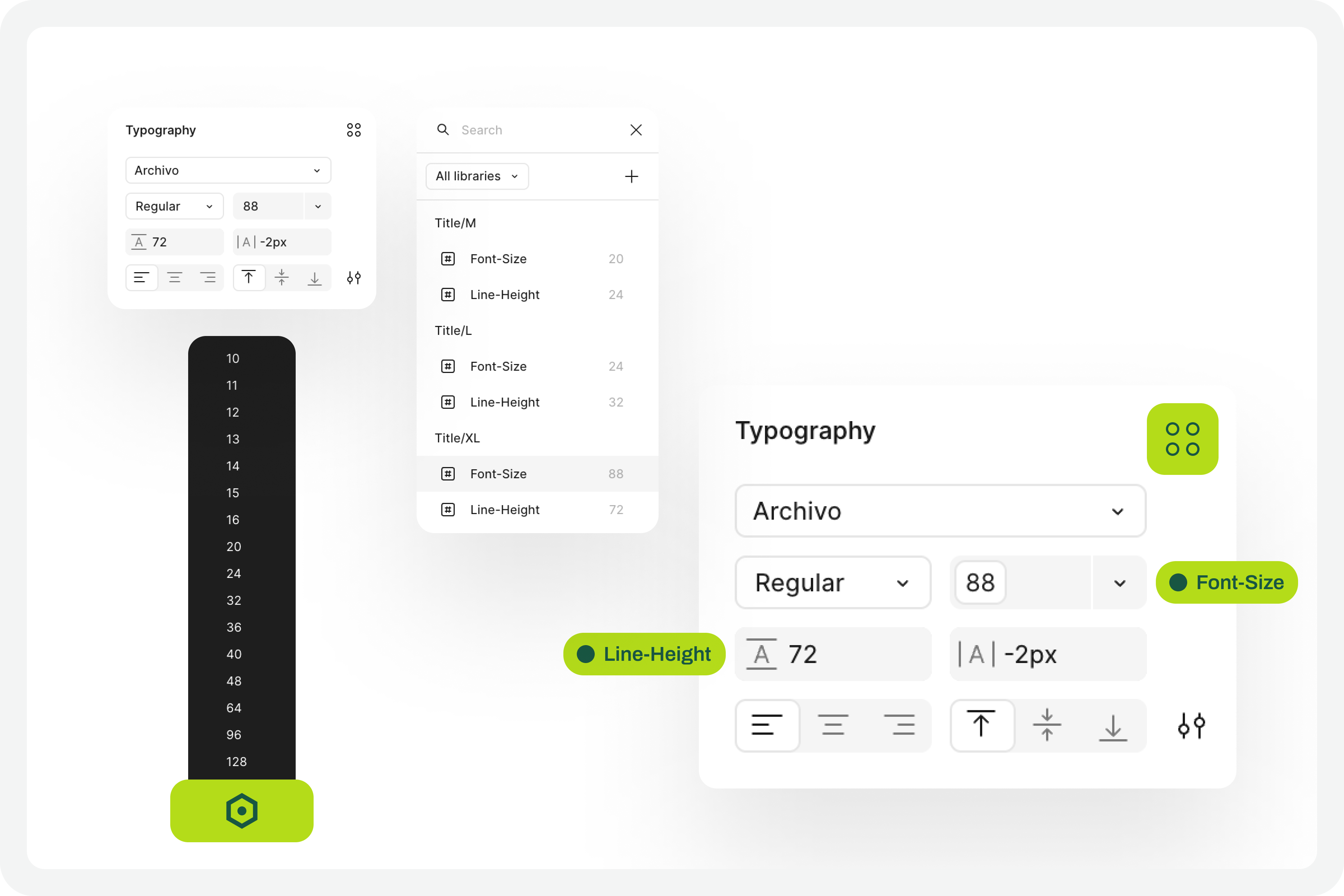

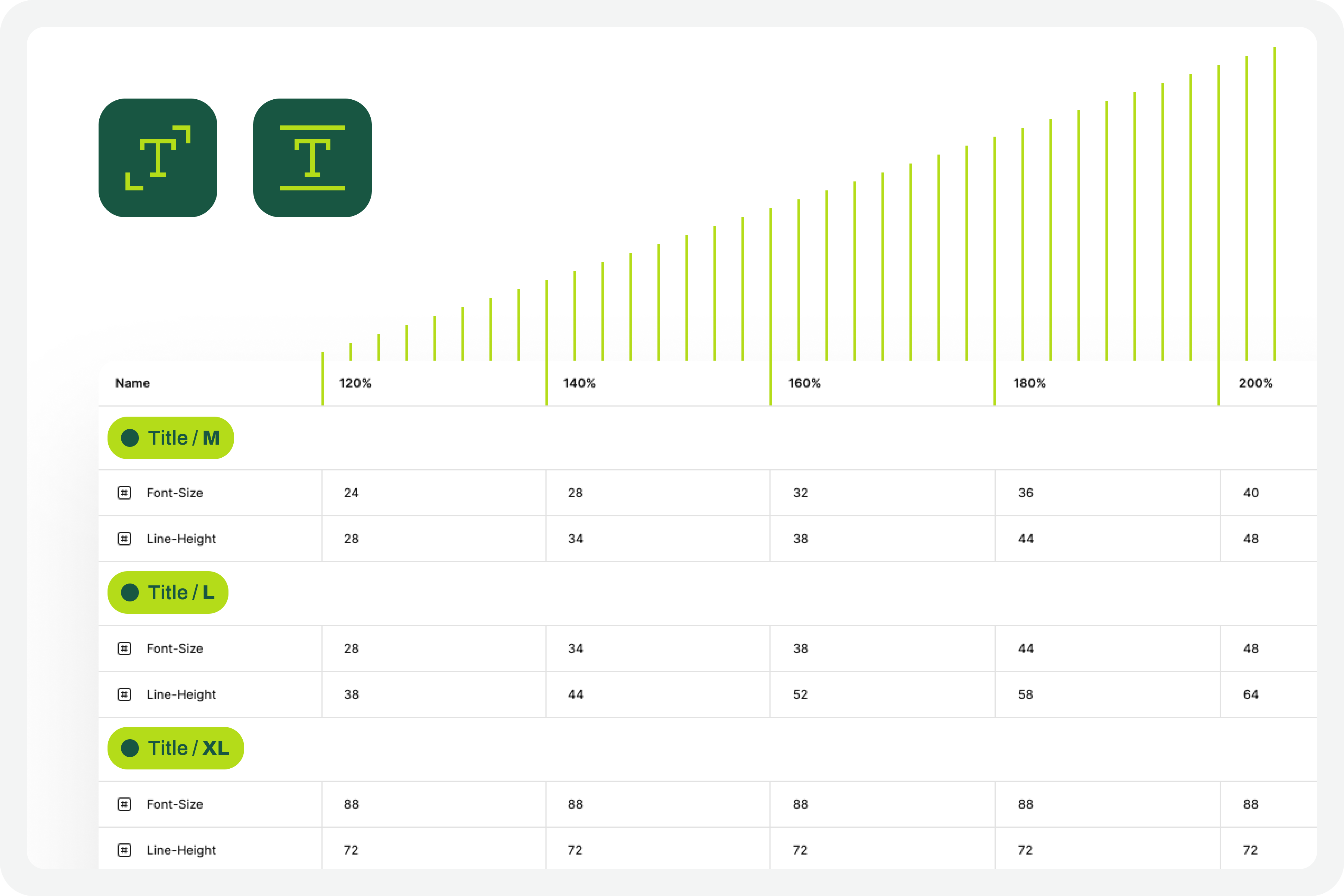

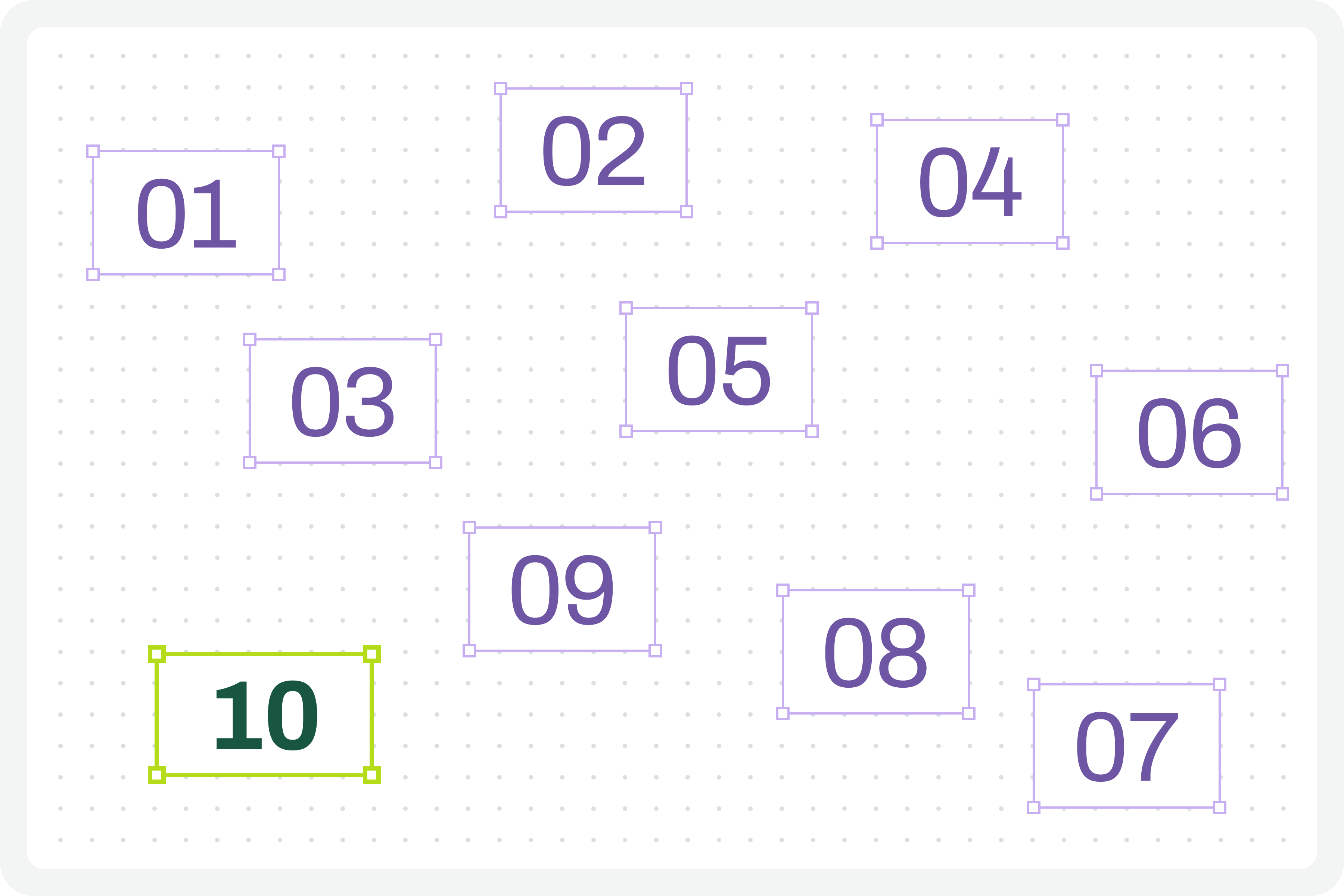

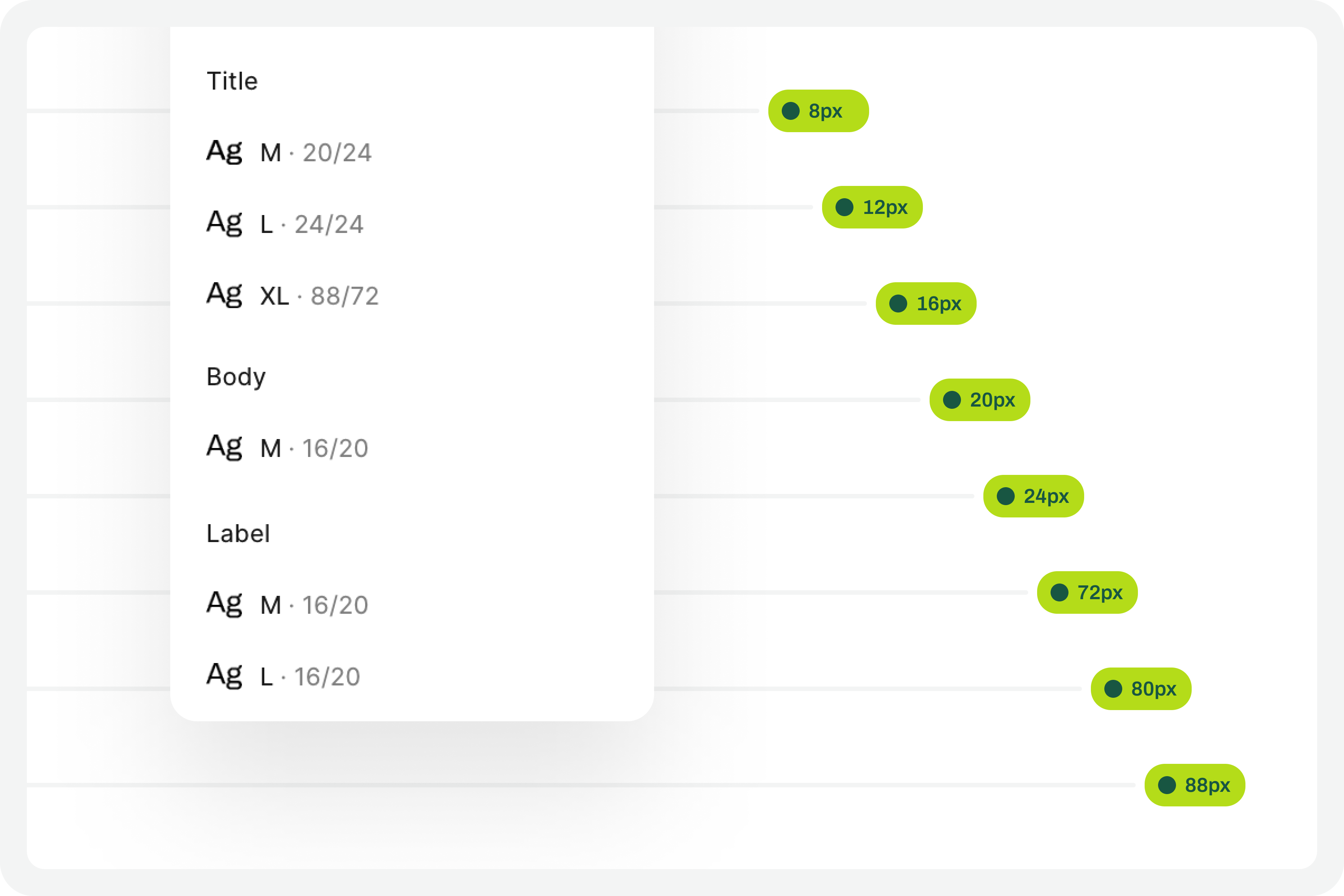

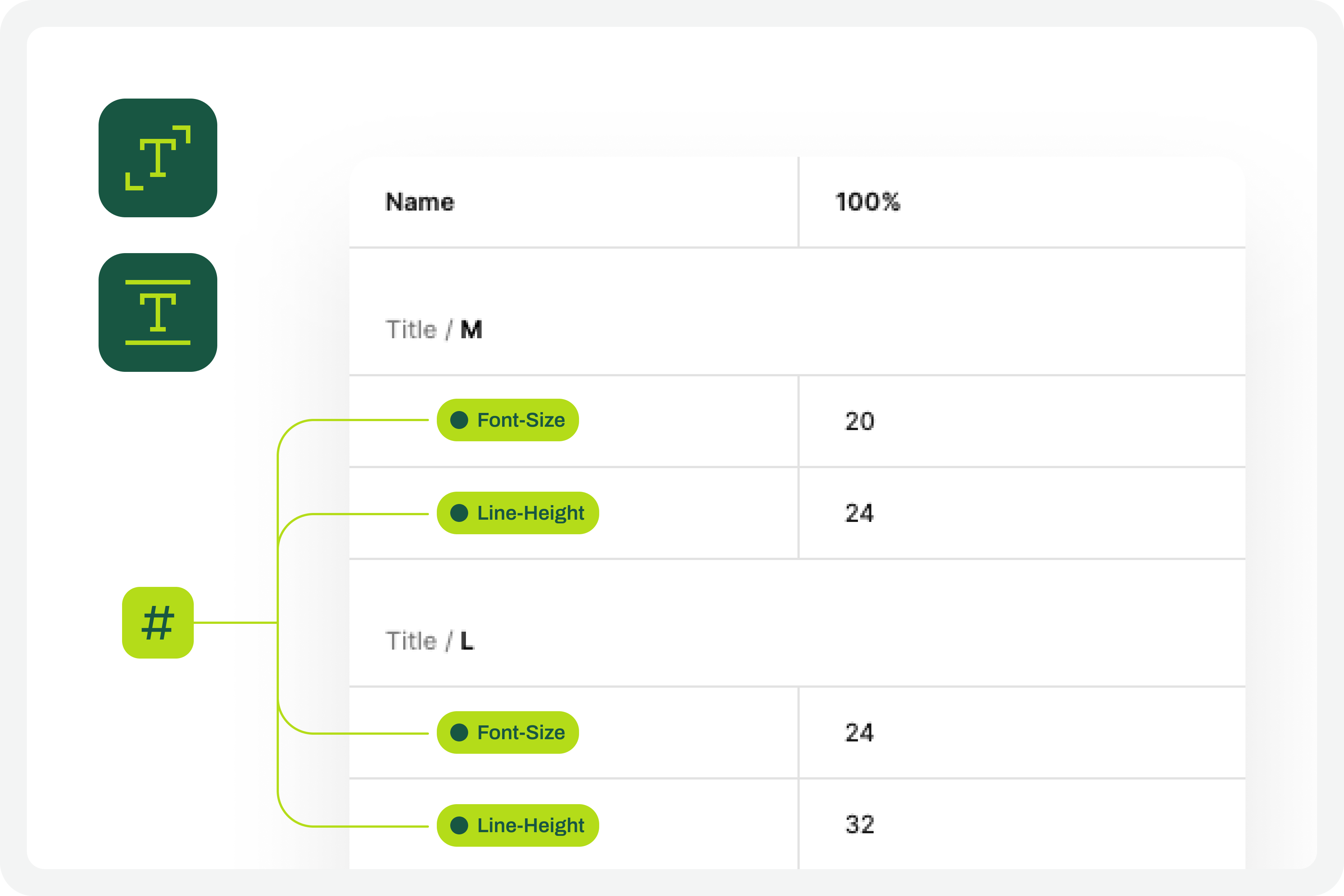

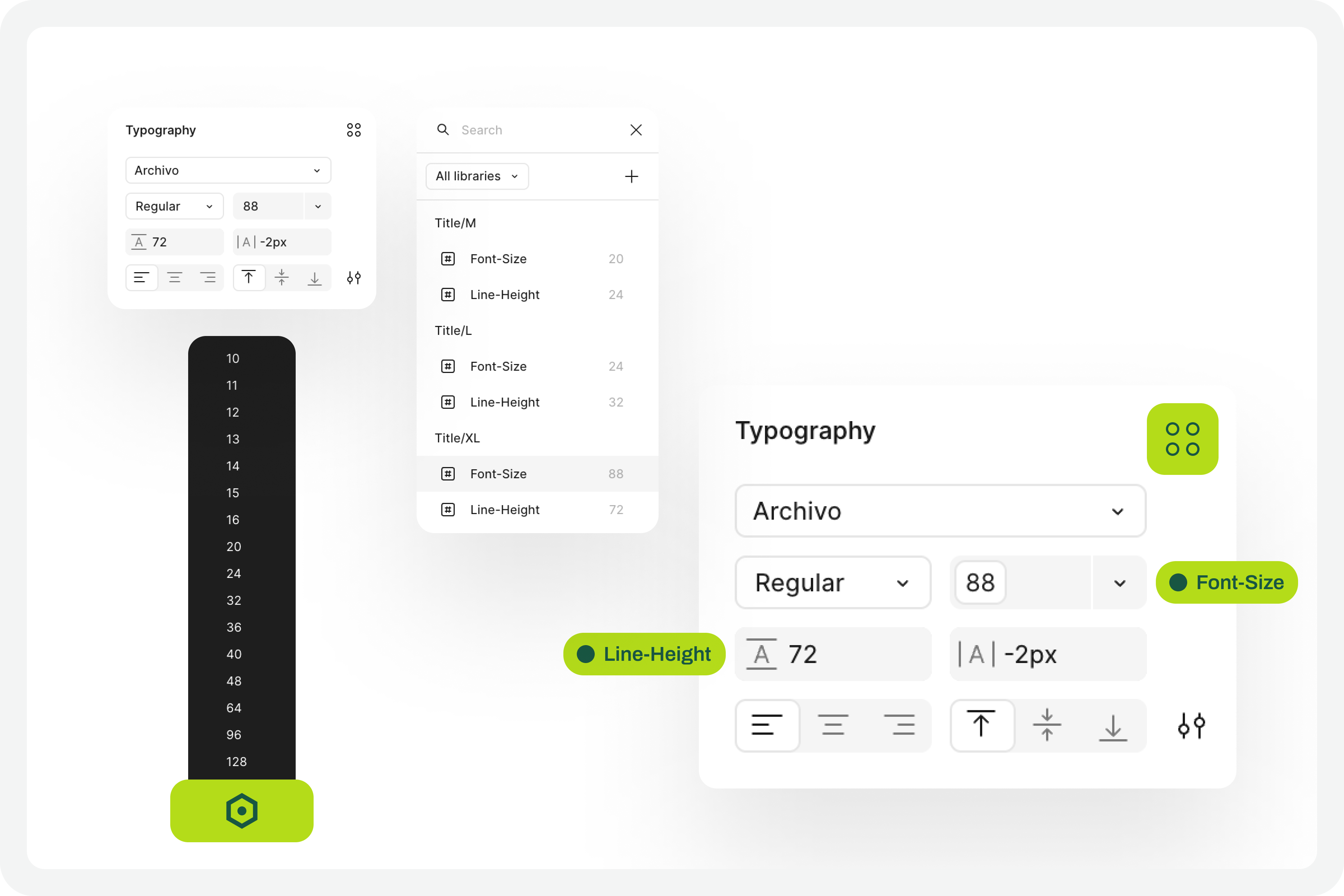

4. Define The Set Of Variables 100%

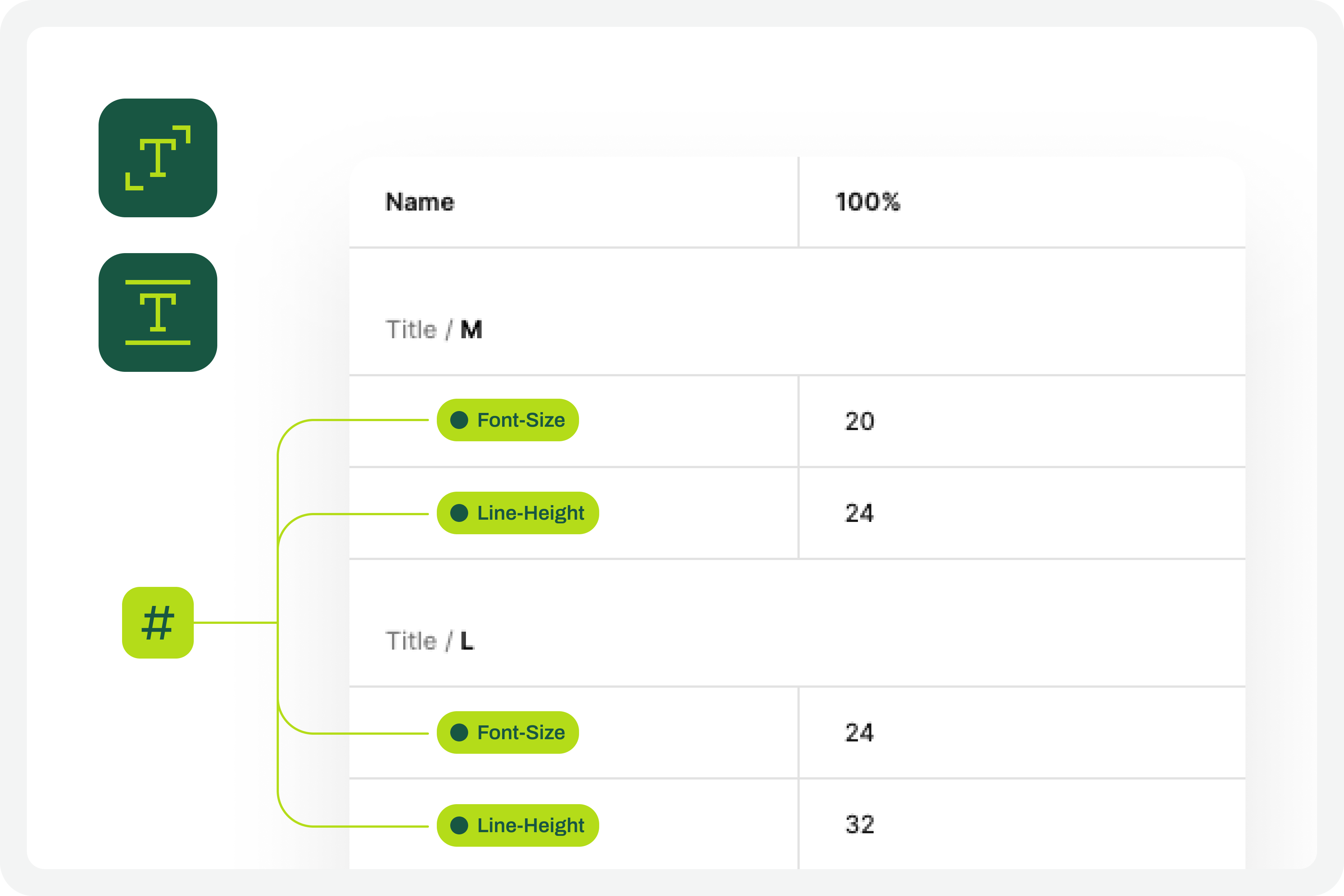

This test forces a fairly high degree of optimization. In practice, this means we will have to use Figma variables for all the characteristics of the text styles we have in the interface. At this stage, you must define Figma “number” variables for at least the font-size and line-height of the text styles you applied to the drawing. With this step, you are defining the font size increase scale values for a 100% visualization model, that is, the initial and reference version of the drawing. It is important that you structure these variables for each text style in the drawing because, subsequently, we will have to consider the enlargement scale of each of these text elements.

5. Apply The Variables To The Text Styles

Having defined the variables for the 100% scale text styles, you must now apply them to the elements of the text styles already created. Don’t forget to apply variables at least to the font-size and line-height characteristics. If you have more typographical variables, that’s fine. But you should at least have variables applied to font-size and line-height. This is really very important.

6. Define The Variables For Increasing The Text Size

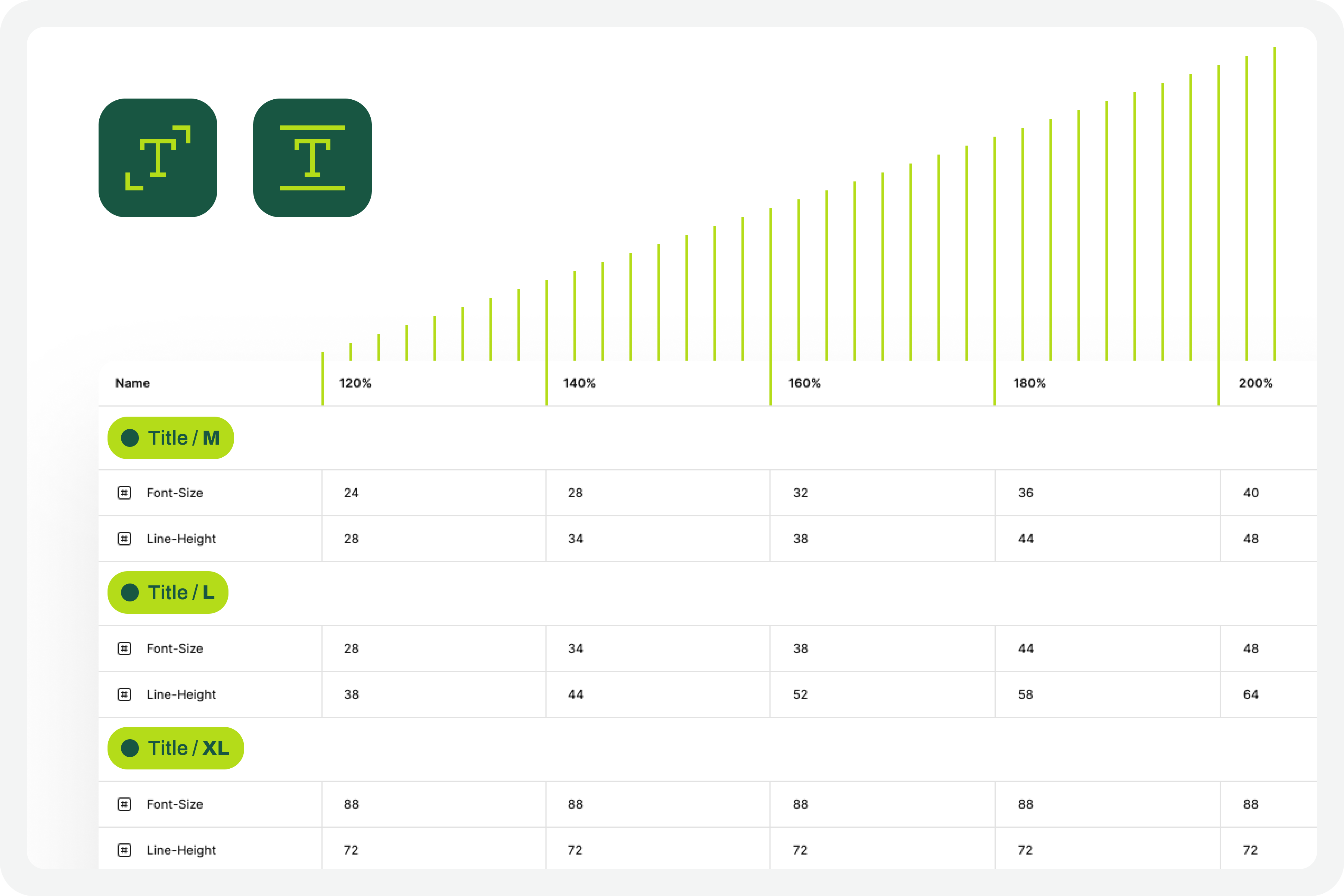

Now that you have the variables applied to the 100% scale text styles, the next step is to create the variables for the other font size increase scales. In practice, you have to create the variables that will tell the system what font size each text style will grow to when the increase scale is 120%, 140%, 160%, etc.

To define the font-size and line-height values, simply multiply the initial value by the scale percentage. For example, if a text style has a font-size of 16px, the size for the 120% scale will be 16 multiplied by 1.2, which gives a result of 19.2. Repeat this calculation for all font-size and line-height values of the font size increase scale percentages you choose.

You can also choose whether or not to apply rounding to the final values. This is an approximate test, and therefore any differences that may arise from rounding will not affect the final perception of the test result.

7. Apply Variables To Different Scale Versions

The moment of truth has arrived. The next step is to understand if we have everything working so that the test runs perfectly. Therefore, you should copy the original interface and apply the set of variables for each of the font size increase rates that make sense to you. Repeat this process for all the font size increase percentages you have defined.

As a suggestion, you can use the 120%, 140%, 160%, 180%, and 200% increase percentages as a reference. If you want to simplify, you can reduce the number of scaling percentages you are working with. Regardless of the number of percentages you are working with, you should always work with the minimum of 100% and 200% scales.

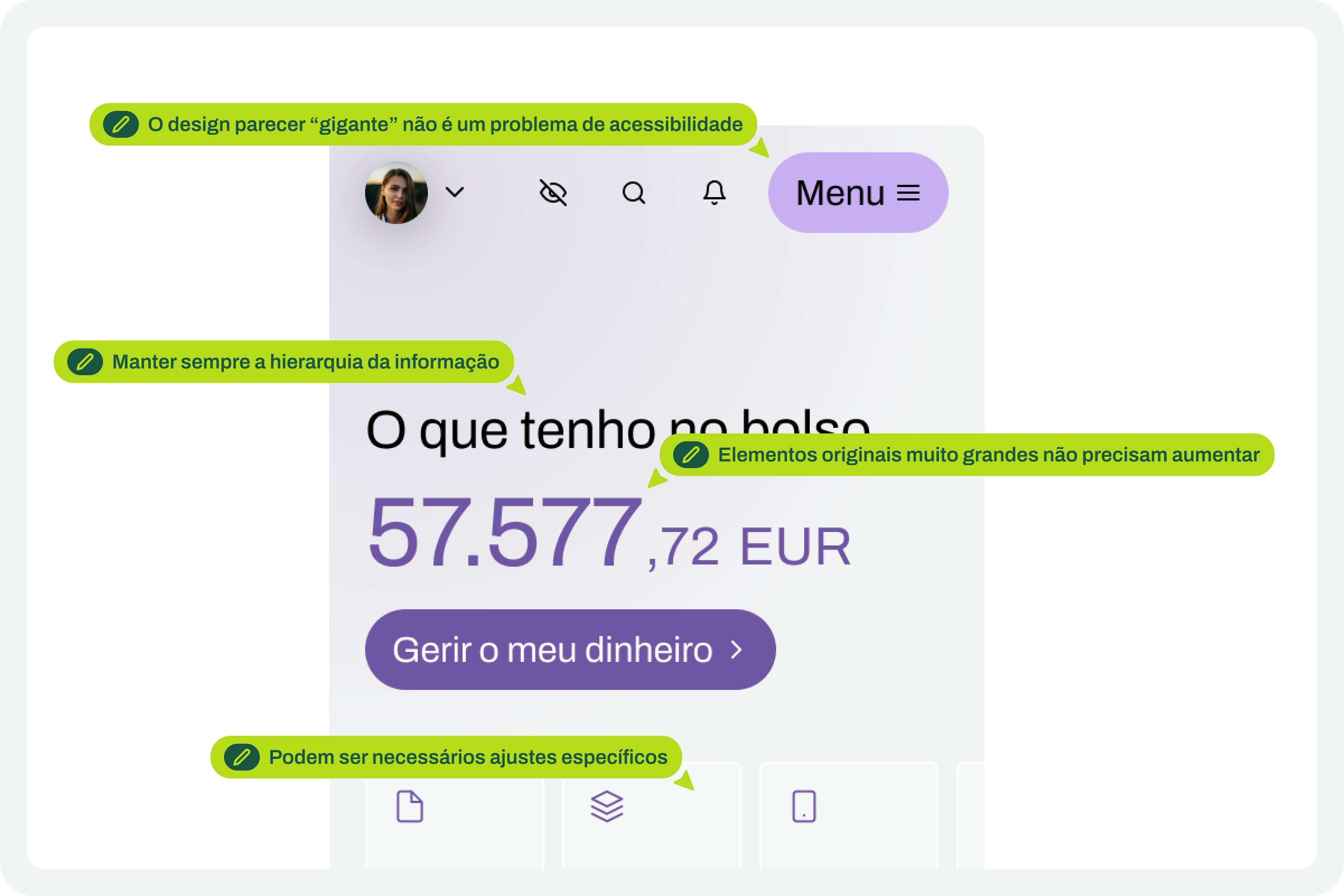

8. Identify Areas For Improvement

By applying different font size increase scales to the same screen, it’s easy to understand where improvements might be needed. This is where the real test of increasing font size in interface design and the most interesting accessibility work begins.

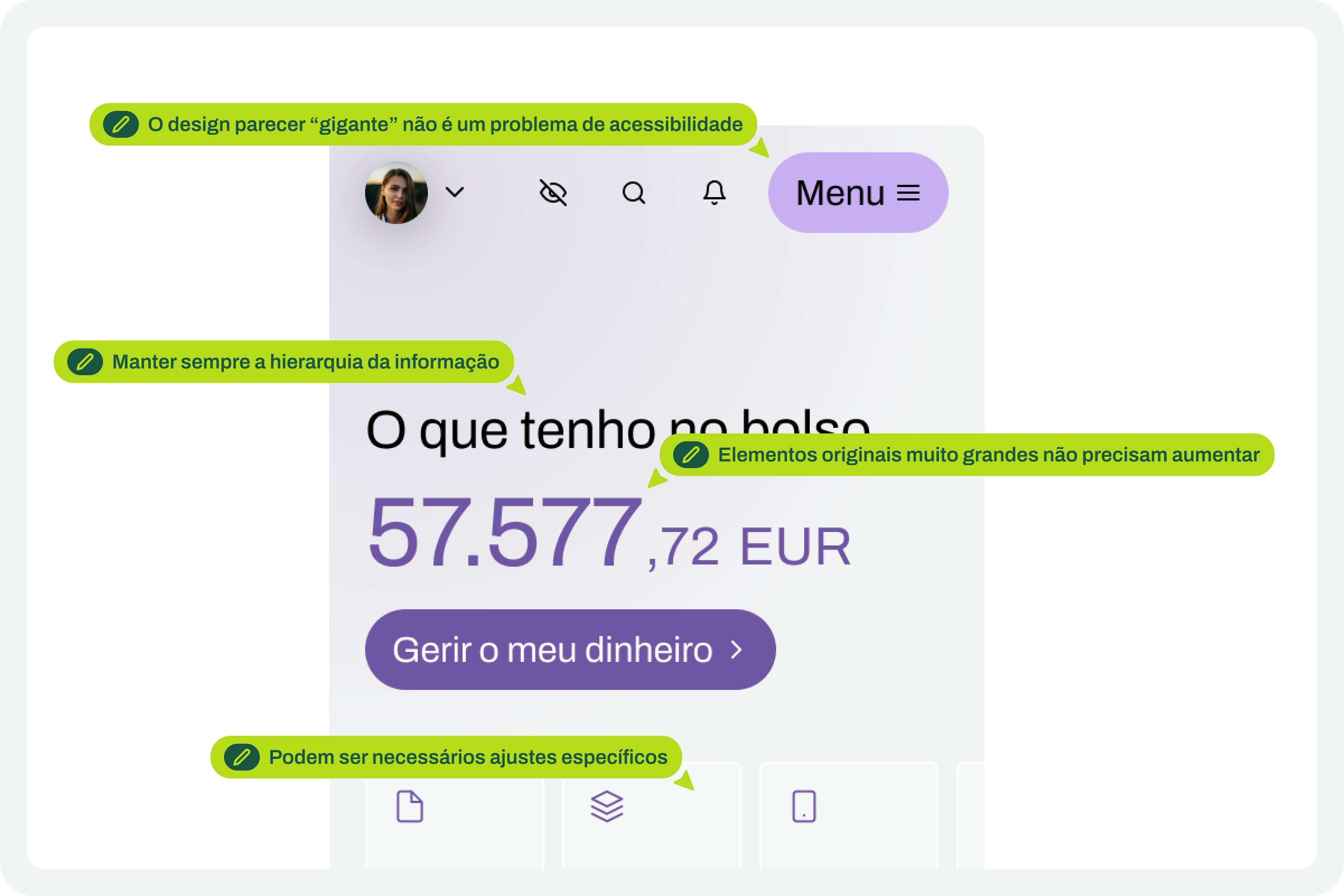

In your analysis of the various screens, keep some important aspects in mind:

- The fact that the text appears gigantic isn’t a problem and doesn’t “ruin” the design. Remember that this can mean the difference between someone being able to use a particular product or service or not.

- An accessibility problem exists when increasing the font size makes it impossible for the user to read certain texts or to activate certain controls.

- For text elements that are already very large, increasing the font size might not make sense. Doing so could make those elements disproportionate, which wouldn’t improve readability (since they are already a good size) and would occupy completely unnecessary space.

- If there are elements that appear to be popping out of the screen, the first step is to confirm how you are applying auto layout. Many design aspects can be easily resolved with the proper use of auto layout.

- Regardless of the scale of font size increase, it is essential to maintain the visual hierarchy of the typography, as this readability is important for perceiving the different levels of information present on the screen.

- This test can help identify elements that may need adjustments directly in the code to function well at a given scale of increase. Not everything can be solved through design alone, and that’s perfectly fine. Accessibility is essentially a team effort.

9. Make Corrections And Adjustments To The Design

Finally, based on the various screens with different text enlargement scales applied, you can make the design changes that make sense. Some of these adjustments may only be necessary in code. In these cases, you document all these suggestions and pass them on to the development team. It is also crucial to reinforce (again) that some of the problems you may encounter in the design can be quickly resolved in the design process, with the simple and correct application of auto-layout properties.

10. Go Back To The Beginning And Repeat The Process

This is a cyclical approach. This means you should repeat these steps, or variations thereof, as many times as necessary throughout the project. It’s natural that, over time and with process optimization, some of these steps will cease to make sense. That’s absolutely not a problem. But the most important thing to realize here is that accessibility and this process of testing font size increases shouldn’t be done just once, and that’s it. It’s a test to be done many, many times throughout the day-to-day work of each project and team.

The Role Of Design Systems

At first glance, this list of steps might seem like a complex exercise. But it’s not. This is because the vast majority, if not all, of these steps are easy to execute in any context where a design system exists. In fact, design systems have become an unavoidable standard in the Product Design industry. We can discuss what each team calls a design system, but the truth is that it’s very difficult today to find a Product Design team that doesn’t have, at the very least, a minimally structured library of components and styles.

With this foundation, whether more or less documented, it’s very easy to apply this type of font size increase test using Figma variables. Furthermore, if your design system already has, for example, structured variables for light and dark mode, it means you’re already applying the exact same principles we used to perform this test. So, nothing new.

Working with design systems involves a level of structuring and organization that is also very useful for creating this type of test. There’s a myth that design systems limit creativity. This is not true. Design systems help solve the “bureaucratic” part of design, so we can actually have more time for what matters: in this case, testing accessibility and building more and more products and services that are truly accessible to the greatest number of people.

Example File

It’s always easier to see an example than just read a description of a process. If this is true in many disciplines of knowledge, in design, this premise makes even more sense. Therefore, in this Figma file, freely published and openly available to the community, you’ll find a practical example of the entire testing process described here. Remember that this is just an example. There may be countless ways to perform this type of test within the context of a Figma file.

Be sure to look at this approach with a critical eye. It’s a suggestion for testing font size increases that follows a specific process. Despite this, the approach should be adapted to your team’s specific reality, processes, and level of maturity. Simply copying formulas from other teams without understanding if they make sense in our own context is a sure way to make accessibility efforts disproportionate. Every situation is unique. This approach attempts to simplify accessibility work as much as possible in this specific context. And remember: if something happens, however small, it’s a step forward, not a step backward. And that should be celebrated by everyone on the team.