Experienced plugin developers read IntelliJ Platform source code 240% more frequently than new developers do.

This was one of the most striking findings from our 2025 survey of plugin developers, and it’s the starting point for understanding how developers’ needs evolve as they gain experience with the IntelliJ Platform SDK.

Note: This survey captured historical usage patterns. References to the community Slack channel and Plugin Development Forum reflect resources that have since been replaced by the JetBrains Platform Discourse forum.

The experience gap revealed

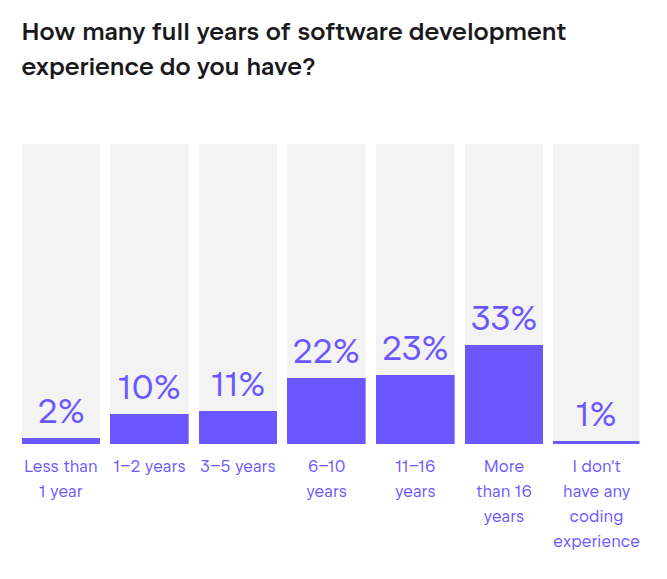

In March 2025, we surveyed plugin developers about their experiences with the IntelliJ Platform SDK. The results revealed a community that is both highly skilled and remarkably diverse. 77% of respondents have six or more years of general software development experience.[1]

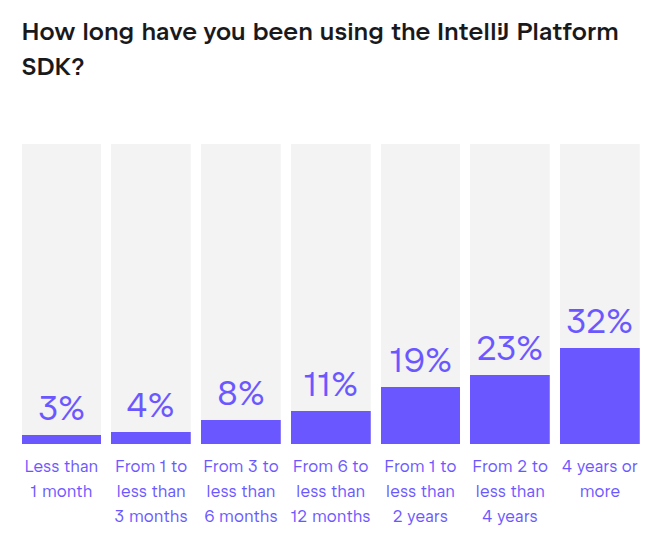

Yet 26% have less than one year of experience with the IntelliJ Platform SDK specifically. This combination creates an interesting dynamic: seasoned developers encountering a new and complex platform.

Experience levels play a big role in how developers approach plugin development. The contrast was especially striking when we compared developers with four or more years of SDK experience to those with less than one year. 54% of experienced developers reported reading Platform source code “very often”, compared to just 16% of new developers, suggesting that these two groups work in fundamentally different ways.

The gap extends beyond source code. Experienced developers were 460% more likely than new developers to use the community Slack channel “often” or “very often” (28% vs. 5%). Experienced developers are also 140% more likely to prioritize improvements to API documentation comments than new developers are (41% vs. 17%). These are not minor variations – they point to fundamentally different workflows, different challenges, and different needs.
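The relative figures above can be recomputed from the quoted shares. The following is a minimal sketch, not the survey team's analysis code: the class and method names are hypothetical, and it works from the rounded percentages in the text, so its results only approximate figures that were derived from exact values.

```java
import java.util.Locale;

/** Recomputes the relative comparisons from the rounded shares quoted in the text. */
final class RelativeDifference {

    /** Returns how many times larger {@code a} is than {@code b}. */
    static double timesAsLikely(double a, double b) {
        return a / b;
    }

    /** Returns the relative increase of {@code a} over {@code b}, in percent. */
    static double percentMore(double a, double b) {
        return (a - b) / b * 100.0;
    }

    public static void main(String[] args) {
        // Each call takes (experienced share, new-developer share) in percent.
        System.out.printf(Locale.ROOT, "Source code, very often: %.1f%% more%n", percentMore(54, 16));
        System.out.printf(Locale.ROOT, "Slack, often or very often: %.1f%% more%n", percentMore(28, 5));
        System.out.printf(Locale.ROOT, "API comment priority: %.1f%% more%n", percentMore(41, 17));
    }
}
```

With the rounded inputs, the three comparisons come out at 237.5%, 460%, and roughly 141%, which the article reports as 240%, 460%, and 140%.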

The challenges new developers face

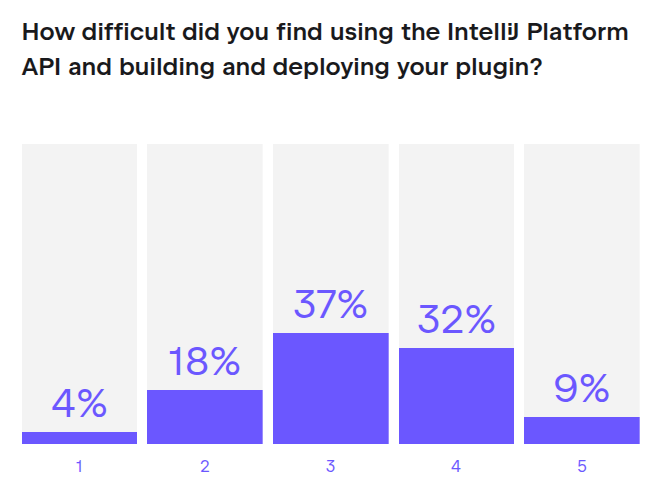

For developers new to the IntelliJ Platform SDK, the learning curve is notably steeper than for their experienced counterparts. When asked to rate the difficulty of the Platform API on a scale of 1 to 5, 46% of new developers chose “4” or “5”, compared to 36% of experienced developers. The difference may seem modest, but it compounds across every activity. The chart above shows the difficulty of using the IntelliJ Platform API and of building and deploying plugins across all respondents. With “1” and “2” accounting for only 22% combined, it’s clear that most users find the platform complex and challenging.

The most significant challenge is navigating a large codebase. 78% of new developers find this challenging or very challenging, compared to 49% of experienced developers. One respondent captured the frustration well:

“Documentation is poorly structured… around 30 docs each, 20 lines long… each one pointed to another. I wanted to have a single doc with all the information required from top to bottom.”

Understanding Platform-specific terminology presents another hurdle. Terms like EDT (Event Dispatch Thread), PSI (Program Structure Interface), and others are unfamiliar to newcomers. Overall, 49% of developers find this terminology challenging, and the percentage is higher among those with less experience. As one new developer put it:

“I still don’t really understand EDT and threading.”

Maintaining compatibility across multiple target versions adds yet another layer of complexity. 52% of developers overall find this challenging, and for new developers the burden is especially heavy. They are learning the API while simultaneously trying to understand how it has changed across versions.

How do new developers cope with these challenges?

They turn to different resources. New developers use Stack Overflow 4 times more frequently than experienced developers do (24% use it often or very often, compared to 6%), and YouTube 5.5 times more frequently (11% vs. 2%). Offering beginner-friendly content, step-by-step tutorials, and answers to common questions, these platforms fill a gap that the official documentation, by its nature, cannot fully address.

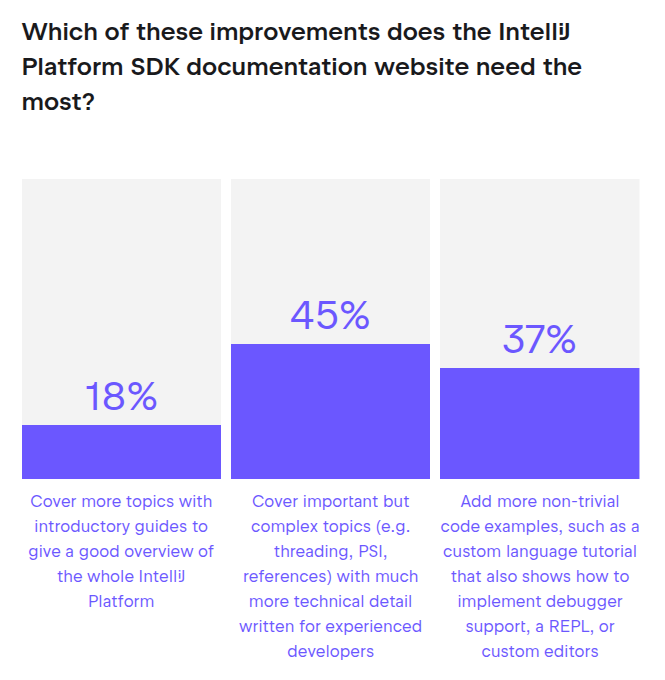

When asked what type of documentation improvements they would prioritize, 36% of new developers chose introductory guides, compared to just 10% of experienced developers. This is a 3.6x difference. New developers need onboarding. They need context. They need to understand the big picture before diving into technical details.

The chart above shows how these different priorities combine across the full community.

The difficulties new developers face are real, but they are also surmountable. Understanding that 78% of new developers struggle with codebase navigation helps normalize the experience. It is not a personal failing. It is a shared challenge inherent to learning a large and complex platform. One respondent noted:

“Things are still occasionally rough, but they are much, much better than they used to be!”

That progress is ongoing, and comments like this make us proud that the day-to-day experience is already meaningfully better for (at least some of) the community.

What experienced developers do differently

Experienced developers do not avoid the challenges that new developers face. They have simply developed different strategies for addressing them. Chief among these strategies is reading the Platform source code directly. 54% of experienced developers do this very often, compared to only 16% of new developers. Only 2% of experienced developers rarely read the source code, compared to 16% of new developers.

This difference reflects a shift in how experienced developers think about documentation. They no longer need introductory guides or high-level overviews. Instead, they want technical detail on complex topics. 55% of experienced developers prioritize this type of content, compared to 31% of new developers. The source code and API comments themselves become a form of documentation, one that provides the most accurate and complete picture of how the Platform works.

Experienced developers also engage more actively with the community. 28% use the Slack channel often or very often, compared to 5% of new developers. They ask tough questions, share solutions to complex problems, and contribute to discussions about API design. This higher level of engagement reflects both greater confidence and deeper investment in the Platform.

When it comes to improvement priorities, experienced developers focus on different areas than new developers do. 41% want better API documentation comments, compared to 17% of new developers. If you are reading the source code regularly, the quality of inline comments matters a great deal. Comments that explain the “why” behind design decisions, clarify edge cases, or point to replacement APIs when something is deprecated become essential tools.

One experienced developer made this point explicitly:

“If some API is deprecated, please always add Javadoc pointing to the new API.”

Another echoed the sentiment:

“More clarity for how to replace usages of @Deprecated interfaces.”

These are not requests for more introductory material. They are requests for more precision and more context within the code itself.
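To illustrate what these respondents are asking for, here is a minimal sketch of a deprecated method whose Javadoc points to its replacement. `GreetingService` and both `greet` overloads are hypothetical names invented for this example; they are not part of the IntelliJ Platform.

```java
/** Hypothetical service used only for illustration; not an IntelliJ Platform API. */
final class GreetingService {

    /**
     * Builds a greeting that always starts with "Hello".
     *
     * @deprecated Use {@link #greet(String, String)} instead, which takes the
     *             salutation explicitly. This overload simply delegates to it.
     */
    @Deprecated
    static String greet(String name) {
        return greet("Hello", name);
    }

    /** Builds a greeting such as "Hello, Ada!" from a salutation and a name. */
    static String greet(String salutation, String name) {
        return salutation + ", " + name + "!";
    }

    public static void main(String[] args) {
        // Callers migrate by passing the salutation that the old overload implied.
        System.out.println(greet("Hello", "Ada"));
    }
}
```

The `@Deprecated` annotation makes the compiler and the IDE flag each usage, while the `@deprecated` Javadoc tag names the replacement and explains how it differs, so a developer reading the source does not have to search for it.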

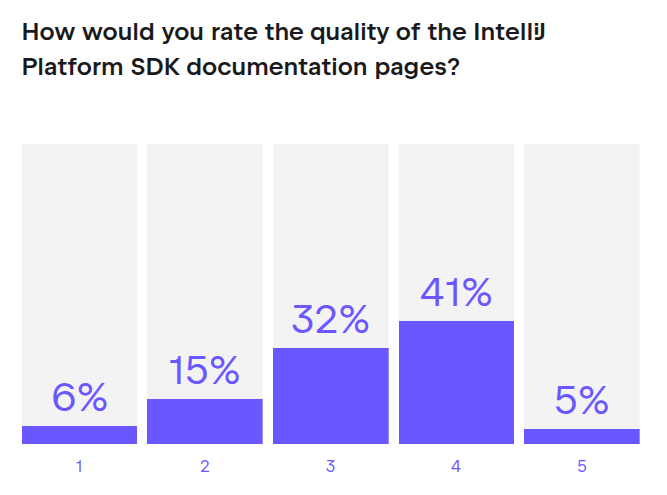

The overall quality ratings for SDK documentation pages show a mixed picture. 46% of respondents rate the documentation “4” or “5”, while another 33% place it in the middle.

Interestingly, experienced developers rate documentation quality higher than new developers do. 62% of experienced developers rate it “4” or “5”, compared to just 27% of new developers. This might seem counterintuitive. If experienced developers are more critical and more engaged, why would they rate quality higher?

The answer likely lies in what each group is looking for. New developers need comprehensive onboarding, clear structure, and step-by-step guidance. When they do not find it, they rate quality lower. Experienced developers, by contrast, are looking for technical depth and precision. The existing documentation, while imperfect, provides enough of this to be useful. They have also learned where to look and how to fill in gaps by reading source code and engaging with the community.

The resources that matter most

Across all experience levels, two resources dominate: the official SDK documentation and the IntelliJ Platform source code. 69% of developers use the SDK documentation frequently (often or very often), and 74% use the Platform source code frequently. These are the foundation of plugin development, regardless of experience level.

Open-source plugin code is also important, with 50% of developers using it frequently. This makes sense. Seeing how others have solved similar problems is one of the most effective ways to learn. It provides concrete examples and demonstrates best practices in context.

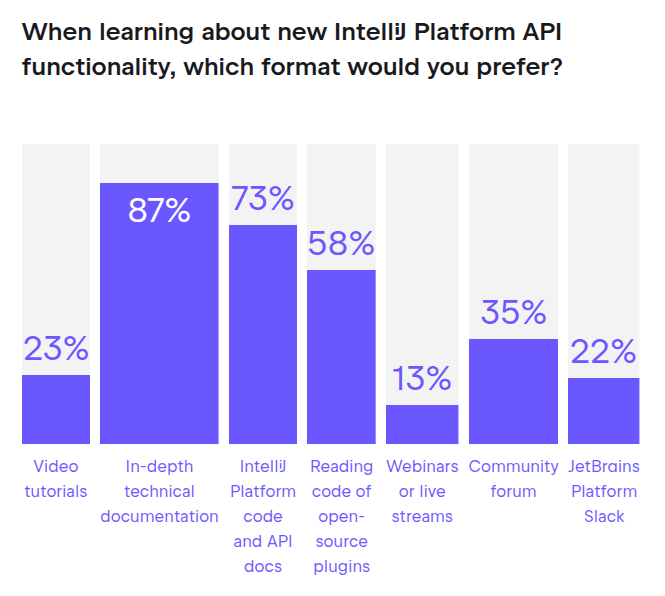

When we asked about preferred learning formats, the results were clear. 87% of developers prefer in-depth technical documentation, compared to just 23% who prefer video tutorials. This is a nearly 4-to-1 preference. Reading Platform code and API documentation is preferred by 73%, and reading open-source plugin code is preferred by 58%.

Video tutorials, despite their popularity in other domains, are rarely used for IntelliJ Platform plugin development. 58% of developers never use YouTube as a resource, and only 6% use it frequently. This is not necessarily a criticism of video content. It may simply reflect the nature of the work. Plugin development requires deep technical understanding, precise implementation, and frequent reference to documentation. Written content is easier to search, skim, and revisit.

Some resources have surprisingly low adoption. The IntelliJ Platform Explorer, a tool designed to help developers discover APIs and understand Platform structure, is used frequently by only 15% of developers. 59% use it rarely or never. We do not have data on why adoption is low. It could be a discoverability issue, a usefulness issue, or simply that developers have found other workflows that meet their needs.

Community support channels (Slack, forums, Stack Overflow) have moderate but not dominant usage. Between 17% and 21% of developers use these channels frequently. This suggests that while community support is valuable, it is not the primary way most developers learn or solve problems. The documentation and source code remain central.

What this means for the community

The diversity of needs within the plugin developer community is not a problem to solve. It is a reality to understand. No single documentation approach will satisfy everyone, because the audience is fundamentally diverse. New developers need onboarding and structure. Experienced developers need technical depth and precision.

This diversity explains why feedback about documentation can seem contradictory. Some developers say there is too much detail and not enough high-level guidance. Others say there is not enough detail and too many gaps. Both groups are right, from their perspective. They are simply at different points in their journey.

For new developers, the key insight is this: the struggle is normal. 78% of new developers find navigating the codebase challenging. 46% rate the overall difficulty as “4” or “5” out of 5. You are not alone, and it does get easier. Use the resources designed for beginners (introductory guides, Stack Overflow, YouTube). Do not expect to understand everything immediately. Focus on building one feature at a time, and let your understanding grow incrementally.

For experienced developers, the key insight is different: your desire for deeper technical detail and better API comments is valid. 55% of experienced developers prioritize technical detail, and 41% want better API documentation comments. These are not unreasonable requests. They reflect a sophisticated understanding of the Platform and a need for precision that introductory materials cannot provide.

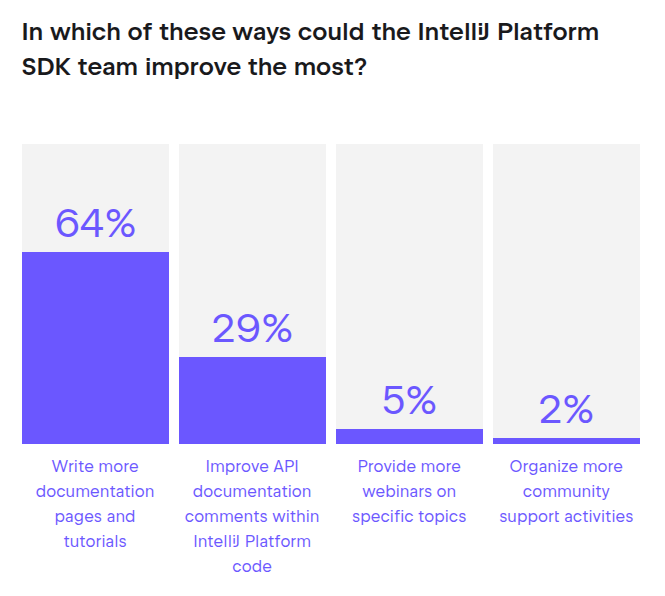

For the JetBrains Platform team, the challenge is serving both audiences simultaneously. When 64% of developers say “more documentation pages and tutorials” is the top improvement priority, they are not all asking for the same thing.

The breakdown reveals the specifics. 45% want technical detail on complex topics, 37% want non-trivial code examples, and 18% want introductory guides. Balancing these needs requires careful prioritization and clear segmentation of content.

One encouraging finding is that many developers appreciate the work already being done, as evidenced in these respondents’ comments:

“Honestly, I have no complaints. This team has been a wonderful and supportive resource.”

“You are doing a great job. It’s much easier to develop plugins for IntelliJ than VS Code.”

“I feel like the team is doing a great job and has been making some great progress.”

This feedback doesn’t erase the challenges developers face, but it provides important context. The IntelliJ Platform team is operating in a complex space with diverse needs, and many developers recognize this. Improvement is possible, and it is happening, but it will always involve trade-offs.

Where you start depends on where you are

If you are new to plugin development and feeling overwhelmed, you’re not alone. 78% of new developers find navigating the codebase challenging. The terminology is unfamiliar, the structure is complex, and the learning curve is steep. This is normal. Start with the introductory guides, use Stack Overflow and other beginner-friendly resources, and give yourself time to build understanding incrementally.

If you are experienced and frustrated that documentation does not go deep enough, that is also normal. 55% of experienced developers want more technical detail on complex topics, and 41% want better API documentation comments. You have moved beyond the basics, and your needs have changed. The source code is your friend. Read it often, and consider engaging with the community more actively. Understanding where you are on this journey helps you find the right resources and set realistic expectations. Where you start depends on where you are.

The diversity of the plugin developer community is one of its strengths. New developers bring fresh perspectives and energy. Experienced developers bring deep expertise and institutional knowledge. Serving both groups well is the ongoing challenge and opportunity for the IntelliJ Platform team. This survey takes us one step closer to understanding and overcoming that challenge.

The insights from this survey are already shaping how we approach documentation and community support. We have shared these findings with the IntelliJ Platform leadership team, and the response has been encouraging. The challenges you have identified, from navigating the codebase to understanding complex APIs, are now informing our priorities and planning. We are committed to addressing these pain points systematically, and we are building processes to ensure that community feedback continues to guide our work. The path forward is clear, and we are looking forward to the improvements ahead.

Limitations and caveats

This survey provides valuable insights, but it also has limitations that are important to acknowledge. First, it captures a snapshot in time. We surveyed developers in March 2025, and their responses reflect the state of the Platform and documentation at that moment. Without trend data, we cannot yet say whether things are getting better or worse over time.

Second, we do not know why some resources have low adoption. The IntelliJ Platform Explorer is used frequently by only 15% of developers, but we do not know if this is because developers are unaware of it, because they tried it and found it unhelpful, or because they have other workflows that meet their needs. Low usage does not necessarily mean low value.

Finally, response bias is likely. Developers with strong opinions, whether positive or negative, are more likely to respond to surveys. Developers who are struggling may be especially motivated to provide feedback. The 201 responses we received may not fully represent the broader plugin developer population.
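Separately from response bias, a sample of 201 carries ordinary sampling uncertainty. The sketch below is a rough illustration rather than part of the survey analysis: it uses the standard normal-approximation margin of error for a proportion at 95% confidence and assumes simple random sampling, which a voluntary survey does not guarantee. The subgroup size of 52 is an assumption derived from 26% of 201 and may not match the actual number of new developers who answered each question.

```java
import java.util.Locale;

/** Normal-approximation margin of error for a survey proportion (illustrative only). */
final class MarginOfError {

    /** z-score for a two-sided 95% confidence level. */
    static final double Z_95 = 1.96;

    /** Returns the 95% margin of error for a proportion in [0, 1] and a positive sample size. */
    static double marginOfError(double proportion, int sampleSize) {
        if (sampleSize <= 0 || proportion < 0.0 || proportion > 1.0) {
            throw new IllegalArgumentException("proportion must be in [0, 1] and sampleSize positive");
        }
        return Z_95 * Math.sqrt(proportion * (1.0 - proportion) / sampleSize);
    }

    public static void main(String[] args) {
        // 69% of all respondents use the SDK documentation frequently (n = 201).
        double overall = marginOfError(0.69, 201);
        // 78% of new developers find codebase navigation challenging (n = 52 is assumed).
        double subgroup = marginOfError(0.78, 52);
        System.out.printf(Locale.ROOT, "All respondents: +/- %.1f points%n", overall * 100);
        System.out.printf(Locale.ROOT, "New developers:  +/- %.1f points%n", subgroup * 100);
    }
}
```

Under these assumptions, a figure based on all respondents carries a margin of roughly 6 percentage points, and a figure based on the new-developer subgroup roughly 11, which is worth keeping in mind when comparing the two groups.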

Despite these limitations, the survey provides a solid foundation for understanding the plugin developer community. The patterns are clear, the differences between experience levels are substantial, and the feedback is actionable. Future surveys can build on this foundation by addressing the gaps we have identified.

Footnotes

[1] Chart percentages are rounded for presentation purposes, while the analysis used exact values. As a result, totals may not always equal 100%, and small discrepancies may occur between the charts and the text.