If you build products for a living, you have felt the shift over the last year. Teams can generate apps in hours using AI assistants, prompt-to-UI builders, and other AI software development tools. The surprise is not that prototypes are faster. It’s that the gap between a convincing demo and a reliable system is getting wider.

That gap is where most startups burn time. You can ship a front end fast, but you still have to answer investor and customer questions about auth, data integrity, background processing, auditability, and what happens when a launch spike hits. AppGen is absolutely real. The risk is believing prompts replace platforms.

The pattern we see in practice is simple. App generation compresses the “build” phase, but it does not eliminate the “operate” phase. If you want to develop software that survives production traffic, you need a sane operating model that prevents unmanaged sprawl while keeping iteration speed.

Low-Code Compressed UI And Workflows. AppGen Compresses Everything

Low-code’s big win was letting more people ship internal apps and workflows without waiting on a full engineering cycle. It reduced hand-coding for common UI patterns, CRUD screens, and automations. It also quietly created a new job for engineering leaders: deciding what was safe to build outside the main product codebase, and how to keep it governable.

AppGen takes that same direction and turns the dial up. Instead of assembling prebuilt components, you can often generate a working application skeleton, adapt it through iteration, and even get drafts of tests and documentation. That changes the day-to-day of product teams because the bottleneck moves.

When creation is cheap, coordination becomes expensive. You spend less time writing the first version and more time answering questions like:

- Where does user identity live, and what is the source of truth?

- Who owns data access rules when five generated apps all touch the same dataset?

- How do you prevent “zombie deployments” that keep running, consuming resources, and exposing risk?

Those are not theoretical. They are the same failure modes we saw with shadow IT, RPA sprawl, and untracked API integrations. The tools changed. The operational problem did not.
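To make the "zombie deployment" question concrete, here is a minimal sketch of an inventory check that flags deployments with no owner or no recent traffic. The `Deployment` record, its field names, and the 30-day idle threshold are all hypothetical. In practice the inventory would come from whatever hosting or platform dashboard you already use.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Deployment:
    # Hypothetical inventory record; field names are illustrative only.
    name: str
    owner: Optional[str]
    last_request_at: datetime


def find_zombies(deployments, now, max_idle=timedelta(days=30)):
    """Return names of deployments that have no owner or have been idle too long."""
    zombies = []
    for d in deployments:
        idle = now - d.last_request_at
        if d.owner is None or idle > max_idle:
            zombies.append(d.name)
    return zombies


# Made-up example data.
now = datetime(2025, 1, 31)
inventory = [
    Deployment("billing-admin", "payments-team", datetime(2025, 1, 30)),
    Deployment("promo-landing", None, datetime(2025, 1, 29)),
    Deployment("old-survey", "growth-team", datetime(2024, 10, 1)),
]
print(find_zombies(inventory, now))
```

The check is only as good as the inventory behind it, which is the real point: if nobody can produce the list, you cannot find the zombies.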

How AppGen Changes The Way You Develop Software

AppGen is best understood as an acceleration layer over application development. It can draft a working app, propose database tables or collections, scaffold endpoints, and create workflow logic from patterns. That makes it a powerful AI development platform capability, even when the tool is packaged as a “prompt experience.”

The key detail is what AppGen is actually optimizing. It is optimizing initial assembly and iteration. That is why it feels magical on day one.

Production success is optimized by different forces. Reliability under load, least-privilege access, predictable cost curves, safe deployments, and observability are not “first draft” problems. They show up once you have real users, real data, and real consequences.

A practical way to frame AppGen is:

- AppGen helps you get to a useful slice of product faster.

- Engineering judgment and platform choices determine whether that slice can be shipped, secured, and operated.

If you are a startup CTO or technical co-founder, this is the moment to set guardrails. Not to slow people down, but to keep the speed from turning into rework.

Vibe-Coding Is Fast. Unmanaged Sprawl Is Faster

Tools that generate code locally or in a lightweight hosted environment are great for momentum. They are also where teams accidentally recreate the problems AppGen claims to solve.

The common failure pattern looks like this. A generated app ships with a pile of credentials, unclear permission boundaries, and a backend that is “good enough” until it is not. Then the team starts bolting on essentials one by one. Auth this week. File storage next week. Rate limits after the first scrape. Background jobs after the first time a webhook retries for hours.

Each bolt-on is reasonable in isolation. Collectively, it turns into operational debt.

Two external references are worth keeping in mind as you evaluate the risk:

First, the OWASP Top 10 is a blunt reminder that many production incidents are not exotic. They are access control mistakes, injection issues, insecure design, and security misconfiguration. Generated code can include these issues just as easily as hand-written code, especially when you iterate quickly.

Second, shadow IT is not just an enterprise buzzword. The UK NCSC guidance on shadow IT describes the core problem plainly. Untracked services create blind spots in asset management and security, which becomes painful when you need incident response or compliance answers.

AppGen does not automatically fix these. It can actually amplify them if you treat every generated artifact as shippable production.

The Platform Move: Let AppGen Create. Let A Backend Platform Operate

The teams that keep their speed without drowning in sprawl usually separate two concerns.

They use AppGen and other application development tools to generate UIs, flows, and even bits of server logic quickly. Then they standardize the backend runtime on a platform that can handle the boring but critical parts: identity, data access, file storage, background work, realtime, push notifications, environments, and monitoring.

This is where “backend app development” becomes less about writing endpoints and more about choosing a stable operating surface area.

If you want a concrete shortcut, we built SashiDo – Backend for Modern Builders for exactly this split. You generate and iterate where speed matters. Then you connect to a managed backend that gives you a MongoDB database with CRUD APIs, authentication, storage, realtime, jobs, and functions without standing up DevOps.

That does not mean you stop coding. It means the code you do write is aimed at product differentiation, not rebuilding commodity plumbing.

Where AppGen Is Strong Today (And Where It Still Breaks)

App generation is strongest when the problem is pattern-based.

It excels at producing a first version of an admin panel, a CRUD workflow, a simple onboarding funnel, or an internal tool that needs to exist by Friday. It also helps engineers move faster when the goal is to explore multiple approaches quickly.

It breaks when you need deep context and accountability. “Context” here is not just business logic. It includes your organization’s constraints, your data classification, regulatory obligations, and your acceptable risk profile.

A useful test is to ask what happens after the app is “done.”

If the answer includes any of these, you are in platform territory:

- You need fine-grained access control with predictable defaults.

- You need to store files safely and serve them globally.

- You need scheduled or recurring jobs that do not silently fail.

- You need realtime sync where clients share state.

- You need push notifications at scale.

- You need cost predictability as usage grows.

That is also why “prompts replace platforms” is the wrong mental model. Prompts can assemble. Platforms make the result operable.

The Production Checklist Most Teams Discover Too Late

When teams move from prototype to product, the missing pieces tend to cluster. You can use this as a readiness checklist before you cross a few hundred active users, or before you sign a contract that implies uptime expectations.

Identity And Access Control

You want one consistent identity system, a clear token story, and predictable rules for who can read and write what. If you are bolting auth on after the fact, you usually end up with inconsistent permission logic across endpoints.
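One way to picture "predictable rules" is a single deny-by-default check that every endpoint calls, instead of per-endpoint permission logic. The sketch below is illustrative only. The `AccessRule` shape and the `user:` / `role:` naming are assumptions for the example, not the API of any particular SDK.

```python
from dataclasses import dataclass, field


@dataclass
class AccessRule:
    # Hypothetical rule shape: principals (user IDs or role names) allowed per action.
    read: set = field(default_factory=set)
    write: set = field(default_factory=set)


def is_allowed(rule, action, user_id, roles):
    """Deny by default: allow only if the user or one of their roles is listed."""
    allowed = {"read": rule.read, "write": rule.write}.get(action)
    if allowed is None:
        return False  # unknown action, so deny
    principals = {user_id} | set(roles)
    return bool(allowed & principals)


rule = AccessRule(read={"role:support", "user:42"}, write={"user:42"})
print(is_allowed(rule, "read", "user:7", ["role:support"]))   # support can read
print(is_allowed(rule, "write", "user:7", ["role:support"]))  # but cannot write
print(is_allowed(rule, "delete", "user:42", []))              # unknown action is denied
```

The design choice worth copying is the default, not the data structure: anything not explicitly granted is refused, including actions nobody thought of yet.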

In our world, every app includes a complete user management system with social login providers ready to enable. If you want to see how this maps to the Parse ecosystem, our developer docs are the fastest way to align SDK behavior with your access rules.

Data Model And CRUD Boundaries

AppGen will propose schemas quickly. The hard part is deciding what must be stable, what can evolve, and how you prevent “schema drift” across generated apps. MongoDB makes iteration easy, but you still want explicit ownership of collections and write paths. MongoDB’s own CRUD documentation is a good baseline for thinking about safe read and write patterns.
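A lightweight way to notice schema drift is to compare documents against the fields you have declared as stable. The following sketch works on plain dictionaries and does not use any database driver. The `EXPECTED_FIELDS` schema and the sample documents are hypothetical.

```python
# Hypothetical "stable" schema for one collection: field name -> expected type.
EXPECTED_FIELDS = {"email": str, "plan": str, "seats": int}


def find_drift(document, expected=EXPECTED_FIELDS):
    """Return human-readable drift findings for one document."""
    findings = []
    for name, expected_type in expected.items():
        if name not in document:
            findings.append(f"missing field: {name}")
        elif not isinstance(document[name], expected_type):
            findings.append(f"wrong type for {name}: {type(document[name]).__name__}")
    for name in document:
        # "_id" is ignored because the database adds it automatically.
        if name not in expected and name != "_id":
            findings.append(f"unexpected field: {name}")
    return findings


clean = {"_id": 1, "email": "a@example.com", "plan": "pro", "seats": 5}
drifted = {"email": "b@example.com", "seats": "5", "planName": "pro"}
print(find_drift(clean))
print(find_drift(drifted))
```

Running a check like this against a sample of documents per collection is usually enough to spot the moment when a second generated app starts writing its own variant of a field.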

Background Work And Scheduling

Retries, webhooks, recurring tasks, and long-running jobs are where production systems quietly fail. If you do not standardize job visibility and alerting, you find out about failures from customers.

We run scheduled and recurring jobs with MongoDB and Agenda, and you can manage them through our dashboard. Agenda’s official documentation is worth reading even if you never touch it directly, because it clarifies the failure modes you need to plan for.
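To illustrate the failure mode rather than any specific scheduler, here is a minimal retry sketch with exponential backoff that reports the final failure instead of swallowing it. It is not Agenda's API. The function name, the `on_failure` hook, and the delay values are assumptions chosen for the example.

```python
import time


def run_with_retries(job, max_attempts=4, base_delay=1.0,
                     sleep=time.sleep, on_failure=print):
    """Run job(); retry with exponential backoff; surface the final failure."""
    for attempt in range(1, max_attempts + 1):
        try:
            return job()
        except Exception as exc:
            if attempt == max_attempts:
                # Make the failure visible (alert, log, dashboard), then re-raise.
                on_failure(f"job failed after {attempt} attempts: {exc}")
                raise
            sleep(base_delay * 2 ** (attempt - 1))  # 1s, 2s, 4s, ...


# Hypothetical flaky webhook delivery: fails twice, then succeeds.
calls = {"count": 0}


def flaky_webhook():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("upstream timeout")
    return "delivered"


delays = []  # record the delays instead of really sleeping
result = run_with_retries(flaky_webhook, sleep=delays.append)
print(result, delays)
```

Bounding the attempts and routing the last failure to something a human will see is what separates a retry policy from a webhook that quietly retries for hours.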

Storage And Delivery

Most generated apps treat file uploads as an afterthought. Production systems cannot. You need permissioned uploads, predictable URLs, and fast delivery. We use an AWS S3 object store with built-in CDN. If you care about how that impacts performance, our write-up on MicroCDN for SashiDo Files explains the architecture choices.
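As a generic illustration of permissioned file access, the sketch below signs a file path together with an expiry time using an HMAC, so a link cannot be reused for another file or after it expires. This is not a description of how our storage or S3 implements it. The secret, the paths, and the timestamps are placeholders.

```python
import hashlib
import hmac

# Placeholder secret for the example; load real secrets from a secret store.
SECRET = b"demo-secret-change-me"


def sign_path(path, expires_at, secret=SECRET):
    """Return a hex signature binding a file path to an expiry timestamp."""
    message = f"{path}:{expires_at}".encode()
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def is_valid(path, expires_at, signature, now, secret=SECRET):
    """Accept only unexpired links whose signature matches path and expiry."""
    if now > expires_at:
        return False
    expected = sign_path(path, expires_at, secret)
    return hmac.compare_digest(expected, signature)


path = "/files/user-42/invoice.pdf"
expires_at = 1_700_000_600  # Unix timestamp, made up for the example
signature = sign_path(path, expires_at)

print(is_valid(path, expires_at, signature, now=1_700_000_000))
print(is_valid("/files/user-7/invoice.pdf", expires_at, signature, now=1_700_000_000))
print(is_valid(path, expires_at, signature, now=1_700_000_601))
```

Managed object stores typically offer an equivalent mechanism out of the box, which is usually preferable to maintaining your own.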

Realtime And Push

Realtime features and push notifications are often “version two” items in prototypes. In production, they are the retention engine. If you add them late, you also add late-stage risk.

We send 50M+ push notifications daily, and we have seen the scaling pitfalls. Our engineering notes on sending millions of push notifications are helpful if you want to understand the operational edge cases.

Uptime, Deployments, And Self-Healing

The moment you have external customers, downtime becomes a product feature. If your generated app runtime cannot do zero-downtime deploys or self-heal common failures, your team becomes the pager.

If you want a practical tour of what “high availability” means at the component level, read our guide on enabling high availability. It is written for builders who want fewer surprises, not for people shopping for buzzwords.

Why Governance Matters Without Returning To Central IT Gatekeeping

The usual objection is that governance slows teams down. That is only true when governance is implemented as approvals and paperwork.

Modern governance is closer to platform engineering. Provide a default backend surface. Make secure paths the easiest paths. Instrument everything. Then allow people to create quickly without turning every app into a bespoke operational snowflake.

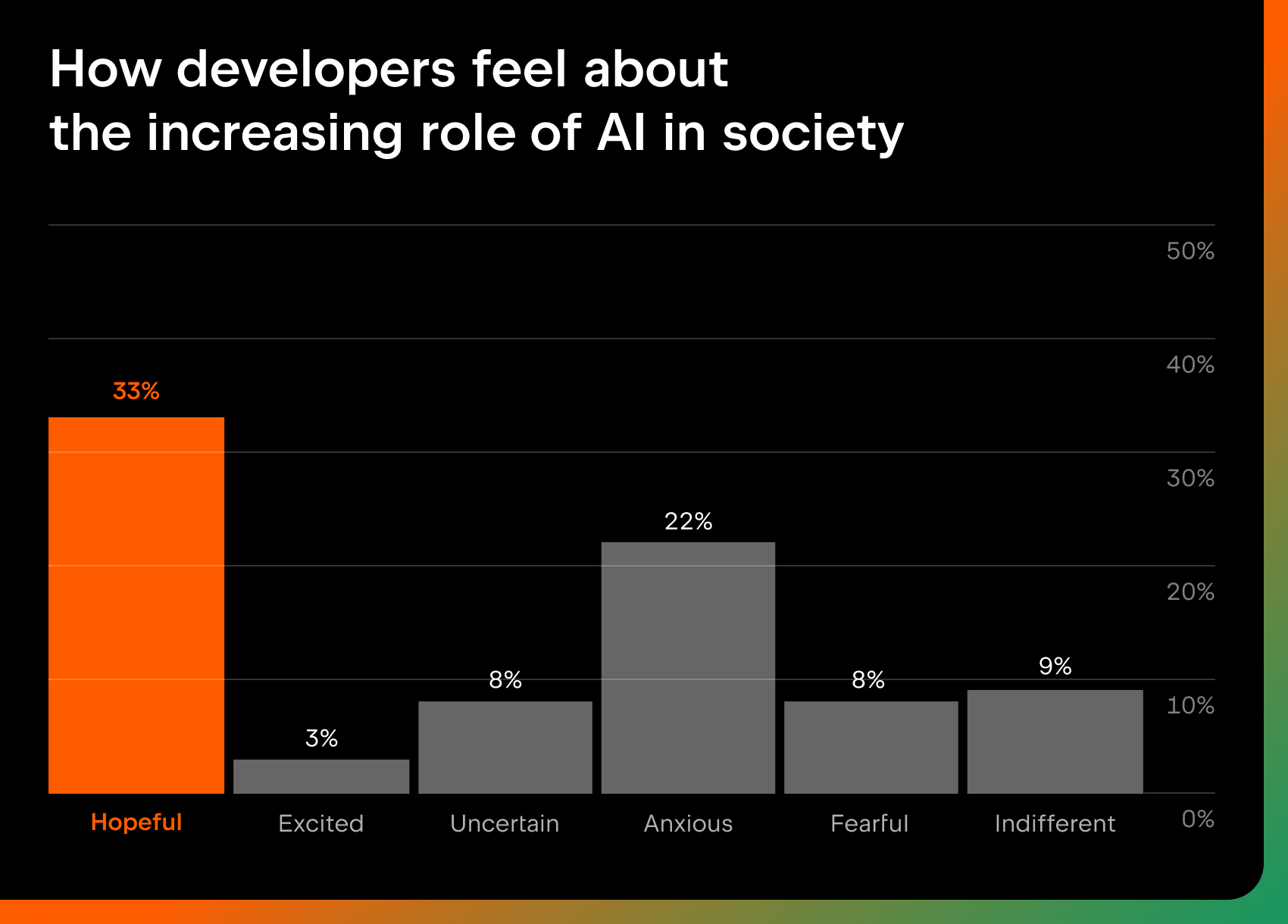

This is also where AI risk thinking is useful. The NIST AI Risk Management Framework is not a developer tutorial, but it reinforces a point that matters for AppGen. You still need humans accountable for risk decisions, even when AI accelerates implementation.

If you want your team to move fast, give them strong defaults. That is more effective than telling people to “be careful” with generated code.

What To Measure So Speed Does Not Become Fragility

If AppGen is your accelerator, your dashboard needs to keep up.

Most teams already track feature throughput. The metrics that drift during AppGen adoption are operational: time to restore service, change failure rate, deployment frequency, and lead time for changes. Those are not vanity metrics. They tell you whether your new speed is sustainable.
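If you do not track these yet, a first version can be computed from a plain deployment log. The sketch below covers three of the four metrics (lead time for changes needs commit timestamps, so it is left out). The `Deploy` record and the sample data are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Deploy:
    # Hypothetical log entry for one production deployment.
    day: int                                  # day index within the reporting window
    failed: bool                              # did this change cause an incident?
    minutes_to_restore: Optional[int] = None  # only set for failed deploys


def delivery_health(deploys, window_days):
    """Summarize deployment frequency, change failure rate, and time to restore."""
    failures = [d for d in deploys if d.failed]
    restore_times = [d.minutes_to_restore for d in failures
                     if d.minutes_to_restore is not None]
    return {
        "deploys_per_day": len(deploys) / window_days,
        "change_failure_rate": len(failures) / len(deploys) if deploys else 0.0,
        "mean_minutes_to_restore": (sum(restore_times) / len(restore_times)
                                    if restore_times else None),
    }


log = [
    Deploy(1, False),
    Deploy(2, True, 30),
    Deploy(3, False),
    Deploy(5, True, 90),
    Deploy(6, False),
]
print(delivery_health(log, window_days=10))
```

Comparing these numbers before and after you adopt AppGen tells you more than any single snapshot.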

The DORA 2024 Accelerate State of DevOps Report is useful here because it highlights how teams evolve delivery practices as tooling changes, including the emerging impact of AI. The takeaway is not to chase a benchmark. It is to notice when your delivery system starts producing incidents instead of features.

Cost And Lock-In: The Real Objection Behind Most Platform Debates

When a CTO says, “I’m worried about lock-in,” it often hides two separate concerns.

The first is portability. Can you move your data and logic if the business needs change? The second is cost. Will pricing surprise you the moment your product finds traction?

AppGen does not remove either concern. In fact, a pile of generated apps can be less portable if each one bakes in its own backend assumptions.

A managed backend can be a practical compromise if it is built on portable primitives, and if the cost model is transparent. We built SashiDo on Parse and MongoDB, which is a familiar stack for many teams that want flexibility.

On pricing, the only responsible way to discuss numbers is to point you to the canonical source because backend pricing changes over time. Our current plans, included quotas, and overage rates are listed on our pricing page. If you are modeling runway, treat that page as the source of truth and sanity-check your request volume, storage growth, and data transfer.
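For the runway sanity check itself, a few lines of arithmetic go a long way. The sketch below assumes a simple "flat base plus overage per million requests" model. Every number in it is a placeholder, not a price from our pricing page or from any other vendor, so substitute the real figures before drawing conclusions.

```python
def monthly_cost(requests, base_price, included_requests, overage_per_million):
    """Estimate a monthly bill: flat base plus overage on requests beyond the quota."""
    extra = max(0, requests - included_requests)
    return base_price + (extra / 1_000_000) * overage_per_million


# Placeholder inputs only. These are NOT real prices or quotas.
BASE_PRICE = 25.0
INCLUDED_REQUESTS = 1_000_000
OVERAGE_PER_MILLION = 5.0

for monthly_requests in (500_000, 2_000_000, 10_000_000):
    estimate = monthly_cost(monthly_requests, BASE_PRICE,
                            INCLUDED_REQUESTS, OVERAGE_PER_MILLION)
    print(monthly_requests, estimate)
```

Running the same function across pessimistic and optimistic traffic scenarios shows whether the cost curve stays predictable as usage grows. Storage growth and data transfer can be modeled the same way.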

If you are comparing platform directions, it also helps to compare the operational surface area, not just the database. For example, if you are evaluating a Postgres-first stack but you want a Parse-style backend with integrated auth, push, storage, and jobs, our comparison on SashiDo vs Supabase is a useful starting point.

Getting Started: From Generated Prototype To Production In A Week

The easiest mistake is waiting too long to introduce the “real” backend. Teams often try to keep the generated backend until they hit a scaling wall, then migrate under pressure.

A calmer approach is to introduce the production backend when any of these become true: you have more than a few hundred weekly active users, you start integrating payments or sensitive data, you need scheduled jobs, or you want to ship push notifications without building infrastructure.
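Those triggers are simple enough to write down as an explicit check, which helps when the decision would otherwise be made by gut feel under deadline pressure. In the sketch below, the function name, its parameters, and the default of 300 for "a few hundred" weekly active users are assumptions for illustration.

```python
def backend_triggers(weekly_active_users, handles_payments_or_sensitive_data,
                     needs_scheduled_jobs, wants_push, wau_threshold=300):
    """Return the list of reasons to introduce the production backend now."""
    reasons = []
    if weekly_active_users > wau_threshold:
        reasons.append("weekly active users above threshold")
    if handles_payments_or_sensitive_data:
        reasons.append("payments or sensitive data")
    if needs_scheduled_jobs:
        reasons.append("scheduled jobs")
    if wants_push:
        reasons.append("push notifications")
    return reasons


# Hypothetical prototype: small audience, but about to add billing and reminders.
print(backend_triggers(120, True, True, False))
```

An empty list means the generated backend is probably still fine. Any non-empty list is a prompt to plan the move while it is still calm.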

Here is a straightforward migration path that keeps momentum while reducing risk:

- Start by standardizing identity. Decide where users live and how tokens are issued, then align your generated app flows to that.

- Move your core domain data to one backend. Keep a single source of truth for collections, access control, and indexes.

- Add background jobs early. Even simple products need retries, cleanup tasks, and scheduled workflows.

- Attach storage and CDN. Treat files as first-class product data, not a sidecar.

- Decide on realtime and push boundaries. Make sure the backend is capable before you promise the experience.

- Add scale knobs before the spike. If you need to scale compute, plan it as a parameter, not a rewrite.

If you are doing this on SashiDo, our two-part getting started series is designed for exactly this journey. Begin with SashiDo’s Getting Started Guide and continue with Getting Started Guide Part 2 once you are ready to layer in richer features.

When you reach the point where performance or concurrency becomes the bottleneck, scale should not require a new architecture. That is why we introduced Engines. Our post on the Engine feature explains when you need it and how the cost is calculated.

Key Takeaways For Teams Adopting AppGen

- AppGen accelerates creation, but it does not eliminate security, compliance, or operability work.

- Unmanaged generation creates sprawl. The fix is a platform default, not more approvals.

- Standardize the backend early if you need auth, jobs, storage, realtime, or push. These are hard to bolt on late.

- Measure delivery health, not just feature throughput, so your new speed does not increase incidents.

Frequently Asked Questions

How Do You Develop Software?

Developing software in an AppGen world starts with tightening the loop between idea and validation, then hardening what works. Use AI to draft UI and flows, but standardize identity, data ownership, and deployment practices early. Treat security and operability as product requirements, not a later refactor.

What Is A Synonym For Developed Software?

In practice, teams use phrases like production-ready software, shipped application, or deployed system. The important nuance is that developed software implies more than written code. It includes the supporting backend services, configurations, monitoring, and the ability to operate safely under real users and real failure modes.

When Should I Move A Generated App To A Managed Backend?

Move when the app becomes business-critical, or when you cross thresholds that create operational risk. Typical triggers are a few hundred weekly active users, storing sensitive data, adding scheduled jobs, or shipping push notifications. Migrating before the spike is cheaper than migrating during an incident.

What Usually Breaks First In Prompt-Generated Apps?

Access control and background work tend to fail first because they are easy to gloss over in a prototype. You also see fragile environment handling, missing observability, and ad-hoc storage decisions. These issues compound because each new feature adds more integrations and more places for secrets and permissions to leak.

Conclusion: AppGen Raises The Floor. Platforms Still Decide The Ceiling

AppGen is not a fad. It is the next compression step in how teams develop software, and it will keep making the first version cheaper. The teams that win will not be the ones who generate the most apps. They will be the ones who can turn the right generated apps into secure, observable, and scalable products without pausing innovation.

If you are iterating fast and want a backend you can standardize on early, SashiDo – Backend for Modern Builders is designed for that reality. You can deploy a MongoDB-backed API, auth, storage with CDN, realtime, functions, jobs, and push notifications in minutes, then scale without building a DevOps team.

A helpful next step is to explore SashiDo’s platform at SashiDo – Backend for Modern Builders and map your generated app’s needs to a production-ready backend surface before you hit your next growth spike.

Sources And Further Reading

- OWASP Top 10 (2021)

- NIST AI Risk Management Framework 1.0

- DORA 2024 Accelerate State of DevOps Report

- UK NCSC Guidance: Shadow IT

- MongoDB Manual: CRUD Operations
