As of February 2026, Databao Agent ranks #1 in the Spider 2.0–DBT benchmark. This ranking measures how well agents can operate in a real dbt project, including reading the repository, understanding what’s broken, implementing the missing models, and validating everything by actually running code.

Our team ended up achieving the highest score in the benchmark, but we didn’t do it just because “we used a better model.” We got the biggest gains by treating the agent the same way you would mentor a junior colleague – providing better context, restricting chaos, and enforcing a reliable workflow.

This post is a practical account of what we changed and why it mattered. Read on to learn about the engineering decisions that made the difference, including how we reduced uncertainty, upgraded context, tightened up tool discipline, and rewrote a messy pile of prompts into a clear policy the agent could follow. The lessons we learned the hard way are that reliability beats cleverness, and prompts alone don’t buy you reliability – you have to design for it.

What is a dbt project?

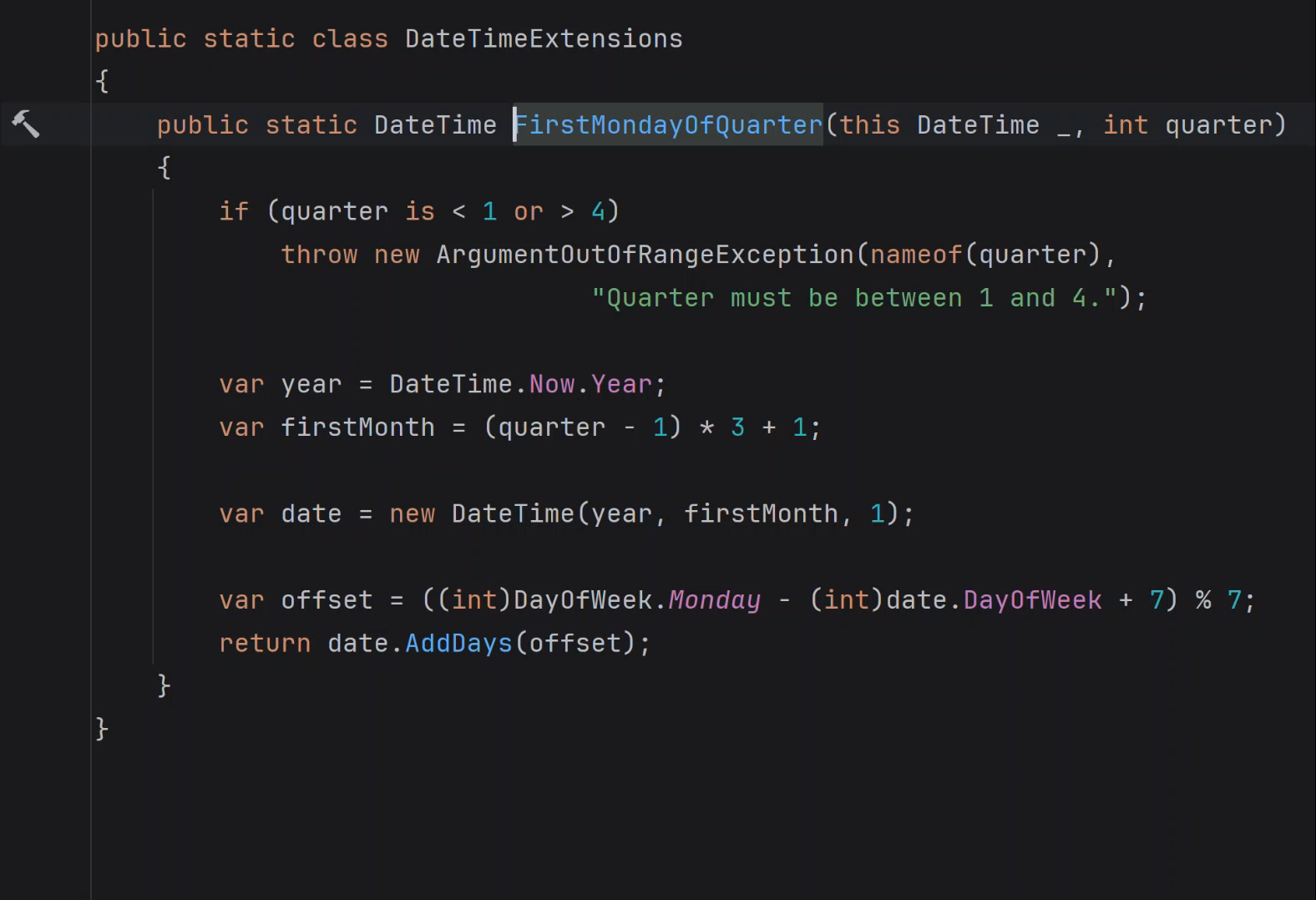

dbt (data build tool) treats analytics like software. Instead of ad-hoc SQL embedded in dashboards and notebooks, data transformations live in a version-controlled repository, are reviewed like code, and can reliably rebuild the same analytics layer.

The main unit of work in dbt is a model: an .sql file that defines a dataset (usually a table or a view) built from other datasets. Models depend on other models, and dbt builds them in dependency order, turning the project into a directed graph rather than a pile of disconnected queries.
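To make "dependency order" concrete, here is a minimal sketch using Python's standard `graphlib`. The model names are hypothetical, and this is not how dbt itself is implemented. It only illustrates the idea that each model is built after the models it selects from.

```python
from graphlib import TopologicalSorter

# Hypothetical models mapped to the models they select from (their parents).
model_parents = {
    "stg_orders": set(),
    "stg_customers": set(),
    "int_customer_orders": {"stg_orders", "stg_customers"},
    "mart_revenue": {"int_customer_orders"},
}


def build_order(parents: dict[str, set[str]]) -> list[str]:
    """Return one valid build order in which every model follows its parents."""
    return list(TopologicalSorter(parents).static_order())


order = build_order(model_parents)
print(order)
```

Several orders can be valid (the two staging models may come in either order), which is why the sketch only guarantees that parents precede children.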

A typical dbt repository contains the following parts:

- The models/ directory with SQL models (often organized into layers, such as staging → intermediate → marts).

- YAML files that document the project and add tests and constraints (sources, descriptions, uniqueness tests, freshness, etc.).

- A workflow built around commands like dbt run or dbt build. dbt run materializes models, dbt build also runs tests, and both tell you what failed, where, and why.

Working with dbt means navigating a codebase, respecting conventions and dependencies, iterating, and not declaring victory until the build is green. The Spider 2.0–DBT benchmark asks agents to do exactly that.

What Spider 2.0–DBT evaluates

The Spider 2.0–DBT benchmark turns a day-to-day dbt workflow into an evaluation. In the version we ran, the benchmark had 68 tasks. Each of them was a folder containing:

- An incomplete dbt project (models were missing or incorrect).

- A DuckDB database file with the available data.

The agent’s job was to behave like a careful data engineer:

- Read the repository to understand what the repo is trying to produce.

- Identify what’s missing or wrong.

- Implement the missing SQL models or fixes.

- Run dbt.

- Keep iterating until the project builds.

The evaluation compares the produced database with a “golden database” and checks whether the agent produced the right tables and columns.
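A structural check of this kind could be sketched as follows. This is not the benchmark's evaluation code. The function name and the schema representation (a mapping from table name to column names) are assumptions made for illustration, and a real comparison may also look at the data itself.

```python
def compare_schemas(produced: dict[str, list[str]], golden: dict[str, list[str]]) -> dict:
    """Report tables and columns that the golden schema expects but the produced one lacks."""
    report = {"missing_tables": [], "missing_columns": {}}
    for table, expected_columns in golden.items():
        if table not in produced:
            report["missing_tables"].append(table)
            continue
        missing = sorted(set(expected_columns) - set(produced[table]))
        if missing:
            report["missing_columns"][table] = missing
    return report


# Hypothetical schemas: the agent built "orders" without one expected column
# and did not build "customers" at all.
golden = {"orders": ["id", "total"], "customers": ["id"]}
produced = {"orders": ["id"]}
print(compare_schemas(produced, golden))
```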

Even though it may sound like simple SQL generation, which many LLMs do well, the hard part is operating in a repository environment. Some tasks are “data warehouse” sized: tables with 2,500+ columns, dozens of models in a single task, and thousands of lines of SQL across the project.

This scale forces the agent to behave like a real contributor. You can’t paste the entire repository and schema into a single prompt and expect consistent reasoning. The agent has to navigate the project, read selectively, build a mental map of it, and stay oriented after each run.

Where we started: Baselines and the real enemy

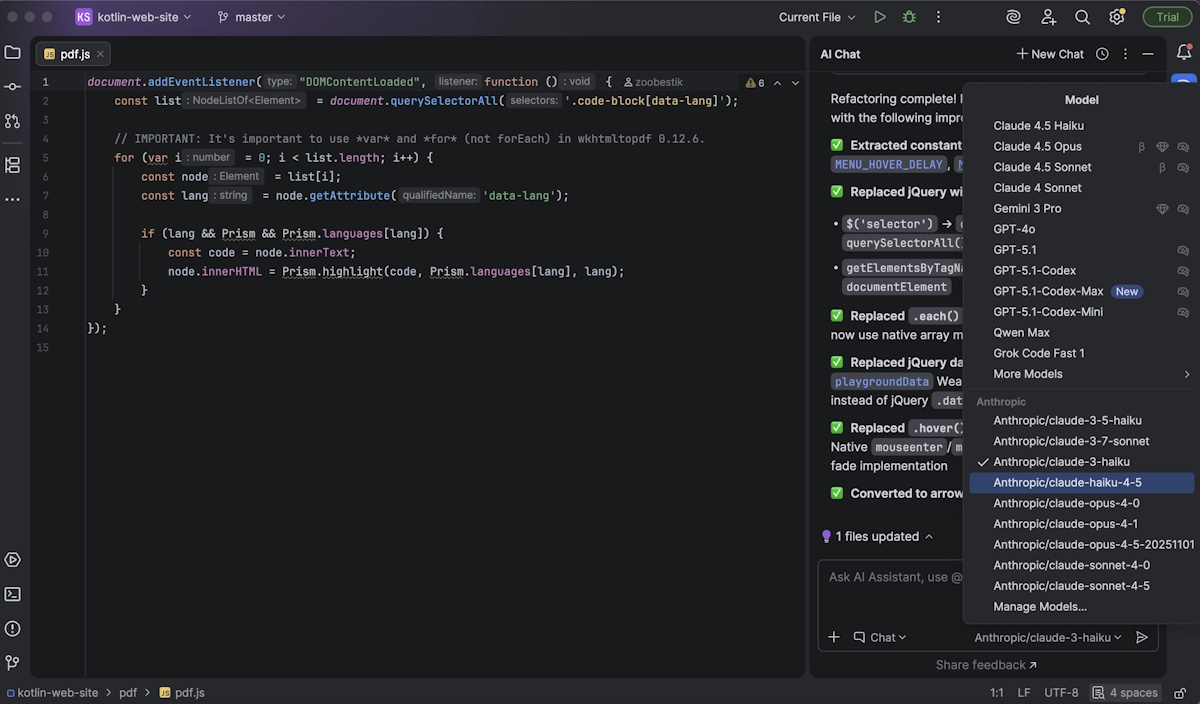

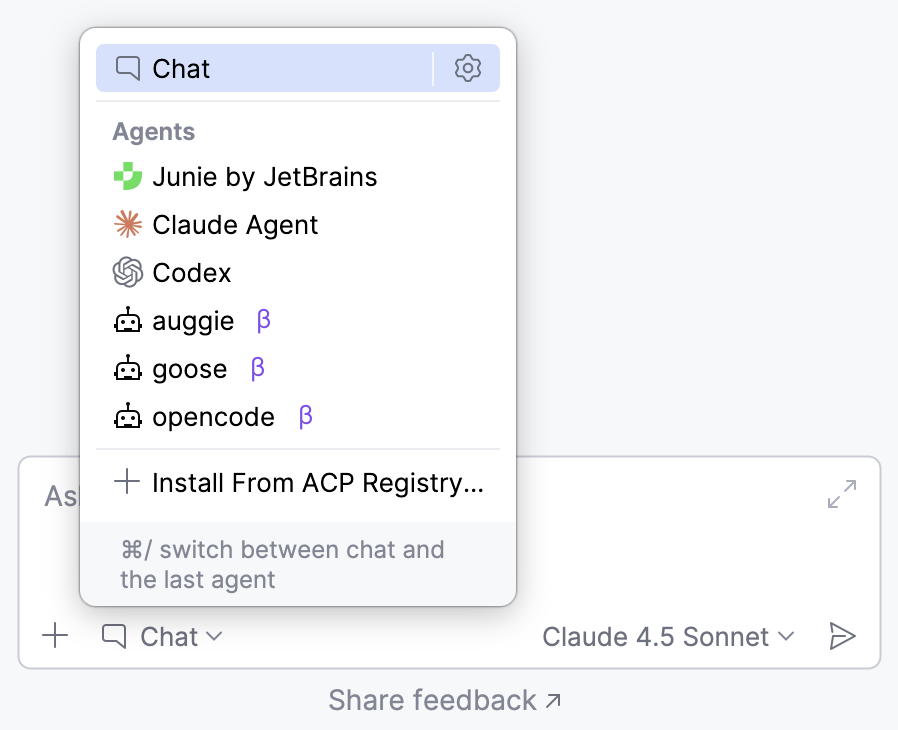

We didn’t start from scratch – our first agent was based on a popular LLM and could inspect a data project, run commands, and make edits using standard data tools. Surprisingly enough, its performance right out of the gate wasn’t too shabby – it could solve about a quarter of the tasks in our benchmark.

Encouraged, we built a more flexible version of the agent by giving it some more tools not available in default setups of other agents. This gave us more control and room to experiment. On paper, these were all improvements. But in practice, consistency was sorely lacking. The agent behaved a little differently each time we ran it. It would nail one task, then completely whiff on the next.

This inconsistency turned out to be the real enemy. When we looked closer, the issue wasn’t that the agent couldn’t write SQL or “do data stuff.” The problem was that it struggled to behave consistently and to understand what the actual task was – something a careful data or analytics engineer wouldn’t have any issues with.

Important kinds of uncertainty

As we dug deeper, we realized there were two main culprits behind the agent’s randomness.

The first was missing or unclear context. The agent often didn’t have enough visibility into how the project was structured, what tables existed, or what conventions were being followed. This uncertainty is fixable. If you provide better, targeted context, the agent stops guessing.

The second was natural ambiguity. Human language is fuzzy by nature. Even with good instructions, there can be multiple reasonable ways to solve a task, but only one of them matches the benchmark’s expected answer. You can’t fully eliminate this kind of uncertainty.

Understanding this distinction changed what we worked on. We reallocated our efforts, focusing less on fixing the model and more on fixing the environment around it.

Our strategy shift: From model tuning to workflow engineering

Early on, we gave the agent lots of freedom and lots of tools. That felt powerful, but failed in predictable ways: the agent wandered around, tried random actions, undid its own work, and generally got lost.

So, we changed our mindset. Instead of asking, “What can this agent do?” we asked, “What would a human engineer actually do here?”

We focused on two things:

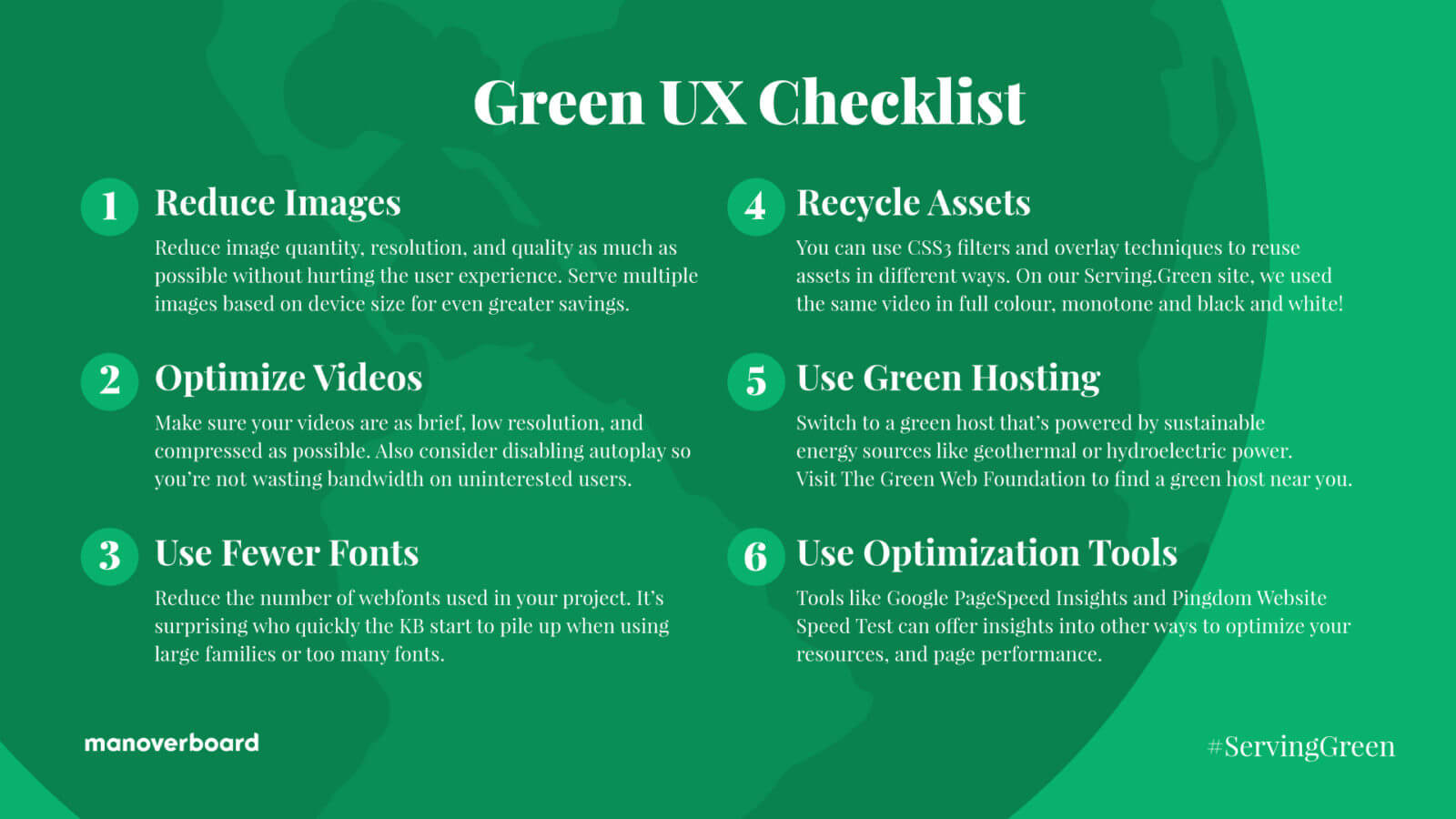

- Better context: Make the right information easy to access and hard to miss.

- A clear, disciplined workflow: Reduce chaos by forcing a specific order of operations.

Better context

We made sure the agent didn’t have to hunt for information.

We showed the important project files upfront, so the agent wouldn’t waste time opening the wrong things, and added a quick database overview at the beginning, so the agent knew which tables already existed. These changes fixed a surprising number of failures, especially on tasks where the correct action was to do nothing at all.

We also helped the agent connect the dots between requirements and data sources instead of guessing names. When it ran data builds, we summarized the results instead of dumping long, noisy logs. This kept the agent focused on what mattered next.
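One way to summarize a build instead of passing raw logs is sketched below. It assumes the input is a parsed run-results artifact shaped like the `target/run_results.json` file dbt writes, with a `results` list whose entries carry `unique_id`, `status`, and `message`. The function name and the failure cap are hypothetical, and the post does not say how Databao produces its summaries.

```python
from collections import Counter


def summarize_run_results(run_results: dict, max_failures: int = 5) -> str:
    """Condense a parsed run-results dict into a status line plus the first failures."""
    results = run_results.get("results", [])
    counts = Counter(r.get("status", "unknown") for r in results)
    lines = ["status counts: " + ", ".join(f"{s}={n}" for s, n in sorted(counts.items()))]

    failures = [r for r in results if r.get("status") in ("error", "fail")]
    for r in failures[:max_failures]:
        # Keep only the first line of each message; the rest is usually noise.
        message_lines = (r.get("message") or "").strip().splitlines()
        first_line = message_lines[0] if message_lines else "no message"
        lines.append(f"- {r.get('unique_id', '?')}: {first_line}")
    if len(failures) > max_failures:
        lines.append(f"... and {len(failures) - max_failures} more failures")
    return "\n".join(lines)


# Hypothetical artifact with one successful and one failing model.
sample = {
    "results": [
        {"unique_id": "model.demo.a", "status": "success", "message": "OK"},
        {"unique_id": "model.demo.b", "status": "error",
         "message": "Binder Error: column x not found\nLINE 3: ..."},
    ]
}
print(summarize_run_results(sample))
```

Capping the number of reported failures keeps the summary short even when one broken upstream model causes many downstream errors.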

The result? Fewer blind mistakes and fewer “I didn’t find the right thing” failures.

A clear, disciplined workflow

Context helped, but it didn’t solve the failures entirely, so we tightened up the rules.

In the first version, we gave the agent access to many tools. It could read, write, edit, and add any file in the dbt project, and it had unrestricted access to the terminal. In theory, this was supposed to make the agent powerful, but unfortunately, the agent used its power to break things.

We removed the general-purpose tools and limited access to a narrow set of specific commands, such as dbt run or dbt build. File edits were restricted so that the agent could mostly edit .sql files in specific directories. We also gave the agent a clear checklist: inspect first, make minimal changes, verify, and only then declare success.
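Restrictions like these can be enforced in the tool layer rather than in the prompt. The sketch below shows the idea under assumed values. The allowlist, the editable directory, and both function names are hypothetical, since the post does not list the exact policy.

```python
from pathlib import PurePosixPath

# Hypothetical policy values; the real allowlist is not given in the post.
ALLOWED_COMMANDS = {("dbt", "run"), ("dbt", "build")}
EDITABLE_DIRS = ("models",)


def is_command_allowed(argv: list[str]) -> bool:
    """Permit only commands whose first two tokens are on the allowlist."""
    return tuple(argv[:2]) in ALLOWED_COMMANDS


def is_edit_allowed(relative_path: str) -> bool:
    """Permit edits only to .sql files inside the editable directories."""
    path = PurePosixPath(relative_path)
    if path.is_absolute() or ".." in path.parts:
        return False  # reject paths that could escape the project root
    return (
        path.suffix == ".sql"
        and len(path.parts) > 1
        and path.parts[0] in EDITABLE_DIRS
    )


print(is_command_allowed(["dbt", "run", "--select", "my_model"]))
print(is_edit_allowed("models/marts/revenue.sql"))
print(is_edit_allowed("dbt_project.yml"))
```

Because the check runs before the tool executes, a disallowed action fails the same way on every run, regardless of how the model interprets its instructions.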

In several tasks, the agent didn’t inspect the database state carefully and could unintentionally overwrite existing tables with incorrect results. To prevent this, we added a few hard rules like never touching tables that already exist but aren’t part of the project, and never submitting an answer unless the final validation step succeeds.
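The two hard rules can be expressed as a small piece of state that the system, not the agent, controls. This is a sketch under assumptions. The class name, its methods, and the choice to invalidate validation after every write are hypothetical design decisions, not a description of Databao's implementation.

```python
class SubmissionGate:
    """Tracks protected tables and whether the latest validation succeeded."""

    def __init__(self, existing_tables: set[str], project_models: set[str]):
        # Tables already in the database that no project model is meant to produce.
        self.protected = set(existing_tables) - set(project_models)
        self.validated = False

    def record_write(self, table: str) -> None:
        """Refuse writes to protected tables; any accepted write requires re-validation."""
        if table in self.protected:
            raise PermissionError(f"table {table!r} already exists outside the project")
        self.validated = False

    def record_validation(self, succeeded: bool) -> None:
        """Store the outcome of the most recent validation run."""
        self.validated = succeeded

    def can_submit(self) -> bool:
        """Allow submission only when the most recent validation succeeded."""
        return self.validated


# Hypothetical task: "raw_orders" pre-exists and is not a project model.
gate = SubmissionGate({"raw_orders", "orders"}, {"orders"})
gate.record_write("orders")
gate.record_validation(True)
print(gate.can_submit())
```

Resetting the validation flag on every write closes the gap where an agent validates, makes one more change, and then submits unverified work.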

This dramatically reduced chaotic behavior, loops, and premature “I’m done!” moments.

What we learned: Stability over cleverness

It goes without saying that not every idea paid off. Adding more clever mechanisms (e.g., re-running the agent several times and choosing the “best” output, simulating human reviewers, or layering on extra tools) often gave us even less reliable results.

And then there was the “prompt onion” problem. Initially, whenever we wanted to improve performance or change the logic, we added another rule or clarification. But soon enough, rules started overlapping and conflicting, and the execution flow became murky.

In the end, stability beat cleverness. We took a step back and rewrote everything into a clean, human-readable policy. Redundancies and contradictions were removed, and the workflow became linear and predictable, leaving less room for interpretation – for both humans and the agent.

How this translates to real agents (Databao)

The biggest takeaway was about behavior, not SQL or models. Agents work best when:

- They can clearly see their environment.

- They follow a human-like workflow.

- Their freedom is intentionally limited.

In real systems, prompts alone aren’t enough. Safety and reliability need to be enforced at the tool and system levels, not just in the instructions.

What’s next: Reducing variance and catching errors automatically

Ranking #1 on the benchmark wasn’t the finish line for us. We’re already working on reducing variance, implementing smarter error detection, and splitting responsibilities across multiple specialized agents.

If data agents interest you, you can get involved. The open-source data agent code is already available on GitHub, and support for dbt will be added soon.

If you’d rather use agents than develop them, you can build Databao into your workflow or join us in building a proof of concept together. We’ll work with you to understand your use case, define a context-building process, and give the agent access to a selected group of business users. Together, we’ll evaluate the quality of the responses and overall satisfaction with the results.
