WordPress / WooCommerce Checkout Anti-Fraud — 9 Production-Tested Defenses (2026)

You wake up to a flurry of emails from your WooCommerce store. At first, it’s a rush—50 new orders overnight. Then you look closer. Every order is for a $1.99 digital download. The customer names are gibberish. The credit cards are all different, but the shipping addresses are identical and nonsensical. Half the payments failed. You’ve just been used for card testing.

This isn’t a sophisticated hack targeting a multinational corporation. It’s the bread-and-butter reality of running a small online store today. Fraudsters use small, independent sites like yours as a proving ground for stolen credit card numbers. For every successful fraudulent transaction, you lose the product and the revenue, and you get hit with a $15-$25 chargeback fee from your payment processor. For every failed attempt, your payment processor’s risk algorithms start to look at you sideways.

If you’re losing a few hundred to a few thousand dollars a month to this digital shoplifting, you’re not alone. The good news is you don’t need an enterprise-level budget to fight back. This guide outlines a layered defense strategy, from free tools to affordable plugins, that can stop the majority of common checkout fraud before it costs you money. We’ll cover the tools, the logic, and when it makes financial sense to implement each layer.

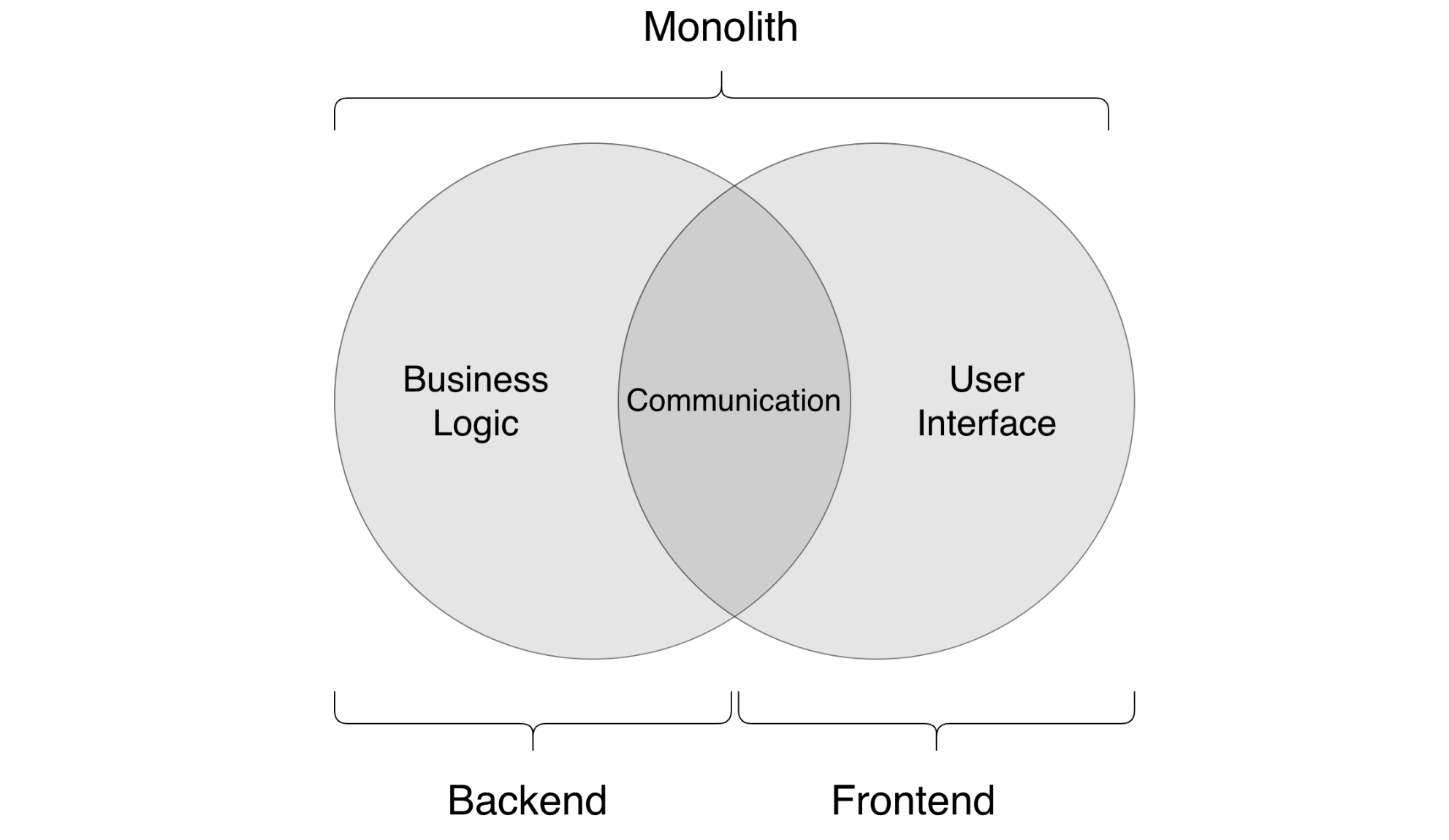

The Indie Store Fraud Landscape in 2026

For a small WooCommerce store, fraud isn’t one single problem. It’s a collection of different attacks, each with its own pattern. If you’re using Stripe, you already have Stripe Radar, which is a good baseline. But determined fraudsters know how to work around it. Understanding the three most common types of fraud is the first step to building a better defense.

- Card Testing (or “Carding”): This is the most common nuisance. Fraudsters buy lists of thousands of stolen credit card numbers on the dark web. They don’t know which ones are still active. So, they use bots to “test” the cards by making small purchases on hundreds of websites simultaneously. Your site is just one of many. They look for stores with low-priced items and weak security. The goal isn’t to get your product; it’s to find a valid card they can use for a much larger purchase elsewhere. For you, this means a flood of failed transactions, a handful of successful ones you’ll have to refund, and potential penalties from your payment gateway.

- Reseller Fraud: This is more targeted. A fraudster uses a stolen card to buy a high-demand physical product from your store (e.g., a limited-edition pair of sneakers, a specific electronic component). They have the item shipped to a “mule” or a freight forwarder. They then sell your product on a marketplace like eBay or StockX for cash. Weeks later, the legitimate cardholder discovers the charge, initiates a chargeback, and you’re out the product and the money.

- Refund Abuse (or “Friendly Fraud”): This one feels personal. A legitimate customer buys a product, receives it, and then falsely claims it never arrived, was defective, or that the charge was unauthorized. They file a chargeback to get their money back, effectively getting your product for free. This is especially common with digital goods where “delivery” is hard to prove, or with services where satisfaction is subjective.

Layer 1: Challenge the Bots at the Gate

Most low-level fraud, especially card testing, is automated. The first line of defense is to make it difficult for bots to even access your checkout page. A CAPTCHA (Completely Automated Public Turing test to tell Computers and Humans Apart) is the standard tool for this. But not all CAPTCHAs are created equal, and a bad user experience can cost you legitimate sales.

Here’s how the main contenders stack up for a WooCommerce checkout page in 2026.

| Tool | How It Works | User Experience | Cost | Honest Limitations |
|---|---|---|---|---|
| Cloudflare Turnstile | Analyzes browser telemetry and user behavior without a visual puzzle. It runs a quick, non-interactive check. | Excellent. It’s invisible to most legitimate users. A loading spinner might appear for a second on high-risk connections. | Free for most use cases. | It’s a bot challenge, not a fraud analysis tool. It won’t stop a determined human using a stolen card. It only tells you if the visitor is likely a human. |
| Google reCAPTCHA v3 | Runs in the background, analyzing user behavior across the site to generate a risk score (0.0 to 1.0). | Good. It’s also invisible. You decide what to do with the score (e.g., block orders with a score below 0.3). | Free up to a monthly assessment quota. Google cut the free tier from 1 million to 10,000 assessments/month in 2024, so check current pricing. | The “black box” nature of the scoring can be frustrating. It sometimes gives low scores to legitimate users on VPNs or with privacy-focused browsers. It also sends a lot of data to Google, which is a privacy concern for some. |
| hCaptcha | Often presents a visual puzzle (e.g., “click the boats”). It has a “passive” mode similar to Turnstile, but its main differentiator is the puzzle. | Poor to Fair. The visual puzzles are a known conversion killer. They introduce friction and frustration right at the point of purchase. | Free tier is available, but paid tiers offer more control and less complex puzzles. | The free version can present users with difficult or annoying puzzles, leading to checkout abandonment. It’s generally overkill for checkout protection unless you are under a sustained, heavy bot attack. |

Our recommendation: Start with Cloudflare Turnstile. It delivers most of the benefit of a bot challenge with almost zero impact on legitimate customer conversions. It’s a simple, free, and effective first layer.
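A bot challenge only helps if the server verifies the token the widget produces. The sketch below shows that server-side step against Cloudflare’s `siteverify` endpoint. It is written in Python for illustration (a WooCommerce plugin would do the same thing in PHP), and the function names and the injectable `post` parameter are assumptions made here so the logic can be tested without a network call.

```python
import json
import urllib.parse
import urllib.request
from typing import Callable

SITEVERIFY_URL = "https://challenges.cloudflare.com/turnstile/v0/siteverify"


def post_form(url: str, fields: dict) -> dict:
    """POST form-encoded fields and decode the JSON reply (real network call)."""
    data = urllib.parse.urlencode(fields).encode()
    request = urllib.request.Request(url, data=data)
    with urllib.request.urlopen(request, timeout=5) as response:
        return json.load(response)


def turnstile_passed(token: str, secret: str, remote_ip: str = "",
                     post: Callable[[str, dict], dict] = post_form) -> bool:
    """Return True only if Cloudflare confirms the token; fail closed on errors."""
    if not token:
        return False  # the checkout form was submitted without a challenge token
    fields = {"secret": secret, "response": token}
    if remote_ip:
        fields["remoteip"] = remote_ip
    try:
        return bool(post(SITEVERIFY_URL, fields).get("success"))
    except (OSError, ValueError):
        # Network failure or malformed JSON: treat as not verified.
        return False
```

Failing closed is a design choice for a store under attack. A store that would rather not lose sales during a Cloudflare outage could return `True` in the `except` branch instead.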

Layer 2: Basic Input Validation

Fraudsters are lazy. Their scripts often use nonsensical or disposable data. You can catch a surprising amount of fraud by simply checking if the information entered looks like it belongs to a real person.

Email Address Validation

Don’t just check if the email has an “@” symbol. Check for:

- Disposable Domains: Services like `mailinator.com` or `temp-mail.org` are a huge red flag. A simple check against a public list of disposable domains can block many low-effort fraud attempts. The disposable-email-domains list on GitHub is a good resource.

- Syntax and MX Records: A valid email address must have a real domain with mail exchange (MX) records. You can use a free API to verify this at checkout. This stops typos and gibberish like `asdf@asdf.asdf`.
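As a minimal sketch of the first two checks, the function below flags bad syntax and disposable domains. The three-domain set is a stand-in for the full community list, and the regular expression is deliberately loose. Both are assumptions for illustration, as is the function name.

```python
import re

# Tiny illustrative sample; a real deployment would load the full public list.
DISPOSABLE_DOMAINS = {"mailinator.com", "temp-mail.org", "10minutemail.com"}

# Loose pattern: one "@", at least one dot in the domain, alphabetic TLD of 2+ letters.
EMAIL_RE = re.compile(r"^[^@\s]+@([A-Za-z0-9-]+\.)+[A-Za-z]{2,}$")


def email_red_flags(email: str) -> list[str]:
    """Return a list of red-flag labels for a checkout email (empty means none found)."""
    address = email.strip().lower()
    if not EMAIL_RE.match(address):
        return ["bad_syntax"]
    domain = address.rsplit("@", 1)[1]
    if domain in DISPOSABLE_DOMAINS:
        return ["disposable_domain"]
    return []
```

Note that `asdf@asdf.asdf` is syntactically valid and passes this function. Catching it requires the MX lookup, which is left out here because it needs a DNS query or an external API.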

Phone Number Validation

A phone number can be a strong indicator of legitimacy. Check if the number provided is valid for the country listed in the billing address. A US address with a phone number that has a Nigerian country code is suspicious. Services like Twilio’s Lookup API (paid) or free libraries can help with formatting and validation.
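A rough version of the country check can be done without any paid service. The sketch below assumes the phone number is entered in international format (with a leading `+`) and uses a small, hand-picked table of calling codes. A production check would use a full phone-number library instead.

```python
import re
from typing import Optional

# Illustrative subset of ITU calling codes, keyed by ISO country code.
CALLING_CODES = {"US": "1", "CA": "1", "GB": "44", "DE": "49", "VN": "84", "NG": "234"}


def phone_matches_country(phone: str, billing_country: str) -> Optional[bool]:
    """True/False if the calling code can be compared, None if it cannot."""
    code = CALLING_CODES.get(billing_country.strip().upper())
    cleaned = re.sub(r"[^\d+]", "", phone)  # keep digits and the leading "+"
    if code is None or not cleaned.startswith("+"):
        return None  # unknown country, or a national-format number we cannot judge
    return cleaned[1:].startswith(code)
```

Returning `None` for national-format numbers matters: many legitimate customers type their number without a country code, and that should not count against them.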

Address Validation (AVS)

Your payment processor already does this. Address Verification System (AVS) checks if the numeric parts of the billing address (street number and ZIP code) match the information on file with the card issuer. Make sure you have AVS enabled in your payment gateway settings and that you are configured to decline transactions that return a hard “no match.”
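If your gateway settings don’t offer this directly, the decision can be made in code from the check results the processor returns. The sketch below assumes Stripe-style result strings (`pass`, `fail`, `unavailable`, `unchecked`) for the street line and postal code. Other gateways use letter codes, so treat the mapping as an illustration.

```python
from typing import Optional


def avs_action(line1_check: Optional[str], postal_check: Optional[str]) -> str:
    """Map two AVS results to 'decline', 'review', or 'allow'."""
    failures = sum(1 for check in (line1_check, postal_check) if check == "fail")
    if failures == 2:
        return "decline"  # hard no-match: neither street nor postal code matched
    if failures == 1:
        return "review"   # partial match: worth a second look, not an outright block
    return "allow"        # passed, or the issuer did not return a usable result
```

Treating `unavailable` as `allow` is deliberate in this sketch, since many non-US issuers don’t support AVS at all.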

Layer 3: BIN/IIN and Country Mismatch

This is a classic, highly effective check. The first 6-8 digits of a credit card are the Bank Identification Number (BIN) or Issuer Identification Number (IIN). This number tells you which bank issued the card and in what country.

The logic is simple: Does the card’s issuing country match the customer’s IP address country and/or the billing address country?

A fraudster in Vietnam using a stolen card from a bank in Ohio is a common scenario. A simple check reveals this mismatch:

- Card BIN: United States

- Customer IP Address: Vietnam

This is a major red flag. While there are legitimate reasons for this (e.g., a US citizen traveling abroad), it’s a powerful signal for high-risk orders. You can use a free online tool like BIN List to look up BINs manually, or integrate their API (or a similar service) for automated checks.

Most dedicated anti-fraud plugins for WooCommerce perform this check automatically.
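For stores building this themselves, the comparison itself is small once the three country codes are available. The sketch below assumes the card country has already been resolved from a BIN lookup and the IP country from a geolocation service. The function name and flag labels are made up for illustration.

```python
from typing import Optional


def country_mismatches(card_country: Optional[str],
                       ip_country: Optional[str],
                       billing_country: Optional[str]) -> list[str]:
    """Compare ISO country codes and return a label for each mismatch found."""
    def norm(code: Optional[str]) -> Optional[str]:
        return code.strip().upper() if code else None

    card, ip, billing = norm(card_country), norm(ip_country), norm(billing_country)
    flags = []
    # Only compare when both sides are known; a missing lookup is not a mismatch.
    if card and ip and card != ip:
        flags.append("card_vs_ip")
    if card and billing and card != billing:
        flags.append("card_vs_billing")
    return flags
```

For the Ohio card used from Vietnam, `country_mismatches("US", "VN", "US")` flags `card_vs_ip` only, which matches the example above.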

Layer 4: Smart Velocity Rules

Velocity rules limit how many times a certain action can be performed in a given timeframe. This is your primary weapon against card testing bots. Generic advice is to “use velocity rules,” but which ones actually work?

Here are some production-tested rules to implement either in a security plugin or with your developer:

- Block IP after 5 failed payment attempts in 1 hour. A real customer might mistype their CVC once or twice. A bot will try dozens of cards from the same IP address.

- Flag order for review if 1 IP address uses more than 3 different credit cards in 24 hours. This is a classic sign of card testing.

- Flag order for review if 1 email address is associated with more than 3 different credit cards in its lifetime. Similar to the above, but catches fraudsters who switch IPs.

- Flag order for review if there are more than 3 orders to the same shipping address with different billing addresses/cards in a week. This helps catch reseller fraud using mules.

The key is to set thresholds that stop bots without inconveniencing legitimate customers. These numbers are a good starting point; you can adjust them based on your store’s specific traffic patterns.
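The first rule (block an IP after 5 failed payments in 1 hour) can be sketched as a sliding-window counter. This version keeps state in memory, which is an assumption made for brevity. A real store would keep the timestamps in a database or cache so they survive restarts.

```python
import time
from collections import defaultdict, deque
from typing import Optional


class SlidingWindowCounter:
    """Counts events per key inside a rolling time window."""

    def __init__(self, limit: int, window_seconds: float):
        self.limit = limit
        self.window = window_seconds
        self._events = defaultdict(deque)  # key -> timestamps, oldest first

    def record(self, key: str, now: Optional[float] = None) -> bool:
        """Record one event; return True once the key has reached the limit."""
        now = time.time() if now is None else now
        events = self._events[key]
        events.append(now)
        # Drop timestamps that have aged out of the window.
        while events and events[0] <= now - self.window:
            events.popleft()
        return len(events) >= self.limit


# Rule from the list above: 5 failed payments from one IP within 1 hour.
failed_payments = SlidingWindowCounter(limit=5, window_seconds=3600)
```

Call `failed_payments.record(ip_address)` each time a payment fails and block the IP when it returns `True`. The card-count rules follow the same shape, except that they track a set of distinct card fingerprints per key instead of a raw event count.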

Layer 5: The 14-Day Hold for High-Risk Orders

Sometimes, an order isn’t obviously fraudulent, but it has multiple red flags. Maybe it’s a large order from a new customer, with a BIN/IP mismatch, shipping to a freight forwarder. Auto-blocking it might cost you a good sale. Allowing it might cost you a $1,000 chargeback.

The solution is an admin queue and a holding period.

Instead of processing the order immediately, you can programmatically place it in a special “On Hold for Review” status in WooCommerce. This does two things:

- It gives you, the store owner, time to manually review the order details. You can Google the address, check the customer’s email or social media, or even send a polite email asking for confirmation.

- It delays fulfillment. For physical goods, you don’t ship. For digital goods, you don’t grant access. A typical holding period is 14 days. This is often long enough for the legitimate cardholder to notice the fraud and report it, so the dispute or fraud warning reaches you before you’ve lost any product.

This manual step is a core part of a robust defense. It’s the human check that catches what the algorithms miss. This is a central feature in our own GuardLabs Anti-Fraud service, as we’ve found it to be one of the most effective ways to prevent high-value losses.
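The release logic behind such a queue is simple to express. The sketch below is a generic illustration of the hold rule described above, not how any particular plugin implements it. The function names and the `approved_by_admin` and `disputed` flags are assumptions.

```python
from datetime import datetime, timedelta, timezone

HOLD_DAYS = 14  # holding period suggested in the text


def release_at(placed_at: datetime, hold_days: int = HOLD_DAYS) -> datetime:
    """Earliest moment a held order may be fulfilled without manual approval."""
    return placed_at + timedelta(days=hold_days)


def can_fulfill(placed_at: datetime, now: datetime,
                approved_by_admin: bool = False, disputed: bool = False) -> bool:
    """A dispute always blocks; admin approval releases early; otherwise wait."""
    if disputed:
        return False
    if approved_by_admin:
        return True
    return now >= release_at(placed_at)


placed = datetime(2026, 3, 1, 9, 0, tzinfo=timezone.utc)
print(release_at(placed).isoformat())  # 2026-03-15T09:00:00+00:00
```

Checking `disputed` first means an order that attracts a chargeback during the hold is never shipped, even if someone approved it earlier.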

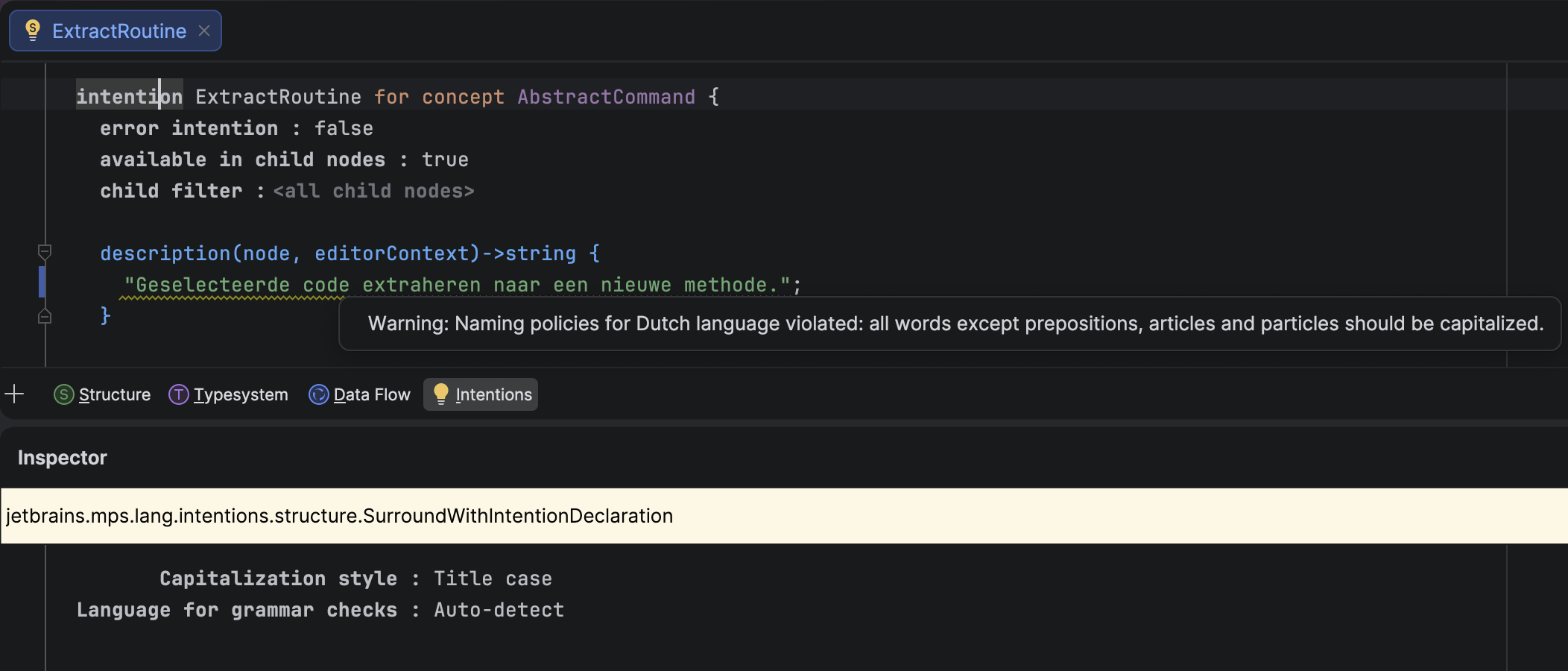

Layer 6: Getting More out of Stripe Radar

If you use Stripe, you have Radar. For many, it’s a “set it and forget it” tool. But its real value for an established store lies in custom rules. Go to your Stripe Dashboard -> Radar -> Rules to start.

You can essentially replicate many of the checks mentioned above directly within Stripe. This is powerful because Stripe has access to data from its entire network. Here are three custom rules you should add today:

- Block payments where the card’s issuing country doesn’t match the IP address country and the order total is over $100.

  Rule: `Block if :card_country: != :ip_country: AND :amount_in_usd: > 100`

  This is the BIN/IP mismatch check. We add a value threshold to avoid blocking small, legitimate purchases from travelers.

- Place payments in review if the shipping address is a known freight forwarder and it’s the customer’s first transaction.

  Rule: `Review if :is_freight_forwarder_shipping: AND :card_past_transfers_count: = 0`

  Stripe can identify many freight forwarders. This rule flags these orders for your review, which is crucial for preventing reseller fraud. Attribute names change over time, so confirm both against Stripe’s list of supported Radar attributes before saving the rule.

- Block payments from disposable email addresses.

  Radar’s attribute reference includes a built-in `:is_disposable_email:` signal, but a block list you maintain yourself gives you control over exactly which domains are covered. Go to Radar -> Lists and create a new list of “email domains to block.” Populate it with common disposable domains (`mailinator.com`, `10minutemail.com`, etc.). Then, create a rule:

  Rule: `Block if :email_domain: in @disposable_domains`

Stripe Radar is a solid tool, but it’s not a complete solution. It works best when combined with on-site checks (like a bot challenge) and a clear process for handling flagged orders.

The Decision Tree: Block, Review, or Allow?

With all these layers, you need a clear system for making decisions. A simple risk score can help. Assign points for risky attributes and then act based on the total score.

Here’s a sample scoring system:

- BIN country != IP country: +40 points

- Email is from a disposable domain: +30 points

- Shipping address is a known freight forwarder: +20 points

- IP address is a known proxy or VPN: +15 points

- Order value > $500 (or 3x your average): +10 points

- More than 3 failed payments from IP in last hour: +50 points

Then, create your decision tree:

- Score 70+: Auto-Block. The probability of fraud is too high. Block the transaction and, if possible, the IP address.

- Score 30-69: Send to Manual Review. Place the order on hold. Delay fulfillment. Investigate the details. This is where the 14-day hold is your best friend.

- Score 0-29: Auto-Allow. The order appears low-risk. Process it as normal.

A good WooCommerce anti-fraud plugin will do this scoring for you. If you’re building your own system, this logic is a solid foundation.
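The scoring table and thresholds above translate almost directly into code. This is a minimal sketch using the sample weights from this section. The `OrderSignals` fields are hypothetical names, and each one is assumed to have been computed by an earlier check.

```python
from dataclasses import dataclass


@dataclass
class OrderSignals:
    bin_ip_mismatch: bool = False
    disposable_email: bool = False
    freight_forwarder: bool = False
    proxy_or_vpn: bool = False
    high_value: bool = False            # over $500 or 3x the store average
    failed_payment_burst: bool = False  # more than 3 failures from the IP in an hour


# Points per signal, copied from the sample scoring system above.
WEIGHTS = {
    "bin_ip_mismatch": 40,
    "disposable_email": 30,
    "freight_forwarder": 20,
    "proxy_or_vpn": 15,
    "high_value": 10,
    "failed_payment_burst": 50,
}


def risk_score(signals: OrderSignals) -> int:
    """Sum the weights of every signal that fired."""
    return sum(points for name, points in WEIGHTS.items() if getattr(signals, name))


def decide(score: int) -> str:
    """Apply the decision tree: 70+ block, 30-69 review, below 30 allow."""
    if score >= 70:
        return "block"
    if score >= 30:
        return "review"
    return "allow"
```

With these weights, a BIN/IP mismatch plus a disposable email scores exactly 70 and is blocked, while a mismatch on its own scores 40 and goes to manual review.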

Cost vs. Benefit: When Does Each Layer Pay Off?

Implementing every layer might be overkill if you’re just starting out. Here’s a pragmatic guide to when each defense becomes worth the time or money, based on your Gross Merchandise Volume (GMV).

- Under $5,000/month GMV: Your fraud losses are likely low.

  - What to do: Enable Stripe Radar’s default settings. Add the custom rules mentioned above (free). Install Cloudflare Turnstile on your checkout (free). This is your basic, no-cost setup.

- $5,000 – $20,000/month GMV: You’re probably losing $100-$500/month to fraud and chargeback fees. It’s starting to hurt.

  - What to do: Add a dedicated anti-fraud plugin. This is where a service like the WooCommerce Anti-Fraud plugin or our own GuardLabs Anti-Fraud ($79/year) becomes a clear win. The cost is less than a few chargeback fees. These tools automate the BIN checks, velocity rules, and risk scoring.

- $20,000 – $100,000/month GMV: Fraud is now a significant cost center. A 1% fraud rate could mean up to $1,000 in monthly losses, not including lost inventory.

  - What to do: Your system needs to be robust. You need all the automated checks, plus the manual review queue for high-risk orders. This is the sweet spot for a comprehensive solution that combines automated blocking with a manual hold-and-review process. You might also consider a paid service like IPQualityScore for more advanced proxy/VPN detection if you see a lot of sophisticated attacks.

- Over $100,000/month GMV: At this scale, even a 0.5% fraud rate means at least $500 a month, or $6,000 a year, in losses.

  - What to do: You need everything discussed here, and you likely have enough transaction volume to justify the cost of more advanced tools and potentially a part-time staff member dedicated to reviewing flagged orders. Your Website Care plan should include proactive monitoring of these systems.
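The arithmetic behind these tiers can be sketched in a few lines. The $20 default fee is the midpoint of the $15-$25 range quoted earlier, and `expected_reduction` (the share of losses a tool is assumed to prevent) is a placeholder you should replace with your own estimate.

```python
def monthly_fraud_loss(gmv: float, fraud_rate: float,
                       chargebacks: int = 0, fee: float = 20.0) -> float:
    """Estimated monthly loss: fraudulent order value plus chargeback fees."""
    return gmv * fraud_rate + chargebacks * fee


def tool_pays_off(monthly_loss: float, annual_tool_cost: float,
                  expected_reduction: float = 0.5) -> bool:
    """True if the assumed yearly savings exceed the yearly cost of the tool."""
    return monthly_loss * 12 * expected_reduction > annual_tool_cost
```

For example, a store with $10,000/month GMV, a 1% fraud rate, and four chargebacks a month loses roughly $180 a month, so a $79/year tool pays for itself even if it prevents only half of that.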

Fighting checkout fraud isn’t about finding one magic bullet. It’s about building a series of layered, logical defenses that make your store a less attractive target than the one next door. By starting with free tools like Cloudflare Turnstile and Stripe Radar’s custom rules, and then adding more sophisticated checks as your store grows, you can significantly reduce your losses without frustrating legitimate customers or paying for enterprise software you don’t need.

If you’re tired of manually canceling bogus orders and want a system that implements most of these layers—from a non-annoying bot challenge to automated risk scoring and a manual review queue—out of the box, take a look at our service. The GuardLabs Anti-Fraud stack was built for small- to medium-sized WooCommerce stores facing exactly these problems, starting at $79/year.

Originally published at guardlabs.online.