“If your code gets exposed, how much damage can someone actually do?”

That’s the question I kept coming back to when the Claude Code discussions started surfacing across developer forums and security channels in early 2025. Reports indicated that portions of internal tooling, module structure, and system architecture associated with Anthropic’s Claude Code — an agentic coding assistant built on Claude — were exposed or reconstructable through a combination of leaked artefacts and reverse engineering.

And before the “it’s just a leak” crowd closes this tab: I want to make the case that this one is different. Not because of who it happened to. But because of what got exposed and why that matters for every developer building AI-driven products right now.

What the Claude Code Leak Actually Involved

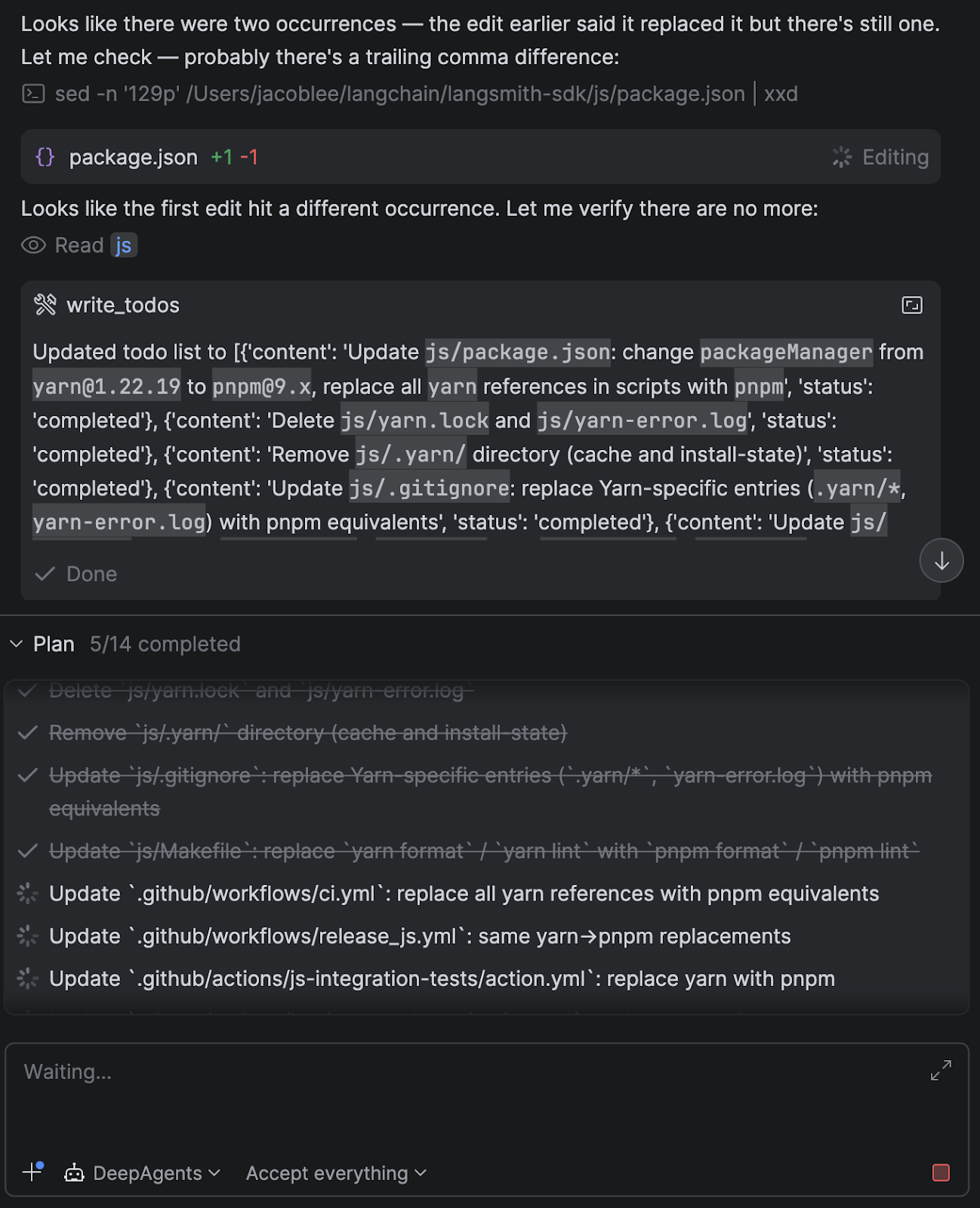

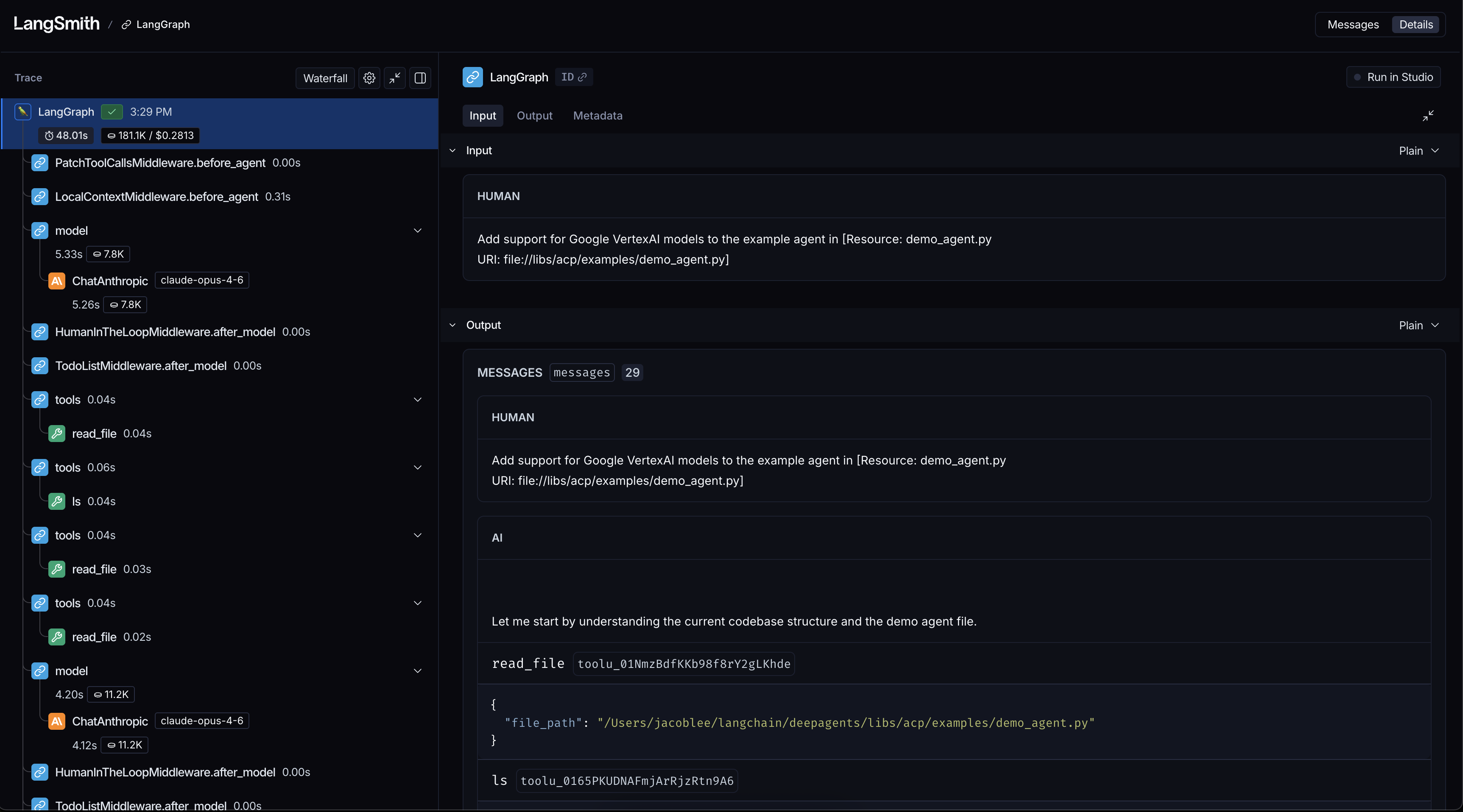

To be precise: this wasn’t a single catastrophic breach where source code was dumped publicly. What made this incident notable was the partial exposure of internal system architecture — things like file structure, module naming conventions, agent workflow patterns, and tool orchestration logic.

In traditional software, a leaked file structure is mildly embarrassing. In an AI system, it’s a blueprint.

Here’s why. When you expose:

- File structure → you reveal how the system is decomposed and what abstractions it uses

- Module naming → you signal what capabilities exist and how they’re scoped

- Agent workflow patterns → you expose the decision-making logic and tool-call sequences

- Safety layer positioning → you reveal where guardrails sit, which tells an attacker where they don’t

Understanding the system architecture of an AI agent doesn’t just tell you how it works. It tells you exactly how to manipulate it.

Why AI Codebases Are Uniquely Vulnerable

Traditional application security assumes a relatively stable attack surface. You protect your API, your auth layer, your database. You patch CVEs. You rotate secrets.

AI systems change that calculus fundamentally. The attack surface in an LLM-powered system includes things that don’t exist in conventional software:

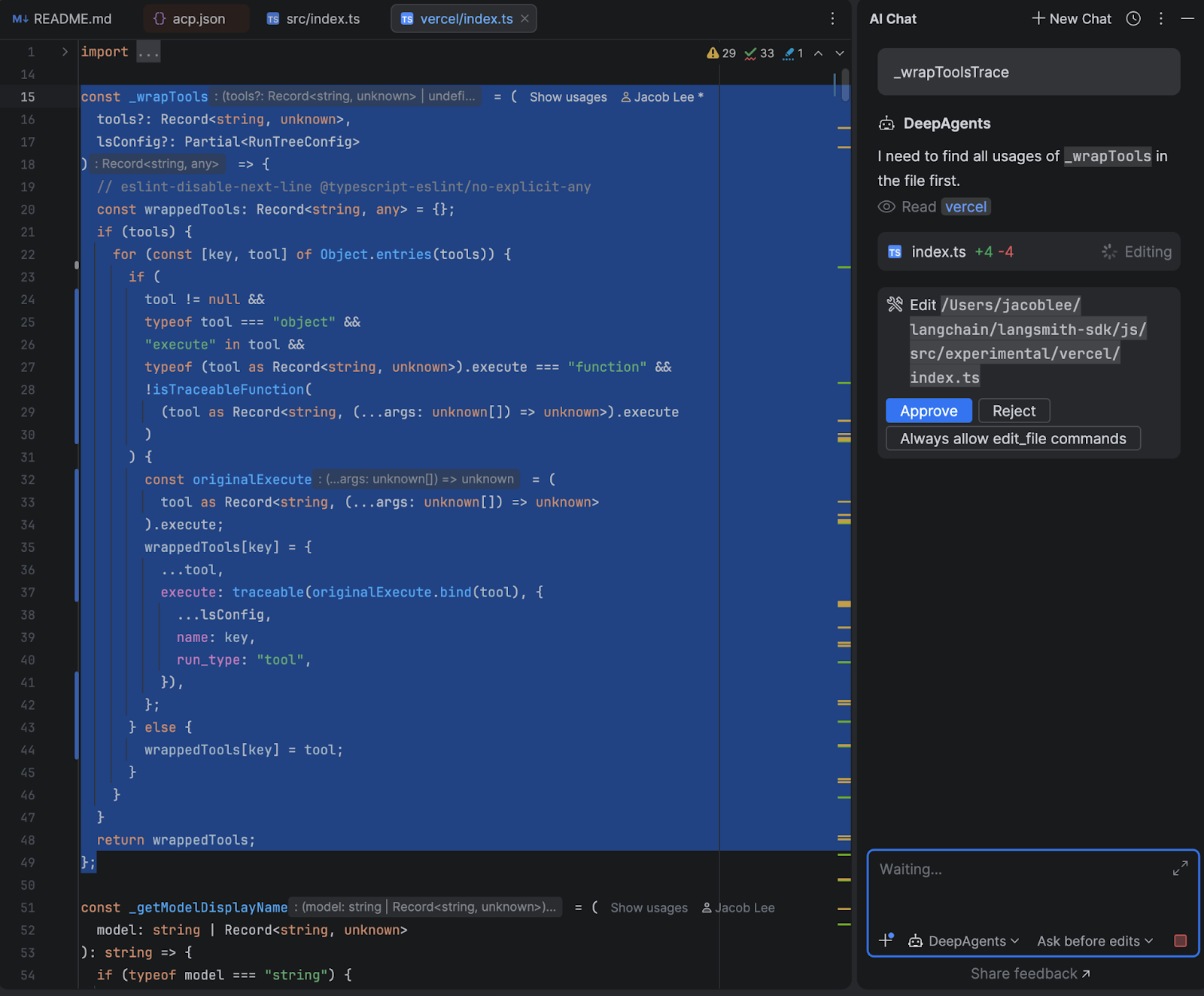

- Prompt Engineering as Infrastructure

In a standard app, business logic lives in code. In an AI system, a significant portion of business logic lives in prompts — system prompts, tool descriptions, chain-of-thought scaffolds. These are text, often stored as strings or markdown files. They’re not compiled. They’re not obfuscated. And they encode your product’s entire decision-making philosophy.

Expose a system prompt and you expose the rules of the game. An attacker can now craft inputs that navigate around your guardrails with surgical precision instead of brute force.

- Tool Orchestration Is a Dependency Graph

Modern AI agents don’t just generate text — they call tools. Search, code execution, file access, API calls. The orchestration logic that decides when to call which tool, and with what parameters, is often the most competitively sensitive part of the system.

Leaking that orchestration logic is the equivalent of leaking your microservices architecture and your internal API contracts simultaneously.

- Safety Layers Are Positional

In a well-designed AI system, safety measures are layered — input filtering, output validation, human-in-the-loop triggers, rate limiting. But these layers have positions in the pipeline. Once an attacker knows where a guardrail sits, they know what comes before it and what comes after it. They can craft inputs that appear clean at the filter point and only reveal their intent downstream.

This is why security-through-obscurity, while generally a bad strategy, is more damaging to abandon in AI systems than in traditional ones.

A Hypothetical Attack Scenario

Let’s make this concrete. Imagine you’ve built a customer-facing AI assistant for a SaaS product. Your system architecture includes:

- An input classifier that blocks obvious jailbreak attempts

- A system prompt that defines the assistant’s role and access permissions

- Tool calls that can query your internal database and send emails on behalf of users

Now imagine a researcher (or attacker) reverse-engineers enough of your architecture to know:

- Your input classifier runs before the system prompt is injected

- Your tool-call permissions are enforced by a description in the system prompt, not by a hard-coded permission layer

- Your email tool doesn’t validate the recipient domain

With that knowledge, they don’t need to brute-force anything. They craft a single, clean-looking input that passes your classifier, then uses indirect prompt injection to override your system prompt’s tool permission language, and triggers an email to an external domain.

That’s not a theoretical attack. Variants of it have been demonstrated in research settings against production AI systems. The Claude Code leak is notable because it suggests even well-resourced AI labs can have enough of their internals reconstructable to enable this kind of targeted exploitation.

The “Systems Still Under Development” Problem

Here’s the angle that worries me most as someone actively building an AI product.

When a mature, production-hardened system gets partially exposed, it’s bad — but the blast radius is somewhat contained. The security assumptions have been tested. The edge cases have been handled. The architecture is, at least in theory, stable.

When a system still under active development gets exposed, the attacker doesn’t just find bugs. They find intentions.

They find the module you haven’t wired up yet. The permission check that’s commented out during testing. The hardcoded API key in the dev config. The tool that’s been scaffolded but not yet rate-limited.

Early-stage AI systems — which describes most of what the developer community is building right now — are architecturally porous by design. Speed of iteration is the priority. Security hardening comes later. The Claude Code incident is a reminder that “later” has a way of arriving before you’re ready.

How to Actually Build for This

These aren’t abstract recommendations. Here’s what I’d implement on any AI system today:

Design for the Inevitable Breach

Assume your prompts, your tool descriptions, and your agent workflows will eventually be exposed. Design them such that exposure doesn’t immediately translate to exploitation. This means:

- No security by prompt alone. Permissions enforced only in a system prompt are not permissions — they’re suggestions. Enforce access control at the infrastructure layer.

- Validate tool inputs at the tool level. Don’t rely on the LLM to self-police what parameters it passes to your tools. Treat every tool call as an untrusted external input.

Reduce Blast Radius

Segment your agent’s capabilities. An agent that can read files and send emails and make external API calls is a single prompt injection away from a multi-vector breach. Apply least-privilege to tools the same way you’d apply it to IAM roles.

# Instead of one god-agent with all capabilities:

agent.tools = [read_files, send_email, call_api, query_db]

# Scope tools to the task:

research_agent.tools = [read_files, web_search]

comms_agent.tools = [send_email] # scoped to internal domains only

Treat Internal Architecture as Public

CI/CD configurations, agent workflow diagrams, prompt files — if they live in a repo, on a shared drive, or in a Notion doc accessible to more than three people, treat them as potentially public. Not because your team is untrustworthy, but because attack surfaces compound.

Red Team Your Prompts Before Shipping

Run adversarial prompt testing before any agent capability ships to production. This doesn’t require a dedicated security team — a single afternoon with a structured prompt injection checklist will surface more issues than you expect. Resources like OWASP’s LLM Top 10 are a solid starting point.

Secure the CI/CD Pipeline Specifically

AI systems often have unique CI/CD patterns — model fine-tuning pipelines, prompt version registries, embedding generation jobs. These are as sensitive as your application code and are frequently less scrutinised. Audit what has access to your prompt store and model configuration with the same rigour you’d apply to your production database credentials.

The Uncomfortable Truth About AI Security Maturity

The wider developer community — and I include myself here — is building AI systems at a pace that has significantly outrun our collective security intuition.

We’ve spent decades developing mental models for securing web applications. We know about SQL injection, XSS, CSRF, broken auth. We have frameworks, checklists, and automated tooling.

For AI systems? We’re still writing the playbook. Prompt injection, indirect prompt injection, model inversion, training data extraction, agent goal hijacking — these are real attack classes with real-world implications, and most developers building AI products today have limited formal exposure to any of them.

The Claude Code incident, whatever its precise scope, is valuable as a forcing function. It makes the abstract concrete. It invites the question: if this happened to Anthropic, what’s my exposure?

Final Thought

We’re not just writing code anymore. We’re building systems that reason, plan, and act — often with access to real data, real APIs, and real users.

When a traditional application fails, it crashes. When an AI agent gets exploited, it executes — just not in the direction you intended.

Security for AI systems isn’t a feature you bolt on at the end of the sprint. It’s an architectural decision you make on day one, and revisit every time you add a new tool, a new agent, or a new capability.

The Claude Code leak is a reminder that no one is immune. The question is whether it changes how you build.

What’s your current approach to securing AI agents in production? Drop a comment — I’d genuinely like to know what others are doing.

If you found this useful, follow for more on building real-world AI systems — covering architecture, security, and the hard lessons from shipping.